Document 10768715

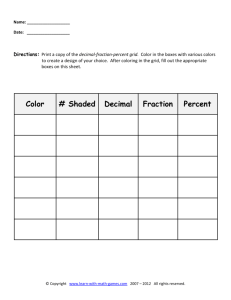
