The Entropy Rounding Method in Approximation Algorithms Thomas Rothvoß SODA 2012


The Entropy Rounding Method in Approximation Algorithms

Thomas Rothvoß

Department of Mathematics, M.I.T.

SODA 2012

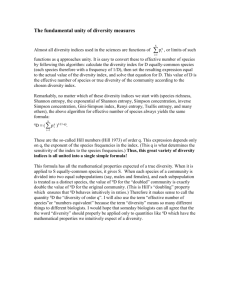

A general LP rounding problem

Problem:

◮ Given: $A \in \mathbb{R}^{n \times m}$, fractional solution $x \in [0,1]^m$
◮ Find: $y \in \{0,1\}^m$ with $Ax \approx Ay$

General strategies:

◮ Randomized rounding: flip $\Pr[y_i = 1] = x_i$ (in)dependently
◮ Use properties of basic solutions: $|\mathrm{supp}(x)| \le \#\mathrm{rows}(A)$

Try another way:

◮ “Entropy rounding method” based on discrepancy theory
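As a point of comparison, the first strategy (independent randomized rounding) can be sketched in a few lines. This is only an illustration under assumed data: the matrix `A`, the vector `x`, and the helper names `randomized_rounding` and `rounding_error` are made up for the example and do not come from the talk.

```python
import random

def randomized_rounding(x, rng):
    """Independently set y_i = 1 with probability x_i, else y_i = 0."""
    return [1 if rng.random() < xi else 0 for xi in x]

def rounding_error(A, x, y):
    """Return ||Ax - Ay||_inf for a matrix A given as a list of rows."""
    return max(abs(sum(a * (xi - yi) for a, xi, yi in zip(row, x, y)))
               for row in A)

# Hypothetical toy instance (not from the slides).
A = [[1, 1, 0, 0],
     [0, 1, 1, 0],
     [1, 0, 1, 1]]
x = [0.5, 0.5, 0.25, 0.75]

rng = random.Random(0)          # fixed seed so the run is repeatable
y = randomized_rounding(x, rng)
print("y =", y, " error =", rounding_error(A, x, y))
```

Each coordinate satisfies E[y_i] = x_i, but the error per row is only controlled in a probabilistic sense, which is the gap the discrepancy-based approach aims at.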

A schematic view

Input: $x \in [0,1]^m$, matrix $A$

Entropy rounding:

◮ Bansal algorithm
◮ Lovász, Spencer & Vesztergombi Theorem
◮ Beck’s entropy method

Output: $y \in \{0,1\}^m$ with $\|Ax - Ay\|_\infty$ small

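Discrepancy theory

◮ Set system $\mathcal{S} = \{S_1, \ldots, S_m\}$, $S_i \subseteq [n]$
◮ Coloring $\chi : [n] \to \{-1, +1\}$
◮ Discrepancy
$$\mathrm{disc}(\mathcal{S}) = \min_{\chi : [n] \to \{\pm 1\}} \; \max_{S \in \mathcal{S}} |\chi(S)|, \qquad \text{where } \chi(S) = \sum_{i \in S} \chi(i).$$

Known results:

◮ $n$ sets, $n$ elements: $\mathrm{disc}(\mathcal{S}) = O(\sqrt{n})$ [Spencer ’85]
◮ Every element in $\le t$ sets: $\mathrm{disc}(\mathcal{S}) < 2t$ [Beck & Fiala ’81]
  Conjecture: $\mathrm{disc}(\mathcal{S}) \le O(\sqrt{t})$

More definitions:

◮ Half coloring: $\chi : [n] \to \{0, -1, +1\}$, $|\mathrm{supp}(\chi)| \ge n/2$
◮ For matrix $A$: $\mathrm{disc}(A) = \min_{\chi \in \{\pm 1\}^n} \|A\chi\|_\infty$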
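The definition of disc(S) can be checked directly on tiny set systems by trying every coloring. The sketch below is exponential in n and meant only to make the min-max concrete; elements are numbered from 0 for convenience, and the example set systems are made up.

```python
from itertools import product

def chi_of_set(chi, S):
    """chi(S) = sum of the colors of the elements of S."""
    return sum(chi[i] for i in S)

def disc(sets, n):
    """Brute-force disc(S): minimise over all colorings the worst |chi(S)|."""
    best = None
    for chi in product((-1, 1), repeat=n):
        worst = max(abs(chi_of_set(chi, S)) for S in sets)
        if best is None or worst < best:
            best = worst
    return best

# All three pairs on 3 elements: two elements must share a color,
# so some pair has |chi(S)| = 2.
triangle = [{0, 1}, {1, 2}, {0, 2}]
print(disc(triangle, 3))

# A path of two pairs can be colored alternately.
path = [{0, 1}, {1, 2}]
print(disc(path, 3))
```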

The LSV-Theorem

Theorem (Lovász, Spencer & Vesztergombi ’86)
Given $A \in \mathbb{R}^{n \times m}$, $x \in [0,1]^m$.
Suppose for any $A' \subseteq A$, $\exists$ coloring $\chi$: $\|A'\chi\|_\infty \le \Delta$.
Then $\exists y \in \{0,1\}^m$ with $\|Ax - Ay\|_\infty \le \Delta$.

◮ Suffices to find good matrix colorings!

The LSV-Theorem

Theorem (Lovász, Spencer & Vesztergombi ’86)
Given $A \in \mathbb{R}^{n \times m}$, $x \in [0,1]^m$.
Suppose for any $A' \subseteq A$, $\exists$ half coloring $\chi$: $\|A'\chi\|_\infty \le \Delta$.
Then $\exists y \in \{0,1\}^m$ with $\|Ax - Ay\|_\infty \le \Delta \cdot \log m$.

◮ Suffices to find good matrix half colorings!
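The usual argument behind the full-coloring version rounds x one binary digit at a time: the columns whose lowest remaining bit is 1 are colored, and each is moved up or down by that bit's value. The sketch below follows this idea under simplifying assumptions: x has exactly K binary digits (stored as integers `X[j]` with x_j = X[j] / 2^K), and the coloring is found by brute force, which is a stand-in and only feasible for a handful of columns. The names `best_coloring` and `lsv_round` and the toy data are hypothetical.

```python
from itertools import product

def best_coloring(A, cols):
    """Brute-force a +-1 coloring of the columns in `cols` minimising ||A' chi||_inf."""
    best, best_val = None, None
    for signs in product((-1, 1), repeat=len(cols)):
        val = max(abs(sum(row[j] * s for j, s in zip(cols, signs))) for row in A)
        if best_val is None or val < best_val:
            best, best_val = signs, val
    return dict(zip(cols, best)), best_val

def lsv_round(A, X, K):
    """Round x_j = X[j] / 2**K to 0/1, clearing one binary digit per pass."""
    X = list(X)
    for t in range(K):                          # least significant bit first
        cols = [j for j, v in enumerate(X) if (v >> t) & 1]
        if not cols:
            continue
        chi, _ = best_coloring(A, cols)
        for j in cols:
            X[j] += chi[j] * (1 << t)           # bit t becomes 0 either way
    return [v >> K for v in X]                  # every X[j] is now 0 or 2**K

# Hypothetical toy instance.
A = [[1, 1, 1, 0],
     [0, 1, 1, 1],
     [1, 0, 1, 1]]
K = 3
X = [5, 3, 6, 2]                                # x = (5/8, 3/8, 6/8, 2/8)
x = [v / 2 ** K for v in X]
y = lsv_round(A, X, K)
err = max(abs(sum(a * (xi - yi) for a, xi, yi in zip(row, x, y))) for row in A)
print("y =", y, " ||Ax - Ay||_inf =", err)
```

Pass t changes Ax by 2^(t-K) times a colored column sum, so the total error is at most Δ(2^-K + ... + 2^-1) < Δ when every column subset admits a coloring of discrepancy at most Δ.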

Entropy

Definition
For random variable $Z$, the entropy is
$$H(Z) = \sum_z \Pr[Z = z] \cdot \log_2 \frac{1}{\Pr[Z = z]}$$

Properties:

◮ One likely event: $\exists z : \Pr[Z = z] \ge (\tfrac{1}{2})^{H(Z)}$
◮ Subadditivity: $H(f(Z, Z')) \le H(Z) + H(Z')$.
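Both properties are easy to check numerically on small distributions. The following sketch computes H from a list of probabilities; the two example distributions and the choice f(Z, Z') = Z + Z' for two independent fair bits are assumptions made for illustration.

```python
import math

def entropy(probs):
    """H = sum_z p_z * log2(1 / p_z), skipping zero-probability outcomes."""
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0)

uniform4 = [0.25] * 4
skewed = [0.7, 0.1, 0.1, 0.1]

for dist in (uniform4, skewed):
    h = entropy(dist)
    # "One likely event": the most probable outcome has mass >= 2^(-H).
    print(round(h, 3), max(dist), 0.5 ** h)

# Subadditivity with Z, Z' independent fair bits and f(Z, Z') = Z + Z':
# the sum takes values 0, 1, 2 with probabilities 1/4, 1/2, 1/4.
h_sum = entropy([0.25, 0.5, 0.25])
h_bit = entropy([0.5, 0.5])
print(h_sum, "<=", 2 * h_bit)
```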

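Theorem [Beck’s entropy method]
$$H_{\chi_i \in \{\pm 1\}}\left( \left\lceil \frac{A\chi}{2\Delta} \right\rfloor \right) \le \frac{m}{5} \;\Rightarrow\; \exists \text{ half-coloring } \chi_0 : \|A\chi_0\|_\infty \le \Delta.$$

◮ $\exists$ cell: $\Pr[A\chi \in \text{cell}] \ge (\tfrac{1}{2})^{m/5}$.
◮ At least $2^m \cdot (\tfrac{1}{2})^{m/5} = 2^{0.8m}$ colorings $\chi$ have $A\chi \in$ cell
◮ Pick $\chi_1, \chi_2$ differing in half of entries
◮ Define $\chi_0(i) := \tfrac{1}{2}(\chi_1(i) - \chi_2(i)) \in \{0, \pm 1\}$.
◮ Then $\|A\chi_0\|_\infty \le \tfrac{1}{2}\|A\chi_1 - A\chi_2\|_\infty \le \Delta$.

[Figure: the map $\chi \mapsto A\chi$ from $\{\pm 1\}^m$ into a grid of cells of side length $2\Delta$, with axes $A_1\chi$ and $A_2\chi$; the colorings $\chi_1$ and $\chi_2$ are mapped to points $A\chi_1$, $A\chi_2$ in the same cell.]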
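The pigeonhole steps above can be replayed by exhaustive search on a tiny matrix. This is only an illustration of the counting argument, not an efficient algorithm: it enumerates all 2^m colorings, and on such a small example nothing guarantees that the resulting support has size at least m/2. The matrix `A`, the value of `delta`, and the helper names are assumptions.

```python
import math
from itertools import product
from collections import defaultdict

def cell_of(A, chi, delta):
    """Round every coordinate of A*chi / (2*delta) to the nearest integer."""
    return tuple(
        math.floor(sum(a * c for a, c in zip(row, chi)) / (2 * delta) + 0.5)
        for row in A)

def half_coloring(A, m, delta):
    """Find two colorings in the most popular cell and return (chi1 - chi2) / 2."""
    cells = defaultdict(list)
    for chi in product((-1, 1), repeat=m):
        cells[cell_of(A, chi, delta)].append(chi)
    crowd = max(cells.values(), key=len)            # cell hit by most colorings
    # Among pairs in that cell, take one differing in as many entries as possible.
    chi1, chi2 = max(((a, b) for a in crowd for b in crowd),
                     key=lambda ab: sum(u != v for u, v in zip(*ab)))
    return [(u - v) // 2 for u, v in zip(chi1, chi2)]

# Hypothetical toy instance.
A = [[1, 1, 1, 1, 1, 1],
     [1, -1, 1, -1, 1, -1],
     [1, 1, -1, -1, 0, 0]]
delta = 2
chi0 = half_coloring(A, 6, delta)
norm = max(abs(sum(a * c for a, c in zip(row, chi0))) for row in A)
support = sum(1 for c in chi0 if c != 0)
print("chi0 =", chi0, " support =", support, " ||A chi0||_inf =", norm)
```

Because both colorings land in the same cell of side 2Δ, each coordinate of Aχ1 − Aχ2 is at most 2Δ in absolute value, which is why the printed norm never exceeds `delta`.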

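A bound on the entropy

◮ Let $\alpha = (\alpha_1, \ldots, \alpha_m) \in \mathbb{R}^m$.
◮ Consider $Z := \left\lceil \dfrac{\sum_j \chi(j) \cdot \alpha_j}{\lambda \cdot \|\alpha\|_2} \right\rfloor$ with $\chi(j) \in \{\pm 1\}$ at random

[Figure: plots of $\Pr[Z = 0]$ and $H(Z)$ against $\lambda$. The $H(Z)$ curve is labeled $O(\log(\tfrac{1}{\lambda}))$ for small $\lambda$ and $e^{-\Omega(\lambda^2)}$ for large $\lambda$; one region of the $\lambda$-axis is marked "randomized rounding".]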
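For small m the entropy of Z can be computed exactly by enumerating all sign vectors, which makes the qualitative behavior visible: H(Z) drops as λ grows and reaches 0 once the scaled sum always rounds to 0. The vector `alpha` and the values of λ below are arbitrary choices for illustration, and `entropy_of_Z` is a hypothetical helper name.

```python
import math
from itertools import product
from collections import Counter

def entropy_of_Z(alpha, lam):
    """Exact H(Z) for Z = round(sum_j chi(j)*alpha_j / (lam * ||alpha||_2))."""
    norm = math.sqrt(sum(a * a for a in alpha))
    counts = Counter()
    for chi in product((-1, 1), repeat=len(alpha)):
        s = sum(c * a for c, a in zip(chi, alpha))
        counts[math.floor(s / (lam * norm) + 0.5)] += 1   # nearest integer
    total = 2 ** len(alpha)
    return sum((c / total) * math.log2(total / c) for c in counts.values())

alpha = [1, 1, 1, 1]
for lam in (0.25, 2.0, 5.0):
    print("lambda =", lam, " H(Z) =", round(entropy_of_Z(alpha, lam), 3))
```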

A general rounding theorem

Theorem
Input:

◮ matrix $A \in [-1, 1]^{n \times m}$, $\Delta_i > 0$ satisfying entropy condition for all submatrices
◮ vector $x \in [0,1]^m$
◮ row weights $w(i)$ ($\sum_i w(i) = 1$)

There is a random variable $y \in \{0,1\}^m$ with

◮ Bounded difference:
  ◮ $|A_i x - A_i y| \le O(\log(m)) \cdot \Delta_i$
  ◮ $|A_i x - A_i y| \le O(\sqrt{1/w(i)})$
◮ Preserved expectation: $E[y_i] = x_i$.

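Bin Packing With Rejection

Input:

◮ Items $i \in \{1, \ldots, n\}$ with size $s_i \in [0,1]$, and rejection penalty $\pi_i \in [0,1]$

Goal: Pack or reject. Minimize # bins + rejection cost.

[Figure: bin 1 and bin 2, each of capacity 1, next to the input items drawn with size $s_i$ and penalty $\pi_i$; item labels 0.9, 0.7, 0.4, 0.6.]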
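The objective can be made concrete with a small cost evaluator. The sketch below borrows the four-item instance from the LP example later in the talk (sizes 0.44, 0.4, 0.3, 0.26 and penalties 0.9, 0.7, 0.4, 0.6); sizes are stored in hundredths to avoid floating-point ties at the bin capacity. The function name and the two candidate solutions are assumptions for illustration.

```python
def solution_cost(sizes, penalties, bins, rejected, capacity=100):
    """Cost = number of bins used + total penalty of the rejected items."""
    packed = [i for b in bins for i in b]
    if sorted(packed + list(rejected)) != list(range(len(sizes))):
        raise ValueError("every item must be packed or rejected exactly once")
    for b in bins:
        if sum(sizes[i] for i in b) > capacity:
            raise ValueError("bin %r exceeds the capacity" % (b,))
    return len(bins) + sum(penalties[i] for i in rejected)

sizes = [44, 40, 30, 26]            # hundredths of a bin
penalties = [0.9, 0.7, 0.4, 0.6]

# Reject the second item and pack the rest into one bin (44 + 30 + 26 = 100).
cost_a = solution_cost(sizes, penalties, bins=[[0, 2, 3]], rejected=[1])
# Pack everything into two bins, rejecting nothing.
cost_b = solution_cost(sizes, penalties, bins=[[0, 1], [2, 3]], rejected=[])
print(cost_a, cost_b)
```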

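The column-based LP

Set Cover formulation:

◮ Bins: Sets $S \subseteq [n]$ with $\sum_{i \in S} s_i \le 1$ of cost $c(S) = 1$
◮ Rejections: Sets $S = \{i\}$ of cost $c(S) = \pi_i$

LP:
$$\min \sum_{S \in \mathcal{S}} c(S) \cdot x_S$$
$$\sum_{S \in \mathcal{S}} \mathbf{1}_S \cdot x_S \ge \mathbf{1}$$
$$x_S \ge 0 \quad \forall S \in \mathcal{S}$$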

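The column-based LP - Example

[Figure: input items with sizes $s_i$ = 0.44, 0.4, 0.3, 0.26 and penalties $\pi_i$ = 0.9, 0.7, 0.4, 0.6.]

$$\min \; (1\ 1\ 1\ 1\ 1\ 1\ 1\ 1\ 1\ 1\ 1\ 1 \mid .9\ .7\ .4\ .6)\, x$$
$$\left(\begin{array}{cccccccccccc|cccc}
1&0&0&0&1&1&1&0&0&0&1&0&1&0&0&0\\
0&1&0&0&1&0&0&1&1&0&0&1&0&1&0&0\\
0&0&1&0&0&1&0&1&0&1&1&1&0&0&1&0\\
0&0&0&1&0&0&1&0&1&1&1&1&0&0&0&1
\end{array}\right) x \ge \begin{pmatrix}1\\1\\1\\1\end{pmatrix}$$
$$x \ge 0$$

[Figure: three of the bin patterns are each marked with the multiplier 1/2×.]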
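The columns of this example can be regenerated by enumerating every item set that fits into one bin, then appending one rejection column per item. The sketch below does that with sizes in hundredths and exact fractions for the costs. It also evaluates one fractional solution that puts value 1/2 on three bin patterns; that particular choice of patterns is an assumption made here for illustration.

```python
from itertools import combinations
from fractions import Fraction

sizes = [44, 40, 30, 26]                       # hundredths of a bin
penalties = [Fraction(9, 10), Fraction(7, 10), Fraction(4, 10), Fraction(6, 10)]
n = len(sizes)

# Bin columns: all non-empty item sets of total size <= 1 (cost 1 each).
bin_patterns = [S for r in range(1, n + 1)
                for S in combinations(range(n), r)
                if sum(sizes[i] for i in S) <= 100]
# Rejection columns: singletons {i} with cost pi_i.
columns = [(S, Fraction(1)) for S in bin_patterns] + \
          [((i,), penalties[i]) for i in range(n)]

def lp_value(x):
    """x maps column index -> value; return (cost, coverage of each item)."""
    cost = sum(columns[k][1] * v for k, v in x.items())
    cover = [sum(v for k, v in x.items() if i in columns[k][0]) for i in range(n)]
    return cost, cover

# Assumed fractional solution: three bin patterns at value 1/2 each.
half = Fraction(1, 2)
x = {bin_patterns.index(S): half for S in [(0, 1), (0, 2, 3), (1, 2, 3)]}
cost, cover = lp_value(x)
print(len(bin_patterns), "bin columns,", len(columns), "columns in total")
print("cost =", cost, " coverage =", cover)
```

With these sizes the enumeration yields 12 bin patterns, in the same order as the 12 unit-cost columns of the matrix, followed by the 4 rejection columns.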

Our results

Theorem
There is a randomized poly-time approximation algorithm for Bin Packing With Rejection with
$$APX \le OPT_f + O(\log^2 OPT_f)$$
(with high probability).

◮ Previously best known: $APX \le OPT_f + \dfrac{OPT_f}{(\log OPT_f)^{1-o(1)}}$ [Epstein & Levin ’10]
◮ As good as classical Bin Packing [Karmarkar & Karp ’82]

The end

Open question

Are there more (convincing) applications?

Thanks for your attention