
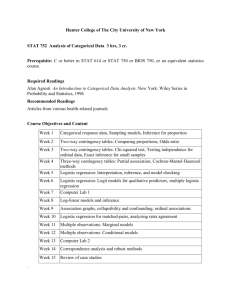


Introduction to Machine Learning

CSE474/574: Logistic Regression

Varun Chandola <chandola@buffalo.edu>


Outline

1. Generative vs. Discriminative Classifiers

2. Logistic Regression

3. Logistic Regression - Training
   Using Gradient Descent for Learning Weights
   Using Newton’s Method
   Regularization with Logistic Regression
   Handling Multiple Classes
   Bayesian Logistic Regression
   Laplace Approximation
   Posterior of w for Logistic Regression
   Approximating the Posterior
   Getting Prediction on Unseen Examples


Generative vs. Discriminative Classifiers

Probabilistic classification task:

p(Y = benign|X = x), p(Y = malicious|X = x)

How do you estimate p(y|x)?

$$p(y \mid x) = \frac{p(y, x)}{p(x)} = \frac{p(x \mid y)\, p(y)}{p(x)}$$

Two-step approach: estimate the generative model, then compute the posterior for y (Naïve Bayes)

Solving a more general problem [2, 1]

Why not directly model p(y|x)? This is the discriminative approach.
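The two-step generative route can be made concrete with a small numeric sketch. The priors and class-conditional likelihoods below are hypothetical values chosen only to illustrate Bayes' rule; they do not come from the lecture.

```python
# Hypothetical numbers, chosen only to illustrate Bayes' rule.
prior = {"benign": 0.9, "malicious": 0.1}         # p(y)
likelihood = {"benign": 0.05, "malicious": 0.6}   # p(x | y) for one observed x


def posterior(prior, likelihood):
    """Return p(y | x) = p(x | y) p(y) / p(x) for every class y."""
    joint = {y: likelihood[y] * prior[y] for y in prior}   # p(y, x)
    evidence = sum(joint.values())                          # p(x)
    return {y: joint[y] / evidence for y in joint}


post = posterior(prior, likelihood)
print(post)
```

A discriminative model skips the joint p(y, x) and the evidence p(x) and fits p(y|x) directly.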


Which is Better?

Number of training examples needed to learn a PAC-learnable classifier ∝ VC-dimension of the hypothesis space

VC-dimension of a probabilistic classifier ∝ number of parameters [2] (or a small polynomial in the number of parameters)

Number of parameters for p(y, x) > number of parameters for p(y|x)

Discriminative classifiers need fewer training examples for PAC learning than generative classifiers
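To give a feel for the parameter-count gap, here is a rough sketch that counts free parameters for binary logistic regression and for two Gaussian generative models. The Gaussian class-conditional models and the bias term are assumptions made for illustration; the slides do not specify a particular generative model.

```python
def n_params_logistic(d):
    """Binary logistic regression: one weight per feature plus a bias (assumed)."""
    return d + 1


def n_params_gaussian_nb(d, n_classes=2):
    """Gaussian naive Bayes: a mean and a variance per feature per class,
    plus (n_classes - 1) free class-prior probabilities."""
    return n_classes * 2 * d + (n_classes - 1)


def n_params_full_gaussian(d, n_classes=2):
    """Generative model with a full covariance matrix per class."""
    per_class = d + d * (d + 1) // 2   # mean vector + symmetric covariance
    return n_classes * per_class + (n_classes - 1)


for d in (10, 100):
    print(d, n_params_logistic(d), n_params_gaussian_nb(d), n_params_full_gaussian(d))
```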


Logistic Regression

y|x is a Bernoulli distribution with parameter $\theta = \mathrm{sigmoid}(w^\top x)$

When a new input $x^*$ arrives, we toss a coin which has $\mathrm{sigmoid}(w^\top x^*)$ as the probability of heads

If outcome is heads, the predicted class is 1 else 0

Learns a linear boundary

Learning Task for Logistic Regression

Given training examples $\langle x_i, y_i \rangle_{i=1}^{D}$, learn w
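The coin-toss view of prediction can be sketched in a few lines. The weight vector and input below are hypothetical; `predict_proba` and `sample_label` are illustrative names, not part of any library.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def predict_proba(w, x):
    """theta = sigmoid(w^T x), the Bernoulli parameter for p(y = 1 | x)."""
    return sigmoid(np.dot(w, x))


def sample_label(w, x, rng):
    """Toss a coin whose probability of heads is theta; heads means class 1."""
    return int(rng.random() < predict_proba(w, x))


w = np.array([0.5, -1.0])        # hypothetical weights
x_star = np.array([2.0, 1.0])    # hypothetical new input, chosen so w^T x* = 0
rng = np.random.default_rng(0)

theta = predict_proba(w, x_star)
label = sample_label(w, x_star, rng)
print(theta, label)
```

The input here lies exactly on the set $w^\top x = 0$, where θ = 0.5. That set is a hyperplane, which is why the boundary is linear.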


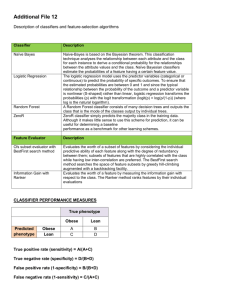

Logistic Regression - Recap

Bayesian Interpretation

Directly model p(y|x) (y ∈ {0, 1})

$p(y \mid x) \sim \mathrm{Bernoulli}(\theta = \mathrm{sigmoid}(w^\top x))$

Geometric Interpretation

Use regression to predict discrete values

Squash output to [0, 1] using sigmoid function

Output less than 0.5 is one class and greater than 0.5 is the other

[Figure: the sigmoid function $\frac{1}{1 + e^{-ax}}$ plotted against x; vertical axis marked at 0.5 and 1]


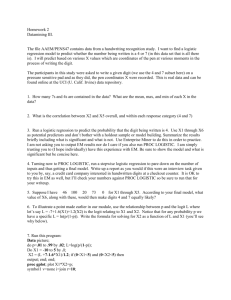

Learning Parameters

MLE Approach

Assume that y ∈ {0, 1}

What is the likelihood for a Bernoulli sample?

If $y_i = 1$, $p(y_i) = \theta_i = \frac{1}{1 + \exp(-w^\top x_i)}$

If $y_i = 0$, $p(y_i) = 1 - \theta_i = \frac{1}{1 + \exp(w^\top x_i)}$

In general, $p(y_i) = \theta_i^{y_i} (1 - \theta_i)^{1 - y_i}$

Log-likelihood

$$LL(w) = \sum_{i=1}^{N} \left[ y_i \log \theta_i + (1 - y_i) \log (1 - \theta_i) \right]$$

No closed form solution for maximizing log-likelihood
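A minimal sketch of the log-likelihood in NumPy follows. It assumes the rows of `X` are the inputs $x_i$ and `y` holds the 0/1 labels. Using `np.logaddexp` to evaluate the two log terms is a numerical-stability choice made here, not something the slides prescribe.

```python
import numpy as np


def log_likelihood(w, X, y):
    """LL(w) = sum_i [ y_i log(theta_i) + (1 - y_i) log(1 - theta_i) ]."""
    z = X @ w
    log_theta = -np.logaddexp(0.0, -z)            # log sigmoid(z)
    log_one_minus_theta = -np.logaddexp(0.0, z)   # log (1 - sigmoid(z))
    return float(np.sum(y * log_theta + (1.0 - y) * log_one_minus_theta))


# Hypothetical toy data: first column is a constant 1 (bias feature).
X = np.array([[1.0, 2.0], [1.0, -1.0], [1.0, 0.5]])
y = np.array([1.0, 0.0, 1.0])

ll_zero = log_likelihood(np.zeros(2), X, y)   # every theta_i is 0.5 at w = 0
print(ll_zero)
```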


Using Gradient Descent for Learning Weights

Compute gradient of LL with respect to w

LL(w) is a concave function of w (equivalently, the negative log-likelihood is convex) with a unique global maximum

$$\frac{d}{dw} LL(w) = \sum_{i=1}^{N} (y_i - \theta_i)\, x_i$$

Update rule:

$$w_{k+1} = w_k + \eta \frac{d}{dw_k} LL(w_k)$$
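The update rule can be sketched as a short gradient-ascent loop. The toy data, the step size `eta`, and the iteration count are assumptions chosen so that the loop converges on this example; they are not values from the lecture.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def log_likelihood(w, X, y):
    z = X @ w
    return float(np.sum(y * z - np.logaddexp(0.0, z)))


def gradient(w, X, y):
    """d/dw LL(w) = sum_i (y_i - theta_i) x_i."""
    return X.T @ (y - sigmoid(X @ w))


def gradient_ascent(X, y, eta=0.1, n_iters=2000):
    w = np.zeros(X.shape[1])
    for _ in range(n_iters):
        w = w + eta * gradient(w, X, y)   # step uphill on LL
    return w


# Hypothetical toy data: a bias column plus one feature. The classes overlap,
# so the maximum-likelihood weights are finite.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 0.0],
              [1.0, 0.5], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0, 0.0, 1.0])

w_hat = gradient_ascent(X, y)
print(w_hat, log_likelihood(w_hat, X, y))
```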


Using Newton’s Method

Setting η is sometimes tricky

Too large – incorrect results

Too small – slow convergence

Another way to speed up convergence:

Newton’s Method

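$$w_{k+1} = w_k - \eta H_k^{-1} \frac{d}{dw_k} LL(w_k)$$

where $H_k$ is the Hessian of LL evaluated at $w_k$. Because LL is concave, $H_k$ is negative definite, so the minus sign makes this an ascent step.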


What is the Hessian?

Hessian or H is the second order derivative of the objective function

Newton’s method belongs to the family of second order optimization algorithms

For logistic regression, the Hessian is:

$$H = -\sum_{i} \theta_i (1 - \theta_i)\, x_i x_i^\top$$
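A sketch of Newton's method using this Hessian is given below. Since H is the Hessian of LL and is negative definite, the step is written as w ← w − η H⁻¹ ∇LL, which moves uphill. The toy data and the choice η = 1 are assumptions for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def newton_logistic(X, y, eta=1.0, n_iters=25):
    w = np.zeros(X.shape[1])
    for _ in range(n_iters):
        theta = sigmoid(X @ w)
        grad = X.T @ (y - theta)                     # gradient of LL
        H = -(X.T * (theta * (1.0 - theta))) @ X     # Hessian of LL (negative definite)
        w = w - eta * np.linalg.solve(H, grad)       # Newton step, no explicit inverse
    return w


# Hypothetical toy data with overlapping classes (bias column plus one feature).
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 0.0],
              [1.0, 0.5], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0, 0.0, 1.0])

w_newton = newton_logistic(X, y)
print(w_newton)
```

Solving the linear system with `np.linalg.solve` avoids forming $H^{-1}$ explicitly, which is usually cheaper and more stable.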


Regularization with Logistic Regression

Overfitting is an issue, especially with a large number of features

Add a Gaussian prior ∼ N(0, τ²)

Easy to incorporate in the gradient descent based approach

$$LL'(w) = LL(w) - \frac{1}{2} \lambda w^\top w$$

$$\frac{d}{dw} LL'(w) = \frac{d}{dw} LL(w) - \lambda w$$

$$H' = H - \lambda I$$

where I is the identity matrix.
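The penalized objective, gradient, and Hessian can be sketched as follows, with a finite-difference check of the gradient. For a zero-mean Gaussian prior with variance τ² per weight, the penalty weight is λ = 1/τ². The function names, toy data, and value of `lam` are assumptions for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def penalized_ll(w, X, y, lam):
    """LL'(w) = LL(w) - (lam / 2) w^T w."""
    z = X @ w
    return float(np.sum(y * z - np.logaddexp(0.0, z)) - 0.5 * lam * (w @ w))


def penalized_grad(w, X, y, lam):
    """d/dw LL'(w) = sum_i (y_i - theta_i) x_i - lam * w."""
    return X.T @ (y - sigmoid(X @ w)) - lam * w


def penalized_hessian(w, X, lam):
    """H' = H - lam * I, with H the Hessian of LL."""
    theta = sigmoid(X @ w)
    H = -(X.T * (theta * (1.0 - theta))) @ X
    return H - lam * np.eye(X.shape[1])


def numeric_grad(f, w, eps=1e-6):
    """Central finite differences, used only to sanity-check the formula."""
    g = np.zeros_like(w)
    for j in range(w.size):
        step = np.zeros_like(w)
        step[j] = eps
        g[j] = (f(w + step) - f(w - step)) / (2.0 * eps)
    return g


# Hypothetical toy data and evaluation point.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 0.0],
              [1.0, 0.5], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0, 0.0, 1.0])
lam = 0.5
w = np.array([0.3, -0.2])

g_analytic = penalized_grad(w, X, y, lam)
g_numeric = numeric_grad(lambda v: penalized_ll(v, X, y, lam), w)
print(g_analytic, g_numeric)
```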


Handling Multiple Classes

One vs. Rest and One vs. Other

$p(y \mid x) \sim \mathrm{Multinoulli}(\theta)$

Multinoulli parameter vector θ is defined as:

$$\theta_j = \frac{\exp(w_j^\top x)}{\sum_{k=1}^{C} \exp(w_k^\top x)}$$

Multiclass logistic regression has C weight vectors to learn
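The Multinoulli parameters can be computed as in the sketch below, where the C weight vectors are stacked as the rows of a matrix `W`. Subtracting the maximum score before exponentiating is a numerical-stability choice that leaves the ratios unchanged. The weights and input are hypothetical.

```python
import numpy as np


def softmax_probs(W, x):
    """theta_j = exp(w_j^T x) / sum_k exp(w_k^T x), one entry per class."""
    scores = W @ x
    scores = scores - np.max(scores)   # shift for stability; ratios are unchanged
    exp_scores = np.exp(scores)
    return exp_scores / np.sum(exp_scores)


# Hypothetical example with C = 3 classes and 2 features.
W = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [-1.0, -1.0]])
x = np.array([2.0, 0.5])

theta = softmax_probs(W, x)
predicted_class = int(np.argmax(theta))
print(theta, predicted_class)
```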


Bayesian Logistic Regression

How to get the posterior for w?

Not easy - Why?

Laplace Approximation

We do not know what the true posterior distribution for w is.

Is there a close-enough (approximate) Gaussian distribution?


Laplace Approximation

Problem Statement

How to approximate a posterior with a Gaussian distribution?

When is this needed?

When direct computation of posterior is not possible.

No conjugate prior


Laplace Approximation using Taylor Series Expansion

Assume that posterior is:

$$p(w \mid D) = \frac{1}{Z} e^{-E(w)}$$

E(w) is the energy function ≡ negative log of the unnormalized posterior

Let $w^*$ be the mode of the posterior distribution of w (the Gaussian approximation built here has its mean at $w^*$)

Taylor series expansion of E(w) around the mode

$$E(w) = E(w^*) + (w - w^*)^\top E'(w^*) + \frac{1}{2} (w - w^*)^\top E''(w^*) (w - w^*) + \dots$$

$$E(w) \approx E(w^*) + (w - w^*)^\top E'(w^*) + \frac{1}{2} (w - w^*)^\top E''(w^*) (w - w^*)$$

$E'(w) = \nabla$ is the first derivative of E(w) (gradient) and $E''(w) = H$ is the second derivative (Hessian), both evaluated at $w^*$


Taylor Series Expansion Continued

Since $w^*$ is the mode, the first derivative or gradient is zero

$$E(w) \approx E(w^*) + \frac{1}{2} (w - w^*)^\top H (w - w^*)$$

Posterior p(w|D) may be written as:

$$p(w \mid D) \approx \frac{1}{Z} e^{-E(w^*)} \exp\left[ -\frac{1}{2} (w - w^*)^\top H (w - w^*) \right] = \mathcal{N}(w^*, H^{-1})$$

$w^*$ is the mode obtained by maximizing the posterior using gradient ascent
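A one-dimensional sketch may help make the recipe concrete: find the mode of E(w), take the second derivative there, and use its inverse as the Gaussian variance. The target below is an unnormalized Gamma-shaped density, $w^{a-1} e^{-bw}$, picked purely as an illustrative assumption. The mode is found with Newton's method rather than gradient ascent, to keep the loop short.

```python
import math

a, b = 5.0, 2.0   # hypothetical shape and rate of the Gamma-shaped target


def energy(w):
    """E(w) = -log of the unnormalized density w^(a-1) * exp(-b w)."""
    return -(a - 1.0) * math.log(w) + b * w


def d_energy(w):
    return -(a - 1.0) / w + b


def d2_energy(w):
    return (a - 1.0) / (w * w)


def laplace_1d(w0, n_iters=50):
    """Return (mode, variance) of the Gaussian approximation N(w*, 1 / E''(w*))."""
    w = w0
    for _ in range(n_iters):
        w = w - d_energy(w) / d2_energy(w)   # Newton step towards E'(w) = 0
    return w, 1.0 / d2_energy(w)


mode, variance = laplace_1d(1.0)
print(mode, variance)
```

For these values the exact Gamma distribution has mean a/b = 2.5 and variance a/b² = 1.25, while the approximation is centred at the mode (a − 1)/b = 2 with variance (a − 1)/b² = 1. This shows that the Laplace approximation matches the density locally around the mode rather than matching its moments.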


Posterior of w for Logistic Regression

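Prior:

$$p(w) = \mathcal{N}(0, \tau^2 I)$$

Likelihood of data

$$p(D \mid w) = \prod_{i=1}^{N} \theta_i^{y_i} (1 - \theta_i)^{1 - y_i}$$

where $\theta_i = \frac{1}{1 + e^{-w^\top x_i}}$

Posterior:

$$p(w \mid D) = \frac{\mathcal{N}(0, \tau^2 I) \prod_{i=1}^{N} \theta_i^{y_i} (1 - \theta_i)^{1 - y_i}}{\int p(D \mid w)\, p(w)\, dw}$$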


Approximating the Posterior - Laplace Approximation

Approximate posterior distribution

$$p(w \mid D) = \mathcal{N}(w_{MAP}, H^{-1})$$

H is the Hessian of the negative log-posterior w.r.t. w
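The approximation can be sketched for logistic regression with a N(0, τ²I) prior. Here the negative log-posterior is E(w) = −LL(w) + wᵀw / (2τ²) up to a constant, so its Hessian is Σ θᵢ(1 − θᵢ) xᵢxᵢᵀ + I/τ². The sketch finds w_MAP with Newton's method instead of gradient ascent. The toy data and `tau2` are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def neg_log_posterior_grad(w, X, y, tau2):
    """Gradient of E(w) = -LL(w) + w^T w / (2 tau^2)."""
    return -X.T @ (y - sigmoid(X @ w)) + w / tau2


def neg_log_posterior_hessian(w, X, tau2):
    """Hessian of E(w): sum_i theta_i (1 - theta_i) x_i x_i^T + I / tau^2."""
    theta = sigmoid(X @ w)
    return (X.T * (theta * (1.0 - theta))) @ X + np.eye(X.shape[1]) / tau2


def laplace_approximation(X, y, tau2=10.0, n_iters=25):
    """Return (w_map, covariance) of the Gaussian N(w_map, H^{-1})."""
    w = np.zeros(X.shape[1])
    for _ in range(n_iters):
        g = neg_log_posterior_grad(w, X, y, tau2)
        H = neg_log_posterior_hessian(w, X, tau2)
        w = w - np.linalg.solve(H, g)   # Newton step on E(w)
    H = neg_log_posterior_hessian(w, X, tau2)
    return w, np.linalg.inv(H)


# Hypothetical toy data (bias column plus one feature).
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 0.0],
              [1.0, 0.5], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0, 0.0, 1.0])

w_map, cov = laplace_approximation(X, y)
print(w_map)
print(cov)
```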


Getting Prediction

$$p(y \mid x) = \int p(y \mid x, w)\, p(w \mid D)\, dw$$

1. Use a point estimate of w (MLE or MAP)

2. Analytical Result

3. Monte Carlo Approximation

Numerical integration

Sample finite “versions” of w using p(w|D)

$$p(w \mid D) = \mathcal{N}(w_{MAP}, H^{-1})$$

Compute p(y|x, w) for each sample and average
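The Monte Carlo option can be sketched as: draw weight vectors from the Gaussian approximation, evaluate the sigmoid for each draw, and average. The values of `w_map`, `cov`, and `x` below are hypothetical stand-ins for a fitted Laplace approximation and a test input.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def predict_monte_carlo(x, w_map, cov, n_samples=5000, seed=0):
    """Estimate p(y = 1 | x) by averaging sigmoid(w^T x) over w ~ N(w_map, cov)."""
    rng = np.random.default_rng(seed)
    W = rng.multivariate_normal(w_map, cov, size=n_samples)   # shape (n_samples, d)
    return float(np.mean(sigmoid(W @ x)))


# Hypothetical posterior approximation and test input.
w_map = np.array([0.0, 1.0])
cov = np.array([[0.5, 0.0],
                [0.0, 0.5]])
x = np.array([1.0, 2.0])

p_plugin = float(sigmoid(w_map @ x))          # option 1: point estimate
p_mc = predict_monte_carlo(x, w_map, cov)     # option 3: Monte Carlo average
print(p_plugin, p_mc)
```

Averaging over the weight uncertainty pulls the prediction toward 0.5 compared with the plug-in estimate, so the Bayesian prediction is less confident when the posterior over w is wide.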


References

[1] A. Y. Ng and M. I. Jordan. On discriminative vs. generative classifiers: A comparison of logistic regression and naive Bayes. In T. G. Dietterich, S. Becker, and Z. Ghahramani, editors, NIPS, pages 841–848. MIT Press, 2001.

[2] V. Vapnik. Statistical learning theory. Wiley, 1998.
