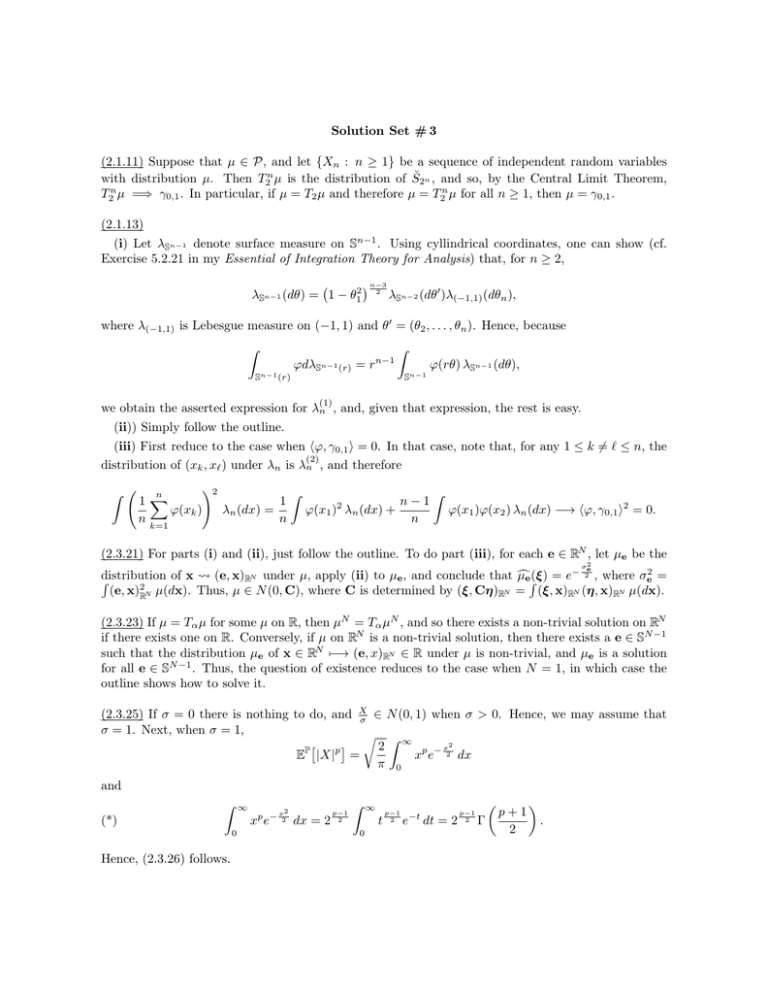

Solution Set # 3


(2.1.11) Suppose that $\mu \in \mathcal{P}$, and let $\{X_n : n \ge 1\}$ be a sequence of independent random variables with distribution $\mu$. Then $T_2^n\mu$ is the distribution of $\breve{S}_{2^n}$, and so, by the Central Limit Theorem, $T_2^n\mu \Longrightarrow \gamma_{0,1}$. In particular, if $\mu = T_2\mu$ and therefore $\mu = T_2^n\mu$ for all $n \ge 1$, then $\mu = \gamma_{0,1}$.
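As a numerical illustration of this argument, the following sketch takes $\mu$ to be the symmetric $\pm 1$ (Rademacher) law and computes the Kolmogorov distance between the law of $S_{2^n}/2^{n/2}$ and $\gamma_{0,1}$ exactly from binomial weights. It assumes that $\mathcal{P}$ consists of mean-zero, variance-one measures and that $T_2\mu$ is the law of $(x+y)/\sqrt{2}$ under $\mu \times \mu$, which is how the solution appears to use them; the function names are hypothetical.

```python
import math

def normal_cdf(x: float) -> float:
    """Distribution function of the standard normal law gamma_{0,1}."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def kolmogorov_distance(n: int) -> float:
    """Sup-distance between the law of S_m / sqrt(m), m = 2^n, and gamma_{0,1},
    where S_m is a sum of m independent symmetric +/-1 variables."""
    m = 2 ** n
    total = 2 ** m  # exact integer normalisation of the binomial weights
    cdf = 0.0
    worst = 0.0
    for k in range(m + 1):
        x = (2 * k - m) / math.sqrt(m)      # atom of the normalised sum
        phi = normal_cdf(x)
        worst = max(worst, abs(cdf - phi))  # just to the left of the atom
        cdf += math.comb(m, k) / total
        worst = max(worst, abs(cdf - phi))  # at the atom itself
    return worst

# Assumed example: mu is the Rademacher law, so T_2^n mu is the law of S_{2^n} / 2^{n/2}.
distances = [kolmogorov_distance(n) for n in range(1, 11)]
for n, d in enumerate(distances, start=1):
    print(f"n = {n:2d}  distance to gamma_(0,1) = {d:.5f}")
```

The distance shrinks as $n$ grows, so this $\mu$ is moved by the iterates of $T_2$ and cannot be a fixed point, in line with the conclusion that $\gamma_{0,1}$ is the only one.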

(2.1.13)

(i) Let $\lambda_{S^{n-1}}$ denote surface measure on $S^{n-1}$. Using cylindrical coordinates, one can show (cf. Exercise 5.2.21 in my Essentials of Integration Theory for Analysis) that, for $n \ge 2$,

$$\lambda_{S^{n-1}}(d\theta) = \bigl(1-\theta_1^2\bigr)^{\frac{n-3}{2}}\,\lambda_{S^{n-2}}(d\theta')\,\lambda_{(-1,1)}(d\theta_1),$$

where $\lambda_{(-1,1)}$ is Lebesgue measure on $(-1,1)$ and $\theta' = (\theta_2,\dots,\theta_n)$. Hence, because

$$\int_{S^{n-1}(r)} \varphi\,d\lambda_{S^{n-1}(r)} = r^{n-1}\int_{S^{n-1}} \varphi(r\theta)\,\lambda_{S^{n-1}}(d\theta),$$

we obtain the asserted expression for $\lambda_n^{(1)}$, and, given that expression, the rest is easy.
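One quick sanity check on the cylindrical-coordinates formula is to integrate the constant function 1 on both sides: the area of $S^{n-1}$ should equal the area of $S^{n-2}$ times $\int_{-1}^{1}(1-t^2)^{\frac{n-3}{2}}\,dt$. The sketch below tests this for a few $n \ge 3$, using the standard closed form $2\pi^{n/2}/\Gamma(n/2)$ for the area of $S^{n-1}$ and a plain midpoint rule; the function names and step count are illustrative choices, not part of the exercise.

```python
import math

def sphere_area(n: int) -> float:
    """Total surface measure of the unit sphere S^{n-1} in R^n."""
    return 2.0 * math.pi ** (n / 2) / math.gamma(n / 2)

def slice_integral(n: int, steps: int = 100_000) -> float:
    """Midpoint-rule value of the integral of (1 - t^2)^{(n-3)/2} over (-1, 1)."""
    h = 2.0 / steps
    total = 0.0
    for i in range(steps):
        t = -1.0 + (i + 0.5) * h  # midpoints stay strictly inside (-1, 1)
        total += (1.0 - t * t) ** ((n - 3) / 2)
    return total * h

# Compare the area of S^{n-1} with (area of S^{n-2}) * (slice integral).
checks = []
for n in range(3, 8):
    lhs = sphere_area(n)
    rhs = sphere_area(n - 1) * slice_integral(n)
    checks.append((n, lhs, rhs))
    print(f"n = {n}: area = {lhs:.6f}, product = {rhs:.6f}")
```

The case $n = 2$ is left out only because the integrand is then unbounded at $\pm 1$, which a midpoint rule handles poorly.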

(ii) Simply follow the outline.

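(iii) First reduce to the case when $\langle\varphi,\gamma_{0,1}\rangle = 0$. In that case, note that, for any $1 \le k \ne \ell \le n$, the distribution of $(x_k, x_\ell)$ under $\lambda_n$ is $\lambda_n^{(2)}$, and therefore

$$\int\Bigl(\frac{1}{n}\sum_{k=1}^{n}\varphi(x_k)\Bigr)^{2}\lambda_n(dx) = \frac{1}{n}\int\varphi(x_1)^2\,\lambda_n(dx) + \frac{n-1}{n}\int\varphi(x_1)\varphi(x_2)\,\lambda_n(dx) \longrightarrow \langle\varphi,\gamma_{0,1}\rangle^2 = 0.$$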

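(2.3.21) For parts (i) and (ii), just follow the outline. To do part (iii), for each $e \in \mathbb{R}^N$, let $\mu_e$ be the distribution of $x \longmapsto (e,x)_{\mathbb{R}^N}$ under $\mu$, apply (ii) to $\mu_e$, and conclude that $\widehat{\mu_e}(\xi) = e^{-\frac{\sigma_e^2\xi^2}{2}}$, where $\sigma_e^2 = \int (e,x)_{\mathbb{R}^N}^2\,\mu(dx)$. Thus, $\mu \in N(0,C)$, where $C$ is determined by $(\xi, C\eta)_{\mathbb{R}^N} = \int (\xi,x)_{\mathbb{R}^N}(\eta,x)_{\mathbb{R}^N}\,\mu(dx)$.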

(2.3.23) If $\mu = T_\alpha\mu$ for some $\mu$ on $\mathbb{R}$, then $\mu^N = T_\alpha\mu^N$, and so there exists a non-trivial solution on $\mathbb{R}^N$ if there exists one on $\mathbb{R}$. Conversely, if $\mu$ on $\mathbb{R}^N$ is a non-trivial solution, then there exists an $e \in S^{N-1}$ such that the distribution $\mu_e$ of $x \in \mathbb{R}^N \longmapsto (e,x)_{\mathbb{R}^N} \in \mathbb{R}$ under $\mu$ is non-trivial, and $\mu_e$ is a solution for all $e \in S^{N-1}$. Thus, the question of existence reduces to the case when $N = 1$, in which case the outline shows how to solve it.

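(2.3.25) If $\sigma = 0$ there is nothing to do, and $\frac{X}{\sigma} \in N(0,1)$ when $\sigma > 0$. Hence, we may assume that $\sigma = 1$. Next, when $\sigma = 1$,

$$\mathbb{E}^{\mathbb{P}}\bigl[|X|^p\bigr] = \sqrt{\frac{2}{\pi}}\int_0^\infty x^p e^{-\frac{x^2}{2}}\,dx$$

and, after the change of variables $t = \frac{x^2}{2}$,

$$\int_0^\infty x^p e^{-\frac{x^2}{2}}\,dx = 2^{\frac{p-1}{2}}\int_0^\infty t^{\frac{p-1}{2}}e^{-t}\,dt = 2^{\frac{p-1}{2}}\,\Gamma\Bigl(\frac{p+1}{2}\Bigr). \tag{*}$$

Hence, (2.3.26) follows.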
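The two displays for $\sigma = 1$ combine into the closed form $\sqrt{2/\pi}\,2^{\frac{p-1}{2}}\,\Gamma\bigl(\frac{p+1}{2}\bigr)$ for the $p$-th absolute moment of a standard normal variable. A minimal sketch that compares this closed form with a direct midpoint-rule evaluation of the integral follows; the truncation point, the step count and the function names are assumptions made for illustration.

```python
import math

def abs_moment_closed_form(p: float) -> float:
    """sqrt(2/pi) * 2^{(p-1)/2} * Gamma((p+1)/2) for a standard normal X."""
    return math.sqrt(2.0 / math.pi) * 2.0 ** ((p - 1) / 2) * math.gamma((p + 1) / 2)

def abs_moment_quadrature(p: float, upper: float = 12.0, steps: int = 100_000) -> float:
    """Midpoint-rule value of sqrt(2/pi) * integral_0^upper x^p e^{-x^2/2} dx.
    The tail beyond `upper` is negligible at the accuracy used here."""
    h = upper / steps
    total = 0.0
    for i in range(steps):
        x = (i + 0.5) * h
        total += x ** p * math.exp(-0.5 * x * x)
    return math.sqrt(2.0 / math.pi) * total * h

for p in (1.0, 2.0, 3.0, 4.0):
    print(f"p = {p}: closed form = {abs_moment_closed_form(p):.8f}, "
          f"quadrature = {abs_moment_quadrature(p):.8f}")
```

For $p = 2$ and $p = 4$ the closed form reduces to the familiar values 1 and 3.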

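(i) First note that $\frac{X}{\beta}$ is 1-sub-Gaussian, and so it is enough to handle the case when $\beta = 1$. In this case, the first estimate in Lemma 2.3.18 says that $\mathbb{P}(|X| \ge R) \le 2e^{-\frac{R^2}{2}}$, and so, by (*),

$$\mathbb{E}^{\mathbb{P}}\bigl[|X|^p\bigr] = p\int_0^\infty t^{p-1}\,\mathbb{P}\bigl(|X| > t\bigr)\,dt \le 2p\int_0^\infty t^{p-1}e^{-\frac{t^2}{2}}\,dt = p\,2^{\frac{p}{2}}\,\Gamma\Bigl(\frac{p}{2}\Bigr).$$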
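The last equality in (i) is (*) with $p$ replaced by $p-1$, and it can be checked numerically. The sketch below evaluates $2p\int_0^\infty t^{p-1}e^{-t^2/2}\,dt$ by a midpoint rule, compares it with $p\,2^{p/2}\,\Gamma(p/2)$, and, as one assumed example of a 1-sub-Gaussian variable, confirms that the absolute moments of a standard normal sit below the bound. The function names, truncation point and step count are illustrative.

```python
import math

def moment_bound(p: float) -> float:
    """p * 2^{p/2} * Gamma(p/2): the bound from part (i) when beta = 1."""
    return p * 2.0 ** (p / 2) * math.gamma(p / 2)

def tail_side(p: float, upper: float = 12.0, steps: int = 100_000) -> float:
    """Midpoint-rule value of 2p * integral_0^upper t^{p-1} e^{-t^2/2} dt, for p >= 1."""
    h = upper / steps
    total = 0.0
    for i in range(steps):
        t = (i + 0.5) * h
        total += t ** (p - 1) * math.exp(-0.5 * t * t)
    return 2.0 * p * total * h

def gaussian_abs_moment(p: float) -> float:
    """E|X|^p for a standard normal X (assumed here to be 1-sub-Gaussian)."""
    return math.sqrt(2.0 / math.pi) * 2.0 ** ((p - 1) / 2) * math.gamma((p + 1) / 2)

rows = [(p, tail_side(p), moment_bound(p), gaussian_abs_moment(p))
        for p in (1.0, 2.0, 3.0, 4.0)]
for p, integral, bound, moment in rows:
    print(f"p = {p}: integral = {integral:.6f}, bound = {bound:.6f}, "
          f"Gaussian moment = {moment:.6f}")
```

The quadrature is restricted to $p \ge 1$ because $t^{p-1}$ is unbounded near 0 for smaller $p$, where a midpoint rule converges slowly.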

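(ii) Again, by considering $\frac{X}{\beta}$, one can reduce to the case when $\beta = 1$ after replacing $\sigma$ by $\frac{\sigma}{\beta}$. Thus, assume that $\beta = 1$. By Hölder's inequality, $\mathbb{E}^{\mathbb{P}}\bigl[|X|^p\bigr] \ge \sigma^p$ for $p \ge 2$. Now assume that $p < 2$. If $q \in (1,\infty)$ and $q' = \frac{q}{q-1}$ is its Hölder conjugate, then $2 = \frac{p}{q} + \frac{1}{q'}\,\frac{2q-p}{q-1}$. Thus, by Hölder's inequality,

$$\sigma^2 = \mathbb{E}^{\mathbb{P}}\Bigl[|X|^{\frac{p}{q}}\,|X|^{\frac{1}{q'}\frac{2q-p}{q-1}}\Bigr] \le \mathbb{E}^{\mathbb{P}}\bigl[|X|^p\bigr]^{\frac{1}{q}}\,\mathbb{E}^{\mathbb{P}}\Bigl[|X|^{\frac{2q-p}{q-1}}\Bigr]^{\frac{1}{q'}}.$$

Now choose $q = \frac{4-p}{2}$. Then $\frac{2q-p}{q-1} = 4$, and the result follows by simple arithmetic.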
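The exponent bookkeeping in (ii) is easy to get wrong, so here is a small exact check with rational arithmetic. It assumes only what the text states, namely $q = \frac{4-p}{2}$ with $q'$ the Hölder conjugate of $q$; the helper name is hypothetical.

```python
from fractions import Fraction

def holder_exponents(p: Fraction):
    """Return (q, q', r, total) for q = (4 - p)/2, where q' is the conjugate of q,
    r = (2q - p)/(q - 1) and total = p/q + r/q'. Requires p < 2 so that q > 1."""
    q = (4 - p) / 2
    q_conj = q / (q - 1)
    r = (2 * q - p) / (q - 1)
    total = p / q + r / q_conj
    return q, q_conj, r, total

results = {p: holder_exponents(p)
           for p in (Fraction(1, 2), Fraction(1), Fraction(3, 2))}
for p, (q, q_conj, r, total) in results.items():
    print(f"p = {p}: q = {q}, q' = {q_conj}, (2q-p)/(q-1) = {r}, exponent sum = {total}")
```

With this choice of $q$, rearranging the displayed inequality gives $\mathbb{E}^{\mathbb{P}}\bigl[|X|^p\bigr] \ge \sigma^{4-p}\big/\mathbb{E}^{\mathbb{P}}\bigl[X^4\bigr]^{\frac{2-p}{2}}$, which is where a fourth-moment bound such as the one from (i) would enter.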

(iii) Because $S$ is $B$-sub-Gaussian and has variance $\Sigma^2$, there is nothing to do.

(iv) Follow the outline.

(2.2.28) and (2.3.29) Follow the outline.
