Georgian Mathematical Journal 1(1994), No. 2, 197-212

LIMIT DISTRIBUTION OF THE INTEGRATED SQUARED ERROR OF TRIGONOMETRIC SERIES REGRESSION ESTIMATOR

E. NADARAYA

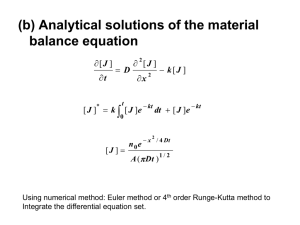

Abstract. Limit distribution is studied for the integrated squared

error of the projection regression estimator (2) constructed on the

basis of independent observations (1). By means of the obtained

limit theorems, a test is given for verifying the hypothesis on the

regression, and the power of this test is calculated in the case of

Pitman alternatives.

Let observations $Y_1, Y_2, \dots, Y_n$ be represented as

$$Y_i = \mu(x_i) + \varepsilon_i, \quad i = 1, \dots, n, \tag{1}$$

where $\mu(x)$, $x \in [-\pi, \pi]$, is the unknown regression function to be estimated from the observations $Y_i$; $x_i$, $i = 1, \dots, n$, are known numbers with $-\pi = x_0 < x_1 < \dots < x_n \le \pi$; and $\varepsilon_i$, $i = 1, \dots, n$, are independent identically distributed random variables with $E\varepsilon_1 = 0$, $E\varepsilon_1^2 = \sigma^2$, and $E\varepsilon_1^4 < \infty$.

The problem of nonparametric estimation of the regression function µ(x) for the model (1) has a short history and has been treated in only a few papers. In particular, a kernel estimator of the Rosenblatt–Parzen type for µ(x) was proposed for the first time in [1].

Assume that µ(x) is representable as a series converging in $L_2(-\pi, \pi)$ with respect to the orthonormal trigonometric system

$$\left\{ (2\pi)^{-1/2},\ \pi^{-1/2}\cos ix,\ \pi^{-1/2}\sin ix \right\}_{i=1}^{\infty}.$$

Consider the estimator of the function µ(x) constructed by the projection

method of N.N. Chentsov [2]

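$$\mu_{nN}(x) = \frac{a_{0n}}{2} + \sum_{i=1}^{N} \left( a_{in}\cos ix + b_{in}\sin ix \right), \tag{2}$$

where $N = N(n) \to \infty$ for $n \to \infty$ and

$$a_{in} = \frac{1}{\pi}\sum_{j=1}^{n} Y_j \Delta_j \cos ix_j, \qquad b_{in} = \frac{1}{\pi}\sum_{j=1}^{n} Y_j \Delta_j \sin ix_j,$$

$$\Delta_j = x_j - x_{j-1}, \quad j = 1, \dots, n, \qquad i = 0, \dots, N.$$

1991 Mathematics Subject Classification. 62G07.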

The estimator (2) can be rewritten in a more compact way as

$$\mu_{nN}(x) = \sum_{j=1}^{n} Y_j \Delta_j K_N(x - x_j),$$

where $K_N(u) = \frac{1}{2\pi}\sum_{|r| \le N} e^{iru}$ is the Dirichlet kernel.
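The equivalence of the coefficient form (2) and the kernel form can be checked numerically. The following is a minimal sketch, assuming NumPy; the function names, the equispaced design, and the test signal are illustrative choices and are not taken from the paper.

```python
import numpy as np

def dirichlet_kernel(u, N):
    """K_N(u) = (1/(2*pi)) * sum_{|r|<=N} exp(i*r*u), written as a real cosine sum."""
    u = np.asarray(u, dtype=float)
    r = np.arange(1, N + 1)
    return (1.0 + 2.0 * np.cos(np.multiply.outer(u, r)).sum(axis=-1)) / (2.0 * np.pi)

def estimator_coefficients(x_eval, x, y, N):
    """Estimator (2) built from the coefficients a_in, b_in."""
    x_eval = np.asarray(x_eval, dtype=float)
    delta = np.diff(np.concatenate(([-np.pi], x)))        # Delta_j = x_j - x_{j-1}, x_0 = -pi
    result = np.full(x_eval.shape, (y * delta).sum() / (2.0 * np.pi))  # a_0n / 2
    for i in range(1, N + 1):
        a_i = (y * delta * np.cos(i * x)).sum() / np.pi
        b_i = (y * delta * np.sin(i * x)).sum() / np.pi
        result = result + a_i * np.cos(i * x_eval) + b_i * np.sin(i * x_eval)
    return result

def estimator_kernel(x_eval, x, y, N):
    """Estimator (2) in the compact form sum_j Y_j * Delta_j * K_N(x - x_j)."""
    delta = np.diff(np.concatenate(([-np.pi], x)))
    return dirichlet_kernel(np.subtract.outer(x_eval, x), N) @ (y * delta)

# Hypothetical example data: equispaced design and a noisy trigonometric signal.
rng = np.random.default_rng(0)
n, N = 200, 6
x = -np.pi + 2.0 * np.pi * np.arange(1, n + 1) / n
y = np.sin(x) + 0.5 * np.cos(2 * x) + 0.1 * rng.standard_normal(n)
grid = np.linspace(-np.pi, np.pi, 50)
m_coef = estimator_coefficients(grid, x, y, N)
m_kern = estimator_kernel(grid, x, y, N)
max_diff = np.max(np.abs(m_coef - m_kern))
```

The two evaluations agree up to rounding because $\cos r(x - x_j) = \cos rx \cos rx_j + \sin rx \sin rx_j$.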

In [3], p.347, N.V. Smirnov considered estimators of the type (2) for

a specially chosen class of functions µ(x) in the case of equidistant points

xj ∈ [−π, π] and of independent and normally distributed observation errors

εi . In [4] an estimator of the type (2) is obtained, which is asymptotically

equivalent to projection estimators which are optimal in the sense of some

accuracy criterion. The asymptotics of the mean value of the integrated

squared error of the estimator (2) is considered in [5].

It is of interest to investigate the limit distribution of the integrated

squared error

$$\int_{-\pi}^{\pi} \left[ \mu_{nN}(x) - \mu(x) \right]^2 dx,$$

which is the goal pursued in this paper. The method used to prove the

theorems below is based on the functional limit theorem for a sequence of

semimartingales [6].

Denote

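$$U_{nN} = \frac{n}{2\pi(2N+1)} \int_{-\pi}^{\pi} \left[ \mu_{nN}(x) - E\mu_{nN}(x) \right]^2 dx, \qquad Q_{ir} = \Delta_i \Delta_r K_N(x_i - x_r),$$

$$\sigma_{nN}^2 = \frac{n^2\sigma^4}{\pi^2(2N+1)^2} \sum_{r=2}^{n}\sum_{j=1}^{r-1} Q_{jr}^2, \qquad \eta_{ik} = \frac{n}{\pi(2N+1)\sigma_{nN}}\,\varepsilon_i\varepsilon_k Q_{ik},$$

$$\xi_1 = 0, \qquad \xi_k = \sum_{i=1}^{k-1} \eta_{ik}, \quad k = 2, \dots, n, \qquad \xi_k = 0, \quad k > n,$$

and assume that $F_k$ is the σ-algebra generated by the random variables $\varepsilon_1, \varepsilon_2, \dots, \varepsilon_k$, $F_0 = (\emptyset, \Omega)$.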

Lemma 1 ([7], p.179). The stochastic sequence (ξk , Fk )k≥1 is a martingale-difference.


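Lemma 2. Let $p(x)$ be a known positive continuously differentiable distribution density on $[-\pi, \pi]$, and let the points $x_i$ be chosen from the relation $\int_{-\pi}^{x_i} p(u)\,du = \frac{i}{n}$, $i = 1, \dots, n$. If $\frac{N \ln N}{n} \to 0$ for $n \to \infty$, then

$$EU_{nN} = \theta_1 + O\left(\frac{N\ln N}{n}\right), \qquad \theta_1 = \frac{\sigma^2}{(2\pi)^2}\int_{-\pi}^{\pi} p^{-1}(u)\,du, \tag{3}$$

$$(2N+1)\sigma_{nN}^2 \to \theta_2 = \frac{\sigma^4}{4\pi^3}\int_{-\pi}^{\pi} p^{-2}(u)\,du. \tag{4}$$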
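For intuition, the two quantities in Lemma 2 can be evaluated exactly for a given design. The sketch below assumes NumPy and an equispaced design (uniform density $p = 1/(2\pi)$, so that $\theta_1 = \sigma^2$ and $\theta_2 = 2\sigma^4$); the function names are illustrative. It uses the reproducing identity $\int_{-\pi}^{\pi} K_N(x-a)K_N(x-b)\,dx = K_N(a-b)$, which gives $EU_{nN} = \frac{n\sigma^2}{2\pi(2N+1)}\sum_j Q_{jj}$. For the equispaced design with $n > 2N$, a short side calculation (not in the paper) suggests $(2N+1)\sigma_{nN}^2 = 2\sigma^4\,(1 - (2N+1)/n)$, which the code checks.

```python
import numpy as np

def dirichlet_kernel(u, N):
    """K_N(u) = (1/(2*pi)) * (1 + 2 * sum_{r=1}^{N} cos(r*u))."""
    u = np.asarray(u, dtype=float)
    r = np.arange(1, N + 1)
    return (1.0 + 2.0 * np.cos(np.multiply.outer(u, r)).sum(axis=-1)) / (2.0 * np.pi)

def lemma2_quantities(x, N, sigma2):
    """Return (E U_nN, (2N+1) * sigma_nN^2) for design points x in (-pi, pi]."""
    n = len(x)
    delta = np.diff(np.concatenate(([-np.pi], x)))
    Q = np.outer(delta, delta) * dirichlet_kernel(np.subtract.outer(x, x), N)
    # E U_nN = n * sigma^2 / (2*pi*(2N+1)) * sum_j Q_jj
    mean_U = n * sigma2 * np.trace(Q) / (2.0 * np.pi * (2 * N + 1))
    # sigma_nN^2 = n^2 * sigma^4 / (pi^2 * (2N+1)^2) * sum_{j<r} Q_jr^2
    off_diag = np.sum(np.triu(Q, k=1) ** 2)
    sigma_nN2 = n**2 * sigma2**2 * off_diag / (np.pi**2 * (2 * N + 1) ** 2)
    return mean_U, (2 * N + 1) * sigma_nN2

n, N, sigma2 = 300, 5, 1.5
x = -np.pi + 2.0 * np.pi * np.arange(1, n + 1) / n   # equispaced design
mean_U, scaled_var = lemma2_quantities(x, N, sigma2)
theta1, theta2 = sigma2, 2.0 * sigma2**2             # values for p = 1/(2*pi)
```

The same function accepts any increasing design in $(-\pi, \pi]$; only the closed-form comparison values are specific to the uniform density.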

Proof. From the definition of xi we easily obtain

$$\Delta_i = \frac{1}{n\,p(x_i)}\left(1 + O\left(\frac{1}{n}\right)\right),$$

where $O\left(\frac{1}{n}\right)$ is uniform with respect to $i = 1, \dots, n$.
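The construction of the design points and the spacing relation above can be illustrated as follows. This is a minimal sketch assuming NumPy; the density $p(x) = (1 + 0.5\cos x)/(2\pi)$ and the bisection solver are hypothetical choices made only for the example.

```python
import numpy as np

def design_points(cdf, n, iters=60):
    """Solve F(x_i) = i/n on [-pi, pi] by bisection (F continuous and increasing)."""
    targets = np.arange(1, n + 1) / n
    lo = np.full(n, -np.pi)
    hi = np.full(n, np.pi)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        below = cdf(mid) < targets
        lo = np.where(below, mid, lo)
        hi = np.where(below, hi, mid)
    return 0.5 * (lo + hi)

def p(x):
    """Example density on [-pi, pi] (an assumption for this sketch)."""
    return (1.0 + 0.5 * np.cos(x)) / (2.0 * np.pi)

def F(x):
    """Distribution function of p."""
    return (x + np.pi + 0.5 * np.sin(x)) / (2.0 * np.pi)

n = 1000
x = design_points(F, n)
delta = np.diff(np.concatenate(([-np.pi], x)))        # Delta_i = x_i - x_{i-1}
rel_err = np.max(np.abs(n * delta * p(x) - 1.0))      # should be of order 1/n
```

Here `rel_err` measures the largest deviation of $n\,\Delta_i\,p(x_i)$ from 1 over all $i$.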

Hence it follows that

$$Q_{ir} = \frac{1}{n^2 p(x_i)p(x_r)}\,K_N(x_i - x_r)\left(1 + O\left(\frac{1}{n}\right)\right). \tag{5}$$

Taking into account the relation

$$\max_{-\pi \le u \le \pi} |K_N(u)| = O(N) \tag{6}$$

and (5), we find

$$\sigma_{nN}^2 = \frac{\sigma^4}{2\pi^2(2N+1)^2 n^2}\sum_{i=1}^{n}\sum_{j=1}^{n}\frac{K_N^2(x_i - x_j)}{[p(x_i)p(x_j)]^2} + O\left(\frac{1}{n}\right). \tag{7}$$

Let $F(x)$ be the distribution function with density $p(x)$ and $F_n(x)$ be the empirical distribution function of the “sample” $x_1, x_2, \dots, x_n$, i.e., $F_n(x) = n^{-1}\sum_{k=1}^{n} I_{(-\infty,x)}(x_k)$, where $I_A(\cdot)$ is the indicator of the set $A$. Then the right side of (7) can be written as the integral

$$\sigma_{nN}^2 = \frac{\sigma^4}{2\pi^2(2N+1)^2}\int_{-\pi}^{\pi}\int_{-\pi}^{\pi} K_N^2(t-s)\,\frac{dF_n(t)\,dF_n(s)}{[p(t)p(s)]^2} + O\left(\frac{1}{n}\right).$$

Further we have

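$$\left| \int_{-\pi}^{\pi}\int_{-\pi}^{\pi} K_N^2(t-s)\,\frac{dF_n(t)\,dF_n(s)}{[p(t)p(s)]^2} - \int_{-\pi}^{\pi}\int_{-\pi}^{\pi} K_N^2(t-s)\,\frac{dF(t)\,dF(s)}{[p(t)p(s)]^2} \right| \le I_1 + I_2,$$

$$I_1 = \left| \int_{-\pi}^{\pi}\int_{-\pi}^{\pi} K_N^2(t-s)\,\frac{dF_n(s)}{[p(t)p(s)]^2}\,\big(dF_n(t) - dF(t)\big) \right|,$$

$$I_2 = \left| \int_{-\pi}^{\pi}\int_{-\pi}^{\pi} K_N^2(t-s)\,\frac{dF(t)}{[p(t)p(s)]^2}\,\big(dF_n(s) - dF(s)\big) \right|.$$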

By integration by parts in the internal integral in I1 we readily obtain

$$I_1 \le 2\int_{-\pi}^{\pi}\frac{dF_n(s)}{p^2(s)}\int_{-\pi}^{\pi}\big|F_n(t) - F(t)\big|\,\big|K_N'(t-s)p(t) - K_N(t-s)p'(t)\big|\,\frac{|K_N(t-s)|}{p^3(t)}\,dt. \tag{8}$$

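Since $\sup_{-\pi \le x \le \pi} |F_n(x) - F(x)| = O\left(\frac{1}{n}\right)$ and the relations [8]¹

$$\int_{-\pi}^{\pi} K_N^2(u)\,du = \frac{2N+1}{2\pi}, \qquad \max_{-\pi \le u \le \pi} |K_N'(u)| = O(N^2), \qquad \int_{-\pi}^{\pi} |K_N(u)|\,du = O(\ln N) \tag{9}$$

are fulfilled, from (8) we have the estimate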

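$$I_1 = O\left(\frac{N^2\ln N}{n}\right).$$

In the same manner we show that

$$I_2 = O\left(\frac{N^2\ln N}{n}\right).$$

Therefore

$$(2N+1)\sigma_{nN}^2 = \frac{\sigma^4}{4\pi^3}\int_{-\pi}^{\pi}\int_{-\pi}^{\pi}\Phi_N(s-t)\,\frac{dt\,ds}{p(s)p(t)} + O\left(\frac{N\ln N}{n}\right), \tag{10}$$

where $\Phi_N(u) = \frac{2\pi}{2N+1}K_N^2(u)$ is the Fejér kernel.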

We extend the function $p^{-1}$ periodically outside $[-\pi, \pi]$ and note that $K_N(u)$ and $\Phi_N(u)$ are periodic functions with period 2π. The extended function will be denoted by $g(x)$. Then

$$\int_{-\pi}^{\pi}\int_{-\pi}^{\pi}\Phi_N(s-t)\,\frac{dt\,ds}{p(s)p(t)} = \int_{-\pi}^{\pi} p^{-2}(x)\,dx + \chi_n,$$

where

$$|\chi_n| \le \int_{-\pi}^{\pi} |\bar\sigma_N(x) - g(x)|\,dx, \qquad \bar\sigma_N(x) = \int_{-\pi}^{\pi}\Phi_N(u)\,g(x-u)\,du.$$

¹ See p. 115 in the Russian version of [8]: “Mir”, Moscow, 1965.

Hence, on account of the theorem on convergence of the Fejér integral

σ̄N (x) to g(x) in the norm of the space L1 (−π, π) (see [9], p.481), we have

χn → 0 for n → ∞.
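The size of the Fejér remainder can be examined numerically. The sketch below assumes NumPy and the example density $p(x) = (1 + 0.5\cos x)/(2\pi)$, which is a hypothetical choice; it evaluates the difference between the double integral $\iint \Phi_N(s-t)\,g(s)g(t)\,ds\,dt$ and $\int g^2$ with a periodic rectangle rule, where $g = p^{-1}$ and $\Phi_N = \frac{2\pi}{2N+1}K_N^2$.

```python
import numpy as np

def fejer_type_kernel(u, N):
    """Phi_N(u) = 2*pi/(2N+1) * K_N(u)^2, with K_N the Dirichlet kernel."""
    u = np.asarray(u, dtype=float)
    r = np.arange(1, N + 1)
    K = (1.0 + 2.0 * np.cos(np.multiply.outer(u, r)).sum(axis=-1)) / (2.0 * np.pi)
    return 2.0 * np.pi / (2 * N + 1) * K**2

def fejer_remainder(N, g, M=512):
    """Double integral of Phi_N(s-t) g(s) g(t) minus the integral of g^2 (periodic rectangle rule)."""
    k = np.arange(M)
    h = 2.0 * np.pi / M
    gt = g(-np.pi + h * k)
    phi = fejer_type_kernel(h * k, N)             # Phi_N at the grid offsets (2*pi-periodic)
    Phi = phi[(k[:, None] - k[None, :]) % M]      # matrix of Phi_N(t_a - t_b)
    return h * h * (gt @ Phi @ gt) - h * np.sum(gt**2)

def g(t):
    """g = 1/p for the example density p(x) = (1 + 0.5*cos x)/(2*pi)."""
    return 2.0 * np.pi / (1.0 + 0.5 * np.cos(t))

h = 2.0 * np.pi / 512
norm_g2 = h * np.sum(g(-np.pi + h * np.arange(512)) ** 2)   # integral of g^2
chi_10 = fejer_remainder(10, g)
chi_40 = fejer_remainder(40, g)
```

For this smooth $g$ the remainder decays roughly like $1/(2N+1)$, in line with the convergence of the Fejér integral.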

Therefore

$$(2N+1)\sigma_{nN}^2 \to \frac{\sigma^4}{4\pi^3}\int_{-\pi}^{\pi} p^{-2}(x)\,dx.$$

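Now we shall prove (3). We have

$$D\mu_{nN}(x) = \sigma^2\sum_{j=1}^{n}\frac{1}{n^2p^2(x_j)}\,K_N^2(x - x_j)\left(1 + O\left(\frac{1}{n}\right)\right).$$

Applying the same reasoning as in deriving (10), we find

$$D\mu_{nN}(x) = \frac{\sigma^2}{n}\int_{-\pi}^{\pi} K_N^2(x-s)\,\frac{ds}{p(s)} + O\left(\frac{N^2\ln N}{n^2}\right). \tag{11}$$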

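Therefore

$$EU_{nN} = \frac{\sigma^2}{(2\pi)^2}\int_{-\pi}^{\pi}\int_{-\pi}^{\pi}\Phi_N(t-s)\,\frac{ds\,dt}{p(s)} + O\left(\frac{N\ln N}{n}\right) = \frac{\sigma^2}{(2\pi)^2}\int_{-\pi}^{\pi} p^{-1}(s)\,ds + O\left(\frac{N\ln N}{n}\right).$$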

Denote by the symbol $\xrightarrow{d}$ convergence in distribution, and let ξ be a random variable having the normal distribution with zero mean and variance 1.

Theorem 1. Let $x_i$, $i = 1, \dots, n$, be the same as in Lemma 2 and $\frac{N^2\ln N}{n} \to 0$ for $n \to \infty$. Then, as $n$ increases, $\sqrt{2N+1}\,(U_{nN} - \theta_1)\,\theta_2^{-1/2} \xrightarrow{d} \xi$.
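The statement of Theorem 1 can be explored by simulation. The following is a minimal Monte Carlo sketch, assuming NumPy, Gaussian errors, and an equispaced design (so $\theta_1 = \sigma^2$, $\theta_2 = 2\sigma^4$); the sample sizes and seed are arbitrary. It uses the quadratic-form representation $U_{nN} = \frac{n}{2\pi(2N+1)}\sum_{i,r}\varepsilon_i\varepsilon_r Q_{ir}$, which follows from $\int K_N(x-a)K_N(x-b)\,dx = K_N(a-b)$.

```python
import numpy as np

def dirichlet_kernel(u, N):
    """K_N(u) = (1/(2*pi)) * (1 + 2 * sum_{r=1}^{N} cos(r*u))."""
    u = np.asarray(u, dtype=float)
    r = np.arange(1, N + 1)
    return (1.0 + 2.0 * np.cos(np.multiply.outer(u, r)).sum(axis=-1)) / (2.0 * np.pi)

rng = np.random.default_rng(12345)
n, N, sigma, reps = 400, 5, 1.0, 2000
x = -np.pi + 2.0 * np.pi * np.arange(1, n + 1) / n     # equispaced design, p = 1/(2*pi)
delta = np.diff(np.concatenate(([-np.pi], x)))
Q = np.outer(delta, delta) * dirichlet_kernel(np.subtract.outer(x, x), N)

# Each row of eps is one simulated error vector (eps_1, ..., eps_n).
eps = sigma * rng.standard_normal((reps, n))
U = n / (2.0 * np.pi * (2 * N + 1)) * np.sum((eps @ Q) * eps, axis=1)

theta1, theta2 = sigma**2, 2.0 * sigma**4
stat = np.sqrt(2 * N + 1) * (U - theta1) / np.sqrt(theta2)
sample_mean, sample_var = stat.mean(), stat.var()
```

For moderate $N$ the simulated statistic is centred near 0 with variance near 1 but remains visibly skewed; the normal shape is only reached as $N$ grows.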

Proof. We have

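$$\frac{U_{nN} - EU_{nN}}{\sigma_{nN}} = H_n^{(1)} + H_n^{(2)},$$

where

$$H_n^{(1)} = \sum_{j=1}^{n}\xi_j, \qquad H_n^{(2)} = \frac{n}{2\pi(2N+1)\sigma_{nN}}\sum_{i=1}^{n}\left(\varepsilon_i^2 - E\varepsilon_i^2\right)Q_{ii}.$$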
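The decomposition is an algebraic identity for every realization of the errors, so it can be verified directly. In the sketch below (NumPy assumed; the random design and seed are illustrative only), the left side is obtained by numerically integrating $[\mu_{nN}(x) - E\mu_{nN}(x)]^2 = \big(\sum_j \varepsilon_j\Delta_jK_N(x-x_j)\big)^2$ on a periodic grid, and the right side from the definitions of $\eta_{ik}$, $\xi_k$ and $H_n^{(2)}$.

```python
import numpy as np

def dirichlet_kernel(u, N):
    """K_N(u) = (1/(2*pi)) * (1 + 2 * sum_{r=1}^{N} cos(r*u))."""
    u = np.asarray(u, dtype=float)
    r = np.arange(1, N + 1)
    return (1.0 + 2.0 * np.cos(np.multiply.outer(u, r)).sum(axis=-1)) / (2.0 * np.pi)

rng = np.random.default_rng(7)
n, N, sigma = 60, 4, 1.0
x = np.sort(rng.uniform(-np.pi, np.pi, n))     # arbitrary design, used only for this check
delta = np.diff(np.concatenate(([-np.pi], x)))
eps = sigma * rng.standard_normal(n)
Q = np.outer(delta, delta) * dirichlet_kernel(np.subtract.outer(x, x), N)

c0 = n / (2.0 * np.pi * (2 * N + 1))
sigma_nN = np.sqrt(n**2 * sigma**4 * np.sum(np.triu(Q, 1) ** 2)
                   / (np.pi**2 * (2 * N + 1) ** 2))

# Left side: U_nN by a periodic rectangle rule (exact for trigonometric polynomials of degree < M).
M = 256
grid = -np.pi + 2.0 * np.pi * np.arange(M) / M
centered = dirichlet_kernel(np.subtract.outer(grid, x), N) @ (eps * delta)
U = c0 * (2.0 * np.pi / M) * np.sum(centered**2)
EU = c0 * sigma**2 * np.trace(Q)
lhs = (U - EU) / sigma_nN

# Right side: H1 = sum_k xi_k = sum_{i<k} eta_ik, H2 = centred diagonal part.
eta = n / (np.pi * (2 * N + 1) * sigma_nN) * np.outer(eps, eps) * Q
H1 = np.sum(np.triu(eta, 1))
H2 = c0 / sigma_nN * np.sum((eps**2 - sigma**2) * np.diag(Q))
rhs = H1 + H2
```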

$H_n^{(2)}$ converges to zero in probability. Indeed,

$$DH_n^{(2)} \le \frac{n^2E\varepsilon_1^4}{(2\pi)^2(2N+1)^2\sigma_{nN}^2}\sum_{i=1}^{n} Q_{ii}^2 = \frac{E\varepsilon_1^4}{(2\pi)^2(2N+1)^2\sigma_{nN}^2\,n^2}\sum_{i=1}^{n}\frac{1}{(p(x_i))^4}\,K_N^2(0)\left(1 + O\left(\frac{1}{n}\right)\right) \le C\,\frac{1}{n\sigma_{nN}^2} = O\left(\frac{N}{n}\right),$$

whence $H_n^{(2)} \xrightarrow{P} 0$. Here and in what follows $C$ is a positive constant varying from one formula to another, and the letter $P$ above the arrow denotes convergence in probability.

We will now prove that $H_n^{(1)} \xrightarrow{d} \xi$. To this end we will verify the conditions of Corollaries 2 and 6 of Theorem 2 from [6], which guarantee asymptotic normality of a square-integrable martingale-difference; by Lemma 1, our sequence $\{\xi_k, F_k\}_{k \ge 1}$ is one.

A direct calculation shows that $\sum_{k=1}^{n} E\xi_k^2 = 1$. Asymptotic normality will take place if for $n \to \infty$

$$\sum_{k=1}^{n} E\left[\xi_k^2 \cdot I(|\xi_k| \ge \varepsilon) \mid F_{k-1}\right] \to 0 \tag{12}$$

and

$$\sum_{k=1}^{n}\xi_k^2 \xrightarrow{P} 1. \tag{13}$$

It is shown in [6] that the fulfillment of (13) and the condition $\sup_{1 \le k \le n}|\xi_k| \xrightarrow{P} 0$ implies the validity of (12) as well.

Since for $\varepsilon > 0$

$$P\left\{\sup_{1 \le k \le n}|\xi_k| \ge \varepsilon\right\} \le \varepsilon^{-4}\sum_{k=1}^{n} E\xi_k^4,$$

to prove $H_n^{(1)} \xrightarrow{d} \xi$ it remains, by the relation (15) given below, to verify only (13).

We will establish $\sum_{k=1}^{n}\xi_k^2 \xrightarrow{P} 1$. For this it suffices to make sure that $E\left(\sum_{k=1}^{n}\xi_k^2 - 1\right)^2 \to 0$ for $n \to \infty$, i.e., due to $\sum_{i=1}^{n} E\xi_i^2 = 1$,

$$E\left(\sum_{k=1}^{n}\xi_k^2\right)^2 = \sum_{k=1}^{n} E\xi_k^4 + 2\sum_{1 \le k_1 < k_2 \le n} E\xi_{k_1}^2\xi_{k_2}^2 \to 1. \tag{14}$$

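In the first place we find that $\sum_{k=1}^{n} E\xi_k^4 \to 0$ for $n \to \infty$. By virtue of the definitions of $\xi_k$ and $\eta_{ij}$ we write

$$\sum_{k=1}^{n} E\xi_k^4 = L_n^{(1)} + L_n^{(2)},$$

where

$$L_n^{(1)} = \frac{n^4}{\pi^4(2N+1)^4\sigma_{nN}^4}\,E\varepsilon_1^4\left(E\varepsilon_1^4 - 3\sigma^4\right)\sum_{k=2}^{n}\sum_{j=1}^{k-1} Q_{jk}^4,$$

$$L_n^{(2)} = \frac{3n^4\sigma^4E\varepsilon_1^4}{\pi^4(2N+1)^4\sigma_{nN}^4}\sum_{k=2}^{n}\left(\sum_{j=1}^{k-1} Q_{jk}^2\right)^2.$$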

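From (5) and (6) we obtain

$$|L_n^{(1)}| = C\,\frac{1}{n^4N^4\sigma_{nN}^4}\sum_{k=2}^{n}\sum_{j=1}^{k-1}\frac{K_N^4(x_j - x_k)}{[p(x_j)p(x_k)]^4}\left[1 + O\left(\frac{1}{n}\right)\right] \le Cn^{-2}\sigma_{nN}^{-4} = O\left(\left(\frac{N}{n}\right)^2\right)$$

and also

$$|L_n^{(2)}| = C\,\frac{1}{n^2N^4\sigma_{nN}^4}\sum_{k=2}^{n}\left[\frac{1}{n}\sum_{j=1}^{k-1}\frac{K_N^2(x_j - x_k)}{[p(x_j)p(x_k)]^2}\left(1 + O\left(\frac{1}{n}\right)\right)\right]^2 \le C\,\frac{1}{n^2N^4\sigma_{nN}^4}\sum_{k=1}^{n}\left[\int_{-\pi}^{\pi}\frac{K_N^2(x_k - u)}{p^2(u)}\,dF_n(u)\right]^2 \le$$

$$\le C\,\frac{1}{n^2N^4\sigma_{nN}^4}\sum_{k=1}^{n}\left[\int_{-\pi}^{\pi} K_N^2(x_k - u)\,p^{-1}(u)\,du\right]^2 + C\,\frac{1}{n^2N^4\sigma_{nN}^4}\sum_{k=1}^{n}\left[\int_{-\pi}^{\pi} K_N^2(x_k - u)\,p^{-2}(u)\,d\big(F_n(u) - F(u)\big)\right]^2.$$

Hence, taking into account the relation (9) and the formula of integration by parts, we have $|L_n^{(2)}| = O\left(\frac{1}{n}\right)$. Therefore

$$\sum_{k=1}^{n} E\xi_k^4 \to 0 \quad \text{for } n \to \infty. \tag{15}$$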

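Let us now establish that $2\sum_{1 \le k_1 < k_2 \le n} E\xi_{k_1}^2\xi_{k_2}^2 \to 1$ for $n \to \infty$. The definition of $\xi_i$ implies

$$\xi_{k_1}^2\xi_{k_2}^2 = \left(\sum_{i=1}^{k_1-1}\eta_{ik_1}^2 + \sum_{s \ne t = 1}^{k_1-1}\eta_{sk_1}\eta_{tk_1}\right)\left(\sum_{i=1}^{k_2-1}\eta_{ik_2}^2 + \sum_{r \ne s = 1}^{k_2-1}\eta_{rk_2}\eta_{sk_2}\right) =$$

$$= \sum_{i=1}^{k_1-1}\eta_{ik_1}^2\sum_{i=1}^{k_2-1}\eta_{ik_2}^2 + \sum_{i=1}^{k_1-1}\eta_{ik_1}^2\sum_{r \ne s = 1}^{k_2-1}\eta_{rk_2}\eta_{sk_2} + \sum_{i=1}^{k_2-1}\eta_{ik_2}^2\sum_{s \ne t = 1}^{k_1-1}\eta_{sk_1}\eta_{tk_1} + \sum_{s \ne t = 1}^{k_1-1}\eta_{sk_1}\eta_{tk_1}\sum_{k \ne r = 1}^{k_2-1}\eta_{kk_2}\eta_{rk_2} =$$

$$= B_{k_1k_2}^{(1)} + B_{k_1k_2}^{(2)} + B_{k_1k_2}^{(3)} + B_{k_1k_2}^{(4)}.$$

Therefore

$$2\sum_{1 \le k_1 < k_2 \le n} E\xi_{k_1}^2\xi_{k_2}^2 = \sum_{i=1}^{4} A_n^{(i)},$$

where

$$A_n^{(i)} = 2\sum_{1 \le k_1 < k_2 \le n} EB_{k_1k_2}^{(i)}, \quad i = 1, \dots, 4.$$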

In the first place we consider $A_n^{(3)}$. By the definition of $\eta_{ij}$ we obtain $E\eta_{ik_2}^2\eta_{sk_1}\eta_{tk_1} = 0$, $s \ne t$, $k_1 < k_2$. Thus

$$A_n^{(3)} = 0. \tag{16}$$

Let us derive an estimate of $A_n^{(2)}$. Divide the sum $EB_{k_1k_2}^{(2)}$ into two parts:

$$EB_{k_1k_2}^{(2)} = \sum_{i=1}^{k_1-1}\sum_{r \ne s = 1}^{k_1} E\eta_{ik_1}^2\eta_{rk_2}\eta_{sk_2} + \sum_{i=1}^{k_1-1}\sum_{r \ne s = k_1+1}^{k_2-1} E\eta_{ik_1}^2\eta_{rk_2}\eta_{sk_2}.$$

The second term is equal to zero, since $i$ cannot coincide with $r$ or with $s$ and $r \ne s$; in this case $E\eta_{ik_1}^2\eta_{rk_2}\eta_{sk_2} = 0$. In the first term $E\eta_{ik_1}^2\eta_{rk_2}\eta_{sk_2} = 0$ as well, each time except for the case $s = k_1$ or $r = k_1$. Thus

$$EB_{k_1k_2}^{(2)} = 2\sum_{i=1}^{k_1-1} E\left(\eta_{ik_1}^2\eta_{ik_2}\eta_{k_1k_2}\right).$$

Hence, using the definition of $\eta_{ij}$ and the inequality $|Q_{ij}| \le C\frac{N}{n^2}$ obtained from (5) and (6), we find

$$\left|EB_{k_1k_2}^{(2)}\right| \le C\,\frac{1}{(2N+1)^2\sigma_{nN}^4}\sum_{i=1}^{k_1-1} Q_{ik_1}^2. \tag{17}$$

Next, taking into account statement (4) of Lemma 2 and the definition of $\sigma_{nN}^2$, from (17) we have

$$|A_n^{(2)}| \le C\,\frac{n}{N^2\sigma_{nN}^4}\sum_{k_1=2}^{n}\sum_{i=1}^{k_1-1} Q_{ik_1}^2 \le C\,\frac{1}{n\sigma_{nN}^2} = O\left(\frac{N}{n}\right). \tag{18}$$

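Consider now $A_n^{(4)}$. By the definition of $\eta_{ij}$ we obtain

$$A_n^{(4)} = \frac{8n^4\sigma^8}{\pi^4(2N+1)^4\sigma_{nN}^4}\sum_{s<t<k_1<k_2} Q_{sk_1}Q_{sk_2}Q_{tk_1}Q_{tk_2} \le$$

$$\le C\,\frac{n^4}{N^4\sigma_{nN}^4}\left[\left|\sum_{s,t,k_1,k_2} Q_{sk_1}Q_{sk_2}Q_{tk_1}Q_{tk_2}\right| + \left|\sum_{k_1,s,t} Q_{k_1s}^2Q_{k_1t}^2\right| + \left|\sum_{k_1,s,t} Q_{k_1t}Q_{st}Q_{k_1s}Q_{ss}\right|\right] = C\,\frac{n^4}{N^4\sigma_{nN}^4}\big(|E_1| + |E_2| + |E_3|\big). \tag{19}$$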

According to (5) and (6) we write

$$E_1 = n^{-7}\sum_{s,t,k_1} K_N(x_s - x_{k_1})K_N(x_t - x_{k_1})\int_{-\pi}^{\pi} K_N(x_s - u)K_N(x_t - u)\,dF_n(u) + O\left(\frac{N^4}{n^5}\right).$$

Hence, integrating by parts and taking into account (9), we obtain

$$E_1 = n^{-7}\int_{-\pi}^{\pi}\sum_{s,t,k_1} K_N(x_s - x_{k_1})K_N(x_t - x_{k_1})K_N(x_s - u)K_N(x_t - u)\,p(u)\,du + O\left(\frac{N^4\ln N}{n^5}\right). \tag{20}$$

Applying the same operations three times, we represent (20) in the form

$$E_1 = n^{-4}\int_{-\pi}^{\pi}\int_{-\pi}^{\pi}\int_{-\pi}^{\pi}\int_{-\pi}^{\pi} K_N(z-u)K_N(z-t)K_N(y-u)K_N(y-t)\,p(y)p(u)p(z)p(t)\,du\,dt\,dz\,dy + O\left(\frac{N^4\ln N}{n^5}\right) =$$

$$= O\left(\frac{N\ln^3 N}{n^4}\right) + O\left(\frac{N^4\ln N}{n^5}\right).$$

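Thus

$$\frac{n^4}{N^4\sigma_{nN}^4}|E_1| = O\left(\frac{\ln^3 N}{N}\right) + O\left(\frac{N^2\ln N}{n}\right). \tag{21}$$

Further, it is not difficult to show

$$\frac{n^4}{N^4\sigma_{nN}^4}|E_2| = O\left(\frac{N^2}{n}\right), \qquad \frac{n^4}{N^4\sigma_{nN}^4}|E_3| = O\left(\frac{N^2}{n}\right). \tag{22}$$

Therefore (19), (21), and (22) imply

$$A_n^{(4)} = O\left(\frac{N^2\ln N}{n}\right) + O\left(\frac{\ln^3 N}{N}\right). \tag{23}$$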

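Finally, we will show that $A_n^{(1)} \to 1$ for $n \to \infty$. For this represent $A_n^{(1)}$ in the form $A_n^{(1)} = Q_n^{(1)} + Q_n^{(2)}$, where

$$Q_n^{(1)} = 2\sum_{k_1<k_2}\sum_{i=1}^{k_1-1} E\eta_{ik_1}^2\sum_{j=1}^{k_2-1} E\eta_{jk_2}^2, \qquad Q_n^{(2)} = 2\sum_{k_1<k_2}\left[EB_{k_1k_2}^{(1)} - \sum_{i=1}^{k_1-1} E\eta_{ik_1}^2\sum_{j=1}^{k_2-1} E\eta_{jk_2}^2\right].$$

From the definition of $\sigma_{nN}^2$ it follows that

$$Q_n^{(1)} = 1 - \sum_{k=2}^{n}\left(\sum_{i=1}^{k-1} E\eta_{ik}^2\right)^2,$$

where

$$\sum_{k=2}^{n}\left(\sum_{i=1}^{k-1} E\eta_{ik}^2\right)^2 \le C\,\frac{n^4}{N^4\sigma_{nN}^4}\sum_{k=2}^{n}\left(\sum_{i=1}^{k-1} Q_{ik}^2\right)^2 \le C\,\frac{1}{n\sigma_{nN}^4} = O\left(\frac{N^2}{n}\right).$$

Therefore

$$Q_n^{(1)} = 1 + O\left(N^2/n\right).$$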

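Let us now show that $Q_n^{(2)} \to 0$. $Q_n^{(2)}$ can be written as

$$Q_n^{(2)} = 2\sum_{k_1<k_2}\sum_{i=1}^{k_1-1}\left[\operatorname{cov}\left(\eta_{ik_1}^2, \eta_{ik_2}^2\right) + \operatorname{cov}\left(\eta_{ik_1}^2, \eta_{k_1k_2}^2\right)\right]. \tag{24}$$

But

$$E\eta_{ik_1}^2\eta_{ik_2}^2 \le C\,\frac{n^4}{N^4\sigma_{nN}^4}\,Q_{ik_1}^2 Q_{ik_2}^2 \le C\,\frac{1}{n^4N^4\sigma_{nN}^4}\max_{-\pi \le u \le \pi}|K_N(u)|^4 = O\left(\frac{1}{n^4\sigma_{nN}^4}\right).$$

Similarly, $E\eta_{ij}^2 = O\left(n^{-2}\sigma_{nN}^{-2}\right)$. Therefore

$$\operatorname{cov}\left(\eta_{ik_1}^2, \eta_{ik_2}^2\right) = O\left(\frac{1}{n^4\sigma_{nN}^4}\right). \tag{25}$$

Further, since $\sum_{1 \le k_1 < k_2 \le n}(k_1 - 1) = O(n^3)$, (25) implies

$$Q_n^{(2)} = O\left(\frac{N^2}{n}\right). \tag{26}$$

Thus, according to (24) and (26),

$$A_n^{(1)} = 1 + O\left(N^2/n\right). \tag{27}$$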

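Combining the relations (16), (18), (23) and (27), we finally obtain

$$E\left(\sum_{k=1}^{n}\xi_k^2 - 1\right)^2 \to 0 \quad \text{for } n \to \infty.$$

Therefore

$$\frac{U_{nN} - EU_{nN}}{\sigma_{nN}} \xrightarrow{d} \xi.$$

Further, due to Lemma 2, $EU_{nN} = \theta_1 + O\left(\frac{N\ln N}{n}\right)$ and $(2N+1)\sigma_{nN}^2 \to \theta_2$, and hence we obtain

$$(2N+1)^{1/2}(U_{nN} - \theta_1)\,\theta_2^{-1/2} \xrightarrow{d} \xi.$$

Denote

$$T_{nN} = \frac{n}{2\pi(2N+1)}\int_{-\pi}^{\pi}\left[\mu_{nN}(x) - \mu(x)\right]^2 dx.$$

Theorem 2. Let $x_i$, $i = 1, \dots, n$, be the same as in Lemma 2 and let the function µ(x) with period 2π have bounded derivatives up to the second order. Moreover, if $N^2\ln N/n \to 0$ and $n\ln^2 N/N^{9/2} \to 0$ for $n \to \infty$, then

$$\sqrt{2N+1}\,(T_{nN} - \theta_1)\,\theta_2^{-1/2} \xrightarrow{d} \xi.$$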

Before we proceed to proving the theorem, we have to show

$$\int_{-\pi}^{\pi} |K_N'(u)|\,du = O(N\ln N). \tag{28}$$

Denote $\tilde D_\nu(u) = \sum_{k=1}^{\nu}\sin ku$. Then by virtue of the Abel transformation we have

$$K_N'(u) = -\frac{1}{\pi}\sum_{k=1}^{N} k\sin ku = \frac{1}{\pi}\left[\sum_{\nu=1}^{N-1}\tilde D_\nu(u) - N\tilde D_N(u)\right].$$

It is well known [8] that $\eta_\nu = (\ln\nu)^{-1}\int_{-\pi}^{\pi}|\tilde D_\nu(u)|\,du \to 1$ for $\nu \to \infty$. Denote $b_N = \sum_{\nu=1}^{N-1}\ln\nu$. Then by the Toeplitz lemma

$$R_N = \frac{1}{b_N}\sum_{\nu=1}^{N-1}\ln\nu \cdot \eta_\nu \to 1.$$

Therefore

$$\int_{-\pi}^{\pi}|K_N'(u)|\,du \le \sum_{\nu=1}^{N-1}\int_{-\pi}^{\pi}|\tilde D_\nu(u)|\,du + N\int_{-\pi}^{\pi}|\tilde D_N(u)|\,du = b_N \cdot R_N + N\int_{-\pi}^{\pi}|\tilde D_N(u)|\,du = O(N\ln N).$$
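The Abel transformation and the growth rate in (28) can be checked numerically. The sketch below assumes NumPy; the function names and the midpoint-rule resolution are illustrative. It uses $K_N'(u) = -\frac{1}{\pi}\sum_{k=1}^{N} k\sin ku$, which follows from differentiating $K_N(u) = \frac{1}{2\pi}\big(1 + 2\sum_{k=1}^{N}\cos ku\big)$.

```python
import numpy as np

def conj_dirichlet(u, nu):
    """Conjugate Dirichlet kernel: sum_{k=1}^{nu} sin(k*u)."""
    k = np.arange(1, nu + 1)
    return np.sin(np.multiply.outer(u, k)).sum(axis=-1)

def dK(u, N):
    """K_N'(u) = -(1/pi) * sum_{k=1}^{N} k * sin(k*u)."""
    k = np.arange(1, N + 1)
    return -(np.sin(np.multiply.outer(u, k)) * k).sum(axis=-1) / np.pi

def dK_abel(u, N):
    """The same derivative after summation by parts (Abel transformation)."""
    total = -N * conj_dirichlet(u, N)
    for nu in range(1, N):
        total = total + conj_dirichlet(u, nu)
    return total / np.pi

def l1_norm_dK(N, M=20000):
    """Midpoint-rule approximation of the integral of |K_N'| over [-pi, pi]."""
    u = -np.pi + 2.0 * np.pi * (np.arange(M) + 0.5) / M
    return 2.0 * np.pi / M * np.abs(dK(u, N)).sum()

u = np.linspace(-np.pi, np.pi, 101)
abel_gap = np.max(np.abs(dK(u, 12) - dK_abel(u, 12)))
ratio_16 = l1_norm_dK(16) / (16 * np.log(16))
ratio_64 = l1_norm_dK(64) / (64 * np.log(64))
```

The ratios of the $L_1$ norm to $N\ln N$ stay bounded as $N$ grows, which is what (28) asserts.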

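Let us return to the proof of the theorem. We have

$$T_{nN} = U_{nN} + A_{1n} + A_{2n},$$

$$A_{1n} = \frac{n}{\pi(2N+1)}\int_{-\pi}^{\pi}\left[\mu_{nN}(x) - E\mu_{nN}(x)\right]\left[E\mu_{nN}(x) - \mu(x)\right]dx,$$

$$A_{2n} = \frac{n}{2\pi(2N+1)}\int_{-\pi}^{\pi}\left[E\mu_{nN}(x) - \mu(x)\right]^2 dx.$$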

It is not difficult to find

$$\sqrt{2N+1}\,E|A_{1n}| \le \frac{n\sigma}{\pi\sqrt{2N+1}}\left\{\sum_{j=1}^{n}\Delta_j^2\left[\int_{-\pi}^{\pi} K_N(y - x_j)\big(E\mu_{nN}(y) - \mu(y)\big)\,dy\right]^2\right\}^{1/2}.$$

But

$$E\mu_{nN}(y) = \int_{-\pi}^{\pi}\mu(x)\,\frac{1}{p(x)}\,K_N(y-x)\,dF_n(x)\left(1 + O\left(\frac{1}{n}\right)\right)$$

and

$$\int_{-\pi}^{\pi}\mu(x)\,p^{-1}(x)\,K_N(y-x)\,dF_n(x) = \int_{-\pi}^{\pi}\mu(x)\,K_N(y-x)\,dx + O\left(\frac{1}{n}\int_{-\pi}^{\pi}|K_N'(u)|\,du\right).$$

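It is well known ([10], p.22) that

$$\int_{-\pi}^{\pi}\mu(x)\,K_N(y-x)\,dx = \mu(y) + O\left(\frac{\ln N}{N^2}\right)$$

uniformly in $y \in [-\pi, \pi]$. By virtue of (28) this gives us

$$E\mu_{nN}(x) = \mu(x) + O\left(\frac{\ln N}{N^2}\right) + O\left(\frac{N\ln N}{n}\right). \tag{29}$$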

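Therefore

$$\sqrt{2N+1}\,E|A_{1n}| \le C\left[\left(\frac{n\ln^2 N}{N^{9/2}}\right)^{1/2}\frac{\ln N}{N^{1/4}} + \left(\frac{N^2\ln N}{n}\right)^{1/2}\frac{\ln^{3/2} N}{\sqrt N}\right] \to 0. \tag{30}$$

Further, from (29) we have

$$\sqrt{2N+1}\,A_{2n} \le C\left[\frac{n\ln^2 N}{N^{9/2}} + \frac{N^2\ln^2 N}{n\sqrt N}\right] \to 0. \tag{31}$$

Finally, the statement of Theorem 2 directly follows from Theorem 1, (30), and (31).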

Using Theorems 1 and 2, it is easy to solve the problem of testing a hypothesis on µ(x). Given $\sigma^2$, it is required to verify the hypothesis $H_0 : \mu(x) = \mu_0(x)$. The critical region is defined approximately by the inequality $U_{nN} \ge d_n(\alpha)$ or $T_{nN} \ge d_n(\alpha)$, where

$$d_n(\alpha) = \sigma^2\left[L_1 + (2N+1)^{-1/2}L_2\lambda_\alpha\right],$$

$$L_1 = (2\pi)^{-2}\int_{-\pi}^{\pi} p^{-1}(x)\,dx, \qquad L_2 = \left(\frac{1}{4\pi^3}\int_{-\pi}^{\pi} p^{-2}(x)\,dx\right)^{1/2},$$

and $\lambda_\alpha$ is the quantile of level α of the standard normal distribution.
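The critical value $d_n(\alpha)$ can be computed as in the following sketch, which assumes NumPy and the standard-library `statistics.NormalDist`. Two assumptions are made here and are not stated in the text: the integrals are approximated by a midpoint rule, and $\lambda_\alpha$ is taken as the upper α-point, i.e. $1 - \Phi(\lambda_\alpha) = \alpha$, which is the reading under which the one-sided region $U_{nN} \ge d_n(\alpha)$ has asymptotic level α.

```python
import numpy as np
from statistics import NormalDist

def critical_value(p, N, sigma2, alpha, M=4096):
    """Return (d_n(alpha), L1, L2) with d_n = sigma^2 * [L1 + (2N+1)^(-1/2) * L2 * lambda_alpha]."""
    h = 2.0 * np.pi / M
    u = -np.pi + h * (np.arange(M) + 0.5)            # midpoint rule on [-pi, pi]
    pu = p(u)
    L1 = h * np.sum(1.0 / pu) / (2.0 * np.pi) ** 2
    L2 = np.sqrt(h * np.sum(1.0 / pu**2) / (4.0 * np.pi**3))
    lam = NormalDist().inv_cdf(1.0 - alpha)          # assumption: upper alpha-point
    return sigma2 * (L1 + L2 * lam / np.sqrt(2 * N + 1)), L1, L2

def uniform_density(u):
    """Uniform design density p = 1/(2*pi), used as an example."""
    return np.full_like(u, 1.0 / (2.0 * np.pi))

N, sigma2, alpha = 10, 1.0, 0.05
d_crit, L1, L2 = critical_value(uniform_density, N, sigma2, alpha)
```

For the uniform density the constants reduce to $L_1 = 1$ and $L_2 = \sqrt 2$, so $d_n(\alpha) = \sigma^2\big[1 + \sqrt{2}\,\lambda_\alpha/\sqrt{2N+1}\big]$.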

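Let now $\sigma^2$ be unknown. We take as a $\sqrt N$-consistent estimate of the variance $\sigma^2$, for instance,

$$S_n^2 = \frac{1}{n}\sum_{i=1}^{n}\left[Y_i - \mu_{n\lambda}(x_i)\right]^2,$$

where $\lambda = \lambda(n) \to \infty$ is a sequence such that $\frac{\lambda}{N} \to 0$, $\frac{N\ln^2\lambda}{\lambda^4} \to 0$ and $\frac{N\lambda^4}{n} \to 0$ for $n \to \infty$.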
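A direct implementation of $S_n^2$ is sketched below, assuming NumPy; the equispaced design, the test signal, and the values of $n$ and λ are illustrative and do not follow the rate conditions literally. The residuals use the estimator (2) with $N$ replaced by λ, written in kernel form.

```python
import numpy as np

def dirichlet_kernel(u, N):
    """K_N(u) = (1/(2*pi)) * (1 + 2 * sum_{r=1}^{N} cos(r*u))."""
    u = np.asarray(u, dtype=float)
    r = np.arange(1, N + 1)
    return (1.0 + 2.0 * np.cos(np.multiply.outer(u, r)).sum(axis=-1)) / (2.0 * np.pi)

def variance_estimate(x, y, lam):
    """S_n^2 = (1/n) * sum_i [Y_i - mu_{n,lam}(x_i)]^2."""
    delta = np.diff(np.concatenate(([-np.pi], x)))
    fitted = dirichlet_kernel(np.subtract.outer(x, x), lam) @ (y * delta)
    return np.mean((y - fitted) ** 2)

rng = np.random.default_rng(3)
n, lam, sigma = 600, 6, 1.0
x = -np.pi + 2.0 * np.pi * np.arange(1, n + 1) / n
signal = np.sin(x) + 0.5 * np.cos(2 * x)              # hypothetical regression function
s2_noisy = variance_estimate(x, signal + sigma * rng.standard_normal(n), lam)
s2_clean = variance_estimate(x, signal, lam)          # no noise: residuals vanish
```

With no noise and a trigonometric polynomial of degree at most λ on an equispaced design, the fit is exact and `s2_clean` is zero up to rounding; with noise, `s2_noisy` is close to $\sigma^2$.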

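Indeed, using the expressions (11) and (29), we easily find

$$\sqrt N\left(ES_n^2 - \sigma^2\right) = O\left(\left(\frac{N\lambda}{n}\right)^{1/2}\right) + O\left(\frac{N^{1/2}\ln\lambda}{\lambda^2}\right). \tag{32}$$

Denote

$$Z_j = Y_j - R_j, \qquad R_j = \sum_{k=1}^{n} Y_k\Delta_k K_\lambda(x_j - x_k).$$

Then

$$n^2DS_n^2 = \sum_{j=1}^{n} DZ_j^2 + \sum_{i \ne i_1}\operatorname{cov}\left(Z_i^2, Z_{i_1}^2\right).$$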

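Simple calculations show that $\operatorname{cov}\left(Z_j^2, Z_{j_1}^2\right) = O\left(\frac{\lambda^4}{n}\right)$. Therefore $DS_n^2 = O\left(\frac{\lambda^4}{n}\right)$. This and (32) imply $\sqrt N\left(S_n^2 - \sigma^2\right) \xrightarrow{P} 0$.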

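Corollary. Let the conditions of Theorem 2 be fulfilled. Moreover, let $\frac{\lambda}{N} \to 0$, $\frac{N\lambda^4}{n} \to 0$ and $\frac{N\ln^2\lambda}{\lambda^4} \to 0$. Then

$$\sqrt{2N+1}\,S_n^{-2}L_2^{-1}\left(U_{nN} - S_n^2L_1\right) \xrightarrow{d} \xi,$$

$$\sqrt{2N+1}\,S_n^{-2}L_2^{-1}\left(T_{nN} - S_n^2L_1\right) \xrightarrow{d} \xi.$$

This corollary enables one to construct a test for verifying $H_0 : \mu(x) = \mu_0(x)$. The critical region is defined approximately by the inequality $U_{nN} \ge \tilde d_n(\alpha)$ or $T_{nN} \ge \tilde d_n(\alpha)$, where $\tilde d_n(\alpha)$ is obtained from $d_n(\alpha)$ by using $S_n^2$ instead of $\sigma^2$.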

Consider now the local behavior of the test power in the case where the critical region is of the form $\{x \in R^1,\ x \ge d_n(\alpha)\}$. More exactly, find the distribution of the quadratic functional $U_{nN}$ under a sequence of alternatives close to the hypothesis $H_0 : \mu(x) = \mu_0(x)$. The sequence is written as

$$H_1 : \bar\mu(x) = \mu_0(x) + \gamma_n\varphi(x) + o(\gamma_n), \tag{33}$$

where $\gamma_n \to 0$ appropriately and $o(\gamma_n)$ is uniform in $x \in [-\pi, \pi]$.

Theorem 3. Let $\bar\mu_n(x)$ satisfy the conditions of Theorem 2. If $2N+1 = n^\delta$, $\gamma_n = n^{-1/2+\delta/4}$, $\frac{2}{9} < \delta < \frac{1}{2}$, then under the alternative $H_1$ the statistic $(2N+1)^{1/2}(U_{nN} - \theta_1)$ is distributed in the limit normally $\left(\frac{1}{2\pi}\int_{-\pi}^{\pi}\varphi^2(u)\,du,\ \sqrt{\theta_2}\right)$.

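Proof. Let us represent $U_{nN}$ as the sum

$$U_{nN} = \frac{n}{2\pi(2N+1)}\int_{-\pi}^{\pi}\left[\mu_{nN}(x) - E_1\mu_{nN}(x)\right]^2 dx + \frac{n\gamma_n}{\pi(2N+1)}\int_{-\pi}^{\pi}\left[\mu_{nN}(x) - E_1\mu_{nN}(x)\right]\tilde\varphi_n(x)\,dx +$$

$$+ \frac{n\gamma_n^2}{2\pi(2N+1)}\int_{-\pi}^{\pi}\tilde\varphi_n^2(x)\,dx = A_1(n) + A_2(n) + A_3(n),$$

where $E_1(\cdot)$ denotes the mathematical expectation under the hypothesis $H_1$,

$$\tilde\varphi_n(x) = \sum_{j=1}^{n}\varphi(x_j)\Delta_j K_N(x - x_j).$$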

Due to Theorem 1 one can readily ascertain that $\sqrt{2N+1}\,(A_1(n) - \theta_1)$ is distributed asymptotically normally $(0, \sqrt{\theta_2})$.

By analogy with the proof of Lemma 2 we find

$$\sqrt{2N+1}\,A_3(n) = \frac{1}{2\pi}\int_{-\pi}^{\pi}\left[\int_{-\pi}^{\pi}\varphi(y)K_N(x-y)\,dy\right]^2 dx + O\left(\frac{N^2\ln N}{n}\right).$$

Hence, by virtue of Theorem 2 from [9], p.474, we have

$$\sqrt{2N+1}\,A_3(n) \to \frac{1}{2\pi}\int_{-\pi}^{\pi}\varphi^2(u)\,du.$$

Further, for our choice of $N$ and $\gamma_n$ we can show by simple calculations that

$$\sqrt{2N+1}\,E|A_2(n)| \le C\left[\frac{\ln n}{n^{\delta/4}} + \frac{\ln^2 n}{n^{1-7\delta/4}}\right].$$

Thus the local behaviour of the power $P_{H_1}(U_{nN} \ge d_n(\alpha))$ is

$$P_{H_1}\left(U_{nN} \ge d_n(\alpha)\right) \to 1 - \Phi\left(\lambda_\alpha - \theta_2^{-1/2}\,\frac{1}{2\pi}\int_{-\pi}^{\pi}\varphi^2(u)\,du\right). \tag{34}$$

Since $\int_{-\pi}^{\pi}\varphi^2(u)\,du \ge 0$ and is equal to zero iff $\varphi(x) = 0$, from (34) we conclude that the test for the hypothesis $H_0 : \mu(x) = \mu_0(x)$ against alternatives of the form (33) is asymptotically strictly unbiased.
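The limiting power in (34) is easy to evaluate for a given direction φ. The sketch below assumes NumPy and the standard-library `statistics.NormalDist`; as an assumption, $\lambda_\alpha$ is again taken as the upper α-point, so that the power equals α when $\varphi \equiv 0$. The example values ($\theta_2 = 2$ for a uniform design with $\sigma = 1$, and $\varphi(x) = 2\cos x$) are illustrative.

```python
import numpy as np
from statistics import NormalDist

def limiting_power(phi, theta2, alpha, M=4096):
    """Right-hand side of (34): 1 - Phi(lambda_alpha - theta2^(-1/2) * (1/(2*pi)) * int phi^2)."""
    h = 2.0 * np.pi / M
    u = -np.pi + h * (np.arange(M) + 0.5)                 # midpoint rule on [-pi, pi]
    shift = h * np.sum(phi(u) ** 2) / (2.0 * np.pi) / np.sqrt(theta2)
    lam = NormalDist().inv_cdf(1.0 - alpha)               # assumption: upper alpha-point
    return 1.0 - NormalDist().cdf(lam - shift)

power_null = limiting_power(lambda u: 0.0 * u, 2.0, 0.05)         # phi = 0: level alpha
power_alt = limiting_power(lambda u: 2.0 * np.cos(u), 2.0, 0.05)  # shift = 2 / sqrt(2)
```

Any nonzero φ gives a strictly positive shift and hence limiting power above α, which is the unbiasedness statement.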

Remark. Similar results can be obtained by the same method for the

kernel estimator of Priestley and Chao [1].

References

1. M.B. Priestley and M.T. Chao, Nonparametric function fitting. J. Roy. Statist. Soc. Ser. B 34(1972), 385-392.

2. N.N. Chentsov, Estimation of the unknown distribution density by

observations. (Russian) Dokl. Akad. Nauk SSSR 147(1962), 45-48.

3. N.V. Smirnov and I.V. Dunin-Barkovskii, A course in probability theory and mathematical statistics for technical applications. (Russian) Nauka, Moscow, 1969.

4. B.T. Polyak and A.V. Tsibakov, Asymptotic optimality of the Cp -test

in the projection estimation of the regression. (Russian) Teor. veroyatnost.

i primenen. 35(1990), No. 2, 305-317.

5. R.L. Eubank, J.D. Hart, and P. Speckman, Trigonometric series regression estimators with application to partially linear models. J. Multivariate Anal. 32(1990), 70-83.

6. R.Sh. Liptser and A.N. Shiryayev, A functional central limit theorem

for semimartingales. (Russian) Teor. veroyatnost. i primenen. 25(1980),

No.4, 683-703.

7. E.A. Nadaraya, Nonparametric estimation of probability densities and

regression curves. Kluwer Academic Publishers, Dordrecht, Holland, 1989.

8. A. Zygmund, Trigonometric series, vol. 1. Cambridge University

Press, Cambridge, 1959.

9. A.N. Kolmogorov and S.V. Fomin, Elements of the theory of functions

and functional analysis. (Russian) ”Nauka”, Moscow, 1989.

10. D. Jackson, The theory of approximation. American Mathematical

Society, New York, 1930.

(Received 1.07.1992)

Author’s address:

Faculty of Mechanics and Mathematics

I.Javakhishvili Tbilisi State University

2 University St., 380043 Tbilisi

Republic of Georgia