S D H UPERVISED

advertisement

S UPERVISED D ISCRETE H ASHING

F UMIN S HEN , C HUNHUA S HEN , W EI L IU , H ENG TAO S HEN

A major difficulty of learning to hash lies in

handling the discrete constraints imposed on the

pursued hash codes,

In the proposed framework, the label information is exploited such that these binary feature

vectors are easy to be classified. To implement

this idea, we propose a joint optimization problem which jointly learns the binary embeddings

and the linear classifier. We aim to learn a set of

binary codes B = {bi }ni=1 ∈ {−1, 1}L×n for X =

{xi }ni=1 to well preserve the semantic similarities.

We adopt the multi-class classification formula>

>

> >

tion y = G(b) = W b = [w1 b, · · · , wC b] where

wk ∈ RL×1 , k = 1, · · · , C is the classification vector for class k and y is the label vector, of which

the maximum item indicates the assigned class of

x. We then formulate the hashing problem as follows,

r

b ∈ {−1, 1} ,

which typically makes hash optimizations very

challenging (NPhard in general).

Relaxation: (1) discard the discrete constraints, (2) threshold (or quantize) the solved

continuous solution.

Such approximation typically leads to lowquality hash codes due to the accumulated quantization error, especially when with long code

lengths.

min

C ONTRIBUTIONS

We named the proposed supervised hashing

approach employing discrete cyclic coordinate

descent as Supervised Discrete Hashing (SDH).

Our contributions included

1. We proposed a novel supervised hashing

framework based on the assumption that

good hash codes are optimal for linear classification.

2. We proposed a supervised algorithm to

learn binary codes without continuous relaxations.

• Efficient: By means of discrete cyclic

coordinate descent (DCC), at each

step SDH solves the associated binary

optimization and obtains an analytical solution

• Locally optimal: Optimal solution for

each bit, with all other bits fixed.

B,W,F

n

X

L(yi , W> bi ) + λ||W||2 + ν

i=1

[1] F. Shen, C. Shen, W. Liu, H. T. Shen. Supervised Discrete

Hashing. In CVPR’15

||bi − F (xi )||2

(2)

L

s.t. bi ∈ {−1, 1} .

The hash function F (xi ) = P> φ(xi ), where φ(x)

is obtained by the RBF kernel mapping, is simultaneously learned to fit the generated binary bits.

The overall optimization problem is then solved

in an iterative fashion with three corresponding

sub-problems: each sub-problem solves for one

variable while keeping other two variables fixed.

With the `2 loss, it is trivial to solve for W and F .

The most important B sub-problem writes

>

2

min ||W B|| − 2Tr(B Q)

B

L×n

B ∈ {−1, 1}

s.t.

(3)

.

where Q = WY + νF (X).

We choose to learn the binary codes B by the

discrete cyclic coordinate descent (DCC) method. In

other words, We learn B bit by bit. Let z> be the

lth row of B, l = 1, · · · , L and B0 the matrix of B

excluding z. Then z is one bit for all n samples.

Similarly, let q> be the lth row of Q, Q0 the matrix

of Q excluding q, v> the lth row of W and W0 the

matrix of W excluding v. Then we have w.r.t. z:

min

R EFERENCES

n

X

i=1

>

z

s.t.

>

R ESULTS

F ORMULATION C ONT.

0>

0

>

(v W B − q )z

n

z ∈ {−1, 1} .

(4)

This problem has the optimal solution

z = sgn(q − B0> W0 v).

Table 2: Results on CIFAR with 64 bits.

Method

Precision MAP Training time Test time

BRE

0.1299

0.1156

12042.0

6.4e-5

MLH

0.2251

0.1730

2297.5

3.2e-5

KSH

0.1656

0.3822

2625.0

3.1e-6

SSH

0.2860

0.2091

96.9

3.6e-6

CCA-ITQ

0.3524

0.3379

4.3

1.7e-6

FastHash

0.1880

0.4187

1340.7

7.1e-4

SDH

0.4229

0.4555

62.6

2.6e-6

(1)

It is easy to see form (1) that, each bit z is computed based on the pre-learned L − 1 bits B0 . This

is expected because, one can iteratively update

each bit till the procedure converges with a set of

better codes B. In our experiments, the whole L

bits for X can be iteratively learned in tL times by

(1), where usually t = 2 ∼ 5.

By carefully choosing loss functions of the

classifier, the DCC method produces the optimal hash bits in a closed form, which consequently makes the entire optimization procedure

very efficient. Therefore, the proposed binary

code learning method can easily deal with largescale datasets. We name the proposed supervised

hashing method employing discrete cyclic coordinate as Supervised Discrete Hashing (SDH).

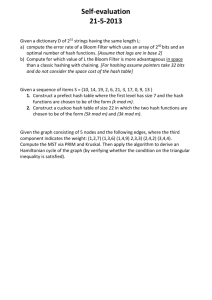

Fig. 1: Results on NUS-WIDE.

NUSWIDE

0.6

0.5

Precision

F ORMULATION

0.4

0.3

0.1

0

D ISCRETE OR N OT ?

To see how much the discrete optimization

will benefit the hash code learning, we perform

a comparison of our hash formulation (2) with or

without the discrete constraints. The comparative

results on CIFAR are shown in Table 1. As can be

seen, our discrete hashing framework SDH consistently yields better hash codes than the relaxed

one by removing the sign function. In particular for precision, the performance gaps between

these two methods are increased with longer hash

bits.

Table 1: Discrete vs. relaxed solution

Constraint 32 bits 64 bits 96 bits

Discrete

0.5090 0.4229 0.3515

Precision

Relaxed

0.4718 0.3354 0.1685

Discrete

0.4307 0.4555 0.4582

MAP

Relaxed

0.3777 0.4150 0.4244

S OURCE CODE

Available

soon

at

https://sites.

google.com/site/shenfumin or https:

//github.com/bd622.

SDH

KSH

CCA−ITQ

BRE

MLH

0.2

16 32

64

96

Code length

NUSWIDE

128

0.55

0.5

MAP

P ROBLEM

0.45

0.4

0.35

16 32

64

96

Code length

128

Table 3: Classification accuracy on CIFAR

with the learned binary codes by linear SVM

Method

64 bits 128 bits

BRE

0.332

0.335

CCA-ITQ 0.564

0.570

SSH

0.432

0.460

KSH

0.590

0.598

FastHash

0.578

0.603

SDH

0.636

0.651

GIST

0.580