Analysis of Variance in Matrix form STA302/1001 - week 10


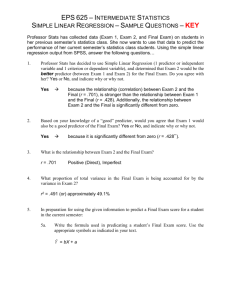

Analysis of Variance in Matrix form

• The ANOVA table sums of squares, SSTO, SSR and SSE, can all be expressed in matrix form as follows…

Multiple Regression

• A multiple regression model is a model that has more than one explanatory variable in it.
• Some of the reasons to use multiple regression models are:
  ➢ Often multiple X’s arise naturally from a study.
  ➢ We want to control for some X’s.
  ➢ We want to fit a polynomial.
  ➢ We want to compare regression lines for two or more groups.

Multiple Linear Regression Model

• In a multiple linear regression model there are p predictor variables.
• The model is

$$Y_i = \beta_0 + \beta_1 X_{i1} + \beta_2 X_{i2} + \cdots + \beta_p X_{ip} + \varepsilon_i, \quad i = 1, \ldots, n$$

• This model is linear in the β’s. The variables may be non-linear, e.g., log(X1), X1*X2, etc.
• We need to estimate p + 1 β’s and σ².
• There are p + 2 parameters in this model and so we need at least that many observations to be able to estimate them, i.e., we need n ≥ p + 2.

Multiple Regression Model in Matrix Form

• In matrix notation the multiple regression model is Y = Xβ + ε, where

$$
Y = \begin{bmatrix} Y_1 \\ Y_2 \\ \vdots \\ Y_n \end{bmatrix}, \quad
\varepsilon = \begin{bmatrix} \varepsilon_1 \\ \varepsilon_2 \\ \vdots \\ \varepsilon_n \end{bmatrix}, \quad
\beta = \begin{bmatrix} \beta_0 \\ \beta_1 \\ \vdots \\ \beta_p \end{bmatrix}, \quad
X = \begin{bmatrix}
1 & X_{11} & \cdots & X_{1p} \\
1 & X_{21} & \cdots & X_{2p} \\
\vdots & \vdots & & \vdots \\
1 & X_{n1} & \cdots & X_{np}
\end{bmatrix}
$$

• Note, Y and ε are n × 1 vectors, β is a (p + 1) × 1 vector and X is an n × (p + 1) matrix. The matrix X is called the ‘design matrix’.
• The Gauss-Markov assumptions are: E(ε | X) = 0, Var(ε | X) = σ²I.
• These result in E(Y | X) = Xβ, Var(Y | X) = σ²I.
• The least-squares estimate of β is

$$b = (X'X)^{-1} X'Y$$

Estimate of σ²

• The estimate of σ² is:

$$s^2 = MSE = \frac{\sum_i e_i^2}{df_{error}} = \frac{e'e}{n - p - 1}$$

• It has n − p − 1 degrees of freedom because…
• Claim: s² is an unbiased estimator of σ².
• Proof:

General Comments about Multiple Regression

• The regression equation gives the mean response for each combination of explanatory variables.
• The regression equation will not be useful if it is very complicated or a function of a large number of explanatory variables.
• We generally want a “parsimonious” model, that is, a model that is as simple as possible while still adequately describing the response variable.
• It is unwise to think that there is some exact, discoverable equation.
• Many possible models are available.
• One or two models may adequately approximate the mean of the response variable.

Example – House Prices in Chicago

• Data on 26 house sales in Chicago were collected (clearly collected some time ago). The variables in the data set are:
  price - selling price in $1000's
  bdr - number of bedrooms
  flr - floor space in square feet
  fp - number of fireplaces
  rms - number of rooms
  st - storm windows (1 if present, 0 if absent)
  lot - lot size (frontage) in feet
  bth - number of bathrooms
  gar - garage size (0 = no garage, 1 = one-car garage, etc.)

Interpreting Regression Coefficients

• In general, in multiple regression we interpret the coefficient of the jth predictor variable (βj or bj) as the change in Y associated with a change of one unit in Xj with all the other variables held constant.
• Note that it may be impossible to hold all the other variables constant.
• Example: in the house price example above, for 100 extra square feet (everything else held constant), the price goes up by $1760 on average. For one more room (everything else held constant), the price goes up by $3900 on average. For one more bedroom (everything else held constant), the price goes down by $7700 on average.

Inference for Regression Coefficients

• As in simple linear regression, we are interested in testing H0: βj = 0 versus Ha: βj ≠ 0.
• The test statistic is

$$t_{stat} = \frac{b_j}{S.E.(b_j)}$$

  Under H0, it has a t-distribution with n − p − 1 degrees of freedom.
• We can calculate the P-value from the t-table with n − p − 1 df.
• This test gives an indication of whether or not the jth predictor variable contributes statistically significantly to the prediction of the response variable over and above all the other predictor variables.
• A confidence interval for βj (assuming all the other predictor variables are in the model) is given by:

$$b_j \pm t_{(n-p-1);\,\alpha/2} \cdot S.E.(b_j)$$

ANOVA Table

• The ANOVA table in the multiple regression model is given by…

Coefficient of Multiple Determination – R²

• As in the simple linear regression model, R² = SSReg/SST.
• In multiple regression this is called the “coefficient of multiple determination”; it is no longer the square of the correlation between Y and a single predictor (it equals the squared correlation between Y and the fitted values).
• In multiple regression, we need to be cautious when judging a model by R², because it never decreases (and typically goes up) when more predictor variables are added to the model, regardless of whether those predictor variables are useful for predicting Y.

Adjusted R²

• An attempt to make R² more useful is to calculate Adjusted R² (“Adj R-Sq” in SAS).
• Adjusted R² is adjusted for the number of predictor variables in the model.
• It can actually go down when more predictors are added.
• It can be used for choosing the best model.
• It is defined as

$$\text{Adj } R^2 = 1 - \frac{(n - 1)\,MSE}{SST} = 1 - \frac{n - 1}{n - p - 1} \cdot \frac{SSE}{SST}$$

• Note that Adjusted R² will increase only if MSE decreases.

ANOVA F Test in Multiple Regression

• In multiple regression, the ANOVA F test is designed to test the following hypotheses:

$$H_0: \beta_1 = \beta_2 = \cdots = \beta_p = 0$$
$$H_a: \text{at least one of } \beta_1, \beta_2, \ldots, \beta_p \text{ is not } 0$$

• This test aims to assess whether or not the model has any predictive ability.
• The test statistic is

$$F_{stat} = \frac{MSReg}{MSE}$$

• If H0 is true, the above test statistic has an F distribution with p and n − p − 1 degrees of freedom.
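
The quantities on the preceding slides (b, MSE, the t statistics, R², Adjusted R² and the ANOVA F statistic) can all be computed directly from the matrix formulas. The following is a minimal sketch in Python with NumPy, not the course's SAS workflow. It runs on simulated data rather than the Chicago house data; the function name `ols_anova`, the simulated coefficients and the seed are assumptions made for illustration. The standard errors use the standard result S.E.(b_j) = sqrt(MSE · [(X'X)⁻¹]_jj), which the slides do not state explicitly.

```python
import numpy as np

def ols_anova(X, y):
    """Fit Y = Xb + e by least squares and return the ANOVA quantities.

    X is n x p (predictors only); the column of ones is added here.
    """
    n, p = X.shape
    Xd = np.column_stack([np.ones(n), X])        # design matrix, n x (p + 1)
    xtx = Xd.T @ Xd
    b = np.linalg.solve(xtx, Xd.T @ y)           # b = (X'X)^{-1} X'Y
    fitted = Xd @ b
    e = y - fitted                               # residual vector
    sse = e @ e                                  # SSE = e'e
    ssreg = ((fitted - y.mean()) ** 2).sum()     # regression sum of squares
    sst = ((y - y.mean()) ** 2).sum()            # total sum of squares
    mse = sse / (n - p - 1)                      # s^2, with n - p - 1 df
    se_b = np.sqrt(mse * np.diag(np.linalg.inv(xtx)))  # S.E.(b_j)
    return {
        "b": b,
        "se": se_b,
        "t": b / se_b,                           # t statistics for H0: beta_j = 0
        "mse": mse, "sse": sse, "ssreg": ssreg, "sst": sst,
        "r2": ssreg / sst,
        "adj_r2": 1 - (n - 1) * mse / sst,
        "f": (ssreg / p) / mse,                  # F = MSReg / MSE on (p, n-p-1) df
    }

# Hypothetical data: 26 observations, 3 predictors; the third has no true effect.
rng = np.random.default_rng(0)
n, p = 26, 3
X = rng.normal(size=(n, p))
y = 10 + 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.5, size=n)

fit = ols_anova(X, y)
print("b       =", np.round(fit["b"], 3))
print("t stats =", np.round(fit["t"], 2))
print("R2      =", round(fit["r2"], 4), " Adj R2 =", round(fit["adj_r2"], 4))
print("F       =", round(fit["f"], 2), "on", p, "and", n - p - 1, "df")
```

P-values are left out on purpose: they would come from the t and F tables (or a statistics library) using the degrees of freedom printed above.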

F-Test versus t-Tests in Multiple Regression

• In multiple regression, the F test is designed to test the overall model while the t tests are designed to test individual coefficients.
• If the F-test is significant and all or some of the t-tests are significant, then there are some useful explanatory variables for predicting Y.
• If the F-test is not significant (large P-value) and none of the t-tests is significant, it means that no explanatory variable contributes to the prediction of Y.
• If the F-test is significant and none of the t-tests is significant, then it is an indication of “multicollinearity”, i.e., correlated X’s. It means that individual X’s don’t contribute to the prediction of Y over and above the other X’s.
• If the F-test is not significant and some of the t-tests are significant, it is an indication of one of two things:
  ➢ The model has no predictive ability, but if there are many predictors, a few may have small P-values (type I errors in the t-tests).
  ➢ Predictors were chosen poorly. If one useful predictor is added to many that are unrelated to the outcome, its contribution may not be enough for the model to be significant (F-test).

Multicollinearity

• Multicollinearity occurs when explanatory variables are highly correlated, in which case it is difficult or impossible to measure their individual influence on the response.
• The fitted regression equation is unstable.
• The estimated regression coefficients vary widely from data set to data set (even if the data sets are very similar) and depending on which predictor variables are in the model.
• The estimated regression coefficients may even have the opposite sign from what is expected (e.g., bedrooms in the house price example).
• The regression coefficients may not be statistically significantly different from 0 even when the corresponding explanatory variable is known to have a relationship with the response.
• When some X’s are perfectly correlated, we can’t estimate β because X’X is singular.
• When X’X is close to singular, its determinant is close to 0 and the standard errors of the estimated coefficients will be large.

Quantitative Assessment of Multicollinearity

• To assess multicollinearity we calculate the Variance Inflation Factor for each of the predictor variables in the model.
• The variance inflation factor for the ith predictor variable is defined as

$$VIF_i = \frac{1}{1 - R_i^2}$$

  where Ri² is the coefficient of multiple determination obtained when the ith predictor variable is regressed against the p − 1 other predictor variables.
• A large value of VIFi is a sign of multicollinearity.
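
The VIF definition above translates almost line for line into code: regress each predictor on the remaining ones, take that regression's R², and invert 1 − R². The sketch below is in Python with NumPy and uses simulated predictors; the function name `vif` and the data (a third column built as a noisy copy of the first) are assumptions made for illustration, not part of the course material.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each column of X (n x p, no intercept column)."""
    n, p = X.shape
    out = np.empty(p)
    for i in range(p):
        target = X[:, i]
        # Regress the ith predictor on an intercept plus the other p - 1 predictors.
        others = np.column_stack([np.ones(n), np.delete(X, i, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2_i = 1.0 - (resid @ resid) / ((target - target.mean()) ** 2).sum()
        out[i] = 1.0 / (1.0 - r2_i)              # VIF_i = 1 / (1 - R_i^2)
    return out

# Hypothetical predictors: x3 is nearly a copy of x1, x2 is unrelated to both.
rng = np.random.default_rng(1)
n = 200
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + 0.1 * rng.normal(size=n)
X = np.column_stack([x1, x2, x3])

vifs = vif(X)
print(np.round(vifs, 2))   # large for x1 and x3, close to 1 for x2
```

In this setup the two nearly collinear columns get large VIFs while the unrelated column stays near 1, which is the pattern the slide describes. The same values can be cross-checked against the diagonal of the inverse of the predictors' correlation matrix.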