Model Order Reduction for Wave Propagation

advertisement

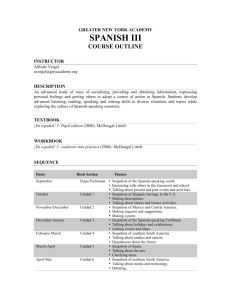

Speeding Up Seismic Imaging with Reduced-Order Modeling Victor Pereyra Energy Resources Engineering Stanford University Scientific Computing and Matrix Computations Seminar U.C. Berkeley 2/13/13 Seismic Oil Exploration Seismic oil exploration and reservoir imaging require the processing of very large scale data sets. Data is collected in the time domain but maps of the underground are required in the space domain. The physics involved is wave propagation in complex media. One of the most sophisticated data processing employed is Depth Migration and until recently only approximate solutions to the wave equation were used, such as the one way or parabolic approximation. This has limitations in complex areas. Current technology is called Reverse Time Migration that uses full wave simulation from the sources and backward propagation of the seismic data from the receivers in order to create depth migrated images from seismic data by cross-correlation. Full waveform tomography is another technique used to improve velocity models that requires large computing resources. All these techniques require massive numbers of simulations. In 3D it is not unusual to manipulate many terabytes of data in large clusters. These problems are still a challenge today even with large dedicated clusters, both because of computing, storage requirements and network traffic. BP builds world’s largest supercomputing complex for exploration applications BP has started to build in Houston what it says will be the largest commercial supercomputing complex in the world. The High-Performance Computing center is scheduled to open in mid-2013.The HPC will be the worldwide hub for processing and managing geologic and seismic data across all of BP’s assets. The HPC will be equipped with 67,000 CPUs and is expected to process data at a rate of as much as two petaflops. The total memory will be 536 terabytes and the disk space will total 23.5 Reverse Time Migration (RTM) Overturned Rays Courtesy of Antoine Guitton and Bruno Kaelin Reduced-order modeling It would be of great interest to be able to run these multiple simulations faster and using less data. Model order reduction is a technique that has been used in many application domains to do just that. Since there is a paucity of work for applications to the wave equation in the time domain, we are performing some feasibility studies in order to see if the resulting approximate solutions are sufficiently accurate and cost effective for some of these problems. Fast wave propagation by model order reduction. V. Pereyra and B. Kaelin. ETNA 30, 2008. Wave equation simulation in two-dimensions using a compressed modeler. V. Pereyra. Submitted for publication in ETNA, 2012. Acoustic Wave Equation Consider the wave equation in inhomogeneous media, discretized in space : 2w/t2 = Aw + Bu(t), v = Cw, Where xRl, w(t)Rn and A (discrete Laplacian),B (source term),C (output) are appropriate matrices, u(t) is an input forcing function and v is the desired output. n will usually be very large. Basic Idea The dynamics is obtained by integrating this very large system of Ordinary Differential Equations (method of lines). The procedure we propose attempts to obtain an approximate solution by reducing drastically the size of this system of ODE’s. This is specially important and useful when rapid response or repeated simulations are required. It is assumed that we have the ability to perform a few full size (high fidelity) solves. Proper Orthogonal Decomposition (POD) From one or a few full fidelity simulations we extract a certain number of snapshots: i.e., spatial states of the field at different times, fi, i=1,…,m. We form a matrix F (nxm), where n is the number of degrees of freedom of the original spatial discretization (veeery large) and m is the number of snapshots (relatively small). Then we proceed to calculate its QR Decomposition: F = Q R. The columns of the matrix Q form an orthogonal basis for the space of columns of F, the snapshots, and R is upper triangular. We actually use a progressive QR, in which a new snapshot is reduced on the fly and if its angle with the existing basis is not large enough it is skipped. In this way we incorporate some adaptivity in the snapshot selection and end up with a well conditioned basis. Reduced System Now we use Galerkin collocation on the original equations, by proposing the Ansatz: w = Q a(t). The key here is to introduce the time dependence through the weights a(t). Replacing in the wave equation we obtain the reduced system (of size m, the number of snapshots): att = (QTAQ) a(t) + (QTB) u(t) Observe that we still can input a source (it does not have to be the same source wavelet or even be applied at the same location as in the problem that generated the snapshots); we can also change the material properties or change the output in this reduced equation. i.e., we can change A, B (not drastically, obviously). High Fidelity and MOR Codes As a full fidelity code we use an 8th order finite difference approximation in space and a Runge-KuttaFehlberg fourth order integrator in time. Absorbing boundary conditions are included (R. Kosloff and D. Kosloff, Absorbing boundaries for wave propagation problems. J. Comp. Physics 63:363-376 (1986).) The second order acoustic wave equation is written in first order form (as required by RKF45), so that doubles the number of ODE’s for the time integration. The MOR code implements the procedure indicated above, using snapshots of previously run HF simulations. Vel4: a Large Size 2D Model In this example we consider an inhomogeneous model with the following specs: dx=dz=6.25m; dt=0.05sec (snapshot spacing) nx=2001, nz=2001 (total # space mesh n = 4004001). Nine shots are placed on the free surface, starting at x=5931.25 m, spaced by 25 m for a total distance of 200 m. We take 200 snapshots from the first and last shot simulations, for a total of 400 snapshots. With a threshold of the angle between a candidate snapshot and the current basis of 5o, we select m = 363 basis snapshots. We use RKF45, a standard Runge-Kutta 4th order solver to integrate the 8008002 ODE’s in time. RKF45 adapts its integration step to preserve stability and achieve a prescribed accuracy (in this case 0.001). A Complex, Large Scale 2D Model. Vel4, a Salt Body Courtesy of BP Velocity model. 2001 x 2001 mesh. Salt velocity is very high 13,000 ft/sec Seismic Section: A number of seismograms plotted side by side Performance Observe that if we consider the preprocessing time and prorate it over the seven internal shots, then the gain in time is much smaller, but still significant: 5.7. HF is Open MP optimized while MOR is not. This was run in a 12 processors machine and HF had parallel efficiency of 80%. This factor would be much larger in 3D or if we consider more internal shots. Accuracy. Crossplot of a Trace: HF vs MOR Midway Shot Full waveform inversion In this application we have seismic data collected (say) at the free surface on a number of receivers: Sio(tj) and a parameterized velocity model v(a). Starting from an initial guess v(a0) we use the HF and MOR codes to generate the calculated response at the receivers and use a least squares data fit to improve upon the values of the velocity parameters. For this purpose we employ a Levenberg-Marquardt code that approximates gradients with finite differences (LMDIF from MINPAK). Regularization/Convexification/Continuation RCC For difficult inverse problems is recommended to use regularization, i.e., add to the misfit functional a term of the form: mina (1-l)||So – Sc(a)||22 + l ||a – a0||22, that penalizes large changes in the unknown parameters from some reference value. We observe that the penalty term is a convex function. Also, this is the form for homotopy continuation, where we start from a known solution (a0, for l =1) and move l incrementally, towards 0, the problem we really want to solve. Multimodal Response Function We consider a simple 3 constant layers model and vary only the velocity in the first layer. We plot the response function for a range of values of v1 and of l. Some Preliminary Inversion Results We consider the same 2D, 3 homogeneous layer problem. Model size: 3500x3500. The data is generated by the HF code and corresponds to a shot at x=1600. There are 200 receivers spaced by 10m, starting at x=700 (400 samples per receiver). l was set to 0.05. The purpose is to recover the (constant) velocity in the layers, when starting away from the values that generated the synthetic data. This results in a 80000 x 3 nonlinear least squares problem. MOR uses 108 snapshots from the first HF simulation at a0. Numerical results Target Initial Final HF Final MOR-2 v1 1500 1400 1500 1500 v2 2500 2600 2500 2500 v3 3500 3425 3500 3500 Evals. 67 37 Total time 5025” 397.75” Final residual 0.0 0.0 Speed up = 5025/397.75 = 12.63 Using snapshots at initial guess. A Larger Problem: Model vel4pc Space: 2001x2001 7 parameters No regularization 12 threads (mainly for High Fidelity; efficiency 90%) HF Time/fcn = 5580” MOR Time/fcn = 186”; Pre-process Time: 3349”; rank=122 Ratio: 11.83 Ratio for 30 iters. Including pre-processing: 7.4 Model vel4pc: Complex Geology The real interest: 3D Currently, parallelization in a distributed setting is done per shot. In 3D, simulating a survey may require 50,000 shots. Each shot is simulated in a multi-core, large memory node and many nodes are used to simulate the whole survey. Can MOR help? Yes and no. What we did in 2D shows that the method works and will provide savings as long as the proportion of MOR and HF+pre-processing is adequate. This would in principle work even better in 3D. The problem with 3D is that those problems get too big to fit in current machinery. 3D: sizes of interest The local models associated with a shot can be as large as: nx=1000, ny=1000, nz=5000, so that snapshots will have dimension N=5x109, i.e., 20 Giga-bites in single precision. As the frequency, resolution and complexity of the models are increased, more snapshots are necessary. Say we consider 1000 snapshots. That means that the size of the matrix of snapshots is 20 Tera-bites, which is about 200 times larger than current large memory single nodes. With Michael Mahoney we are starting to look into randomized algorithms to see if those can do the trick. Proper Slanted Decomposition (PSD) The only place where orthogonality was required in POD is when eliminating U from the left hand side. Let us consider what happens if we use the original matrix of snapshots S instead. For this we assume that the solution is of the form: u(t)=S a(t). Thus: S att = A S a + B u(t) – 2 D(e) S at. Now, we multiply by ST to get att =(STS)-1 ST A S a + ST B … The coefficient of the first term in the right hand side X can be obtained by solving the matrix LS problem: SX=AS LSRN: A parallel iterative solver In a recent still unpublished work, Meng, Saunders and Mahoney describe a code for solving large, strongly over determined systems by least squares. Moreover, there is a publicly available code LSNR. The method uses random projections to generate a preconditioner that then makes iterative methods such as conjugate gradients or Chebyshev run very efficiently. We are investigating if this, or similar approaches in randomized linear algebra, can help in cracking this problem. Conclusions At this evaluation stage one can monitor the true error by comparing the MOR and the HF solutions as one walks away from the problems that generated the basis snapshots. We have done that and as we showed in one of the examples, that there are increasing errors as we solve problems farther away. The errors, however, seem to be concentrated on the amplitudes, while the phases of the events are quite accurate. This is good, since the phases are more important than the amplitudes in migration algorithms. It is important not to loose sight of the final objective of the simulations (whatever that might be). We are planning to test this technique for more applications in seismic data processing, to see how the approximation error affects the final result. The more MOR we can use for the same basis, the more efficient the overall approach will be. Very large problems are still out of reach. NEW BOOK