Slides

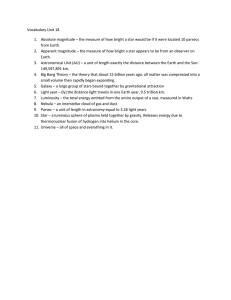


This work was conducted at West Virginia University and the Jet Propulsion Laboratory under grants with NASA's Software Assurance Research Program. Reference herein to any specific commercial product, process, or service by trademark, manufacturer, or otherwise, does not constitute or imply its endorsement by the United States Government.

If you fix everything, you lose fixes for everything else

Tim Menzies (WVU)

Jairus Hihn (JPL)

Oussama Elrawas (WVU)

Dan Baker (WVU)

Karen Lum (JPL)

International Workshop on Living with Uncertainty, IEEE ASE 2007, Atlanta, Georgia, Nov 5, 2007

tim@menzies.us

oelrawas@mix.wvu.edu

What does this mean?

– A supposedly NP-hard task: abduction over first-order theories (nogood/2)
– Q: for what models does (a few peeks) = (many hard stares)?

A: models with “collars”

“Collar” variables set the other variables
– Narrows: Amarel in the 60s
– Minimal environments: DeKleer ’85
– Master variables: Crawford & Baker ‘94
– Feature subset selection: Kohavi & John ‘97
– Back doors: Williams et al ‘03
– Etc

If “collars”, then
– … small rules …
– … learned quickly …
– … will suffice

Grow
– Monte Carlo a model: picking input settings at random
– For each run
  – Score each output
  – Add score to each input setting

Harvest
– Rule generation experiments, favoring settings with better scores

Implications for uncertainty?
– Feather & Menzies RE’02
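The grow and harvest steps can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the three-input `model`, the `RANGES` table and the function names `grow` and `harvest` are all hypothetical, and the harvest step here simply returns the top-ranked settings rather than running rule generation experiments.

```python
import random
from collections import defaultdict

# Hypothetical toy model, not from the talk: three inputs with three settings
# each. Only "a" and "b" influence the output, so they act as the collars.
RANGES = {"a": [1, 2, 3], "b": [1, 2, 3], "c": [1, 2, 3]}

def model(x):
    """Toy score (higher is better); input "c" has no effect."""
    return 10 * x["a"] - 5 * x["b"]

def grow(runs, rng):
    """Monte Carlo the model; add each run's score to every setting it used."""
    cumulative = defaultdict(float)
    for _ in range(runs):
        x = {name: rng.choice(values) for name, values in RANGES.items()}
        score = model(x)
        for setting in x.items():      # a setting is an (input, value) pair
            cumulative[setting] += score
    return cumulative

def harvest(cumulative, top):
    """Return the `top` settings with the highest cumulative score."""
    return sorted(cumulative, key=cumulative.get, reverse=True)[:top]

rng = random.Random(1)
best = harvest(grow(2000, rng), top=2)
print(best)
```

In this toy, the settings that rise to the top belong to the two inputs that actually drive the output, while every setting of the irrelevant input "c" lands in the middle of the ranking.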

For example

STAR: collars + simulated annealing on Boehm’s USC software process models

USC software process models for effort, defects, threats
– y[i] = impact[i] * project[i] + b[i] for i ∈ {1,2,3,…}
– .. ≤ project[i] ≤ .. : uncertainty in project description (controllable)
– .. ≤ impact[i] ≤ .. : uncertainty in model calibration (uncontrollable)

Random solution
– pick project[i] and impact[i] from any .. , ..
– .. set via domain knowledge; e.g. process maturity in 3 to 5
– range of .. known from history

Score solution by effort (Ef), defects (De) and threat (Th)
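A random solution under y[i] = impact[i] * project[i] + b[i] might be drawn as follows. This is a sketch under stated assumptions: the attribute names, every numeric bound and the intercepts in `B` are invented for illustration (only "process maturity in 3 to 5" comes from the slide), and how the y[i] terms combine into effort, defects and threat is not shown on the slide, so it is left out.

```python
import random

# Hypothetical bounds, for illustration only. On the slide, project ranges come
# from domain knowledge and impact ranges come from history.
PROJECT_BOUNDS = {"process_maturity": (3, 5), "team_experience": (1, 4)}
IMPACT_BOUNDS = {"process_maturity": (-0.20, -0.10), "team_experience": (-0.15, -0.05)}
B = {"process_maturity": 1.5, "team_experience": 1.4}   # assumed intercepts b[i]

def random_solution(rng):
    """Pick project[i] and impact[i] uniformly from within their bounds."""
    project = {k: rng.uniform(lo, hi) for k, (lo, hi) in PROJECT_BOUNDS.items()}
    impact = {k: rng.uniform(lo, hi) for k, (lo, hi) in IMPACT_BOUNDS.items()}
    return project, impact

def outputs(project, impact):
    """Apply y[i] = impact[i] * project[i] + b[i] to every attribute i."""
    return {k: impact[k] * project[k] + B[k] for k in project}

rng = random.Random(0)
project, impact = random_solution(rng)
y = outputs(project, impact)
print(y)
```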

Two studies

y[i] = impact[i] * project[i] + b[i]

one: Certain methods
– Using much historical data
– Learn the magnitude of the impact[i] relationship
– With fixed impact[i], Monte Carlo at random across the project[i] settings
– Tame uncontrollables via historical records
E.g.
– Regression-based tools that learn impact[i] from historical records
– 93 records of JPL systems
– SCAT: JPL’s current methods
– 2CEE: WVU’s improvement over SCAT (currently under test)

two: Methods with more uncertainty
– Using no historical data
– Monte Carlo at random across the project[i] settings and impact[i] settings
E.g.
– STAR
  – Monte Carlo a model
  – Score each output
  – Sort settings by their “C”, where “C” = cumulative score
  – Rule generation experiments, favoring settings with better “C”

Inside STAR

1. sampling: simulated annealing
2. summarizing: post-processor

– for setting ∈ Sx { value[setting] += E }
– Sort all settings by their value
– Ignore uncontrollables impact[i]
– Assume the top (1 ≤ i ≤ max) project[i] settings
– Randomly select the rest
– “Policy point”: smallest i with lowest E
– Median = 50% percentile
– Spread = (75-50)% percentile

[Figure: settings sorted from Good to Bad; 22 good ideas, 38 not-so-good ideas]
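The post-processing loop can be sketched as below. It is only an approximation of the steps listed on the slide: the `energy` function and `RANGES` are hypothetical stand-ins, the ranked list of settings is given rather than produced by simulated annealing, and "lowest E" is read here as lowest median E (lower is better).

```python
import random
import statistics

RANGES = {"a": [1, 2, 3], "b": [1, 2, 3], "c": [1, 2, 3]}   # hypothetical inputs

def energy(x):
    """Hypothetical stand-in for E (lower is better); "c" is irrelevant."""
    return 10 * x["a"] + 5 * x["b"]

def median_and_spread(values):
    """Median = 50th percentile; spread = 75th minus 50th percentile."""
    _, q2, q3 = statistics.quantiles(values, n=4)
    return q2, q3 - q2

def evaluate(fixed, samples, rng):
    """Assume the fixed settings, randomly select the rest, summarise E."""
    energies = []
    for _ in range(samples):
        x = {name: rng.choice(values) for name, values in RANGES.items()}
        x.update(fixed)
        energies.append(energy(x))
    return median_and_spread(energies)

def policy_point(ranked, samples, rng):
    """Return (i, median, spread) for the smallest i with the lowest median E."""
    results = []
    for i in range(1, len(ranked) + 1):
        median, spread = evaluate(dict(ranked[:i]), samples, rng)
        results.append((i, median, spread))
    lowest = min(median for _, median, _ in results)
    return next(r for r in results if r[1] == lowest), results

# Settings already sorted best-first by their value (assumed input).
ranked = [("a", 1), ("b", 1), ("c", 1)]
point, results = policy_point(ranked, 500, random.Random(0))
print(point)
```

Fixing the third setting adds nothing in this toy, so the policy point stops at the first two: those are the collars.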

SCAT vs 2CEE vs STAR

– All three take project[i] as input
– SCAT, 2CEE: control impact[i] via historical data
– STAR: stagger around superset of possible impact[i]

SCAT vs 2CEE vs STAR: Flight (effort)

[Figure: bar chart of median and spread for SCAT, 2CEE and STAR; y-axis 0 to 1600]
– Median: 50% point
– Spread: (75 - 50)%
– STAR/2cee = 50/800 = 6%
– STAR/scat = 50/1300 = 4%

SCAT vs 2CEE vs STAR: OSP2, OSP, Ground and Flight (effort)

[Figure: four bar charts (OSP2, OSP, Ground, Flight), each showing median and spread of effort for SCAT, 2CEE and STAR]
– Median: 50% point
– Spread: (75 - 50)%

Ratios annotated on the charts:
– STAR/2cee = 50/800 = 6%; STAR/scat = 50/1300 = 4%
– STAR/2cee = 30/620 = 5%; STAR/scat = 30/730 = 4%
– STAR/2cee = 400/1600 = 25%; STAR/scat = 400/1900 = 21%
– STAR/2cee = 180/400 = 45%; STAR/scat = 180/1900 = 60%
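The STAR/2cee and STAR/scat ratios quoted on the effort charts can be re-derived with simple arithmetic. The short check below copies the eight printed ratios into a list (the tuple layout and names are my own) and recomputes each percentage. Seven reproduce after rounding. The last one does not, since 180/1900 is roughly 9%, so one of its printed figures may have been garbled. The sketch does not guess which.

```python
# Ratios as printed on the slides: (label, numerator, denominator, printed %).
PRINTED = [
    ("STAR/2cee", 50, 800, 6),
    ("STAR/scat", 50, 1300, 4),
    ("STAR/2cee", 30, 620, 5),
    ("STAR/scat", 30, 730, 4),
    ("STAR/2cee", 400, 1600, 25),
    ("STAR/scat", 400, 1900, 21),
    ("STAR/2cee", 180, 400, 45),
    ("STAR/scat", 180, 1900, 60),
]

def percent(numerator, denominator):
    """Ratio as a whole-number percentage."""
    return round(100 * numerator / denominator)

# Keep only the entries whose recomputed percentage differs from the printed one.
mismatches = [row for row in PRINTED if percent(row[1], row[2]) != row[3]]
print(mismatches)
```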


SCAT vs 2CEE vs STAR: OSP2, OSP, Ground and Flight (effort)

[Figure: four bar charts (OSP2, OSP, Ground, Flight), each showing median and spread of effort for SCAT, 2CEE and STAR]

Ignoring historical data is useful (!!!?)


SCAT vs 2CEE vs STAR

– Ignoring historical data is useful (!!!?)
– If you fix everything, you lose fixes for everything else

Luke, trust the force, I mean, collars

“The strangest thing about software”, IEEE Computer, Jan 2007

Extra Material

Related work

Abduction:
– World W = minimal set of assumptions (w.r.t. size) such that
  – T ∪ A => G
  – Not(T ∪ A => error)
– Framework for validation, diagnosis, planning, monitoring, explanation, tutoring, test case generation, prediction, …
– Theoretically slow (NP-hard) but this should be practical:
  – Abduction + stochastic sampling
  – Find collars
  – Learn constraints on collars

Feather, DDP, treatment learning
– Optimization of requirement models

XEROX PARC, 1980s, qualitative representations (QR)
– not overly-specific,
– Quickly collected in a new domain.
– Used for model diagnosis and repair
– Can find creative solutions in larger space of possible qualitative behaviors, than in the tighter space of precise quantitative behaviors
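To make the abduction definition concrete, here is a small propositional sketch. It assumes a theory T written as Horn rules and brute-forces the assumption sets A by size, which is exactly the slow approach the slide warns about; the rules, the `ASSUMABLE` list and the function names are hypothetical and are not taken from the talk.

```python
from itertools import combinations

# Hypothetical theory T as (body, head) Horn rules.
RULES = [
    ({"rain"}, "wet_grass"),
    ({"sprinkler"}, "wet_grass"),
    ({"rain", "sprinkler"}, "error"),   # stand-in for a nogood
    ({"wet_grass"}, "slippery"),
]
ASSUMABLE = ["rain", "sprinkler", "ice"]

def closure(facts):
    """Forward-chain RULES from the given facts until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for body, head in RULES:
            if body <= known and head not in known:
                known.add(head)
                changed = True
    return known

def worlds(goal):
    """Smallest assumption sets A with T ∪ A => goal and not (T ∪ A => error)."""
    for size in range(len(ASSUMABLE) + 1):
        found = []
        for candidate in combinations(ASSUMABLE, size):
            derived = closure(candidate)
            if goal in derived and "error" not in derived:
                found.append(set(candidate))
        if found:               # stop at the first size that works: minimal w.r.t. size
            return found
    return []

print(worlds("slippery"))
```

The enumeration is exponential in the number of assumables, which is why the slide pairs abduction with stochastic sampling and collars.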

Possible optimizations (not used here)

STAR, an example of a general process:
– Stochastic sampling
– Sort settings by “value”
– Rule generation experiments favoring highly “value”-ed settings
– See also, elite sampling in the cross-entropy method

If SA convergence too slow
– Try moving back select into the SA;
– Constrain solution mutation to prefer highly “value”-ed settings

BORE (best or rest)
– n runs
– Best = top 10% scores
– Rest = remaining 90%
– {a,b} = frequency of discretized range in {best, rest}
– Sort settings by -1 * (a/n)^2 / (a/n + b/n)
– (Ask me why, off-line)

Other valuable tricks:
– Incremental discretization: Gama & Pinto’s PID + Fayyad & Irani
– Limited discrepancy search: Harvey & Ginsberg
– Treatment learning: Menzies & Yu
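The BORE ranking above can be written out directly. This sketch follows the formula as it appears on the slide, -1 * (a/n)^2 / (a/n + b/n), with n taken to be the number of runs; the data layout (a list of `(score, settings)` pairs, higher score is better) and the function name `bore_rank` are assumptions made for the example.

```python
def bore_rank(runs, best_fraction=0.10):
    """Rank settings by the BORE value; the most negative value comes first.

    runs: list of (score, settings), where settings maps an input name to a
    discretized range and a higher score is better (assumed convention).
    """
    n = len(runs)
    ordered = sorted(runs, key=lambda run: run[0], reverse=True)
    cut = max(1, round(n * best_fraction))
    best, rest = ordered[:cut], ordered[cut:]

    def frequencies(group):
        counts = {}
        for _, settings in group:
            for setting in settings.items():
                counts[setting] = counts.get(setting, 0) + 1
        return counts

    a_counts, b_counts = frequencies(best), frequencies(rest)
    ranking = []
    for setting in set(a_counts) | set(b_counts):
        a = a_counts.get(setting, 0)    # frequency in best
        b = b_counts.get(setting, 0)    # frequency in rest
        value = -1 * (a / n) ** 2 / (a / n + b / n)
        ranking.append((value, setting))
    ranking.sort(key=lambda item: item[0])
    return ranking

# Made-up data: the two highest-scoring runs use x="hi", the other 18 use x="lo".
runs = [(score, {"x": "hi" if score > 18 else "lo"}) for score in range(1, 21)]
ranking = bore_rank(runs)
print(ranking)
```

Squaring a/n rewards settings that are frequent in best, while the denominator penalises settings that are just as common in rest.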

“Uncertainty helps planning”

(questions? comments?)

At the “policy point”, STAR’s random solutions are surprisingly accurate

– LC: learn impact[i] via regression (JPL data)
– STAR: no tuning, randomly pick impact[i]
– Diff = ∑ mre(lc) / ∑ mre(star)
– Mre = abs(predicted - actual) / actual

∑ mre(lc) / ∑ mre(star):

         strategic   tactical
ground   66%         63%
all      91%         75%
OSP2     99%         125%
OSP      112%        111%
flight   101%        121%

Each cell is marked “diff” or “same”, where same = same at {95, 99}% confidence (MWU)

Why so little Diff (median= 75%)?
– Most influential inputs tightly constrained
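As a worked example of the two formulas above, the snippet below computes Mre and Diff on made-up numbers. The predictions and actuals are invented purely to exercise the arithmetic and have nothing to do with the JPL data.

```python
def mre(predicted, actual):
    """Magnitude of relative error: abs(predicted - actual) / actual."""
    return abs(predicted - actual) / actual

def diff(lc_predictions, star_predictions, actuals):
    """Diff = sum of mre(lc) divided by sum of mre(star)."""
    lc_total = sum(mre(p, a) for p, a in zip(lc_predictions, actuals))
    star_total = sum(mre(p, a) for p, a in zip(star_predictions, actuals))
    return lc_total / star_total

# Made-up effort values, for illustration only.
actuals = [100.0, 200.0, 400.0]
lc_predictions = [110.0, 180.0, 400.0]      # mre: 0.1, 0.1, 0.0
star_predictions = [120.0, 200.0, 360.0]    # mre: 0.2, 0.0, 0.1

ratio = diff(lc_predictions, star_predictions, actuals)
print(f"Diff = {ratio:.0%}")
```

A Diff near 100% means the two methods accumulate about the same relative error; values below 100% mean LC's total error is the smaller of the two.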

(Model uncertainty = collars) << inputs

In many models, a few “collar” variables set the other variables
– Narrows (Amarel in the 60s)
– Minimal environments (DeKleer ’85)
– Master variables (Crawford & Baker ‘94)
– Feature subset selection (Kohavi & John ‘97)
– Back doors (Williams et al ‘03)
– See “The Strangest Thing About Software” (IEEE Computer, Jan ’07)

Collars appear in all execution traces (by definition)
– You don’t have to find the collars, they’ll find you

So, to handle uncertainty
– Write a simulator
– Stagger over uncertainties
– From stagger, find collars
– Constrain collars

This talk: a very simple example of this process

Comparisons

Standard software process modeling
– Models written more than run (PROSIM community)
– Limited sensitivity analysis
– Limited trade space
– Point solutions
– Or, expensive, error-prone, incomplete data collection programs

Here:
– No data collection
– Found stable conclusions within a space of possibilities
– Search: very simple
– Solution, not brittle
– With trade-off space

[Figure: 22 good ideas, sorted]

Summary

Living with uncertainty
– Sometimes, simpler than you may think
– more useful than you might think

Simple:
– Here, the smallest change to simulated annealing

Useful:
– Sometimes uncertainty can teach you more than certainty
– If you fix everything, you lose fixes to everything else

Collars control certainty
– Uncertainty plus constrained collars => more certainty
– Also, can drive model to better performance

An example you can explain to any business user

[Figure: 22 good ideas, sorted from Good to Bad]