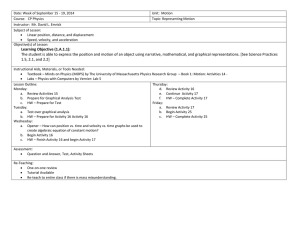

pptx - CUNY.edu


Lecture 1: Introduction
Machine Learning
CUNY Graduate Center

Today
• Welcome
• Overview of Machine Learning
• Class Mechanics
• Syllabus Review
• Basic Classification Algorithm

My research and background
• Speech
  – Analysis of Intonation
  – Segmentation
• Natural Language Processing
  – Computational Linguistics
• Evaluation Measures
• All of this research relies heavily on Machine Learning

You
• Why are you taking this class?
• For Ph.D. students:
  – What is your dissertation on?
  – Do you expect it to require Machine Learning?
• What is your background in, and comfort with:
  – Calculus
  – Linear Algebra
  – Probability and Statistics
• What is your programming language of preference?
  – C++, Java, or Python are preferred

Machine Learning
• Automatically identifying patterns in data
• Automatically making decisions based on data
• Hypothesis: (Data → Learning Algorithm → Behavior) ≥ (Data → Programmer → Behavior)

Machine Learning in Computer Science
[Figure: Machine Learning at the center, connected to Speech/Audio Processing, Natural Language Processing, Biomedical/Chemical Informatics, Robotics, Planning, Human Computer Interaction, Vision/Image Processing, Financial Modeling, and Analytics]

Major Tasks
• Regression
  – Predict a numerical value from "other information"
• Classification
  – Predict a categorical value
• Clustering
  – Identify groups of similar entities
• Evaluation

Feature Representations
• How do we view data?
[Figure: Entity in the World (web page, user behavior, speech or audio data, vision, wine, people, etc.) → Feature Extraction → Feature Representation → Machine Learning Algorithm, with part of this pipeline marked "Our Focus"]

Feature Representations

| Height | Weight | Eye Color | Gender |
|--------|--------|-----------|--------|
| 66 | 170 | Blue | Male |
| 73 | 210 | Brown | Male |
| 72 | 165 | Green | Male |
| 70 | 180 | Blue | Male |
| 74 | 185 | Brown | Male |
| 68 | 155 | Green | Male |
| 65 | 150 | Blue | Female |
| 64 | 120 | Brown | Female |
| 63 | 125 | Green | Female |
| 67 | 140 | Blue | Female |
| 68 | 145 | Brown | Female |
| 66 | 130 | Green | Female |

Classification
• Identify which of N classes a data point, x, belongs to.
• x is a column vector of features.
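To make "x is a column vector of features" concrete, here is a minimal Python sketch that stores the Feature Representations table as feature/label pairs and builds a column vector from the two numeric features. The names `ROWS`, `column_vector`, and `CLASSES` are hypothetical; leaving eye color out of the numeric vector (because it is nominal) is an assumption of this sketch, not something the slides specify. The height-68, brown-eyed female row uses weight 145, the value the later decision-tree slides rely on.

```python
# Hypothetical encoding of the Feature Representations table.
# Each entry pairs a feature tuple x = (height, weight, eye_color) with its class.
ROWS = [
    ((66, 170, "Blue"), "Male"),
    ((73, 210, "Brown"), "Male"),
    ((72, 165, "Green"), "Male"),
    ((70, 180, "Blue"), "Male"),
    ((74, 185, "Brown"), "Male"),
    ((68, 155, "Green"), "Male"),
    ((65, 150, "Blue"), "Female"),
    ((64, 120, "Brown"), "Female"),
    ((63, 125, "Green"), "Female"),
    ((67, 140, "Blue"), "Female"),
    ((68, 145, "Brown"), "Female"),
    ((66, 130, "Green"), "Female"),
]


def column_vector(x):
    """Return the numeric features (height, weight) as a 2x1 column vector.

    Eye color is nominal, so this sketch leaves it out of the numeric vector.
    """
    height, weight, _eye_color = x
    return [[float(height)], [float(weight)]]


# The N classes a data point can belong to (here N = 2).
CLASSES = sorted({label for _, label in ROWS})
```

For example, `column_vector(ROWS[0][0])` gives `[[66.0], [170.0]]`, and `CLASSES` holds the two gender labels.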
Target Values
• In supervised approaches, in addition to a data point, x, we will also have access to a target value, t.
Goal of Classification: Identify a function y, such that y(x) = t

Graphical Example of Classification
[Figures: a sequence of plots illustrating classification; two of them contain a point marked "?"]

Decision Boundaries
[Figure]

Regression
• Regression is a supervised machine learning task.
  – So a target value, t, is given.
• Classification: nominal t
• Regression: continuous t
Goal of Regression: Identify a function y, such that y(x) = t

Differences between Classification and Regression
• Similar goals: Identify y(x) = t.
• What are the differences?
  – The form of the function, y (naturally).
  – Evaluation
    • Root Mean Squared Error
    • Absolute Value Error
    • Classification Error
    • Maximum Likelihood
  – Evaluation drives the optimization operation that learns the function, y.

Graphical Example of Regression
[Figures: a sequence of plots illustrating regression; the first contains a point marked "?"]

Clustering
• Clustering is an unsupervised learning task.
  – There is no target value to shoot for.
• Identify groups of "similar" data points that are "dissimilar" from others.
• Partition the data into groups (clusters) that satisfy these constraints:
  1. Points in the same cluster should be similar.
  2. Points in different clusters should be dissimilar.
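The evaluation measures listed under "Differences between Classification and Regression" can be sketched in a few lines of Python. This is a minimal sketch with hypothetical function names; "Absolute Value Error" is read here as the mean absolute error, and Maximum Likelihood is omitted because it requires a probabilistic model of the data rather than a direct comparison of y(x) with t.

```python
import math


def root_mean_squared_error(predictions, targets):
    """Regression: square root of the mean of (y(x) - t)^2."""
    n = len(targets)
    return math.sqrt(sum((y - t) ** 2 for y, t in zip(predictions, targets)) / n)


def mean_absolute_error(predictions, targets):
    """Regression: mean of |y(x) - t| (one reading of 'Absolute Value Error')."""
    return sum(abs(y - t) for y, t in zip(predictions, targets)) / len(targets)


def classification_error(predictions, targets):
    """Classification: fraction of points where y(x) != t."""
    return sum(y != t for y, t in zip(predictions, targets)) / len(targets)
```

For predictions `[2.0, 4.0]` against targets `[1.0, 1.0]`, the squared errors are 1 and 9, so the RMSE is the square root of 5 and the mean absolute error is 2.0. Squaring penalizes the larger miss more heavily, which is one way the choice of measure shapes the learned function y.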
Graphical Example of Clustering
[Figures: a sequence of plots illustrating clustering]

Mechanisms of Machine Learning
• Statistical Estimation
  – Numerical Optimization
  – Theoretical Optimization
• Feature Manipulation
• Similarity Measures

Mathematical Necessities
• Probability
• Statistics
• Calculus
  – Vector Calculus
• Linear Algebra
• Is this a Math course in disguise?

Why do we need so much math?
• Probability Density Functions allow the evaluation of how likely a data point is under a model.
  – Want to identify good PDFs. (calculus)
  – Want to evaluate against a known PDF. (algebra)

Gaussian Distributions
• We use Gaussian Distributions all over the place.

Class Structure and Policies
• Course website:
  – http://eniac.cs.qc.cuny.edu/andrew/gcml-11/syllabus.html
• Google Group for discussions and announcements
  – http://groups.google.com/gcml-spring2011
  – Please sign up for the group ASAP.
  – Or put your email address on the sign-up sheet, and you will be sent an invitation.

Data Data Data
• "There's no data like more data"
• All machine learning techniques rely on the availability of data to learn from.
• There is an ever-increasing amount of data being generated, but it's not always easy to process.
• UCI
  – http://archive.ics.uci.edu/ml/
• LDC (Linguistic Data Consortium)
  – http://www.ldc.upenn.edu/

Half time. Get Coffee. Stretch.

Decision Trees
[Figure: a decision tree for gender whose root splits on eye color (blue, green, brown); each branch splits further on height (h) and weight (w) thresholds such as h < 66 and w < 150, ending in leaves labeled m or f]
• Classification Technique.
• Very easy to evaluate.
• Nested if statements

More formal Definition of a Decision Tree
• A Tree data structure
• Each internal node corresponds to a feature
• Leaves are associated with target values.
• Nodes with nominal features have N children, where N is the number of nominal values.
• Nodes with continuous features have two children: one for values less than a break point and one for values greater than or equal to it.

Training a Decision Tree
• How do you decide what feature to use?
• For continuous features, how do you decide what break point to use?
• Goal: Optimize Classification Accuracy.

Example Data Set

| Height | Weight | Eye Color | Gender |
|--------|--------|-----------|--------|
| 66 | 170 | Blue | Male |
| 73 | 210 | Brown | Male |
| 72 | 165 | Green | Male |
| 70 | 180 | Blue | Male |
| 74 | 185 | Brown | Male |
| 68 | 155 | Green | Male |
| 65 | 150 | Blue | Female |
| 64 | 120 | Brown | Female |
| 63 | 125 | Green | Female |
| 67 | 140 | Blue | Female |
| 68 | 145 | Brown | Female |
| 66 | 130 | Green | Female |

Baseline Classification Accuracy
• Select the majority class.
  – Here 6/12 Male, 6/12 Female.
  – Baseline Accuracy: 50%
• How good is each branch?
  – The improvement to classification accuracy

Training Example
• Possible branch: color
  – blue: 2M / 2F
  – brown: 2M / 2F
  – green: 2M / 2F
• 50% accuracy before branch
• 50% accuracy after branch
• 0% accuracy improvement

Example Data Set (sorted by height)

| Height | Weight | Eye Color | Gender |
|--------|--------|-----------|--------|
| 63 | 125 | Green | Female |
| 64 | 120 | Brown | Female |
| 65 | 150 | Blue | Female |
| 66 | 170 | Blue | Male |
| 66 | 130 | Green | Female |
| 67 | 140 | Blue | Female |
| 68 | 145 | Brown | Female |
| 68 | 155 | Green | Male |
| 70 | 180 | Blue | Male |
| 72 | 165 | Green | Male |
| 73 | 210 | Brown | Male |
| 74 | 185 | Brown | Male |

Training Example
• Possible branch: height < 68
  – yes: 1M / 5F
  – no: 5M / 1F
• 50% accuracy before branch
• 83.3% accuracy after branch
• 33.3% accuracy improvement

Example Data Set (sorted by weight)

| Height | Weight | Eye Color | Gender |
|--------|--------|-----------|--------|
| 64 | 120 | Brown | Female |
| 63 | 125 | Green | Female |
| 66 | 130 | Green | Female |
| 67 | 140 | Blue | Female |
| 68 | 145 | Brown | Female |
| 65 | 150 | Blue | Female |
| 68 | 155 | Green | Male |
| 72 | 165 | Green | Male |
| 66 | 170 | Blue | Male |
| 70 | 180 | Blue | Male |
| 74 | 185 | Brown | Male |
| 73 | 210 | Brown | Male |

Training Example
• Possible branch: weight < 165
  – yes: 1M / 6F
  – no: 5M
• 50% accuracy before branch
• 91.7% accuracy after branch
• 41.7% accuracy improvement

Training Example
• Recursively train child nodes.
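The accuracy bookkeeping in the training examples above (majority-class baseline, accuracy after a branch, and the improvement) can be reproduced with a short Python sketch. It assumes each child node predicts its majority class, uses hypothetical names (`accuracy_before`, `accuracy_after`, `improvement`, `COLUMN`), and scores the specific branches from the slides rather than searching for the best break point.

```python
from collections import Counter

# Example data set: (height, weight, eye_color, gender).
DATA = [
    (66, 170, "Blue", "Male"), (73, 210, "Brown", "Male"),
    (72, 165, "Green", "Male"), (70, 180, "Blue", "Male"),
    (74, 185, "Brown", "Male"), (68, 155, "Green", "Male"),
    (65, 150, "Blue", "Female"), (64, 120, "Brown", "Female"),
    (63, 125, "Green", "Female"), (67, 140, "Blue", "Female"),
    (68, 145, "Brown", "Female"), (66, 130, "Green", "Female"),
]
COLUMN = {"height": 0, "weight": 1, "color": 2}  # hypothetical feature names
LABEL = 3


def majority_correct(labels):
    """How many labels a node gets right by predicting its majority class."""
    return Counter(labels).most_common(1)[0][1] if labels else 0


def accuracy_before(data):
    """Baseline: predict the majority class for every point."""
    return majority_correct([row[LABEL] for row in data]) / len(data)


def accuracy_after(data, feature, threshold=None):
    """Accuracy after one branch, each child predicting its majority class.

    Nominal feature (threshold is None): one child per observed value.
    Continuous feature: two children, value < threshold and value >= threshold.
    """
    col = COLUMN[feature]
    children = {}
    for row in data:
        key = row[col] if threshold is None else row[col] < threshold
        children.setdefault(key, []).append(row[LABEL])
    return sum(majority_correct(c) for c in children.values()) / len(data)


def improvement(data, feature, threshold=None):
    """Gain in classification accuracy from making this branch."""
    return accuracy_after(data, feature, threshold) - accuracy_before(data)


for feature, threshold in [("color", None), ("height", 68), ("weight", 165)]:
    print(feature, threshold,
          round(accuracy_after(DATA, feature, threshold), 3),
          round(improvement(DATA, feature, threshold), 3))
```

The loop scores the three candidate branches from the slides: color leaves accuracy at 50%, height < 68 reaches 10/12, and weight < 165 reaches 11/12. Recursive training applies the same scoring to the rows that reach each child node.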
weight < 165?
  – no: 5M
  – yes: height < 68?
    – yes: 5F
    – no: 1M / 1F

Training Example
• Finished Tree
weight < 165?
  – no: 5M
  – yes: height < 68?
    – yes: 5F
    – no: weight < 155?
      – yes: 1F
      – no: 1M

Generalization
• What is the performance of the tree on the training data?
  – Is there any way we could get less than 100% accuracy?
• What performance can we expect on unseen data?

Evaluation
• Evaluate performance on data that was not used in training.
• Isolate a subset of data points to be used for evaluation.
• Evaluate generalization performance.

Evaluation of our Decision Tree
• What is the Training performance?
• What is the Evaluation performance?
  – The tree never classifies anyone weighing 165 or more as female.
  – The tree never classifies anyone weighing under 165 and shorter than 68 as male.
  – The middle section is trickier.
• What are some ways to make these similar?

Pruning
• There are many pruning techniques.
• A simple approach is to have a minimum membership size in each node.
[Figure: the finished tree next to a pruned version in which the height ≥ 68 node is not split further and remains a leaf with 1F / 1M]

Decision Tree Recap
• Training via Recursive Partitioning.
• Simple, interpretable models.
• Different node selection criteria can be used.
  – Information theory is a common choice.
• Pruning techniques can be used to make the model more robust to unseen data.

Next Time: Math Primer
• Probability
  – Bayes Rule
  – Naïve Bayes Classification
• Calculus
  – Vector Calculus
• Optimization
  – Lagrange Multipliers
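Because a trained decision tree is "very easy to evaluate" as nested if statements, the finished tree from the training example can be transcribed by hand and checked against the training data. This is a minimal Python sketch: `classify`, `training_accuracy`, and `DATA` are hypothetical names, and the data uses weight 145 for the height-68, brown-eyed female, as in the sorted tables.

```python
# Example data set reduced to (height, weight, gender); this tree never tests eye color.
DATA = [
    (66, 170, "Male"), (73, 210, "Male"), (72, 165, "Male"),
    (70, 180, "Male"), (74, 185, "Male"), (68, 155, "Male"),
    (65, 150, "Female"), (64, 120, "Female"), (63, 125, "Female"),
    (67, 140, "Female"), (68, 145, "Female"), (66, 130, "Female"),
]


def classify(height, weight):
    """Finished tree from the training example, written as nested if statements."""
    if weight < 165:
        if height < 68:
            return "Female"  # 5F leaf
        if weight < 155:
            return "Female"  # 1F leaf
        return "Male"        # 1M leaf
    return "Male"            # 5M leaf


def training_accuracy(data):
    """Fraction of the training rows that the tree labels correctly."""
    correct = sum(classify(h, w) == gender for h, w, gender in data)
    return correct / len(data)
```

On these twelve rows `training_accuracy(DATA)` is 1.0, because every leaf is pure. As the Evaluation slides stress, that number says little about performance on data the tree was not trained on.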