Cache Memory - UniMAP Portal

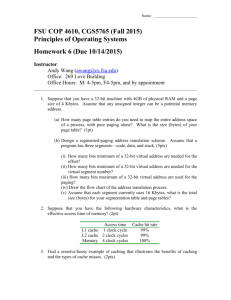

Chapter Five: Cache Memory

[Figure: Processor, Memory, Input/Output]

Characteristics of memory systems
- Location
- Capacity
- Unit of transfer
- Access method
- Performance
- Physical type
- Physical characteristics
- Organization

Location
- Internal memory is often equated with main memory.
- The processor requires its own local memory in the form of registers, e.g. PC, IR, ID…
- The control unit portion of the processor may also require its own internal memory.
- Cache is another form of internal memory.
- External memory consists of peripheral storage devices, e.g. disk and tape, that are accessible to the processor via an I/O controller.

Capacity
- Word size
  - The natural unit of organization
  - Typically equal to the number of bits used to represent an integer and to the instruction length
  - Common word lengths are 8, 16 and 32 bits
- External memory capacity is typically expressed in bytes (e.g. 1 GB, 20 MB)

Unit of Transfer
- Internal memory
  - Number of bits read out of or written into memory at a time
  - Equal to the number of data lines of the memory module
  - May equal the word length but is often larger, such as 64, 128 or 256 bits
- External memory
  - Data is usually transferred in units much larger than a word, referred to as blocks
- Addressable unit
  - Smallest location which can be uniquely addressed
  - The word, internally

Access Methods (1)
- Sequential
  - Start at the beginning and read through in order
  - Access time depends on location of data and previous location
  - e.g. tape
- Direct
  - Individual blocks have a unique address
  - Access is by jumping to the vicinity plus a sequential search (pointer)
  - Access time depends on location and previous location
  - e.g. disk

Access Methods (2)
- Random
  - Individual addresses identify locations exactly
  - Access time is independent of location or previous access
  - e.g. RAM
- Associative
  - Data is located by a comparison with the contents of a portion of the store
  - Access time is independent of location or previous access
  - e.g. cache

Performance
- Access time (latency): for RAM, the time it takes to perform a read or write operation, that is, the time from the instant that an address is presented to the memory to the instant that data have been stored or made available for use.
- Memory cycle time: the access time plus any additional time required before a second access can commence. This additional time may be required for transients to die out on signal lines or to regenerate data if they are read destructively.
- Transfer rate: the rate at which data can be transferred into or out of a memory unit.

Note: the two most important characteristics of memory are performance and capacity.

Physical Types of Memory
- Semiconductor: RAM
- Magnetic: disk and tape
- Optical: CD and DVD
- Others: bubble, hologram

Physical Characteristics
- Volatile: information decays naturally or is lost when electrical power is switched off.
- Non-volatile: information, once recorded, remains without deterioration until deliberately changed; no electrical power is needed to retain it, e.g. magnetic-surface memory.
- Non-erasable: cannot be altered, except by destroying the storage unit, e.g. ROM.
- Power consumption

Cost, capacity and access time
There is a trade-off among the three key characteristics of memory (cost, capacity and access time):
- Faster access time, greater cost per bit
- Greater capacity, smaller cost per bit
- Greater capacity, slower access time

Memory Hierarchy
- Registers
  - In CPU
- Internal or main memory
  - May include one or more levels of cache
  - "RAM"
- External memory
  - Backing store

Memory Hierarchy - Diagram
[Figure: memory hierarchy diagram]

Memory Hierarchy - Diagram (Cont'd)
Based on the figure, as one goes down the hierarchy the following occur:
- Decreasing cost per bit
- Increasing capacity
- Increasing access time
- Decreasing frequency of access of the memory by the processor

Memory Organization
- Smaller, more expensive, faster memories are supplemented by larger, cheaper, slower memories.
- The key to the success of this organization is decreasing frequency of access.
- This concept is explained in detail in the discussion of cache memory (next slides) and virtual memory.

The Bottom Line
- How much? Capacity
- How fast? Time is money
- How expensive?

Hierarchy List
- Registers
- L1 cache
- L2 cache
- Main memory
- Disk cache
- Disk
- Optical
- Tape

Locality of Reference
- During the course of the execution of a program, memory references tend to cluster, e.g. in loops.

Cache - Introduction
- Small amount of fast memory
- Sits between normal main memory and CPU
- May be located on CPU chip or module
- Main memory: large capacity, slow; cache: small capacity, fast

Cache - Introduction (Cont'd)
- The cache is an intermediate buffer between the CPU and main memory.
- Objective: to reduce the CPU waiting time during main memory accesses.
- Without cache memory, every main memory access results in a delay in instruction processing.
- This delay arises because main memory access time is longer than the processor clock period.
- A high-speed CPU's time is wasted during memory accesses (instruction fetch, operand fetch or result storing).
- To minimize the waiting time for the CPU, a small but fast memory is introduced as a cache buffer between main memory and the CPU.
- A portion of the program and data is brought into the cache memory in advance.
- The cache controller keeps track of the main memory locations that are copied into cache memory.
- When the CPU needs an instruction or operand, it receives it from the cache memory (if available) instead of accessing main memory.
- Memory access is therefore fast, because the slow main memory appears as a fast memory.
- The cache memory capacity is very small compared to main memory, but its speed is several times better.
- Transfer between the CPU and cache memory is usually one word at a time.
- The cache memory can usually be physically integrated with the processor IC as internal cache, also known as on-chip cache.

Cache/Main Memory Structure
- Cache consists of C lines.
- Each line contains K words and a tag of a few bits.
- The number of words in a line is referred to as the line size.
- Tag: a portion of the main memory address.
- Each line includes a tag that identifies which particular block is currently being stored.

Remember

| Unit | Value | Equivalent |
|---|---|---|
| KB | 2^10 bytes | 1024 bytes |
| MB | 2^20 bytes | 1024 KB |
| GB | 2^30 bytes | 1024 MB |
| TB | 2^40 bytes | 1024 GB |

Example Cache Memory
- Cache of 64 KBytes
- Cache blocks of 4 bytes each
  - 64K/4 = 64(1024) bytes / 4 bytes = 16K = 2^14 lines
  - i.e. the cache is 16K (2^14) lines of 4 bytes each
- 16 MByte main memory
  - (2^4)(2^20) bytes = 2^24 bytes = 16 MBytes
  - 24-bit address

Note: 1 kilobyte = 1,024 bytes (2^10).

Cache operation - overview
- CPU requests contents of memory location
- Check cache for this data
- If present, get from cache (fast)
- If not present, read required block from main memory to cache
- Then deliver from cache to CPU
- Cache includes tags to identify which block of main memory is in each cache slot

[Figure: Cache Read Operation - Flowchart]

Cache Design
- Size
- Mapping function
- Replacement algorithms
- Write policy
- Line size
- Number of caches

Size does matter
- Cost
  - More cache is expensive
- Speed
  - More cache is faster (up to a point)
  - Checking cache for data takes time

[Figure: Typical Cache Organization]

Mapping Function
- There are fewer cache lines than main memory blocks, so an algorithm is needed for mapping main memory blocks into cache lines.
- A means is also needed for determining which main memory block currently occupies a cache line: the mapping function.
- The choice of the mapping function determines how the cache is organized.
- Three techniques: direct, associative, set associative

Direct mapping cache example
In a direct-mapped cache with a capacity of 16 KB and a line length of 32 bytes, determine the following:
i) How many bits are used to determine the byte that a memory operation references within the cache line?
ii) How many bits are used to select the line in the cache that may contain the data?

Direct Mapping
- Each block of main memory maps to only one cache line
  - i.e. if a block is in cache, it must be in one specific place
- Address is in two parts
- Least significant w bits identify a unique word
- Most significant s bits specify one memory block
- The MSBs are split into a cache line field of r bits and a tag of s-r bits (most significant)

Direct Mapping Address Structure: Example

| Field | Tag | Line or slot | Word |
|---|---|---|---|
| Width (symbol) | s-r | r | w |
| Width (example) | 8 | 14 | 2 |

- 24-bit address
- 2-bit word identifier (4-byte block)
- 22-bit block identifier
  - 8-bit tag (= 22 - 14)
  - 14-bit slot or line
- No two blocks that map to the same line have the same tag field
- Check contents of cache by finding the line and checking the tag

Direct Mapping Cache Line Table

| Cache line | Main memory blocks held |
|---|---|
| 0 | 0, m, 2m, 3m, …, 2^s - m |
| 1 | 1, m+1, 2m+1, …, 2^s - m + 1 |
| … | … |
| m-1 | m-1, 2m-1, 3m-1, …, 2^s - 1 |

[Figure: Direct Mapping Cache Organization]

[Figure: Direct Mapping Example]

Direct Mapping Summary
- Address length = (s + w) bits
- Number of addressable units = 2^(s+w) words or bytes
- Block size = line size = 2^w words or bytes
- Number of blocks in main memory = 2^(s+w) / 2^w = 2^s
- Number of lines in cache = m = 2^r
- Size of tag = (s - r) bits

Direct Mapping pros & cons
- Simple
- Inexpensive
- Fixed location for a given block
  - If a program repeatedly accesses 2 blocks that map to the same line, the cache miss rate is very high

Associative Mapping
- A main memory block can load into any line of cache
- Memory address is interpreted as tag and word
- Tag uniquely identifies block of memory
- Every line's tag is examined for a match
- Cache searching gets expensive

[Figure: Fully Associative Cache Organization]

[Figure: Associative Mapping Example]

Associative Mapping Address Structure

| Tag | Word |
|---|---|
| 22 bits | 2 bits |

- 22-bit tag stored with each 32-bit block of data
- Compare tag field with tag entry in cache to check for hit
- Least significant 2 bits of address identify which byte is required from the 32-bit data block
- e.g.

| Address | Tag | Data | Cache line |
|---|---|---|---|
| FFFFFC | FFFFFC | 24682468 | 3FFF |

Associative Mapping Summary
- Address length = (s + w) bits
- Number of addressable units = 2^(s+w) words or bytes
- Block size = line size = 2^w words or bytes
- Number of blocks in main memory = 2^(s+w) / 2^w = 2^s
- Number of lines in cache = undetermined
- Size of tag = s bits

Set Associative Mapping
- Cache is divided into a number of sets
- Each set contains a number of lines
- A given block maps to any line in a given set
  - e.g. block B can be in any line of set i
- e.g. 2 lines per set
  - 2-way associative mapping
  - A given block can be in one of 2 lines in only one set

Set Associative Mapping Example
- 13-bit set number
- Block number in main memory is taken modulo 2^13
- Addresses 000000, 008000, 010000, 018000 … (multiples of 2^15 bytes, given the 2-bit word field) map to the same set

[Figure: Two Way Set Associative Cache Organization]

Set Associative Mapping Address Structure

| Tag | Set | Word |
|---|---|---|
| 9 bits | 13 bits | 2 bits |

- Use set field to determine cache set to look in
- Compare tag field to see if we have a hit

[Figure: Two Way Set Associative Mapping Example]

Set Associative Mapping Summary
- Address length = (s + w) bits
- Number of addressable units = 2^(s+w) words or bytes
- Block size = line size = 2^w words or bytes
- Number of blocks in main memory = 2^s
- Number of lines in set = k
- Number of sets = v = 2^d
- Number of lines in cache = kv = k × 2^d
- Size of tag = (s - d) bits

Comparison

| Cache type | Hit ratio | Search speed |
|---|---|---|
| Direct mapped | Good | Best |
| Fully associative | Best | Moderate |
| N-way set associative | Very good, better as N increases | Good, worse as N increases |

Replacement Algorithms (1): Direct mapping
- No choice
- Each block only maps to one line
- Replace that line

Replacement Algorithms (2): Associative & Set Associative

The algorithm is implemented in hardware (for speed).

Least recently used (LRU): replace the block that has gone longest without being referenced, e.g. in a 2-way set-associative cache, which of the 2 blocks is LRU?
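As a minimal sketch of the LRU question above, the following Python models a 2-way set-associative cache using the 9-bit tag / 13-bit set / 2-bit word address split from the address-structure slide. The class name `TwoWaySetCache`, its `access` method, and the decision to keep each set as a list ordered from least to most recently used are assumptions made for illustration only; hardware can answer the same question for two lines with a single bit per set.

```python
WORD_BITS = 2   # 4-byte line
SET_BITS = 13   # 2**13 sets
WAYS = 2        # lines per set


def split_address(addr):
    """Split a 24-bit address into (tag, set index, word offset)."""
    word = addr & ((1 << WORD_BITS) - 1)
    set_index = (addr >> WORD_BITS) & ((1 << SET_BITS) - 1)
    tag = addr >> (WORD_BITS + SET_BITS)
    return tag, set_index, word


class TwoWaySetCache:
    """Hypothetical model: each set holds up to WAYS tags, LRU first."""

    def __init__(self):
        self.sets = {}  # set index -> list of tags, least recently used first

    def access(self, addr):
        """Return True on a hit, False on a miss (the block is then loaded)."""
        tag, set_index, _ = split_address(addr)
        lines = self.sets.setdefault(set_index, [])
        if tag in lines:
            lines.remove(tag)      # hit: move the tag to the most-recent end
            lines.append(tag)
            return True
        if len(lines) == WAYS:
            lines.pop(0)           # set full: evict the least recently used tag
        lines.append(tag)
        return False


cache = TwoWaySetCache()
# Three blocks that all fall in set 0 (addresses 2**15 bytes apart).
a, b, c = 0x000000, 0x008000, 0x010000
results = [cache.access(x) for x in (a, b, a, c, a, b)]
print(results)  # [False, False, True, False, True, False]
```

In this trace, loading block `c` evicts `b` rather than `a`, because `a` was referenced more recently; the final access to `b` therefore misses.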
First in first out (FIFO): replace the block that has been in the cache longest.

Least frequently used (LFU): replace the block which has had the fewest hits.

Random

Write Policy
- Must not overwrite a cache block unless main memory is up to date
- Multiple CPUs may have individual caches
- I/O may address main memory directly

Write through
- All writes go to main memory as well as cache
- Multiple CPUs can monitor main memory traffic to keep local (to CPU) cache up to date
- Lots of traffic
- Slows down writes
- Remember bogus write through caches!

Write back
- Updates initially made in cache only
- Update bit for cache slot is set when update occurs
- If block is to be replaced, write to main memory only if update bit is set
- Other caches get out of sync
- I/O must access main memory through cache
- N.B. 15% of memory references are writes

Pentium 4 Cache
- 80386: no on-chip cache
- 80486: 8k using 16-byte lines and four-way set-associative organization
- Pentium (all versions): two on-chip L1 caches
  - Data & instructions
- Pentium III: L3 cache added off chip
- Pentium 4
  - L1 caches
    - 8k bytes
    - 64-byte lines
    - four-way set associative
  - L2 cache
    - Feeding both L1 caches
    - 256k
    - 128-byte lines
    - 8-way set associative
  - L3 cache on chip

Intel Cache Evolution

| Problem | Solution | Processor on which feature first appears |
|---|---|---|
| External memory slower than the system bus. | Add external cache using faster memory technology. | 386 |
| Increased processor speed results in external bus becoming a bottleneck for cache access. | Move external cache on-chip, operating at the same speed as the processor. | 486 |
| Internal cache is rather small, due to limited space on chip. | Add external L2 cache using faster technology than main memory. | 486 |
| Contention occurs when both the Instruction Prefetcher and the Execution Unit simultaneously require access to the cache. In that case, the Prefetcher is stalled while the Execution Unit's data access takes place. | Create separate data and instruction caches. | Pentium |
| Increased processor speed results in external bus becoming a bottleneck for L2 cache access. | Create separate back-side bus that runs at higher speed than the main (front-side) external bus. The BSB is dedicated to the L2 cache. | Pentium Pro |
| (as above) | Move L2 cache on to the processor chip. | Pentium II |
| Some applications deal with massive databases and must have rapid access to large amounts of data. The on-chip caches are too small. | Add external L3 cache. | Pentium III |
| (as above) | Move L3 cache on-chip. | Pentium 4 |

[Figure: Pentium 4 Block Diagram]

Pentium 4 Core Processor
- Fetch/decode unit
  - Fetches instructions from L2 cache
  - Decodes into micro-ops
  - Stores micro-ops in L1 cache
- Out-of-order execution logic
  - Schedules micro-ops
  - Based on data dependence and resources
  - May speculatively execute
- Execution units
  - Execute micro-ops
  - Data from L1 cache
  - Results in registers
- Memory subsystem
  - L2 cache and system bus

Pentium 4 Design Reasoning
- Decodes instructions into RISC-like micro-ops before L1 cache
- Micro-ops fixed length
  - Superscalar pipelining and scheduling
- Pentium instructions long & complex
- Performance improved by separating decoding from scheduling & pipelining
  - (More later: ch14)
- Data cache is write back
  - Can be configured to write through
- L1 cache controlled by 2 bits in register
  - CD = cache disable
  - NW = not write through
  - 2 instructions to invalidate (flush) cache and write back then invalidate
- L2 and L3 8-way set associative
  - Line size 128 bytes

PowerPC Cache Organization
- 601: single 32 KB, 8-way set associative
- 603: 16 KB (2 x 8 KB), two-way set associative
- 604: 32 KB
- 620: 64 KB
- G3 & G4
  - 64 KB L1 cache
    - 8-way set associative
  - 256k, 512k or 1M L2 cache
    - two-way set associative
- G5
  - 32 KB instruction cache
  - 64 KB data cache

[Figure: PowerPC G5 Block Diagram]

Layers of Cache

| Level | Devices cached |
|---|---|
| Level 1 | Level 2 cache, system RAM, hard disk, CD-ROM |
| Level 2 | System RAM, hard disk, CD-ROM |
| System RAM | Hard disk, CD-ROM |
| Hard disk/CD-ROM | -- |

More about Cache
- Other kinds of cache: disk cache, peripheral cache (e.g. CDs, which have a very slow initial access time), Internet cache (software).
- A 512 KB level 2 cache, caching 64 MB of system memory, can supply 90-95% of the requested information although it is less than 1% of the memory's size.
- This is due to locality of reference: in large programs with several MB of instructions, only small portions are loaded at a time (e.g. opening a Word document).
- SRAM 7-20 ns, DRAM 50-70 ns
- Level 2: 256-512 KB; level 1: 8-64 KB

Locality of reference
- Spatial locality: reflects the tendency of the processor to access data locations sequentially.
- Temporal locality: reflects the tendency of the processor to access recently used memory locations.
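To make the two kinds of locality concrete, here is a small Python sketch that counts hits in a direct-mapped cache using the 8-bit tag / 14-bit line / 2-bit word split from the direct-mapping example. The function names, the two access patterns, and the 10 ns / 60 ns latencies (picked from within the SRAM and DRAM ranges quoted above) are illustrative assumptions, and the average-access-time formula is a simplified model in which a hit costs one cache access and a miss costs one main-memory access.

```python
WORD_BITS = 2    # 4-byte line
LINE_BITS = 14   # 2**14 lines


def hit_ratio(addresses):
    """Simulate a direct-mapped cache and return the fraction of hits."""
    lines = {}   # line number -> tag currently stored there
    hits = 0
    for addr in addresses:
        line = (addr >> WORD_BITS) & ((1 << LINE_BITS) - 1)
        tag = addr >> (WORD_BITS + LINE_BITS)
        if lines.get(line) == tag:
            hits += 1
        else:
            lines[line] = tag    # miss: load the block, replacing the old one
    return hits / len(addresses)


def average_access_time(h, cache_ns, memory_ns):
    """Simplified model: hits cost cache_ns, misses cost memory_ns."""
    return h * cache_ns + (1 - h) * memory_ns


# Spatial locality: 64 consecutive byte addresses, touched once each.
sequential = list(range(64))
# Temporal locality: the same 64 bytes revisited 10 times, as in a loop.
looped = sequential * 10

h_seq = hit_ratio(sequential)
h_loop = hit_ratio(looped)
print(h_seq, h_loop)
print(average_access_time(h_loop, cache_ns=10, memory_ns=60))
```

With 4-byte lines, the single sequential pass hits on three of every four bytes (a ratio of 0.75), because each miss brings in the three neighbouring bytes. Revisiting the same bytes ten times raises the ratio to 0.975, since only the first pass misses, and under the assumed latencies the average access time then sits close to the cache latency.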