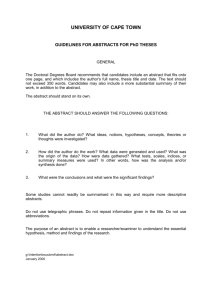

ResearchNotes - Cambridge English

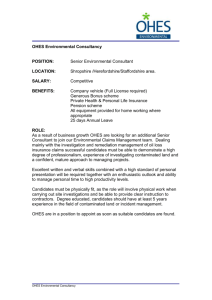

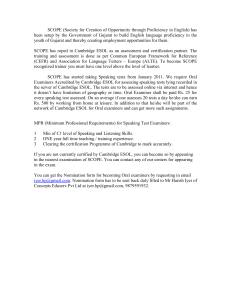

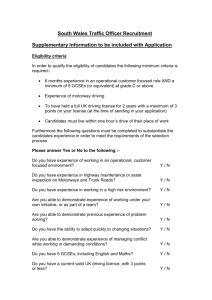
