On the Near Impossibility of Measuring the Returns

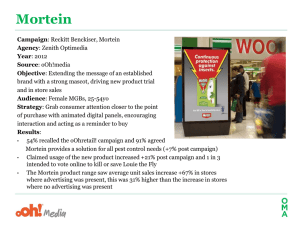

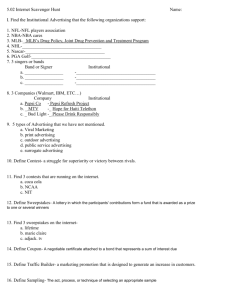
