CPS532 Parallel Architectures

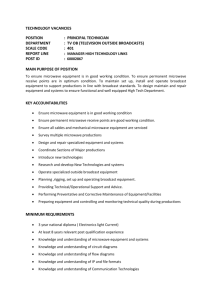

advertisement

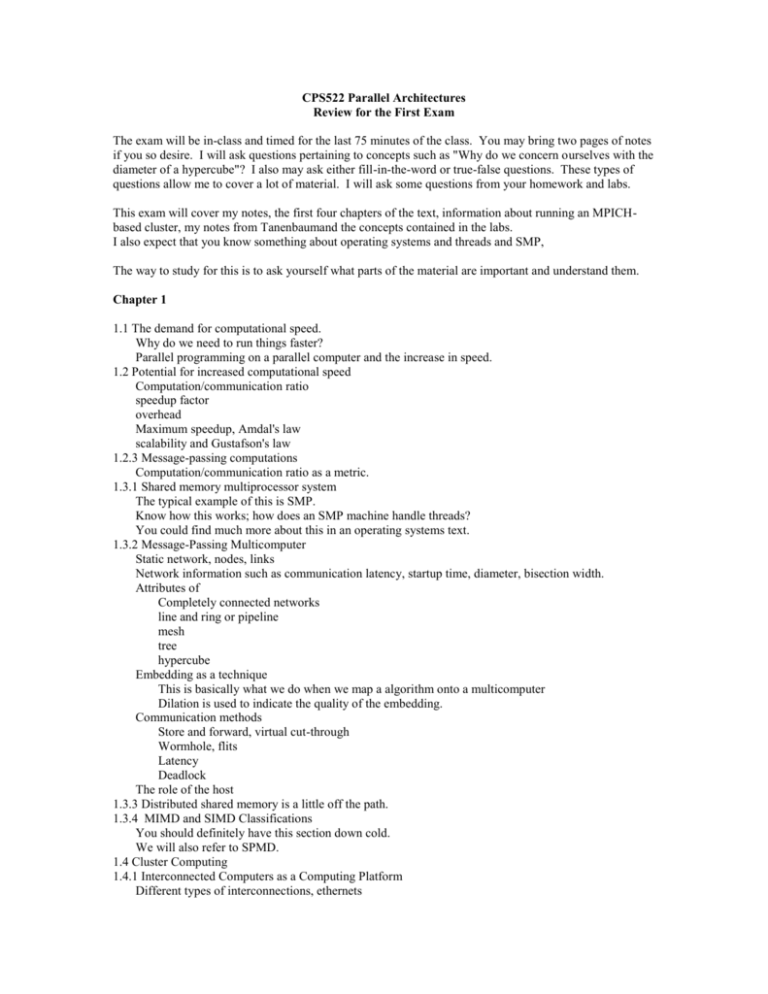

CPS522 Parallel Architectures
Review for the First Exam

The exam will be in-class and timed for the last 75 minutes of the class. You may bring two pages of notes if you so desire. I will ask questions pertaining to concepts, such as "Why do we concern ourselves with the diameter of a hypercube?" I also may ask either fill-in-the-word or true-false questions. These types of questions allow me to cover a lot of material. I will ask some questions from your homework and labs.

This exam will cover my notes, the first four chapters of the text, information about running an MPICH-based cluster, my notes from Tanenbaum, and the concepts contained in the labs. I also expect that you know something about operating systems, threads, and SMP. The way to study for this is to ask yourself what parts of the material are important and understand them.

Chapter 1

1.1 The demand for computational speed
  Why do we need to run things faster?
  Parallel programming on a parallel computer and the increase in speed.

1.2 Potential for increased computational speed
  Computation/communication ratio
  Speedup factor
  Overhead
  Maximum speedup, Amdahl's law
  Scalability and Gustafson's law

1.2.3 Message-passing computations
  Computation/communication ratio as a metric.

1.3.1 Shared memory multiprocessor system
  The typical example of this is SMP. Know how this works; how does an SMP machine handle threads? You could find much more about this in an operating systems text.

1.3.2 Message-Passing Multicomputer
  Static network, nodes, links
  Network information such as communication latency, startup time, diameter, bisection width.
  Attributes of:
    Completely connected networks
    Line and ring or pipeline
    Mesh
    Tree
    Hypercube
  Embedding as a technique
    This is basically what we do when we map an algorithm onto a multicomputer.
    Dilation is used to indicate the quality of the embedding.
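A small Python sketch may help with embedding and dilation. Dilation is the largest number of links in the target network that a single link of the embedded network gets stretched over, so a dilation of 1 is a perfect embedding. The sketch below embeds a ring into a hypercube by giving ring position i the node label from the reflected Gray code. The function names (gray_code, embed_ring, dilation) are illustrative and are not taken from the text.

```python
def gray_code(i):
    """Return the i-th reflected binary Gray code."""
    return i ^ (i >> 1)


def hamming_distance(a, b):
    """Number of hypercube links on a shortest path between nodes a and b."""
    return bin(a ^ b).count("1")


def embed_ring(dimension):
    """Map ring position i to a hypercube node label (assumed mapping: Gray code)."""
    return [gray_code(i) for i in range(2 ** dimension)]


def dilation(mapping):
    """Longest hypercube path that any single ring link is stretched over."""
    n = len(mapping)
    return max(
        hamming_distance(mapping[i], mapping[(i + 1) % n]) for i in range(n)
    )


if __name__ == "__main__":
    ring = embed_ring(3)
    print(ring)            # [0, 1, 3, 2, 6, 7, 5, 4]
    print(dilation(ring))  # 1
```

Neighboring ring positions always land on hypercube nodes that differ in exactly one bit, including the wraparound link, which is why the dilation comes out as 1.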
  Communication methods
    Store and forward, virtual cut-through
    Wormhole, flits
    Latency
    Deadlock
  The role of the host

1.3.3 Distributed shared memory
  This is a little off the path.

1.3.4 MIMD and SIMD Classifications
  You should definitely have this section down cold. We will also refer to SPMD.

1.4 Cluster Computing

1.4.1 Interconnected Computers as a Computing Platform
  Different types of interconnections, Ethernets
  Addressing

1.4.2 Networked computers as a multicomputer platform
  Existing networks
  Dedicated Cluster
  Problems

Chapter 2 Message-Passing Computing

2.1 Basics of Message-Passing Programming

2.1.1 Programming Options
  Programming a message-passing multicomputer can be achieved by:
    Designing a special parallel programming language: occam on transputers.
    Extending the syntax/reserved words of an existing sequential high-level language to handle message passing: CC++ and Fortran M.
    Using an existing sequential high-level language and providing a library of external procedures for message passing.
  We need a method of creating separate processes for execution on different computers and a method of sending and receiving messages.

2.1.2 Process Creation
  The Concept of a Process
  Static process creation
    All the processes are specified before execution, and the system will execute a fixed number of processes.
    Master-slave or farmer-worker format
    Single program multiple data (SPMD) model: the different processes are merged into one program. Within the program are control statements that customize the code, selecting different parts for each process.
    The program is compiled and each processor loads a copy.
    MPI uses this model.
  Dynamic Process Creation
    Processes can be created and their execution initiated during execution of other processes.
    Done by library/system calls.
    The code for the processes has to be written and compiled before execution of any process.
    Different program on each machine: MPMD
    Usually uses the master/slave approach
    spawn(nameofprocess)
    PVM uses dynamic process creation; it can also be SPMD.

2.1.3 Message-Passing Routines
  Basic send and receive routines
    send(&x, destination_id);
    receive(&y, source_id);
  Synchronous Message Passing
    The message is not sent until the receiver is ready to receive (rendezvous).
    Doesn't need a buffer.
    Usually requires some sort of request to send and an acknowledgement.
  Blocking and Nonblocking Message Passing
    Needs a message buffer, so that the routines can return after their local actions complete.
  Message Selection
    Message tags are used so that messages can be given an id and a source or destination, e.g. receive message 5 from node 3.
  Broadcast, Gather, and Scatter
    Broadcast sends the same message to all the processes.
    Multicast sends the same message to a defined group of processes. See Figure 2.6.
    Scatter sends each element of an array of data in the root to a separate process.
    Gather has one process collect individual values from a set of processes. It is usually the opposite of scatter.
    Reduce operations combine gather with an arithmetic/logical operation.

2.2 Using Workstation clusters

2.2.1 Software tools
  We have the MPICH implementation of MPI in the Sun lab.

2.2.2 MPI
  This section and the MPI tutorial should do it.
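To make the collective operations from 2.1.3 concrete before looking at the MPI versions, here is a plain-Python model of what each one does to the data. It is only a sketch: the "processes" are just list positions, nothing is actually sent over a network, and the function names are illustrative, not MPI or PVM calls.

```python
from functools import reduce
import operator


def broadcast(value, num_processes):
    """Every process ends up with the same value."""
    return [value] * num_processes


def scatter(root_array):
    """Process i receives element i of the root's array."""
    return [element for element in root_array]


def gather(local_values):
    """The root collects one value from each process, in rank order."""
    return list(local_values)


def reduce_to_root(local_values, op=operator.add):
    """Gather combined with an arithmetic/logical operation."""
    return reduce(op, local_values)


if __name__ == "__main__":
    root_array = [1, 2, 3, 4]
    local = scatter(root_array)                       # one element per process
    factor = broadcast(10, len(local))                # same value everywhere
    results = [x * f for x, f in zip(local, factor)]  # each process works locally
    print(gather(results))                            # [10, 20, 30, 40]
    print(reduce_to_root(results))                    # 100
    print(reduce_to_root(results, max))               # 40
```

The last two lines show why reduce is described as gather plus an operation: the root ends up with a single combined value instead of the list of individual values.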
  Process Creation and Execution
  Using the SPMD Computational Model
  Message-Passing Routines
    communicators
    point-to-point
    completion
    blocking routines
    nonblocking routines
    Send Communication Modes
  Collective Communication
    broadcast and scatter routines
    barrier
  Sample MPI program

2.2.3 Pseudocode Constructs
  The addition of PVM or MPI constructs to the code detracts from readability. Use C-type pseudocode to describe the algorithms. We really only need send and receive. The process identification is placed last in the list, as in MPI. To send a message consisting of an integer x and a float y from the process called master to the process called slave, assigning to a and b, we write in the master process
    send(&x, &y, Pslave);
  and in the slave process
    recv(&a, &b, Pmaster);
  Note that this is the format for locally blocking routines. We could use
    ssend(&data1, Pdestination);
  for a synchronous send.

2.3 Evaluating Parallel Programs
  For parallel algorithms we need to estimate communication overhead in addition to determining the number of computational steps.

2.3.1 Parallel Execution Time
  parallel execution time = computation time + communication time
  We can estimate the computation time in the same way we estimate time for a sequential algorithm. Communication time depends on the size of the message and on some startup time for a workstation, called latency.
  communication time = startup time + (number of data words * time for one data word)
  Latency hiding is having the processor do useful work while waiting for the communication to be completed.

2.3.2 Time Complexity
  Have you had an algorithm course? The O notation can be defined as
    f(x) = O(g(x)) if and only if there exist positive constants, c and x0, such that 0 <= f(x) <= c*g(x) for all x >= x0
  where f(x) and g(x) are functions of x. For example, if f(x) = 4x**2 + 2x + 12, we could use c = 6 for f(x) = O(x**2), since 0 <= 4x**2 + 2x + 12 <= 6x**2 for x >= 3 (p. 67).

  Time complexity of a parallel algorithm
    The time complexity of a parallel algorithm is the sum of the time complexity of the computation and that of the communication.
    Example: adding n numbers on 2 processors, then adding the two partial results on one of them.
      time for computation = n/2 + 1
    Communication requires time to send out half the numbers and to retrieve the partial result:
      time for communication = (startup time + n/2 * time for one data item) + (startup time + time for one data item)
    Communication is costly. If both computation and communication have the same time complexity, increasing the number of data items is unlikely to improve performance. If the computation has a greater time complexity than the communication, then increasing the number of data items lets computation dominate communication.
    A cost-optimal algorithm is one in which the cost to solve a problem is proportional to the execution time on a single processor:
      (parallel time complexity) x (number of processors) = sequential time complexity

2.3.3 Comments on Asymptotic Analysis
  In general we worry about limited-size data sets on a small number of processors. Startup can dominate the time. Limits approaching infinity are usually not applicable.

2.3.4 Time Complexity of Broadcast/Gather
  Almost all problems require data to be broadcast to processes and data to be gathered from processes.
  Hypercube
    A message can be broadcast to all nodes in an n-node hypercube in log n steps. This implies the time complexity for broadcast/gather is O(log n), which is optimal because the diameter of a hypercube is log n.
  Broadcast on a Mesh Network
    Without wraparound: from the upper left, across the top row, and down each column. This requires 2(n-1) steps, or O(n), on an n x n mesh.
  Broadcast on a Workstation Cluster
    Broadcast on a single Ethernet connection can be done using a single message that is read by all destinations on the network simultaneously.
    Can use a 1-to-n fan-out broadcast via daemons, as in the PVM broadcast routine. This will usually result in a tree structure.
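The log n step count for the hypercube broadcast can be checked with a short simulation, and the pattern of sends it produces is the kind of tree structure that fan-out broadcasts tend to form. This is a sketch under simple assumptions: node labels are integers, the number of nodes is a power of two, and every node that already holds the message forwards it across one new dimension per step. The name hypercube_broadcast is illustrative.

```python
def hypercube_broadcast(dimension, root=0):
    """Simulate a one-to-all broadcast on a hypercube with 2**dimension nodes.

    Returns one list of (sender, receiver) pairs per communication step.
    """
    have_message = {root}
    steps = []
    for d in range(dimension):
        # Each node holding the message sends to its neighbor across dimension d.
        sends = [(node, node ^ (1 << d)) for node in sorted(have_message)]
        have_message.update(receiver for _, receiver in sends)
        steps.append(sends)
    return steps


if __name__ == "__main__":
    for step, sends in enumerate(hypercube_broadcast(3), start=1):
        print(step, sends)
    # 1 [(0, 1)]
    # 2 [(0, 2), (1, 3)]
    # 3 [(0, 4), (1, 5), (2, 6), (3, 7)]
```

With 8 nodes the broadcast finishes in 3 steps, and the number of nodes holding the message doubles at every step, which is where the log n comes from.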
    The daemon-based broadcast takes O(M + N/M) time for one data item broadcast to N destinations, where there are M daemons.

2.4 Debugging and Evaluating Parallel Programs
  Not really interested in 2.4.1 and 2.4.2.

2.4.1 Low-level Debugging

2.4.2 Visualization Tools

2.4.3 Debugging Strategies
  1. If possible, run the program as a single process and debug as a normal sequential program.
  2. Execute the program using two to four multitasked processes on a single computer. Now examine actions such as checking that messages are indeed being sent to the correct places. It is very common to make mistakes with message tags and have messages sent to the wrong places.
  3. Execute the program using the same two to four processes, but now across several computers. This step helps find problems that are caused by network delays related to synchronization and timing.

2.4.4 Evaluating Programs Empirically
  Measuring execution time: we will have to do this.
  Communication by the Ping-Pong Method
  Profiling

2.4.5 Comments on Optimizing the Parallel Code

Chapter 3
  You should have a basic understanding of the two examples we discussed in class.
  Geometric transformation: lots of homework
  The Mandelbrot set: section 3.2.2 and the code.

Chapter 4
  Review the homework questions.