Hash Tables

advertisement

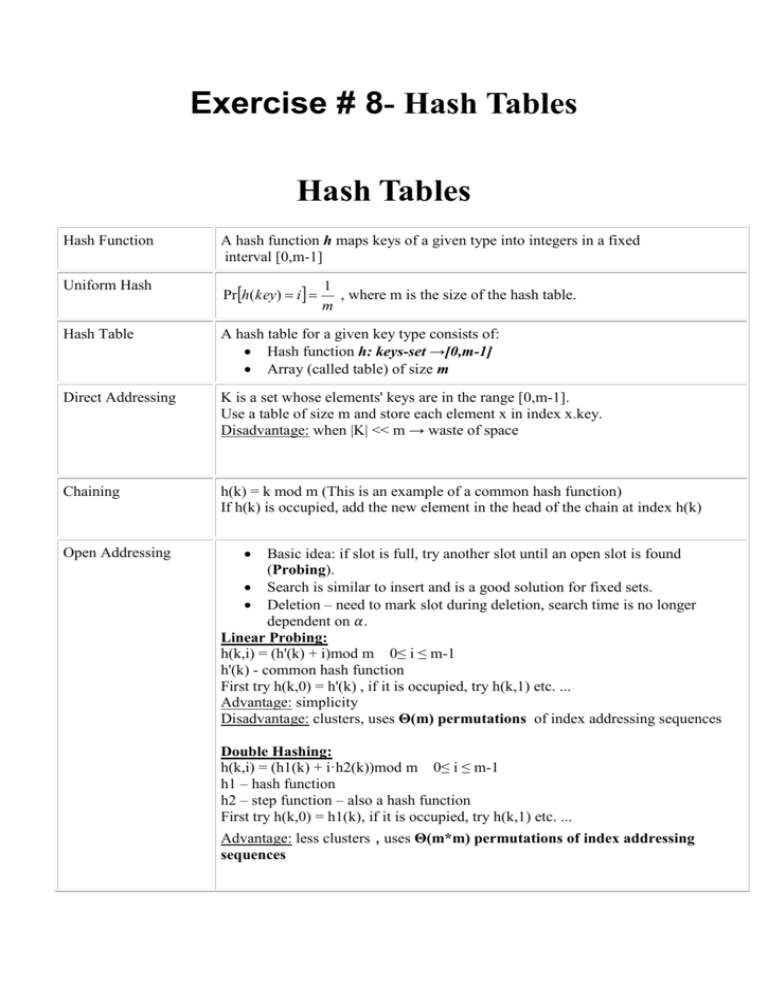

Exercise #8 - Hash Tables

Hash Tables

Hash Function

A hash function h maps keys of a given type into integers in a fixed interval [0,m-1].

Uniform Hash

Pr[h(key) = i] = 1/m for every i in [0,m-1], where m is the size of the hash table.

Hash Table

A hash table for a given key type consists of:

Hash function h: keys-set →[0,m-1]

Array (called table) of size m

Direct Addressing

K is a set whose elements' keys are in the range [0,m-1].

Use a table of size m and store each element x in index x.key.

Disadvantage: when |K| << m → waste of space

Chaining

h(k) = k mod m (an example of a common hash function)

If slot h(k) is occupied, add the new element at the head of the chain at index h(k).
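The chaining scheme above can be sketched as follows. This is a minimal illustration, not a reference implementation: the class name `ChainedHashTable` is hypothetical, keys are assumed to be non-negative integers, and a `collections.deque` stands in for the linked list so that head insertion is O(1).

```python
from collections import deque


class ChainedHashTable:
    """Hash table with chaining, using h(k) = k mod m."""

    def __init__(self, m):
        self.m = m
        self.table = [deque() for _ in range(m)]  # one chain per slot

    def insert(self, key):
        self.table[key % self.m].appendleft(key)  # new element goes to the head

    def search(self, key):
        return key in self.table[key % self.m]    # scans one chain only

    def delete(self, key):
        chain = self.table[key % self.m]
        if key in chain:
            chain.remove(key)


t = ChainedHashTable(11)
for key in (22, 11, 33):       # all three keys hash to slot 0
    t.insert(key)
print(list(t.table[0]))        # [33, 11, 22]
```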

Open Addressing


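Basic idea: if a slot is full, try another slot until an open slot is found (probing).

Search follows the same probe sequence as insert. Open addressing is a good solution for fixed sets.

Deletion: a deleted slot must be marked rather than emptied, so that later searches continue past it. Search time then no longer depends on 𝛼 alone, because marked slots lengthen the probe sequences without counting as stored keys.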

Linear Probing:

h(k,i) = (h'(k) + i) mod m, 0 ≤ i ≤ m-1

h'(k) - an ordinary hash function

First try h(k,0) = h'(k); if it is occupied, try h(k,1), and so on.

Advantage: simplicity

Disadvantage: clustering; only Θ(m) distinct probe sequences are used

Double Hashing:

h(k,i) = (h1(k) + i·h2(k)) mod m, 0 ≤ i ≤ m-1

h1 – hash function

h2 – step function – also a hash function

First try h(k,0) = h1(k); if it is occupied, try h(k,1), and so on.

Advantage: fewer clusters; Θ(m^2) distinct probe sequences are used
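As a rough sketch of open addressing with marked deletions, the table below takes the probe function h(k,i) as a parameter, so the same code serves linear probing and double hashing. The names `OpenAddressingTable`, `EMPTY` and `DELETED` are hypothetical, and the two probe functions assume m = 11 with h'(k) = h1(k) = k mod m and h2(k) = (k mod (m-1)) + 1.

```python
EMPTY, DELETED = object(), object()  # sentinels; DELETED is the mark left by a deletion


class OpenAddressingTable:
    def __init__(self, m, probe):
        self.m = m
        self.slots = [EMPTY] * m
        self.probe = probe  # probe(k, i) -> index tried on the i-th attempt

    def insert(self, key):
        for i in range(self.m):
            j = self.probe(key, i)
            if self.slots[j] is EMPTY or self.slots[j] is DELETED:
                self.slots[j] = key
                return j
        raise RuntimeError("no free slot on the probe sequence")

    def search(self, key):
        for i in range(self.m):
            j = self.probe(key, i)
            if self.slots[j] is EMPTY:   # a never-used slot ends the search
                return None
            if self.slots[j] == key:     # DELETED slots are passed over
                return j
        return None

    def delete(self, key):
        j = self.search(key)
        if j is not None:
            self.slots[j] = DELETED


m = 11
linear = lambda k, i: (k % m + i) % m
double = lambda k, i: (k % m + i * (k % (m - 1) + 1)) % m

t = OpenAddressingTable(m, linear)
for key in (22, 11, 33):   # all hash to 0, so they occupy slots 0, 1, 2
    t.insert(key)
t.delete(11)               # slot 1 is marked DELETED
print(t.search(33))        # 2: the search continues past the marked slot

d = OpenAddressingTable(m, double)
d.insert(22)
print(d.insert(11))        # 2: slot 0 is taken, and the step is h2(11) = 2
```

If `delete` reset the slot to `EMPTY` instead, the search for 33 would stop at slot 1 and wrongly report the key as missing.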

Load Factor α

α = n/m, for a hash table with m slots that stores n elements (keys)

Average (expected) Search Time

Open Addressing

unsuccessful search: O(1+1/(1-α))

successful search: O(1+ (1/α)ln( 1/(1-α)))

Chaining

unsuccessful search: Θ (1 + α)

successful search: Θ (1 + α/2) = Θ (1 + α)
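To get a feel for these bounds, the sketch below evaluates the open-addressing expressions 1/(1-α) and (1/α)·ln(1/(1-α)) for two load factors. The function names are hypothetical; the values are the bounds on the expected number of probes, not measurements.

```python
import math


def unsuccessful_probes(alpha):
    """Bound on expected probes for an unsuccessful search (open addressing)."""
    return 1 / (1 - alpha)


def successful_probes(alpha):
    """Bound on expected probes for a successful search (open addressing)."""
    return (1 / alpha) * math.log(1 / (1 - alpha))


for alpha in (0.5, 0.9):
    print(alpha, round(unsuccessful_probes(alpha), 3), round(successful_probes(alpha), 3))
# 0.5 2.0 1.386
# 0.9 10.0 2.558
```

The jump from 2 to 10 probes shows why open-addressing tables are usually kept well below full.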

Dictionary ADT

The dictionary ADT models a searchable collection of key-element items, and

supports the following operations:

Insert

Delete

Search

Bloom Filter

A Bloom filter consists of a bit array of size m (the Bloom filter) and k hash functions h1, h2, ..., hk. It supports insertion and search queries only:

Insert(x) in O(1)

Search(x) in O(1), with probability (1 - e^(-kn/m))^k of getting a false positive, where n is the number of inserted elements

(Each operation computes k hash values, so the O(1) bounds assume k = O(1).)
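A minimal Bloom filter sketch follows. The class name `BloomFilter` is hypothetical, and the k hash functions are simulated by salting SHA-256 with the function index, which is only one possible choice and an assumption of this example.

```python
import hashlib
import math


class BloomFilter:
    def __init__(self, m, k):
        self.m, self.k = m, k
        self.bits = [False] * m

    def _indexes(self, x):
        # Assumption: hash function number i is SHA-256 of "i:x", reduced mod m.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{x}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def insert(self, x):
        for j in self._indexes(x):
            self.bits[j] = True

    def search(self, x):
        # False means "definitely absent"; True means "possibly present".
        return all(self.bits[j] for j in self._indexes(x))


def false_positive_rate(n, m, k):
    """Approximate false-positive probability after n insertions."""
    return (1 - math.exp(-k * n / m)) ** k


bf = BloomFilter(m=1000, k=3)
for x in range(100):
    bf.insert(x)
print(all(bf.search(x) for x in range(100)))  # True: inserted keys are always found
print(false_positive_rate(100, 1000, 3))      # roughly 0.017
```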

Remarks:

1. The efficiency of hashing is examined in the average case, not the worst case.

2. The load factor defined above is the average number of elements that are hashed to the same value.

3. Let us consider chaining:

The load factor is the average number of elements stored in a single linked list. In the worst case, all n keys are hashed to the same cell and form a linked list of length n, so searching for an element takes Θ(n) plus the time to compute the hash function. Hash tables are therefore not used for their worst-case performance, and when analyzing the running time of hashing operations we refer to the average case.

Question 1

Given a hash table with m=11 entries and the following hash function h1 and step function h2:

h1(key) = key mod m

h2(key) = {key mod (m-1)} + 1

Insert the keys {22, 1, 13, 11, 24, 33, 18, 42, 31} in the given order (from left to right) into the hash table using each of the following hash methods:

a. Chaining with h1: h(k) = h1(k)

b. Linear Probing with h1: h(k,i) = (h1(k) + i) mod m

c. Double Hashing with h1 as the hash function and h2 as the step function: h(k,i) = (h1(k) + i·h2(k)) mod m

Solution:

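| Index | Chaining | Linear Probing | Double Hashing |
|---|---|---|---|
| 0 | 33 → 11 → 22 | 22 | 22 |
| 1 | 1 | 1 | 1 |
| 2 | 24 → 13 | 13 | 13 |
| 3 | | 11 | |
| 4 | | 24 | 11 |
| 5 | | 33 | 18 |
| 6 | | | 31 |
| 7 | 18 | 18 | 24 |
| 8 | | | 33 |
| 9 | 31 → 42 | 42 | 42 |
| 10 | | 31 | |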
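The three tables of the solution can be reproduced with a short script. It is a sketch under the definitions of the question (m = 11, h1, h2 and the key order); the helper name `open_addressing` is hypothetical.

```python
m = 11
keys = [22, 1, 13, 11, 24, 33, 18, 42, 31]
h1 = lambda k: k % m
h2 = lambda k: k % (m - 1) + 1

# a. Chaining: each new key is inserted at the head of its chain.
chains = [[] for _ in range(m)]
for k in keys:
    chains[h1(k)].insert(0, k)


def open_addressing(step):
    """Insert all keys using the probe sequence (h1(k) + i*step(k)) mod m."""
    table = [None] * m
    for k in keys:
        for i in range(m):
            j = (h1(k) + i * step(k)) % m
            if table[j] is None:
                table[j] = k
                break
    return table


linear = open_addressing(lambda k: 1)  # b. linear probing: the step is always 1
double = open_addressing(h2)           # c. double hashing: the step is h2(k)

for i in range(m):
    print(i, chains[i], linear[i], double[i])
```

For example, key 31 under double hashing starts at h1(31) = 9 with step h2(31) = 2 and tries 9, 0, 2, 4 before landing in slot 6.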

Question 2

a. For the previous h1 and h2, is it OK to use h2 as a hash function and h1 as a step function?

b. Why is it important that the result of the step function and the table size will not have a common

divisor? That is, if hstep is the step function, why is it important to enforce GCD(hstep(k),m) = 1 for every

k?

Solution:

a. No. h1 can return 0, and a step of 0 never leaves the first slot. For example, for key 11, h1(11) = 0, so all the steps are of length 0: h(11,i) = (h2(11) + i·h1(11)) mod m = h2(11) = 2. The sequence of index addressing for key 11 consists of only one index, 2. In addition, h2 never returns 0, so entry 0 would never be the first slot tried.

b. Suppose GCD(hstep(k),m) = d > 1 for some k. The search for k would then address only 1/d of the hash table, since the steps always return to the same cells. If those cells are occupied, we may not find an available slot even if the hash table is not full.

Example: m = 48, hstep(k) = 24, GCD(48,24) = 24. Only 48/24 = 2 cells of the table would be addressed.

There are two guidelines for choosing m and the step function so that GCD(hstep(k),m) = 1:

1. The size of the table m is a prime number, and 1 ≤ hstep(k) < m for every k. (An example of this is illustrated in Question 1.)

2. The size of the table m is 2^x, and the step function returns only odd values.
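The effect of a common divisor can be checked directly by counting the distinct cells that a probe sequence visits. The helper `probed_cells` below is a hypothetical name used only for this illustration.

```python
from math import gcd


def probed_cells(start, step, m):
    """Distinct indexes visited by (start + i*step) mod m for i = 0..m-1."""
    return {(start + i * step) % m for i in range(m)}


print(len(probed_cells(0, 24, 48)), 48 // gcd(24, 48))  # 2 2
print(len(probed_cells(0, 5, 48)))                      # 48, since GCD(5,48) = 1
print(len(probed_cells(2, 0, 11)))                      # 1: a step of 0 never moves
```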

Question 3

Consider two sets of integers, S = {s1, s2, ..., sm} and T = {t1, t2, ..., tn}, m ≤ n.

a. Describe a deterministic algorithm that checks whether S is a subset of T.

What is the running time of your algorithm?

b. Devise an algorithm that uses a hash table of size m to test whether S is a subset of T.

What is the average running time of your algorithm?

c. Suppose we have a bloom filter of size 𝑟, and 𝑘 hash functions h1, h2, …hk : U → [0, r-1] (U is

the set of all possible keys).

Describe an algorithm that checks whether S is a subset of T in O(n) worst-case time. What is the probability of getting a positive result although S is not a subset of T?

(As a function of n, m, r and |S∩T|; you can assume k = O(1).)

Solution:

a)

Subset(T,S,n,m)
    T = sort(T)
    for each sj ∈ S
        found = BinarySearch(T, sj)
        if (!found)
            return "S is not a subset of T"
    return "S is a subset of T"

Time Complexity: O(nlogn + mlogn) = O(nlogn)
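A runnable version of the sort-and-binary-search approach might look like this. The function name `is_subset_sorted` is hypothetical, and `bisect_left` from the standard library plays the role of BinarySearch.

```python
from bisect import bisect_left


def is_subset_sorted(S, T):
    T = sorted(T)                     # O(n log n)
    for s in S:                       # m binary searches, O(log n) each
        i = bisect_left(T, s)
        if i == len(T) or T[i] != s:  # s is not present in T
            return False
    return True


print(is_subset_sorted([3, 1], [5, 1, 4, 3]))  # True
print(is_subset_sorted([3, 2], [5, 1, 4, 3]))  # False
```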

b) The solution that uses a hash table of size 𝑚 with chaining:

SubsetWithHashTable(T,S,n,m)
    for each ti ∈ T
        insert(HT, ti)
    for each sj ∈ S
        found = search(HT, sj)
        if (!found)
            return "S is not a subset of T"
    return "S is a subset of T"

Time Complexity Analysis:

Inserting all elements ti into HT: n times O(1) = O(n)

The table has m slots and stores n elements, so α = n/m.

If S ⊆ T then there are m successful searches: m·(1 + α/2) = m + n/2 = O(n)

If S ⊈ T then in the worst case all the elements of S except the last one, sm, are in T, so there are (m-1) successful searches and one unsuccessful search: (m-1)·(1 + α/2) + (1 + α) = O(n)

Time Complexity: O(n + m) = O(n)
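The hash-table version can be sketched with an explicit table of m chains. The function name `is_subset_hashed` is hypothetical, and Python's built-in `hash` reduced mod m is assumed as the hash function.

```python
def is_subset_hashed(S, T):
    m = max(1, len(S))                  # table size m = |S|
    table = [[] for _ in range(m)]      # m chains
    for t in T:                         # n insertions, O(1) each
        table[hash(t) % m].append(t)
    # Each search scans one chain, whose expected length is n/m.
    return all(s in table[hash(s) % m] for s in S)


print(is_subset_hashed([3, 1], [5, 1, 4, 3]))  # True
print(is_subset_hashed([3, 2], [5, 1, 4, 3]))  # False
```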

c) We first insert all the elements of T into the Bloom filter. Then, for each element x in S, we query search(x). As soon as one query returns a negative result we return FALSE and stop; if all m queries return positive results we return TRUE.

Time Complexity Analysis:

n Insertions and m search queries to the bloom filter:

n•O(k) + m•O(k) = (n+m)•O(1) = O(n)

The probability of getting a false positive result:

Denote t = |S∩T|. For all t elements in S∩T we get a positive result. The probability that we get a positive result for each of the m-t elements in S\T is ~ (1 - e^(-kn/r))^k, and thus the probability of getting a positive result for all these m-t elements, and so returning TRUE although S ⊈ T, is ~ (1 - e^(-kn/r))^(k(m-t)).

Note that the above analysis (b and c) gives an estimate of the average running time.

Question 4

You are given an array A[1..n] with n distinct real numbers and some value X. You need to

determine whether there are two numbers in A[1..n] whose sum equals X.

a. Do it in deterministic O(nlogn) time.

b. Do it in expected O(n) time.

Solution a:

Sort A (O(nlogn) time). Pick A[1]. Perform a binary search (O(logn) time) on A to find an element other than A[1] itself that is equal to X-A[1]. If found, you're done. Otherwise, repeat the same with A[2], A[3], …

Overall O(nlogn) time.

Solution b:

1. Choose a universal hash function h from a universal collection of hash functions H.

2. Store all elements of array A in a Hash table of size n using h and chaining.

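3. For (i = 1; i <= n; i++) {
       If ((X-A[i] is in Table) && (X-A[i] != A[i])) {
           Return true
       }
   }
   Return false

(A[i] itself is always in the table, so only X-A[i] needs to be searched for; the second condition prevents pairing A[i] with itself.)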

Time Complexity: We do not know whether the elements of the array are randomly distributed. If they are not, a "regular" hash function can map many keys to the same slot, producing chains of length Θ(n), and the runtime could then be O(n^2).

If we use a universal hash function then, regardless of the key distribution, the probability that two keys collide is no more than 1/m = 1/n. As seen in class, with chaining and a universal hash function the expected search time is O(1), so the expected runtime of the algorithm is O(n).
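A compact sketch of solution b is shown below. As an assumption of this example, Python's built-in `set` stands in for the hash table with chaining and a universal hash function; the function name `has_pair_with_sum` is hypothetical.

```python
def has_pair_with_sum(A, X):
    table = set(A)                 # build the hash table: expected O(n)
    for a in A:
        b = X - a
        if b != a and b in table:  # b == a would use the same element twice
            return True
    return False


print(has_pair_with_sum([1.5, 4.0, 2.5, 9.0], 4.0))  # True (1.5 + 2.5)
print(has_pair_with_sum([2, 5], 4))                  # False (2 + 2 is not allowed)
```

With floating-point inputs, X - a is compared for exact equality, so rounding can hide a pair that exists mathematically.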

Question 5 - Rolling hash

Rolling hash is used when we want to enable fast search for parts of data, for example searching for sub-paragraphs in a long paragraph.

In a rolling hash, a hash value is calculated for every fixed-size part of the input in a sliding-window manner. Example:

Input: Hello my name is Inigo Montoya

Window size: 7

The result is a hash value for every 7 consecutive characters:

| Window | Hash value example |
|---|---|
| "Hello m" | 20 |
| "ello my" | 571 |
| "llo my " | 12 |
| "lo my n" | 322 |
| "o my na" | 9 |
| " my nam" | 111 |
| "my name" | 7 |

Usually the window size is 512 characters, which means 512 bytes, and each window differs from its predecessor by one character at the beginning and one at the end.

Because mod is a heavy operation (if we are using mod as the hash), and mod on a number with 512×8 = 4096 bits (values up to 2^4096) is especially heavy, it is much easier to calculate the hash value of a window based on its difference from the preceding window.

In a given text, the 1st character is a (ASCII value between 0 and 255) and the (k+1)th character is b (ASCII value between 0 and 255). If the hash value of the first window w1 of size k is X, and the hash function is mod m, what is the hash value of the second window w2?

Solution:

a is the most significant byte of w1 (an 8k-bit number) and b is the least significant byte of w2. Thus:

w2 = (w1 – a*2^(8(k-1)))*2^8 + b

w2 mod m = ((w1 – a*2^(8(k-1)))*2^8 + b) mod m = (w1*2^8 – a*2^(8k) + b) mod m

= ((w1 mod m)*(2^8 mod m) – (a mod m)*(2^(8k) mod m) + (b mod m)) mod m

= (X*(2^8 mod m) – (a mod m)*(2^(8k) mod m) + (b mod m)) mod m

Note that X is known from the previous window; a, b and 2^8 are small numbers, so their mod is easy to calculate; and 2^(8k) mod m can be calculated once at the beginning and saved.
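The update rule can be checked against a direct computation on the example sentence. In this sketch the function names `window_hash` and `roll` are hypothetical, and the modulus m = 1000003 is an arbitrary choice made only for the demonstration.

```python
def window_hash(data, m):
    """Hash of a byte string read as one big-endian integer, mod m."""
    return int.from_bytes(data, "big") % m


def roll(X, a, b, m, top):
    """Hash of the next window from the hash X of the current one.

    a is the byte leaving the window, b is the byte entering it,
    and top = 2^(8k) mod m is precomputed once.
    """
    return (X * (256 % m) - (a % m) * top + (b % m)) % m


text = b"Hello my name is Inigo Montoya"
k, m = 7, 1_000_003
top = pow(2, 8 * k, m)          # computed once and saved
X = window_hash(text[:k], m)    # only the first window is hashed directly
for i in range(1, len(text) - k + 1):
    X = roll(X, text[i - 1], text[i + k - 1], m, top)
    assert X == window_hash(text[i:i + k], m)
print("rolling update matches direct hashing for every window")
```

Python's `%` returns a non-negative result for a positive modulus, so the subtraction needs no extra correction here; in languages where `%` can be negative, m would have to be added before the final reduction.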

Question 6

You have a large number of data chunks that need to be stored on servers in the cloud. In order for the data to be distributed evenly, each data chunk is hashed to decide which server it will be stored on.

If a server stops working, its data chunks should be distributed evenly among the remaining servers.

The data chunks of the remaining servers obviously need to stay on their servers.

If we implement such a system by numbering the servers and using modulo the number of servers as the hash function, we have a problem: when a server stops working, the number of servers changes and almost all the hash values change.

Suggest a way to implement such a system.

Solution:

Consistent hashing

The servers and data chunks will be hashed to the same table (array). Each server will be mapped by a

number of hash functions so it will have a number of values in the table. Each server gets the chunks on

its right until it meets another server:

In this case:

Chunk 1 is mapped to server 1

Chunk 2 is mapped to server 3 (the array is circular)

Chunk 3 is mapped to server 2

Chunk 4 is mapped to server 2

Chunk 5 is mapped to server 2

Chunk 6 is mapped to server 2

Chunk 7 is mapped to server 3

Chunk 8 is mapped to server 1

If server 2 stops working, the other servers still have their data, and the data of server 2 is distributed among them:

Now:

Chunk 1 is mapped to server 1

Chunk 2 is mapped to server 3 (the array is circular)

Chunk 3 is mapped to server 1

Chunk 4 is mapped to server 3

Chunk 5 is mapped to server 1

Chunk 6 is mapped to server 3

Chunk 7 is mapped to server 3

Chunk 8 is mapped to server 1
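A small sketch of consistent hashing is given below. It is an illustration under several assumptions: the names `Ring` and `point` are hypothetical, positions on the circular table are taken from the first 8 bytes of SHA-256, and each server is hashed 50 times to obtain several positions. The chunk and server names will not reproduce the exact assignment listed above, only the property that matters.

```python
import bisect
import hashlib


def point(label):
    """Position of a label on the circular table (assumption: SHA-256 prefix)."""
    return int.from_bytes(hashlib.sha256(label.encode()).digest()[:8], "big")


class Ring:
    def __init__(self, servers, replicas=50):
        # Each server is hashed `replicas` times, so it owns several positions.
        self.points = sorted((point(f"{s}#{r}"), s) for s in servers for r in range(replicas))
        self.positions = [p for p, _ in self.points]

    def server_for(self, chunk):
        # Nearest server position at or to the left of the chunk.
        # Index -1 selects the last position, which makes the table circular.
        i = bisect.bisect_right(self.positions, point(chunk)) - 1
        return self.points[i][1]


chunks = [f"chunk{i}" for i in range(1, 9)]
before = Ring(["server1", "server2", "server3"])
after = Ring(["server1", "server3"])       # server 2 stopped working
for c in chunks:
    old, new = before.server_for(c), after.server_for(c)
    assert old == new or old == "server2"  # only server 2's chunks move
    print(c, old, "->", new)
```

Giving each server many positions is what spreads the chunks of a failed server over all the remaining servers instead of handing them all to a single neighbour.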

Question 7

You have a hash table with α=0.5. What is the probability that 3 or more keys will be mapped to the same index?

Solution:

According to the Poisson distribution, if the expected number of keys in the same index is λ, then the probability of getting exactly k keys in one index is:

λ^k · e^(-λ) / k!

In our case λ=0.5, so:

The probability for an index to be empty is 0.5^0 · e^(-0.5) / 0! = e^(-0.5)

The probability for an index to hold exactly 1 key is 0.5^1 · e^(-0.5) / 1! = 0.5e^(-0.5)

The probability for an index to hold exactly 2 keys is 0.5^2 · e^(-0.5) / 2! = 0.125e^(-0.5)

And thus the probability of 3 or more keys is 1 − e^(-0.5) − 0.5e^(-0.5) − 0.125e^(-0.5) ≈ 0.014
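The arithmetic can be verified numerically with a few lines. The function name `poisson` is hypothetical; it simply evaluates the formula above.

```python
from math import exp, factorial


def poisson(k, lam):
    """Probability of exactly k keys in one index when the expected number is lam."""
    return lam ** k * exp(-lam) / factorial(k)


p_three_or_more = 1 - sum(poisson(k, 0.5) for k in range(3))
print(round(p_three_or_more, 3))  # 0.014
```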