docx - Tsinghua Math Camp 2015

advertisement

Probability & Statistics

Professor Wei Zhu

July 23rd

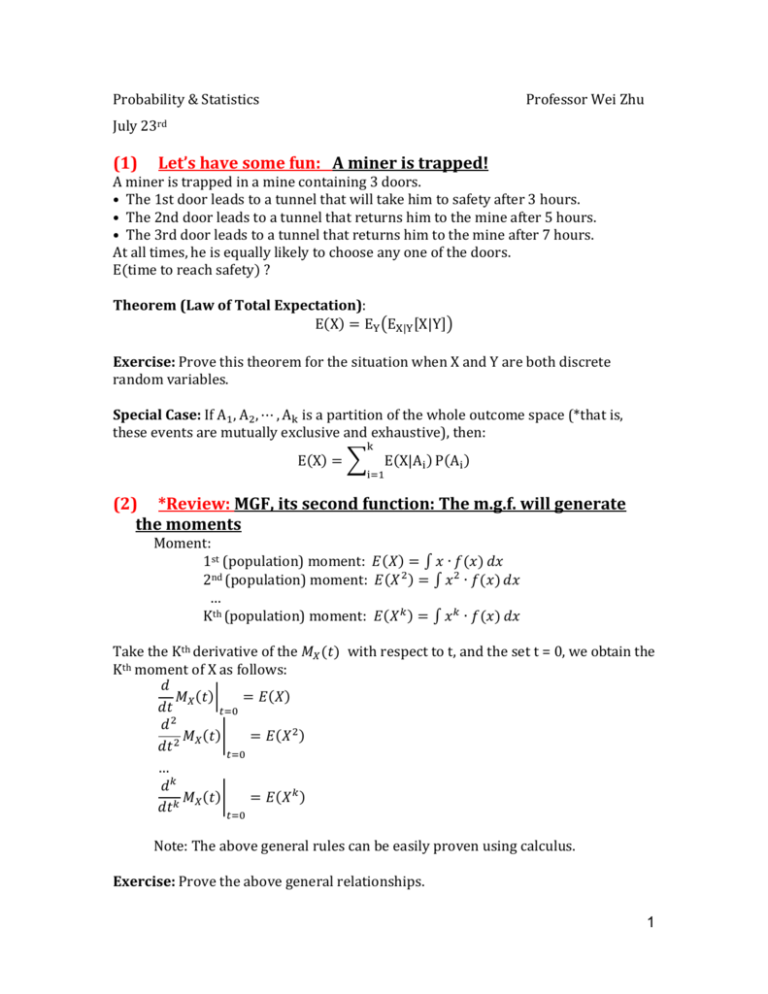

(1)

Let’s have some fun: A miner is trapped!

A miner is trapped in a mine containing 3 doors.

• The 1st door leads to a tunnel that will take him to safety after 3 hours.

• The 2nd door leads to a tunnel that returns him to the mine after 5 hours.

• The 3rd door leads to a tunnel that returns him to the mine after 7 hours.

At all times, he is equally likely to choose any one of the doors.

E(time to reach safety) ?

Theorem (Law of Total Expectation):

E(X) = EY (EX|Y [X|Y])

Exercise: Prove this theorem for the situation when X and Y are both discrete

random variables.

Special Case: If A1 , A2 , ⋯ , Ak is a partition of the whole outcome space (*that is,

these events are mutually exclusive and exhaustive), then:

k

E(X) = ∑

E(X|Ai ) P(Ai )

i=1

(2) *Review: MGF, its second function: The m.g.f. will generate

the moments

Moment:

1st (population) moment: 𝐸(𝑋) = ∫ 𝑥 ∙ 𝑓(𝑥) 𝑑𝑥

2nd (population) moment: 𝐸(𝑋 2 ) = ∫ 𝑥 2 ∙ 𝑓(𝑥) 𝑑𝑥

…

Kth (population) moment: 𝐸(𝑋 𝑘 ) = ∫ 𝑥 𝑘 ∙ 𝑓(𝑥) 𝑑𝑥

Take the Kth derivative of the 𝑀𝑋 (𝑡) with respect to t, and the set t = 0, we obtain the

Kth moment of X as follows:

𝑑

𝑀 (𝑡)|

= 𝐸(𝑋)

𝑑𝑡 𝑋

𝑡=0

𝑑2

𝑀 (𝑡)|

= 𝐸(𝑋 2 )

𝑑𝑡 2 𝑋

𝑡=0

…

𝑑𝑘

𝑀 (𝑡)|

= 𝐸(𝑋 𝑘 )

𝑑𝑡𝑘 𝑋

𝑡=0

Note: The above general rules can be easily proven using calculus.

Exercise: Prove the above general relationships.

1

Example (proof of a special case): When ~𝑁(𝜇, 𝜎 2 ) , we want to verify the above

equations for k=1 & k=2.

𝑑

𝑀 (𝑡)|

𝑑𝑡 𝑋

𝑡=0

1 2 2

𝑡

= (𝑒 𝜇𝑡+2𝜎

) ∙ (𝜇 + 𝜎 2 𝑡) (using the chain rule)

So when t=0

𝑑

𝑀 (𝑡)|

= 𝜇 = 𝐸(𝑋)

𝑑𝑡 𝑋

𝑡=0

𝑑2

𝑑 𝑑

(𝑡)

𝑀

=

[ 𝑀 (𝑡)]

𝑋

𝑑𝑡 2

𝑑𝑡 𝑑𝑡 𝑋

1 2 2

𝑑

= [(𝑒 𝜇𝑡+2𝜎 𝑡 ) ∙ (𝜇 + 𝜎 2 𝑡)] (using the result of the Product Rule)

𝑑𝑡

1 2 2

1 2 2

= (𝑒 𝜇𝑡+2𝜎 𝑡 ) ∙ (𝜇 + 𝜎 2 𝑡)2 + (𝑒 𝜇𝑡+2𝜎 𝑡 ) ∙ 𝜎 2

𝑑2

𝑀 (𝑡)|

= 𝜇 2 + 𝜎 2 = 𝐸(𝑋 2 )

𝑑𝑡 2 𝑋

𝑡=0

Considering 𝜎 2 = Var(X) = E(𝑋 2 ) − 𝜇 2

*(3). Review: Joint distribution, and independence

Definition. The joint cdf of two random variables X and Y are defined as:

𝐹𝑋,𝑌 (𝑥, 𝑦) = 𝐹(𝑥, 𝑦) = 𝑃(𝑋 ≤ 𝑥, 𝑌 ≤ 𝑦)

Definition. The joint pdf of two discrete random variables X and Y are defined as:

𝑓𝑋,𝑌 (𝑥, 𝑦) = 𝑓(𝑥, 𝑦) = 𝑃(𝑋 = 𝑥, 𝑌 = 𝑦)

Definition. The joint pdf of two continuous random variables X and Y are defined

as:

𝜕2

𝑓𝑋,𝑌 (𝑥, 𝑦) = 𝑓(𝑥, 𝑦) = 𝜕𝑥𝜕𝑦 𝐹(𝑥, 𝑦)

Definition. The marginal pdf of the discrete random variable X or Y can be obtained

by summation of their joint pdf as the following: 𝑓𝑋 (𝑥) = ∑𝑦 𝑓(𝑥, 𝑦) ; 𝑓𝑌 (𝑦) =

∑𝑥 𝑓(𝑥, 𝑦) ;

Definition. The marginal pdf of the continuous random variable X or Y can be

∞

obtained by integration of the joint pdf as the following: 𝑓𝑋 (𝑥) = ∫−∞ 𝑓(𝑥, 𝑦) 𝑑𝑦;

∞

𝑓𝑌 (𝑦) = ∫−∞ 𝑓(𝑥, 𝑦) 𝑑𝑥;

Definition. The conditional pdf of a random variable X or Y is defined as:

2

𝑓(𝑥, 𝑦)

𝑓(𝑥, 𝑦)

; 𝑓(𝑦|𝑥) =

𝑓(𝑦)

𝑓(𝑥)

Definition. The joint moment generating function of two random variables X and Y

is defined as

𝑀𝑋,𝑌 (𝑡1 , 𝑡2 ) = 𝐸(𝑒 𝑡1 𝑋+𝑡2 𝑌 )

Note that we can obtain the marginal mgf for X or Y as follows:

𝑀𝑋 (𝑡1 ) = 𝑀𝑋,𝑌 (𝑡1 , 0) = 𝐸(𝑒 𝑡1 𝑋+0∗𝑌 ) = 𝐸(𝑒 𝑡1 𝑋 ); 𝑀𝑌 (𝑡2 ) = 𝑀𝑋,𝑌 (0, 𝑡2 ) = 𝐸(𝑒 0∗𝑋+𝑡2 ∗𝑌 )

= 𝐸(𝑒 𝑡2 ∗𝑌 )

𝑓(𝑥|𝑦) =

Theorem. Two random variables X and Y are independent ⇔ (if and only if)

𝐹𝑋,𝑌 (𝑥, 𝑦) = 𝐹𝑋 (𝑥)𝐹𝑌 (𝑦) ⇔ 𝑓𝑋,𝑌 (𝑥, 𝑦) = 𝑓𝑋 (𝑥)𝑓𝑌 (𝑦) ⇔ 𝑀𝑋,𝑌 (𝑡1 , 𝑡2 ) = 𝑀𝑋 (𝑡1 ) 𝑀𝑌 (𝑡2 )

Definition. The covariance of two random variables X and Y is defined as

𝐶𝑂𝑉(𝑋, 𝑌) = 𝐸[(𝑋 − 𝜇𝑋 )(𝑌 − 𝜇𝑌 )].

Theorem. If two random variables X and Y are independent, then we have

𝐶𝑂𝑉(𝑋, 𝑌) = 0. (*Note: However, 𝐶𝑂𝑉(𝑋, 𝑌) = 0 does not necessarily mean that X

and Y are independent.)

(4) *Definitions: population correlation & sample correlation

Definition: The population (Pearson Product Moment) correlation coefficient ρ is

defined as:

𝑐𝑜𝑣(𝑋, 𝑌)

𝜌=

√𝑣𝑎𝑟(𝑋) ∗ 𝑣𝑎𝑟(𝑌)

Definition: Let (X1 , Y1), …, (Xn , Yn) be a random sample from a given bivariate

population, then the sample (Pearson Product Moment) correlation coefficient r is

defined as:

𝑟=

∑(𝑋𝑖 − 𝑋̅)(𝑌𝑖 − 𝑌̅)

√[∑(𝑋𝑖 − 𝑋̅)2 ][∑(𝑌𝑖 − 𝑌̅)2 ]

3

Left: Karl Pearson FRS[1] (/ˈpɪərsən/; originally named Carl; 27 March 1857 – 27 April

1936[2]) was an influential English mathematician and biometrician.

Right: Sir Francis Galton, FRS (/ˈfrɑːnsɪs ˈɡɔːltən/; 16 February 1822 – 17 January 1911)

was a British Scientist and Statistician.

(5) *Definition: Bivariate Normal Random Variable

(𝑋, 𝑌)~𝐵𝑁(𝜇𝑋 , 𝜎𝑋2 ; 𝜇𝑌 , 𝜎𝑌2 ; 𝜌) where 𝜌 is the correlation between 𝑋 & 𝑌

The joint p.d.f. of (𝑋, 𝑌) is

1

1

𝑥 − 𝜇𝑋 2

𝑥 − 𝜇𝑋 𝑦 − 𝜇𝑦

𝑓𝑋,𝑌 (𝑥, 𝑦) =

exp {−

[(

) − 2𝜌 (

)(

)

2

2(1 − 𝜌 )

𝜎𝑋

𝜎𝑋

𝜎𝑌

2𝜋𝜎𝑋 𝜎𝑌 √1 − 𝜌2

𝑦 − 𝜇𝑌 2

+(

) ]}

𝜎𝑌

Exercise: Please derive the mgf of the bivariate normal distribution.

Q5. Let X and Y be random variables with joint pdf

1

1

𝑥 − 𝜇𝑋 2

𝑥 − 𝜇𝑋 𝑦 − 𝜇𝑦

𝑓𝑋,𝑌 (𝑥, 𝑦) =

exp {−

[(

) − 2𝜌 (

)(

)

2

2(1 − 𝜌 )

𝜎𝑋

𝜎𝑋

𝜎𝑌

2𝜋𝜎𝑋 𝜎𝑌 √1 − 𝜌2

𝑦 − 𝜇𝑌 2

+(

) ]}

𝜎𝑌

Where −∞ < 𝑥 < ∞, −∞ < 𝑦 < ∞. Then X and Y are said to have the bivariate

normal distribution. The joint moment generating function for X and Y is

1

𝑀(𝑡1 , 𝑡2 ) = exp [𝑡1 𝜇𝑋 + 𝑡2 𝜇𝑌 + (𝑡12 𝜎𝑋2 + 2𝜌𝑡1 𝑡2 𝜎𝑋 𝜎𝑌 + 𝑡22 𝜎𝑌2 )]

2

.

(a) Find the marginal pdf’s of X and Y;

(b) Prove that X and Y are independent if and only if ρ = 0.

4

(Here ρ is indeed, the <population> correlation coefficient between X and Y.)

(c) Find the distribution of(𝑋 + 𝑌).

(d) Find the conditional pdf of f(x|y), and f(y|x)

Solution:

(a)

The moment generating function of X can be given by

1

𝑀𝑋 (𝑡) = 𝑀(𝑡, 0) = 𝑒𝑥𝑝 [𝜇𝑋 𝑡 + 𝜎𝑋2 𝑡 2 ].

2

Similarly, the moment generating function of Y can be given by

1

𝑀𝑌 (𝑡) = 𝑀(𝑡, 0) = 𝑒𝑥𝑝 [𝜇𝑌 𝑡 + 𝜎𝑌2 𝑡 2 ].

2

Thus, X and Y are both marginally normal distributed, i.e.,

𝑋~𝑁(𝜇𝑋 , 𝜎𝑋2 ), and 𝑌~𝑁(𝜇𝑌 , 𝜎𝑌2 ).

The pdf of X is

𝑓𝑋 (𝑥) =

The pdf of Y is

𝑓𝑌 (𝑦) =

1

√2𝜋𝜎𝑋

1

√2𝜋𝜎𝑌

𝑒𝑥𝑝 [−

𝑒𝑥𝑝 [−

(𝑥 − 𝜇𝑋 )2

2𝜎𝑋2

(𝑦 − 𝜇𝑌 )2

2𝜎𝑌2

].

].

(b)

If𝜌 = 0, then

1

𝑀(𝑡1 , 𝑡2 ) = exp [𝜇𝑋 𝑡1 + 𝜇𝑌 𝑡2 + (𝜎𝑋2 𝑡12 + 𝜎𝑌2 𝑡22 )] = 𝑀(𝑡1 , 0) ∙ 𝑀(0, 𝑡2 )

2

Therefore, X and Y are independent.

If X and Y are independent, then

1

𝑀(𝑡1 , 𝑡2 ) = 𝑀(𝑡1 , 0) ∙ 𝑀(0, 𝑡2 ) = exp [𝜇𝑋 𝑡1 + 𝜇𝑌 𝑡2 + (𝜎𝑋2 𝑡12 + 𝜎𝑌2 𝑡22 )]

2

1 2 2

= exp [𝜇𝑋 𝑡1 + 𝜇𝑌 𝑡2 + (𝜎𝑋 𝑡1 + 2𝜌𝜎𝑋 𝜎𝑌 𝑡1 𝑡2 + 𝜎𝑌2 𝑡22 )]

2

Therefore, 𝜌 = 0

(c)

𝑀𝑋+𝑌 (𝑡) = 𝐸[𝑒 𝑡(𝑋+𝑌) ] = 𝐸[𝑒 𝑡𝑋+𝑡𝑌 ]

Recall that 𝑀(𝑡1 , 𝑡2 ) = 𝐸[𝑒 𝑡1 𝑋+𝑡2 𝑌 ], therefore we can obtain 𝑀𝑋+𝑌 (𝑡)by 𝑡1 = 𝑡2 =

𝑡 in 𝑀(𝑡1 , 𝑡2 )

That is,

5

1

𝑀𝑋+𝑌 (𝑡) = 𝑀(𝑡, 𝑡) = exp [𝜇𝑋 𝑡 + 𝜇𝑌 𝑡 + (𝜎𝑋2 𝑡 2 + 2𝜌𝜎𝑋 𝜎𝑌 𝑡 2 + 𝜎𝑌2 𝑡 2 )]

2

1 2

= exp [(𝜇𝑋 + 𝜇𝑌 )𝑡 + (𝜎𝑋 + 2𝜌𝜎𝑋 𝜎𝑌 + 𝜎𝑌2 )𝑡 2 ]

2

∴ 𝑋 + 𝑌 ~𝑁(𝜇 = 𝜇𝑋 + 𝜇𝑌 , 𝜎 2 = 𝜎𝑋2 + 2𝜌𝜎𝑋 𝜎𝑌 + 𝜎𝑌2 )

(d)

The conditional distribution of X given Y=y is given by

𝑓(𝑥|𝑦) =

𝑓(𝑥, 𝑦)

1

=

𝑒𝑥𝑝 −

𝑓(𝑦)

√2𝜋𝜎𝑋 √1 − 𝜌2

2

𝜎

(𝑥 − 𝜇𝑋 − 𝜎𝑋 𝜌(𝑦 − 𝜇𝑌 ))

𝑌

{

Similarly, we have the conditional distribution of Y given X=x is

𝑓(𝑦|𝑥) =

𝑓(𝑥, 𝑦)

1

=

𝑒𝑥𝑝 −

𝑓(𝑥)

√2𝜋𝜎𝑌 √1 − 𝜌2

}

2

𝜎

(𝑦 − 𝜇𝑌 − 𝜎𝑌 𝜌(𝑥 − 𝜇𝑋 ))

𝑋

2(1 − 𝜌2 )𝜎𝑌2

{

Therefore:

.

2(1 − 𝜌2 )𝜎𝑋2

.

}

𝜎𝑋

(𝑦 − 𝜇𝑌 ), (1 − 𝜌2 )𝜎𝑋2 )

𝜎𝑌

𝜎𝑌

𝑌|𝑋 = 𝑥 ~ 𝑁 (𝜇𝑌 + 𝜌 (𝑥 − 𝜇𝑋 ), (1 − 𝜌2 )𝜎𝑌2 )

𝜎𝑋

𝑋|𝑌 = 𝑦 ~ 𝑁 (𝜇𝑋 + 𝜌

Exercise:

1. Linear transformation : Let X ~ N ( , 2 ) and Y a X b , where a&b are

constants, what is the distribution of Y?

2. The Z-Score Distribution: Let X ~ N ( , 2 ) , and Z

X

a

1

,b

What is the distribution of Z?

3. Distribution of the Sample Mean:

If X 1 , X 2 ,

i .i . d .

, X n ~ N ( , 2 ) , prove that X ~ N ( ,

2

n

).

6

4. Some musing over the weekend: We know from the bivariate normal

distribution that if X and Y follow a joint bivariate nornmal distribution,

then each variable (X or Y) is univariate normal, and furthermore, their

sum, (X+Y) also follows a univariate normal distribution. Now my question

is, do you think the sum of any two (univariate) normal random variables

(*even for those who do not have a joint bivriate normal distribution),

would always follow a univariate normal distribution? Prove you claim if

you answer is Yes, and provide at least one counter example if your answer

is no.

Exercise -- Solutions:

1. Linear transformation : Let X ~ N ( , 2 ) and Y a X b , where a&b are

constants, what is the distribution of Y?

Solution:

M Y (t ) E (e tY ) E[e t ( aX b ) ] E (e atX bt ) E (e atX e bt )

e E (e

bt

atX

) e e

bt

at

a 2 2t 2

2

exp[( a b)t

a 2 2 t 2

]

2

Thus,

Y ~ N (a b, a 2 2 )

2. Distribution of the Z-score:

Let X ~ N ( , 2 ) , and Z

X

a

1

,b

What is the distribution of Z?

Solution (1), the mgf approach:

M Z (t ) e

t

1

1

1

1

t

( t ) 2 ( t )2

t2

1

M X ( t) e e 2 e 2

→ m.g.f. for N (0,1)

Thus, Z

X

~ N (0,1)

7

Now with one standard normal table, we will be able to calculate the probability of

any normal random variable:

P ( a X b) P (

P(

a

a

X

Z

b

b

)

)

Solution (2), the c.d.f. approach:

FZ ( z ) P( Z z ) P(

X

z)

P( X z ) FX ( z )

f Z ( z)

d

d

FZ ( z )

FX ( z ) f X ( z )

dz

dz

1

e

2

( z ) 2

2 2

z2

1 2

e → the p.d.f. for N(0,1)

2

Solution (3), the p.d.f. approach:

1

f X ( x)

2

e

( x )2

2 2

x z

Let the Jacobian be J:

J

dx d

( z )

dz dz

1

f z ( z ) | J | f x ( x)

e

2

Z

X

( x )2

2 2

1

e

2

X ~ N ( ,

n

2

1 z2

e

2

~ N (0,1)

3. Distribution of the Sample Mean: If X 1 , X 2 ,

2

( z )2

2 2

i .i . d .

, X n ~ N ( , 2 ) , then

).

Solution:

M X (t ) E (e tX ) E (e

t

X 1 X 2 X n

n

)

8

= E (et

*

( X1 X n )

), where t * t / n,

M X1 X n (t * ) M X1 (t * )M X n (t * )

(e

e

X ~ N ( ,

1

2

t * 2t *2

)n

t 1

t

n n 2 ( ) 2

n 2

n

e

t

1 2 2

t

2 n

2

n

)

9