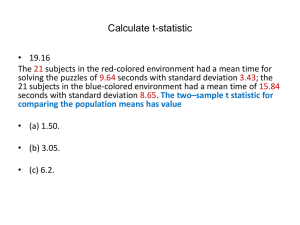

A history of large-scale testing in the US and its
