Class4


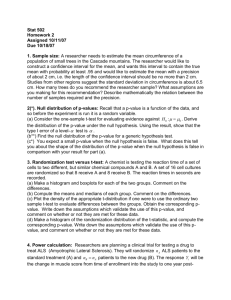

Class 4: More on t-test, p-value, type I and II errors

T-tests

There are two main types of t-test: paired and unpaired. Paired t-tests are also known as dependent t-tests; a paired t-test is a one-sample t-test applied to the differences between matched observations. Unpaired t-tests are also known as two-sample t-tests or independent-sample t-tests.

Paired tests (one-sample t-test)

This is used when observations in two samples can be matched. If $x_i$ denotes observations from sample 1 and $y_i$ denotes observations from sample 2, then the difference between each matched pair, $d_i = x_i - y_i$, can be used to test whether the mean difference between the two populations is zero. For example, Table 1 shows two matched samples.

Table 1 Two matched samples

| Sample 1 observations | Sample 2 observations | Difference |
|---|---|---|
| $x_1$ | $y_1$ | $d_1$ |
| $x_2$ | $y_2$ | $d_2$ |
| … | … | … |
| $x_n$ | $y_n$ | $d_n$ |

The t-statistic is formed using

$$\bar{d} = \frac{1}{n}\sum_{i=1}^{n} d_i, \qquad t = \frac{\bar{d}}{s_d/\sqrt{n}},$$

where $s_d$ is the standard deviation of the $d_i$ values. This statistic follows a t-distribution with $n-1$ degrees of freedom.

Unpaired tests (two-sample t-test or independent-sample t-test)

This is used when observations in two samples cannot be matched. The two samples may contain different numbers of observations. If $x_i$ denotes observations from sample 1 and $y_i$ denotes observations from sample 2, then we test whether the means of the populations from which these two samples are drawn are the same. For example, Table 2 shows observations from two samples.

Table 2 Two independent samples

| Sample 1 observations | Sample 2 observations |
|---|---|
| $x_1$ | $y_1$ |
| $x_2$ | $y_2$ |
| … | … |
| $x_{n_x}$ | … |
| | $y_{n_y}$ |

The t-statistic is formed using

$$\bar{x} = \frac{1}{n_x}\sum_{i=1}^{n_x} x_i, \qquad \bar{y} = \frac{1}{n_y}\sum_{i=1}^{n_y} y_i, \qquad t = \frac{\bar{x} - \bar{y}}{s},$$

where $s$ is a standard deviation (the standard error of $\bar{x} - \bar{y}$) computed in two different ways, depending on whether we assume the two populations have the same variance.

Equal variance

If population 1 and population 2 are assumed to have the same variance, then a pooled variance $s_p^2$ is computed and converted to the standard error $s$ as follows:

$$s_p^2 = \frac{(n_x - 1)\,s_x^2 + (n_y - 1)\,s_y^2}{n_x + n_y - 2}, \qquad s = s_p\sqrt{\frac{1}{n_x} + \frac{1}{n_y}},$$

where $s_x$ is the sample standard deviation of the first sample, and $s_y$ is the sample standard deviation of the second sample.
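The paired statistic and the pooled-variance statistic can be sketched in R as follows. This is only an illustration: the data vectors `x` and `y` are hypothetical values invented for the example, and the variable names are not from the notes. The hand-computed statistics are compared with R's built-in `t.test`.

```r
# Hypothetical data, invented purely for illustration (not from the notes).
x <- c(5.1, 4.8, 6.0, 5.5, 4.9, 5.7)
y <- c(4.6, 4.9, 5.2, 5.0, 4.4, 5.1)

# Paired test: a one-sample t-test on the differences d_i = x_i - y_i.
d <- x - y
n <- length(d)
t_paired <- mean(d) / (sd(d) / sqrt(n))
p_paired <- 2 * pt(-abs(t_paired), df = n - 1)  # two-sided p-value

# Unpaired test, equal variances: pooled variance, then standard error.
nx <- length(x)
ny <- length(y)
sp2 <- ((nx - 1) * var(x) + (ny - 1) * var(y)) / (nx + ny - 2)
s <- sqrt(sp2) * sqrt(1 / nx + 1 / ny)
t_pooled <- (mean(x) - mean(y)) / s

# Cross-check against the built-in implementation.
ref_paired <- t.test(x, y, paired = TRUE)
ref_pooled <- t.test(x, y, var.equal = TRUE)
print(c(paired = t_paired, pooled = t_pooled))
```

Treating the same two vectors as both paired and unpaired is done here only to keep the example short; in practice the study design decides which test applies.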
Under the equal-variance assumption, the t-statistic follows a t-distribution with $n_x + n_y - 2$ degrees of freedom.

Unequal variance

If population 1 and population 2 are assumed to have different variances, then $s$ is computed as follows:

$$s = \sqrt{\frac{s_x^2}{n_x} + \frac{s_y^2}{n_y}}$$

However, in this case, the degrees of freedom are computed as

$$\text{df} = \frac{\left(\dfrac{s_x^2}{n_x} + \dfrac{s_y^2}{n_y}\right)^2}{\dfrac{\left(s_x^2/n_x\right)^2}{n_x - 1} + \dfrac{\left(s_y^2/n_y\right)^2}{n_y - 1}}$$

P-value and confidence interval

Formal definition: the p-value is the probability, under the null hypothesis, of obtaining an observation at least as extreme as the one actually observed.

For example, we test the following null hypothesis and alternative hypothesis:

$H_0$: $\mu = 0$

$H_1$: $\mu \neq 0$

We have a sample of 10 observations: 0, 5, -6, -4, -2, -9, -4, -5, 2, 9

Sample mean = -1.4

Sample standard deviation = 5.46

Standardised t statistic (sample mean / standard error): -1.4/(5.46/sqrt(10)) = -0.81

There are two ways to test the null hypothesis:

(1) Find the 95% (or any other level) confidence interval. The two-sided 5% critical value of the t-distribution with 9 degrees of freedom is 2.262 (R code: `qt(0.975, 9)`). (The t-distribution is assumed to have a mean of 0, which is the null hypothesis.)

lower limit: -1.4 - 2.262*(5.46/sqrt(10)) ≈ -5.31

upper limit: -1.4 + 2.262*(5.46/sqrt(10)) ≈ 2.51

Check whether 0 is in the confidence interval. If it is, we cannot reject $H_0$ (these notes call this accepting $H_0$). Here 0 lies inside the interval, so $H_0$ is accepted.

(2) Find the p-value. Use R code: `2*pt(-1.4/(5.46/sqrt(10)), 9)` = `2*pt(-0.81, 9)`. If the p-value is greater than 0.05, accept $H_0$. If the p-value is less than 0.05, reject $H_0$ and accept $H_1$.

Use the R command `t.test` to check these results.

Type I error and Type II error

Type I error is the probability of rejecting the null hypothesis when the null hypothesis is true. Type II error is the probability of accepting the null hypothesis when the alternative hypothesis is true.

As an example, when we carry out hypothesis testing at the 95% confidence level, we have a 5% chance of rejecting the null hypothesis when the null hypothesis is true. So Type I error is just 1 – confidence level (e.g., 1 - 0.95 = 0.05).

Type II error is harder to compute.
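The worked example from the p-value and confidence interval section can be reproduced in R. The sketch below uses the ten observations given in the notes; the variable names are chosen for illustration only. It computes the confidence interval and p-value by hand and compares them with `t.test`.

```r
# The ten observations from the worked example in the notes.
obs <- c(0, 5, -6, -4, -2, -9, -4, -5, 2, 9)
n <- length(obs)
se <- sd(obs) / sqrt(n)            # standard error of the mean
t_stat <- mean(obs) / se           # standardised t statistic

# (1) 95% confidence interval for the population mean.
t_crit <- qt(0.975, df = n - 1)    # two-sided 5% critical value
ci <- mean(obs) + c(-1, 1) * t_crit * se

# (2) Two-sided p-value under H0: mu = 0.
p_value <- 2 * pt(-abs(t_stat), df = n - 1)

# Cross-check with the built-in test, as the notes suggest.
check <- t.test(obs, mu = 0)
print(c(t = t_stat, lower = ci[1], upper = ci[2], p = p_value))
```

Both routes lead to the same decision: 0 lies inside the interval exactly when the two-sided p-value exceeds 0.05.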
If the alternative hypothesis is $H_1$: $\mu \neq 0$, we do not know the exact value of $\mu$, so we cannot compute a probability. We usually have to assume a value of $\mu$. For example, if $\mu = 1$ is true, we can then compute the probability of accepting the null hypothesis $\mu = 0$.

To compute the Type II error, we first work out the acceptance range. Suppose the observation $X$ is normally distributed with standard deviation 1. Under the null hypothesis, we accept the null hypothesis if the observation is in the range (-1.96, 1.96), at the 95% confidence level. The Type II error is the probability that the observation falls inside the (-1.96, 1.96) range when the distribution actually has a mean of 1. It is easiest to compute the probability of falling outside the range first:

Probability(X > 1.96 | µ = 1) = 0.1685 (R code: `1 - pnorm(1.96, mean = 1)`)

Probability(X < -1.96 | µ = 1) = 0.0015 (R code: `pnorm(-1.96, mean = 1)`)

So, if $\mu = 1$, the probability of correctly rejecting the null hypothesis $\mu = 0$ is 0.1685 + 0.0015 = 0.17, and the probability of wrongly accepting it (the Type II error) is 1 - 0.17 = 0.83.

Different distributions

(1) Decide on a sample size (e.g., 10).

(2) Use simulation to draw multiple samples (e.g., 1000) from a normal distribution.

(3) Compute the sample mean, sample variance, and mean/(standard error) for each sample.

(4) Form sampling distributions of these statistics from the multiple samples. You should see a normal distribution for the sample mean, a (scaled) chi-square ($\chi^2$) distribution for the sample variance, and a t-distribution for mean/(standard error).

(5) Repeat with a different sample size (e.g., 20).
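The simulation steps above can be sketched in R as follows. This is one possible layout, not a prescribed solution: it assumes samples are drawn from a standard normal distribution (mean 0, standard deviation 1), and the seed and variable names are arbitrary choices made for the example.

```r
set.seed(1)       # arbitrary seed so the run is repeatable
n <- 10           # sample size
n_sims <- 1000    # number of simulated samples

# Each column is one sample drawn from a standard normal distribution.
samples <- matrix(rnorm(n * n_sims), nrow = n)

# One value of each statistic per simulated sample.
means <- colMeans(samples)
vars <- apply(samples, 2, var)
t_stats <- means / sqrt(vars / n)   # mean / standard error

# Histograms of the three sampling distributions, side by side.
op <- par(mfrow = c(1, 3))
hist(means, main = "Sample mean", xlab = "mean")
hist((n - 1) * vars, main = "Scaled sample variance",
     xlab = "(n - 1) * variance")
hist(t_stats, main = "mean / standard error", xlab = "t")
par(op)
```

With a population standard deviation of 1, `(n - 1) * vars` should resemble a chi-square distribution with `n - 1` degrees of freedom and `t_stats` a t-distribution with `n - 1` degrees of freedom. Changing `n` to 20 repeats the exercise with a different sample size.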