Active learning for information networks A Variance

advertisement

Ming Ji

Department of Computer Science

University of Illinois at Urbana-Champaign

Page 1

Information Networks: the Data

Information networks

Abstraction: graphs

Data instances connected by edges representing certain relationships

Examples

Telephone account networks linked by calls

Email user networks linked by emails

Social networks linked by friendship relations

Twitter users linked by the ``follow” relation

Webpage networks interconnected by hyperlinks in the World Wide

Web …

2

Active Learning: the Problem

Classical task: classification of the nodes in a graph

Applications: terrorist email detection, fraud detection …

Why active learning

Training classification models requires labels that are often very

expensive to obtain

Different labeled data will train different learners

Given an email network containing millions of users, we can only

sample a few users and ask experts to investigate whether they are

suspicious or not, and then use the labeled data to predict which users

are suspicious among all the users

3

Active Learning: the Problem

Problem definition of active learning

Input: data and a classification model

Output: find out which data examples (e.g., which users) should be

labeled such that the classifier could achieve higher prediction accuracy

over the unlabeled data as compared to random label selection

Goal: maximize the learner's ability given a fixed budget of labeling

effort.

4

Notations

𝒱 = {𝑣1 , … , 𝑣𝑛 }: the set of nodes

𝒚 = 𝑦1 , … , 𝑦𝑛 𝑇 : the labels of the nodes

𝑊 = 𝑤𝑖𝑗 ∈ ℝ𝑛×𝑛 , where 𝑤𝑖𝑗 is the weight on the edge

between two nodes 𝑣𝑖 and 𝑣𝑗

Goal: find out a subset of nodes ℒ ⊂ 𝒱, such that the

classifier learned from the labels of ℒ could achieve the

smallest expected prediction error on the unlabeled data 𝒰 =

𝒱\ℒ, measured by 𝑣𝑖 ∈𝒰 𝑦𝑖 − 𝑦𝑖∗ 2 , where 𝑦𝑖∗ is the label

prediction for 𝑣𝑖

5

Classification Model

Gaussian random field

1

exp

𝑍𝛽

𝑃 𝒚 =

𝐸 𝒚 =

𝑤 𝑦 − 𝑦𝑗 : energy function measuring the

2 𝑖,𝑗 𝑖𝑗 𝑖

smoothness of a label assignment 𝒚 = 𝑦1 , … , 𝑦𝑛 𝑇

1

−𝛽𝐸 𝒚

2

Label prediction

Without loss of generality, we can arrange the data points chosen to

be labeled to be the first 𝑙 instances, i.e., ℒ = {𝑣1 , … , 𝑣𝑙 }

Design constraint 𝒚∗ℒ = 𝑦1 , … , 𝑦𝑙 𝑇 , we want to predict 𝒚∗𝒰 with the

highest probability

𝐿

Let 𝐿 = 𝐷 − 𝑊 be the graph Laplacian, split 𝐿 as: 𝐿 = ( 𝑙𝑙

𝐿𝑢𝑙

Prediction: 𝒚∗𝒰 = −𝐿−1

𝑢𝑢 𝐿𝑢𝑙 𝒚ℒ

6

𝐿𝑙𝑢

)

𝐿𝑢𝑢

The Variance Minimization Criterion

Recall the goal of active learning

Analyze the distribution of the Gaussian field conditioned on

the labeled data

𝒚𝒰 ∼ 𝒩(𝒚∗𝒰 , 𝐿−1

𝑢𝑢 )

Compute the expected prediction error on the unlabeled

nodes

Maximize the learner's ability ⟺ Minimize the error

E

𝑣𝑖 ∈𝒰

𝑦𝑖 − 𝑦𝑖∗

2

= Tr Cov 𝒚𝒰

= Tr 𝐿−1

𝑢𝑢

Choose the nodes to label such that the expected error (=

total variance) is minimized

argminℒ⊂𝒱 Tr 𝐿−1

𝑢𝑢

7

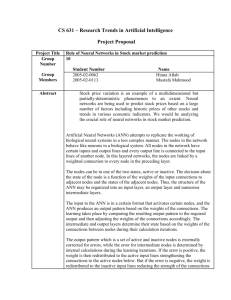

Experimental Results on the Co-author Network

# of labels

VM

ERM

Random

LSC

Uncertainty

20

50.4

47.0

41.7

30.0

39.4

50

62.2

54.7

50.7

54.3

54.1

Classification accuracy (%) comparison

8

Experimental Results on the Isolet Data Set

Classification accuracy vs. the number of labels used

9

Conclusions

Publication: Ming Ji and Jiawei Han, “A Variance Minimization

Criterion to Active Learning on Graphs”, Proc. 2012 Int. Conf.

on Artificial Intelligence and Statistics (AISTAT'12), La Palma,

Canary Islands, April 2012.

Main advantages of the novel criterion proposed

The first work to theoretically minimize the expected prediction error

of a classification model on networks/graphs

The only information used: the graph structure

Do not need to know any label information

The data points do not need to have feature representation

Future work

Test the assumptions and applicability of the criterion on real data

Study the expected error of other classification models

10