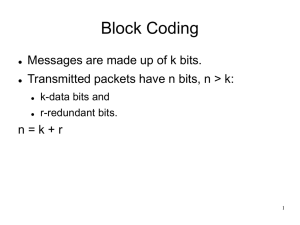

Coding Theory

Communication System
[Figure: block diagram of a communication system. Transmitter: source (voice, image, data) → source encoder → CRC encoder → channel encoder → interleaver → modulator. Channel: impairments (noise, fading). Receiver: demodulator → deinterleaver → channel decoder → CRC decoder → source decoder. The coding blocks are labeled "Error control".]

Error control coding
• Limits in communication systems
  – Bandwidth limit
  – Power limit
  – Channel impairments
    • Attenuation, distortion, interference, noise, and fading
• Error control techniques are used in digital communication systems to achieve reliable transmission under these limits.

Power limit vs. Bandwidth limit
[Figure: power limit vs. bandwidth limit]

Error control coding
• Advantage of error control coding
  – In principle:
    • Every channel has a capacity $C$.
    • If information is transmitted at a rate $R < C$, transmission with an arbitrarily small error probability is possible.
  – In practice:
    • Reduce the error rate
    • Reduce the required transmit power
    • Increase the operational range of a communication system
• Classification of error control techniques
  – Forward error correction (FEC)
  – Error detection: cyclic redundancy check (CRC)
  – Automatic repeat request (ARQ)

History
• Shannon (1948)
  – $R$: transmission rate for data
  – $C$: channel capacity
  – If $R < C$, it is possible to transfer information at error rates that can be reduced to any desired level.
• Hamming codes (1950)
  – Single error correcting
• Convolutional codes (Elias, 1956)
• BCH codes (1960), RS codes (1960)
  – Multiple error correcting
• Goppa codes (1970)
  – Generalization of BCH codes
• Algebraic geometric codes (1982)
  – Generalization of RS codes
  – Constructed over algebraic curves
• Turbo codes (1993)
• LDPC codes

Channel
• Memoryless channel
  – The probability of error is independent from one symbol to the next.
• Symmetric channel
  – $P(i \mid j) = P(j \mid i)$ for all symbol values $i$ and $j$
  – Ex) binary symmetric channel (BSC)
• Additive white Gaussian noise (AWGN) channel
• Burst error channel
• Compound (or diffuse) channel
  – The errors consist of a mixture of bursts and random errors.
• Many codes work best if errors are random.
  – An interleaver and a deinterleaver are added to spread burst errors so that they look random to the decoder.

Channel
• Random error channels
  – Deep-space channels
  – Satellite channels
  – Use random error correcting codes.
• Burst error channels: channels with memory
  – Radio channels
    • Signal fading due to multipath transmission
  – Wire and cable transmission
    • Impulse switching noise, crosstalk
  – Magnetic recording
    • Tape dropouts due to surface defects and dust particles
  – Use burst error correcting codes.

Encoding
• Block codes
  – Each block of $k$ message (information) bits is mapped to an $n$-bit codeword.
  – Redundancy: $n - k$
  – Code rate: $k / n$
• Encoding of an $[n, k]$ block code
  – Message: $m = (m_1, m_2, \ldots, m_k)$
  – Codeword: $c = (m_1, m_2, \ldots, m_k, p_1, p_2, \ldots, p_{n-k})$
  – The encoder appends $n - k$ redundant parity check symbols $(p_1, p_2, \ldots, p_{n-k})$.

Decoding
• Decoding of an $[n, k]$ block code
  – Decide what the transmitted information was.
  – Minimum distance decoding is optimum in a memoryless channel.
  – Received data: $r = (r_1, r_2, \ldots, r_n)$
  – Decoded message: $\hat{m} = (\hat{m}_1, \hat{m}_2, \ldots, \hat{m}_k)$
  – The decoder corrects errors and removes the $n - k$ redundant symbols.
  – Error vector: $e = (e_1, e_2, \ldots, e_n) = (r_1, r_2, \ldots, r_n) - (c_1, c_2, \ldots, c_n)$
• Decoding plane
  [Figure: the received vector $r$ among the codewords $c_1, \ldots, c_6$.]

Ex) Encoding and decoding procedure of a [6, 3] code
1. Generate the information (100) in the source.
2. Transmit the codeword (100101) corresponding to (100).
3. The vector (101101) is received.
4. Choose the codeword nearest to (101101), which is (100101).
5. Extract the information (100) from the codeword (100101).
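The five steps of this example can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: the codebook is copied from the example's information/codeword listing, while the names `CODEBOOK`, `hamming_distance`, `encode`, and `decode` are assumptions introduced only for this sketch. Decoding is done by exhaustive search over all eight codewords, which is practical only for very small codes.

```python
# Codebook of the [6, 3] example: 3-bit information -> 6-bit codeword.
CODEBOOK = {
    "000": "000000", "100": "100101", "010": "010011", "110": "110110",
    "001": "001111", "101": "101010", "011": "011100", "111": "111001",
}


def hamming_distance(u, v):
    """Number of positions at which two equal-length bit strings differ."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a != b for a, b in zip(u, v))


def encode(message):
    """Step 2: look up the codeword for a 3-bit message."""
    return CODEBOOK[message]


def decode(received):
    """Steps 4 and 5: pick the nearest codeword and return its message.

    Ties are broken in favor of the first codeword in CODEBOOK order.
    """
    message, codeword = min(
        CODEBOOK.items(),
        key=lambda item: hamming_distance(item[1], received),
    )
    return message, codeword, hamming_distance(codeword, received)


sent = encode("100")        # step 2: "100101"
received = "101101"         # step 3: the third bit was flipped in the channel
message, codeword, distance = decode(received)
print(message, codeword, distance)  # 100 100101 1
```

Because the received vector differs from (100101) in a single position and from every other codeword in at least two, the search returns the transmitted message.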
| Information | Codeword | Distance from (101101) |
|---|---|---|
| 000 | 000000 | 4 |
| 100 | 100101 | 1 |
| 010 | 010011 | 5 |
| 110 | 110110 | 4 |
| 001 | 001111 | 2 |
| 101 | 101010 | 3 |
| 011 | 011100 | 3 |
| 111 | 111001 | 2 |

Parameters of block codes
• Hamming distance $d_H(u, v)$
  – Number of positions at which the symbols of two vectors differ
  – Ex) $u = (1\,0\,1\,0\,0\,0)$, $v = (1\,1\,1\,0\,1\,0)$: $d_H(u, v) = 2$
• Hamming weight $w_H(u)$
  – Number of nonzero elements in a vector
  – Ex) $w_H(u) = 2$, $w_H(v) = 4$
• Relation between Hamming distance and Hamming weight
  – Binary code: $d_H(u, v) = w_H(u + v)$, where '+' means bitwise exclusive OR
  – Nonbinary code: $d_H(u, v) = w_H(u - v)$
• Minimum distance $d$
  – $d = \min d_H(c_i, c_j)$ over all $c_i, c_j \in C$ with $c_i \neq c_j$
  – Any two distinct codewords differ in at least $d$ places.
  – An $[n, k]$ code with minimum distance $d$ is written as an $[n, k, d]$ code.
• Error detection and correction capability
  – Let $s$ be the number of errors to be detected and $t$ the number of errors to be corrected ($s \ge t$).
  – Then $d \ge s + t + 1$.
• Error correction capability
  – Any block code correcting $t$ or fewer errors satisfies $d \ge 2t + 1$.
  – Thus $t = \lfloor (d - 1) / 2 \rfloor$.
• Ex)
  – $d = 3, 4 \Rightarrow t = 1$: single error correcting (SEC) codes
  – $d = 5, 6 \Rightarrow t = 2$: double error correcting (DEC) codes
  – $d = 7, 8 \Rightarrow t = 3$: triple error correcting (TEC) codes
• Coding sphere
  [Figure: coding spheres around the codewords $c_i$ and $c_j$, with the radii $t$ and $s$ and the distance $d$ marked.]

Code performance and coding gain
• Criteria for performance in the coded system
  – BER: bit error rate in the information after decoding, $P_b$
  – SNR: signal-to-noise ratio, $E_b / N_0$
    • $E_b$ = signal energy per bit
    • $N_0$ = one-sided noise power spectral density in the channel
  – Coding gain (for a given BER): $G = (E_b / N_0)_{\text{without FEC}} - (E_b / N_0)_{\text{with FEC}}$ [dB]
• At a given BER $P_b$, the coded system saves $G$ dB of transmission power relative to the uncoded system.

Minimum distance decoding
• Maximum-likelihood decoding (MLD)
  – $\hat{m}$: estimated message after decoding
  – $\hat{c}$: estimated codeword in the decoder
  – $m \to c \to \hat{c} \to \hat{m}$
• Assume that $c$ was transmitted.
  – A decoding error occurs if $\hat{c} \neq c$.
• Conditional error probability of the decoder, given $r$: $P(E \mid r) = P(\hat{c} \neq c \mid r)$
• Error probability of the decoder: $P(E) = \sum_{r} P(E \mid r)\, P(r)$, where $P(r)$ is independent of the decoding rule
• Optimum decoding rule: minimize the error probability $P(E)$
  – This is achieved by minimizing $P(E \mid r)$ for every $r$, which is equivalent to maximizing $P(\hat{c} = c \mid r)$.
• The optimum decoding rule is
  – $\arg\max_{c} P(c \mid r)$: maximum a posteriori probability (MAP)
  – $\arg\max_{c} P(r \mid c)$: maximum likelihood (ML)
• Bayes' rule: $P(c \mid r) = \dfrac{P(r \mid c)\, P(c)}{P(r)}$
  – If all codewords $c$ are equiprobable, MAP = ML.

Problems
• Basic problems in coding
  – Find good codes
  – Find their decoding algorithms
  – Implement the decoding algorithms
• Cost for forward error correction schemes
  – With an $[n, k]$ code, the number of transmitted symbols per message block increases from $k$ to $n$.
    • The channel bandwidth increases by a factor of $n / k$, or the message transmission rate decreases by a factor of $k / n$.

Classification
• Classification of FEC
  – Block codes vs. tree codes
    • Block codes: Hamming, BCH, RS, Golay, Goppa, algebraic geometric codes (AGC)
    • Tree codes: convolutional codes
  – Linear codes vs. nonlinear codes
    • Linear codes: Hamming, BCH, RS, Golay, Goppa, AGC, etc.
    • Nonlinear codes: Nordstrom-Robinson, Kerdock, Preparata, etc.
  – Systematic codes vs. nonsystematic codes
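The relation between the MAP and ML decoding rules stated earlier can be checked numerically. The sketch below is illustrative only and rests on assumptions that are not in the slides: a binary symmetric channel with crossover probability `p = 0.1`, a two-codeword repetition code {000, 111}, and the helper names `likelihood`, `ml_decode`, and `map_decode`. On such a channel $P(r \mid c) = p^{d}(1 - p)^{n - d}$, where $d$ is the Hamming distance between $r$ and $c$, so for $p < 1/2$ the ML rule coincides with minimum distance decoding.

```python
def likelihood(received, codeword, p):
    """P(r | c) on a BSC with crossover probability p (assumed channel model)."""
    d = sum(a != b for a, b in zip(received, codeword))
    return p ** d * (1 - p) ** (len(received) - d)


def ml_decode(received, codewords, p):
    """Maximum likelihood: argmax over c of P(r | c)."""
    return max(codewords, key=lambda c: likelihood(received, c, p))


def map_decode(received, priors, p):
    """Maximum a posteriori: argmax over c of P(r | c) P(c).

    P(r) is the same for every candidate c, so it is dropped from Bayes' rule.
    """
    return max(priors, key=lambda c: likelihood(received, c, p) * priors[c])


p = 0.1
r = "110"
uniform = {"000": 0.5, "111": 0.5}    # equiprobable codewords
skewed = {"000": 0.99, "111": 0.01}   # hypothetical non-uniform prior

print(ml_decode(r, list(uniform), p))  # 111
print(map_decode(r, uniform, p))       # 111 (MAP = ML for equiprobable c)
print(map_decode(r, skewed, p))        # 000 (the prior outweighs the likelihood)
```

With equiprobable codewords both rules pick the nearest codeword, 111. With the skewed prior, the MAP rule prefers 000 even though it is farther from the received vector, which is why the equivalence MAP = ML requires equiprobable codewords.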