New Methods For Participatory Community

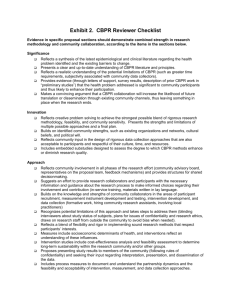

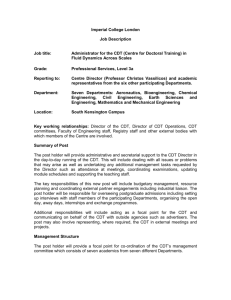


NEW METHODS FOR PARTICIPATORY COMMUNITY-BASED INTERVENTION RESEARCH – HOW CAN WE DEVELOP A SYSTEMATIC UNDERSTANDING OF CHANGE THAT MATTERS?

Bruce D. Rapkin, PhD
Professor of Epidemiology and Population Health
Division of Community Collaboration and Implementation Science
Albert Einstein College of Medicine

Conclusions
• Participatory models of intervention research are superior to top-down models.
• Scientific rigor does not equal the randomized controlled trial.
• Communities of shared interest must form around Learning Systems, with successive studies leading to refinement of key distinctions among interventions, types of populations, and settings.
• Comprehensive dynamic trials are intended to support the learning system, by inventing and evolving interventions in place, drawing upon multiple sources of information gained during the conduct of an intervention.

Why do we need alternatives to the Randomized Clinical Trial model?
• The community argument
• The business practices argument
• The statistical argument
• The scientific argument
• The psychological argument

Community-Academic Relationships Imposed by the Medical Model
• Funders resist changing interventions promoted as national standards (despite absence of external validity)
• Communities must figure out how to fit themselves to the program – the program dictates the terms
• What communities know about prevention or engaging clients is only relevant if it pertains to the manual
• A tightly scripted protocol does not respond to collaborators’ circumstances
• Danger that lessons learned will be framed as “what the community did wrong to make the program fail”
• Given an unwillingness to consider the limits of research theories and methods, local problems will remain unsolved

Is this any way to run a business?
• Businesses, including clinics, examine their practices continually to seek improvements
• Research protocols are designed to resist or restrict change over the course of a study, to ensure “standardization”
• Lessons learned must be “ignored” until the next study
• Valuing fidelity over quality impedes progress to optimal intervention approaches

What is a “Treatment Effect”?
• The RCT is designed to determine an estimate of a population “treatment effect”
• Is the “treatment effect” a useful construct?
  – How is the effect determined by the initial composition of the sample?
  – Is information beyond aggregate change error variance or meaningful trajectories?
  – How does the control condition determine the effect? Is this ignorable?

[Figure: two panels, each with a vertical axis from -10 to 10 and a horizontal axis of TIME 1, TIME 2, TIME 3]

Does a Successful RCT Mean that Faithful Replication of an Intervention will Ensure Outcomes?
• Not necessarily because…
  – Original RCT findings do not generalize to a “universe” – too dependent on context
  – Mechanics of interventions have different implications, depending on setting norms
  – Even the meaning & impact of core elements may be transformed by local ecology
• We don’t know because of the lack of attention to external validity!
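The question raised under “What is a ‘Treatment Effect’?”, whether information beyond aggregate change is error variance or meaningful trajectories, can be illustrated with a small sketch. The scores below are invented for illustration and are not data from this presentation; the subgroup names and the helper `mean_trajectory` are likewise assumptions. The sketch shows one way a pooled average can stay flat while two subgroups move in opposite directions.

```python
from statistics import mean

# Hypothetical scores at TIME 1, TIME 2, TIME 3 for six participants.
# These numbers are made up for illustration only.
improvers = [[0, 4, 8], [0, 3, 7], [0, 5, 9]]
decliners = [[0, -4, -8], [0, -3, -7], [0, -5, -9]]


def mean_trajectory(group):
    """Average score at each time point across the participants in a group."""
    return [mean(scores) for scores in zip(*group)]


pooled = mean_trajectory(improvers + decliners)

print("pooled:   ", pooled)                      # [0, 0, 0]
print("improvers:", mean_trajectory(improvers))  # [0, 4, 8]
print("decliners:", mean_trajectory(decliners))  # [0, -4, -8]
```

In this constructed case the aggregate change is zero at every time point, yet no individual participant is unchanged. Treating the spread around the pooled mean as error would discard the two trajectories entirely.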
Desirable Features for Study Designs
• Must take into account diversity inherent in the determinants of health and risk behavior
• Must recognize that different people can respond to the same intervention in different ways, or in the same way for different reasons
• Must accommodate diversity and personal preferences
• Must avoid ethical dilemmas associated with substandard treatment of some participants
• Must be responsive to evolving understanding of how to best administer an intervention, and to local innovations and ideas
• Must contribute to community capacity building and empowerment at every step of the research process

The Research Paradigm We Need…
• A Learning System
• A Community Science = A “WIKI”
• Who has input?
  – True integration of multiple methods and perspectives
• Who makes decisions?
  – The peer review process
  – The community review process
• Progress toward adequate intervention theory and practice can be quantified

We have (some of) the building blocks
But bridges are always built somewhere

Comprehensive Dynamic Trials Designs
• Comprehensive => use complete information from multiple sources to understand what is happening in a trial
• Dynamic => built-in mechanisms for feedback to respond to different needs and changing circumstances
• Trials => Systematic, replicable activities that yield high quality information useful for testing causal hypotheses

The Multi-way Decision Matrix: $O_{ipc \mid t}$
• What distinct outcomes are associated with different intervention approaches?
• How do characteristics of target population affect outcomes?
• How are outcomes affected by history, resources, and contexts?
• $O_{ipc \mid t}$ is the conditional probability of an outcome, for this type of intervention (i) with this population (p) in this context (c), given what is known at the present time (t).
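As a rough illustration of the decision matrix described above, the sketch below keeps a running estimate of the probability of a positive outcome for each combination of intervention, population, and context, based on whatever has been observed so far. This is only one simple reading of the idea (an observed proportion per cell), not the method proposed in the presentation. The class name `DecisionMatrix`, its methods, and the example labels are all hypothetical.

```python
from collections import defaultdict


class DecisionMatrix:
    """Running estimate of P(positive outcome | intervention, population, context).

    Hypothetical illustration: each cell stores simple counts, and the
    estimate reflects only the observations recorded up to the present time.
    """

    def __init__(self):
        # cell key -> [number of positive outcomes, total observations]
        self._cells = defaultdict(lambda: [0, 0])

    def record(self, intervention, population, context, positive):
        """Add one observed outcome to the matching cell."""
        cell = self._cells[(intervention, population, context)]
        cell[0] += int(positive)
        cell[1] += 1

    def estimate(self, intervention, population, context):
        """Observed proportion so far, or None if the cell has no data yet."""
        key = (intervention, population, context)
        positives, total = self._cells.get(key, (0, 0))
        return positives / total if total else None


# Made-up labels and outcomes, for illustration only.
matrix = DecisionMatrix()
for outcome in (True, False, True, True):
    matrix.record("tool kit A", "population X", "setting 1", outcome)

print(matrix.estimate("tool kit A", "population X", "setting 1"))  # 0.75
print(matrix.estimate("tool kit A", "population X", "setting 2"))  # None
```

Empty cells return `None` rather than a guess, which makes gaps in the evidence visible: those are the combinations of intervention, population, and context that later studies would need to fill.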
Three CDT Designs
• Community Empowerment to enable communities to create new interventions
• Quality Improvement to adapt existing manuals and procedures to new contexts
• Titration-Mastery to optimize algorithms for delivering a continuum of services
Rapkin & Trickett (2005)

CDT Community Empowerment Design
• Closest to the “orthodox” model of CBPR
  – No pre-conceived “intervention”
  – No need for externally-imposed explanation of the problem or theory of change
• Common process of planning
• Common criteria for evaluating implementation across multiple settings and/or multiple “epochs”

CDT Quality Improvement Design
• Starting point
  – An evidence-based intervention
  – An established standard of practice
  – An innovation ready for diffusion
• Alternative to the traditional “top-down” model of intervention dissemination
• Begin with a baseline intervention, then systematically evolve and optimize it

CDT Titration to Mastery Design
• Suited to practice settings committed to the client/patient/participant
• Does NOT ask about intervention effects
• Rather, asks what combination of interventions will get closest to 100% positive outcome most efficiently
• Begins with a tailoring algorithm to systematically apply a tool kit, which is then evolved and optimized

Ingredients of a Comprehensive Dynamic Trial
Just add community and stir…

How does the CDT Feedback Loop Work?

What Types of Data Are Needed?
• Outcome Indicators
• Fidelity
• Mechanistic Measures
• Intervention Processes
• Structural Impediments
• Adverse Events
• Propitious Events
• Context Measures

The Deliberation Process
• Key stakeholders should be involved in deliberation
• Research systematically provides data to stakeholders to make decisions about how to modify and optimize interventions
• Timing is based upon the study design
• The nature and extent of changes should be measurable, and expressed in terms of intervention components and procedures
• Deliberation process should be bounded by theory

Ethical Principles – Lounsbury et al.
• Transparency
• Shared Authority
• Specific Relevance
• Rights of Research Participants
  – Self-Determination
  – Third-Party Rights
  – Employees’ Rights
• Privacy
• Sound Business Practice
• Shared Ownership

A Model for Maximizing Partnership Success: Key Considerations for Planning, Development, and Self-Assessment – Weiss et al.
[Diagram components: Composition, Structure and Functions; Characteristics of the Group Process; Environmental Factors; Intermediate Indicators of Partnership Effectiveness; Development & Implementation of Programs & Activities; Outcome Indicators of Partnership Effectiveness]

A CDT-QI Model to Disseminate an Evidence-Based Approach to Promote Breast Cancer Screening
The Bronx ACCESS Project
The Albert Einstein Cancer Center
Program Project Application Under Development

Exchange of Information Among ACCESS Projects & Research Cores
[Diagram: arrows labeled a through o connect the following projects and cores]
• Project 1. Reach and Effectiveness of Adapted Strategies for BrCa Screening
• Project 2. Community Involvement in Intervention Adaptation
• Project 3. Academic Consultation to Build Organizational Capacity, Competence and Commitment
• Data Acquisition & Geospatial Core
• Lay Health Advisors Core
• Intervention Core
• Statistical Analysis & Modeling Core

Schema for the Bronx ACCESS Plus Comprehensive Dynamic Trial
CBPR Repeated Over Multiple Cycles to Optimize Performance and Outcomes in Different Settings
[Diagram labels: Contexts; Program Implementation; Intervention Components; Performance; Deliberation Processes; Research Staff Support; Agency Leadership & Staff; Community Stakeholders; Screening Outcomes]

Changing the Rules to Conduct Research in the Real World
• How to incorporate local input in an evidence-based paradigm?
  – Solution: Fidelity gets a vote, but not a veto
• How to deal with cultural and risk specificity of mammography screening interventions?
  – Solution: disseminate a “suite” of theoretically equivalent strategies as a tool kit
• How to address agencies’ many priorities?
  – Solution: Encompass these as “community targeted strategies” for outreach & retention

How Do You Get Science Out of All That Data?

In Any One CDT …
• Analyses are intrinsic to intervention
• The program should get better as it goes along
• Experimental effects may be examined in context
• Particularly interested in accounting for diverse trajectories and patterns of responses
• Ability to steer toward optimal intervention components
• Case study of community problem solving
• Able to examine setting impacts, sustainability

The Real Payoff – Science as a Community Process

The Epistemology of CDT
• A Community Science = A “WIKI”
• A learning system
• Who has input?
  – True integration of multiple methods
• Who makes decisions?
  – The peer review process
• Progress toward theory development can be quantified
• Theory can be (provisionally) completed

The Multi-way Decision Matrix: $O_{ipc \mid t}$
• What distinct outcomes are associated with different intervention approaches?
• How do characteristics of target population affect outcomes?
• How are outcomes affected by history, resources, and contexts?
• $O_{ipc \mid t}$ is the conditional probability of an outcome, for this type of intervention (i) with this population (p) in this context (c), given what is known at the present time (t).

Wiring Up the Decision Matrix: Systems Dynamics? Neural Networks? Genetic Algorithms?
[Diagram: eight numbered nodes linked by arrows. (1) An intervention (Tx) (2) experienced by different people (3) in different contexts (4) may lead to different outcomes. Arrows indicate probabilistic pathways $O_{ipc \mid t}$ at time T.]

Scientific Enterprise Needed to Support this Paradigm
• Evaluation for funding will consider soundness of researchers’ relationships with communities
• Multiple sources of data will gain importance
• Emphasis on practice-based evidence
• Case studies of planning, decision making and community involvement will be highly important
• Awareness that results depend on context, so a single trial will not receive undue weight
• Investigators will work in tandem to create service suites and knowledge bases
• Meta-analysis will grow more important, as a way of integrating multiple types of studies

Where’s the Science?
• In community process.
• In understanding how researchers’ roles and activities impact CBPR.
• In the evolution and refinement of intervention implementation strategies, through dynamic exchange and reflection.
• It emerges out of the synthesis of CBPR findings.

Conclusions
• Participatory models of intervention research are superior to top-down models.
• Scientific rigor does not equal the randomized controlled trial.
• Communities of shared interest must form around Learning Systems, with successive studies leading to refinement of key distinctions among interventions, types of populations, and settings.
• Comprehensive dynamic trials are intended to support the learning system, by inventing and evolving interventions in place, drawing upon multiple sources of information gained during the conduct of an intervention.