Chapter 12 - Distributed Database Management Systems

advertisement

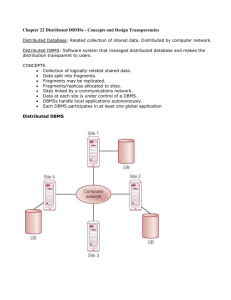

Database Systems: Design, Implementation, and Management Tenth Edition Chapter 12 Distributed Database Management Systems The Evolution of Distributed Database Management Systems • Distributed database management system (DDBMS) – Governs storage and processing of logically related data over interconnected computer systems – Both data and processing functions are distributed among several sites • 1970s - Centralized database required that corporate data be stored in a single central site – Usually a mainframe computer – Data access via dumb terminals Database Systems, 10th Edition 2 The Evolution of Distributed Database Management Systems • Wasn’t responsive to need for faster response times and quick access to information • Slow process to approve and develop new application Database Systems, 10th Edition 3 The Evolution of Distributed Database Management Systems • Social and technological changes led to change • Businesses went global; competition was now in cyberspace not next door • Customer demands and market needs required Webbased services • rapid development of low-cost, smart mobile devices increased the demand for complex and fast networks to interconnect them – cloud based services • Multiple types of data (voice, image, video, music) which are geographically distributed must be managed Database Systems, 10th Edition 4 The Evolution of Distributed Database Management Systems • As a result, businesses had to react quickly to remain competitive. This required: • Rapid ad hoc data access became crucial in the quick-response decision making environment • Distributed data access to support geographically dispersed business units Database Systems, 10th Edition 5 The Evolution of Distributed Database Management Systems • The following factors strongly influenced the shape of the response • Acceptance of the Internet as the platform for data access and distribution • The mobile wireless revolution • Created high demand for data access • Use of “applications as a service” • Company data stored on central servers but applications are deployed “in the cloud” • Increased focus on mobile BI • Use of social networks increases need for on-the-spot decision making Database Systems, 10th Edition 6 The Evolution of Distributed Database Management Systems • The distributed database is especially desirable because centralized database management is subject to problems such as: • Performance degradation as remote locations and distances increase • High cost to maintain and operate • Reliability issues with a single site and need for data replication • Scalability problems due to a single location (space, power consumption, etc) • Organizational rigidity imposed by the database – might not be able to support flexibility and agility required by modern global organizations Database Systems, 10th Edition 7 8 Distributed Processing and Distributed Databases • Distributed processing – Database’s logical processing is shared among two or more physically independent sites connected through a network 9 Distributed Processing and Distributed Databases • Distributed database – Stores logically related database over two or more physically independent sites – Database composed of database fragments • Located at different sites and can be replicated among various sites 10 Distributed Processing and Distributed Databases • Distributed processing does not require a distributed database, but a distributed database requires distributed processing • Distributed processing may be based on a single database located on a single computer • For the management of distributed data to occur, copies or parts of the database processing functions must be distributed to all data storage sites • Both distributed processing and distributed databases require a network of interconnected components 11 Characteristics of Distributed Management Systems • Application interface to interact with the end user, application programs and other DBMSs within the distributed database • Validation to analyze data requests for syntax correctness • Transformation to decompose complex requests into atomic data request components • Query optimization to find the best access strategy • Mapping to determine the data location of local and remote fragments • I/O interface to read or write data from or to permannet local storage 12 Characteristics of Distributed Management Systems (cont’d.) • Formatting to prepare the data for presentation to the end user or to an application • Security to provide data privacy at both local and remote databases • Backup and recovery to ensure the availability and recoverability of the database in case of failure • DB administration features for the DBA • Concurrency control to manage simultaneous data access and to ensure data consistency across database fragments in the DDBMS • Transaction management to ensure the data move from one consistent state to another 13 Characteristics of Distributed Management Systems (cont’d.) • Must perform all the functions of centralized DBMS • Must handle all necessary functions imposed by distribution of data and processing – Must perform these additional functions transparently to the end user 14 • The single logical database consists of two database fragments A1 and A2 located at sites 1 and 2 • All users “see” and query the database as if it were a local database, • The fact that there are fragments is completely transparent to the user 15 DDBMS Components • Must include (at least) the following components: – Computer workstations/remote devices – Network hardware and software that reside in each device or w/s to interact and exchange data – Communications media that carry data from one site to another 16 DDBMS Components (cont’d.) – Transaction processor (a.k.a application processor, transaction manager) • Software component found in each computer that receives and processes the application’s remote and local data requests – Data processor or data manager • Software component residing on each computer that stores and retrieves data located at the site • May be a centralized DBMS 17 DDBMS Components (cont’d.) • The communication among the TPs and DPs is made possible through protocols which determine how the DDBMS will – Interface with the network to transport data and commands between the DPs and TPs – Synchronize all data received from DPs and route retrieved data to appropriate TPs – Ensure common DB functions in a distributed system e.g., data security, transaction management, concurrency control, data partitioning and synchronization and data backup and recovery 18 19 Levels of Data and Process Distribution • Current systems classified by how process distribution and data distribution are supported 20 Single-Site Processing, Single-Site Data • All processing is done on single CPU or host computer (mainframe, midrange, or PC) • All data are stored on host computer’s local disk • Processing cannot be done on end user’s side of system • Typical of most mainframe and midrange computer DBMSs • DBMS is located on host computer, which is accessed by dumb terminals connected to it – The TP and DP functions are embedded within the DBMS on the host computer – DBMS usually runs under a time-sharing, multitasking OS 21 22 Multiple-Site Processing, Single-Site Data • Multiple processes run on different computers sharing single data repository • MPSD scenario requires network file server running conventional applications – Accessed through LAN • Many multiuser accounting applications, running under personal computer network 23 Multiple-Site Processing, Single-Site Data • The TP on each w/s acts only as a redirector to route all network data requests to the file server • The end user sees the fileserver as just another hard drive • The end user must make a direct reference to the file server to access remote data – All record- and file-locking are performed at the end-user location • All data selection, search and update take place at the w/s – Entire files travel through the network for processing at the w/s which increases network traffic, slows response time and increases communication costs 24 Multiple-Site Processing, Single-Site Data • Suppose the file server stores a CUSTOMER table containing 100,000 data rows, 50 of which have balances greater than $1,000 • The SQL command SELECT * FROM CUSTOMER WHERE CUST_BALANCE > 1000 causes all 100,000 rows to travel to end user w/s • A variation of MSP/SSD is client/server architecture – All DB processing is done at the server site 25 Database Systems, 10th Edition 26 Multiple-Site Processing, Multiple-Site Data • Fully distributed database management system • Support for multiple data processors and transaction processors at multiple sites • Classified as either homogeneous or heterogeneous • Homogeneous DDBMSs – Integrate multiple instances of the same DBMS over a network Database Systems, 10th Edition 27 Multiple-Site Processing, Multiple-Site Data (cont’d.) • Heterogeneous DDBMSs – Integrate different types of centralized DBMSs over a network but all support the same data model • Fully heterogeneous DDBMSs – Support different DBMSs – Support different data models (relational, hierarchical, or network) – Different computer systems, such as mainframes and microcomputers 28 29 Distributed Database Transparency Features • Allow end user to feel like database’s only user • Features include: – – – – – Distribution transparency Transaction transparency Failure transparency Performance transparency Heterogeneity transparency 30 Distributed Database Transparency Features • Distribution Transparency – Allows management of physically dispersed database as if centralized – The user does not need to know • That the table’s rows and columns are split vertically or horizontally and stored among multiple sites • That the data are geographically dispersed among multiple sites • That the data are replicated among multiple sites 31 Distributed Database Transparency Features • Transaction Transparency – Allows a transaction to update data at more than one network site – Ensures that the transaction will be either entirely completed or aborted in order to maintain database integrity • Failure Transparency – Ensures that the system will continue to operate in the event of a node or network failure – Functions that were lost will be picked up by another network node 32 Distributed Database Transparency Features • Performance Transparency – Allows the system to perform as if it were a centralized DBMS • No performance degradation due to use of a network or platform differences • System will find the most cost effective path to access remote data • System will increase performance capacity without affecting overall performance when adding more TP or DP nodes • Heterogeneity Transparency – Allows the integration of several different local DBMSs under a common global schema • DDBMS translates the data requests from the global schema to the local DBMS schema 33 Distribution Transparency • Allows management of physically dispersed database as if centralized • Three levels of distribution transparency: – Fragmentation transparency • End user does not need to know that a DB is partitioned – SELECT * FROM EMPLOYEE WHERE… – Location transparency • Must specify the database fragment names but not the location – SELECT * FROM E1 WHERE … UNION – Local mapping transparency • Must specify fragment name and location – SELECT * FROM E1 “NODE” NY WHERE … UNION 34 35 Distribution Transparency • Supported by a distributed data dictionary (DDD) or distributed data catalog (DDC) – Contains the description of the entire database as seen by the DBA – It is distributed and replicated at the network nodes – The database description, known as the distributed global schema, is the common database schema used by local TPs to translate user requests into subqueries that will be processed by different DPs 36 Transaction Transparency • Ensures database transactions will maintain distributed database’s integrity and consistency • Ensures transaction completed only when all database sites involved complete their part • Distributed database systems require complex mechanisms to manage transactions and ensure consistency and integrity 37 Distributed Requests and Distributed Transactions • Remote request: single SQL statement accesses data from single remote database – The SQL statement can reference data only at one remote site 38 Distributed Requests and Distributed Transactions • Remote transaction: composed of several requests, accesses data at single remote site – – – – Updates PRODUCT and INVOICE tables at site B Remote transaction is sent to B and executed there Transaction can reference only one remote DP Each SQL statement can reference only one remote DP and the entire transaction can reference and be executed at only one remote DP 39 Distributed Requests and Distributed Transactions • Distributed transaction: requests data from several different remote sites on network – Each single request can reference only one local or remote DP site – The transaction as a whole can reference multiple DP sites because each request can reference a different site 40 Distributed Requests and Distributed Transactions • Distributed request: single SQL statement references data at several DP sites – A DB can be partitioned into several fragments – Fragmentation transparency: reference one or more of those fragments with only one request 41 Distributed Requests and Distributed Transactions • A single request can reference a physically partitioned table – CUSTOMER table is divided into two fragments C1 and C2 located at sites B and C 42 Distributed Concurrency Control • Concurrency control is important in distributed environment – Multisite multiple-process operations create inconsistencies and deadlocked transactions • Suppose a transaction updates data at three DP sites – The first two DP sites complete the transaction and commit the data at each local DP – The third DP cannot commit the transaction but the first two sites cannot be rolled back since they were committed. This results in an inconsistent database 43 44 Two-Phase Commit Protocol • Distributed databases make it possible for transaction to access data at several sites • 2PC guarantees that if a portion of a transaction can not be committed, all changes made at the other sites will be undone – Final COMMIT is issued after all sites have committed their parts of transaction – Requires that each DP’s transaction log entry be written before database fragment updated 45 Two-Phase Commit Protocol • DO-UNDO-REDO protocol with write-ahead protocol – DO performs the operation and records the “before” and “after” values in the transaction log – UNDO reverses an operation using the log entries written by the DO portion of the sequence – REDO redoes an operation, using the log entries written by the DO portion • Requires a write-ahead protocol where the log entry is written to permanent storage before the actual operation takes place • 2PC defines the operations between the coordinator (transaction initiator) and one or more subordinates 46 Two-Phase Commit Protocol • Phase 1: preparation – The coordinator sends a PREPARE TO COMMIT message to all subordinates • The subordinates receive the message, write the transaction log using the write-ahead protocol and send an acknowledgement message (YES/PREPARED TO COMMIT or NO/NOT PREAPRED ) to the coordinator • The coordinator make sure all nodes are ready to commit or it aborts the action 47 Two-Phase Commit Protocol • Phase 2 The Final COMMIT – The coordinator broadcasts a COMMIT to all subordinates and waits for replies – Each subordinate receives the COMMIT and then updates the database using the DO protocol – The subordinates replay with a COMMITTED or NOT COMMITTED message to the coordinator – If one or more subordinates do not commit, the coordinator sends an ABORT message and the subordinates UNDO all changes 48 Performance and Failure Transparency • Performance transparency – Allows a DDBMS to perform as if it were a centralized database; no performance degradation • Failure transparency – System will continue to operate in the case of a node or network failure • Query optimization – Minimize the total cost associated with the execution of a request (CPU, communication, I/O) 49 Performance and Failure Transparency • In a DDBMS, transactions are distributed among multiple nodes. Determining what data are being used becomes more complex – Data distribution: determine which fragment to access, create multiple data requests to the chosen DPs, combine the responses and present the data to the application – Data Replication: data may be replicated at several different sites making the access problem even more complex as all copies must be consistent • Replica transparency - DDBMS’s ability to hide multiple copies of data from the user 50 Performance and Failure Transparency • Network and node availability – The response time associated with remote sites cannot be easily predetermined because some nodes finish their part of the query in less time than others and network path performance varies because of bandwidth and traffic loads – The DDBMS must consider • Network latency – Delay imposed by the amount of time required for a data packet to make a round trip from point A to point B • Network partitioning – Delay imposed when nodes become suddenly 51 unavailable due to a network failure Distributed Database Design • Data fragmentation – How to partition database into fragments • Data replication – Which fragments to replicate • Data allocation – Where to locate those fragments and replicas Database Systems, 10th Edition 52 Data Fragmentation • Breaks single object into two or more segments or fragments • Each fragment can be stored at any site over computer network • Information stored in distributed data catalog (DDC) – Accessed by TP to process user requests 53 Data Fragmentation Strategies • Horizontal fragmentation – Division of a relation into subsets (fragments) of tuples (rows) – Each fragment is stored at a different node and each fragment has unique rows • Vertical fragmentation – Division of a relation into attribute (column) subsets – Each fragment is stored at a different node and each fragment has unique columns with the exception of the key column which is common to all fragments • Mixed fragmentation – Combination of horizontal and vertical strategies 54 Data Fragmentation Strategies • Horizontal fragmentation based on CUS_STATE 55 Data Fragmentation Strategies • Vertical fragmentation based on use by service and collections departments • Both require the same key column and have the same number of rows 56 Data Fragmentation Strategies • Mixed fragmentation based on location as well as use by service and collections departments 57 Data Replication • Data copies stored at multiple sites served by computer network • Fragment copies stored at several sites to serve specific information requirements – Enhance data availability and response time – Reduce communication and total query costs • Mutual consistency rule: all copies of data fragments must be identical 58 Data Replication • Styles of replication – Push replication: after a data update, the originating DP node sends the changes to the replica nodes to ensure that data are immediately updated • Decreases data availability due to the latency involved in ensuring data consistemcy at all nodes – Pull replication: after a data update, the originating DP sends “messages” to the replica nodes to notify them of a change. The replica nodes decide when to apply the updates to their local fragment • Could have temporary data inconsistencies 59 Data Replication • Fully replicated database – Stores multiple copies of each database fragment at multiple sites – Can be impractical due to amount of overhead • Partially replicated database – Stores multiple copies of some database fragments at multiple sites • Unreplicated database – Stores each database fragment at single site – No duplicate database fragments • Data replication is influenced by several factors – Database size – Usage frequency – Cost: performance, overhead 60 Data Allocation • Deciding where to locate data – Allocation is closely related to the way a database is fragmented or divided – Centralized data allocation • Entire database is stored at one site – Partitioned data allocation • Database is divided into several disjointed parts (fragments) and stored at several sites – Replicated data allocation • Copies of one or more database fragments are stored at several sites 61 The CAP Theorem • Initials CAP stand for three desirable properties – Consistency – Availability – Partition tolerance (similar to failure transparency) • When dealing with highly distributed systems, some companies forfeit consistency and isolation to achieve higher availability • This has led to a new type of DDBMS in which data are basically available, soft state, eventually consistent (BASE) – Data changes are not immediate but propagate slowly through the system until all replicas are eventually 62 consistent Database Systems, 10th Edition 63 C. J. Date’s Twelve Commandments for Distributed Databases 64