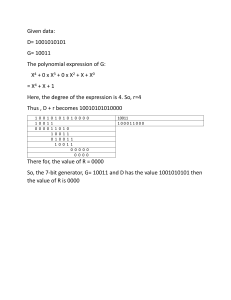

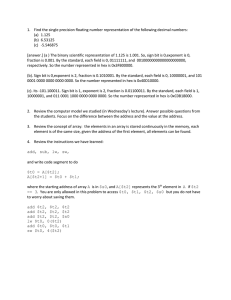

Name: 洪理川
ID: B113040056

Chapter 3: Arithmetic for Computers

3.1 Introduction

This chapter covers the representation of real numbers, arithmetic algorithms, the hardware that implements those algorithms, and the implications of all this for instruction sets. For example: how are fractions represented in a computer, and how does hardware actually multiply or divide numbers?

3.2 Addition and Subtraction

Both addition and subtraction are carried out with an adder. Addition proceeds bit by bit from right to left, with carries passed to the next bit on the left. Subtraction is performed by adding the two's complement of the second operand.

Two principles of overflow:

1. In addition, overflow cannot occur when the two operands have different signs.
2. In subtraction, overflow cannot occur when the two operands have the same sign.

(Table: overflow conditions for addition and subtraction.)

3.3 Multiplication

(Example figure: a multiplicand, a multiplier, and their product.)

Binary multiplication works the same way as pencil-and-paper decimal multiplication. The example shows that multiplying an n-bit multiplicand by an m-bit multiplier can produce a product up to n + m bits long. A more optimized multiplication hardware design appears later in this section.
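As a rough illustration of the pencil-and-paper method described above, the following Python sketch multiplies two unsigned values using only shifts and adds. The function name `shift_add_multiply` and the `bits` parameter are assumptions made for this example; the code models the idea in software and is not a description of any particular hardware design.

```python
def shift_add_multiply(multiplicand: int, multiplier: int, bits: int = 4) -> int:
    """Multiply two unsigned `bits`-wide integers using shifts and adds."""
    mask = (1 << bits) - 1
    if not (0 <= multiplicand <= mask and 0 <= multiplier <= mask):
        raise ValueError("operands must fit in the given unsigned width")
    product = 0
    for _ in range(bits):
        if multiplier & 1:        # lowest multiplier bit is 1: add the multiplicand
            product += multiplicand
        multiplicand <<= 1        # shift the multiplicand left by one bit
        multiplier >>= 1          # shift the multiplier right by one bit
    return product


print(format(shift_add_multiply(0b0010, 0b0011), "08b"))  # 00000110
print(shift_add_multiply(0b1111, 0b1111).bit_length())    # 8
```

The second call shows the product-width point: two 4-bit operands can need all 4 + 4 = 8 bits for the product.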
(Figures: first version of the multiplication hardware; refined version of the multiplication hardware; the first multiplication algorithm, using the first version of the hardware; fast multiplication hardware.)

Trace of the first multiplication algorithm for multiplicand 0010 and multiplier 0011:

| Iteration | Step | Multiplier | Multiplicand | Product |
|---|---|---|---|---|
| 0 | Initial values | 0011 | 0000 0010 | 0000 0000 |
| 1 | 1a: 1 -> Prod = Prod + Mcand | 0011 | 0000 0010 | 0000 0010 |
| | 2: Shift left Multiplicand | 0011 | 0000 0100 | 0000 0010 |
| | 3: Shift right Multiplier | 0001 | 0000 0100 | 0000 0010 |
| 2 | 1a: 1 -> Prod = Prod + Mcand | 0001 | 0000 0100 | 0000 0110 |
| | 2: Shift left Multiplicand | 0001 | 0000 1000 | 0000 0110 |
| | 3: Shift right Multiplier | 0000 | 0000 1000 | 0000 0110 |
| 3 | 1: 0 -> No operation | 0000 | 0000 1000 | 0000 0110 |
| | 2: Shift left Multiplicand | 0000 | 0001 0000 | 0000 0110 |
| | 3: Shift right Multiplier | 0000 | 0001 0000 | 0000 0110 |
| 4 | 1: 0 -> No operation | 0000 | 0001 0000 | 0000 0110 |
| | 2: Shift left Multiplicand | 0000 | 0010 0000 | 0000 0110 |
| | 3: Shift right Multiplier | 0000 | 0010 0000 | 0000 0110 |

Signed Multiplication: Convert the multiplier and multiplicand to positive numbers and remember the original signs. Multiply the positive numbers, then negate the product only if the original signs disagree.

Faster Multiplication: Carry-save adders can further improve the speed of multiplication. Such a design is also easy to pipeline, so it can support many multiplies simultaneously.

Multiply in RISC-V: There are four multiply instructions: multiply (mul), multiply high (mulh), multiply high unsigned (mulhu), and multiply high signed-unsigned (mulhsu).

- To get the lower 32 bits of the 64-bit product -> mul
- To get the upper 32 bits of the 64-bit product (both operands signed) -> mulh
- To get the upper 32 bits of the 64-bit product (both operands unsigned) -> mulhu
- To get the upper 32 bits of the 64-bit product (one operand signed, one unsigned) -> mulhsu

3.4 Division

Dividend = Quotient × Divisor + Remainder

(Figures: first version of the division hardware; improved version of the division hardware; the division algorithm for the first version of the hardware.)
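A minimal software sketch of a restoring division algorithm of this kind is given below, assuming unsigned operands and a divisor register that starts in the upper half of a double-width value. The function name `restoring_divide` and the `bits` parameter are illustrative assumptions. The result satisfies Dividend = Quotient × Divisor + Remainder.

```python
def restoring_divide(dividend: int, divisor: int, bits: int = 4):
    """Divide unsigned `bits`-wide integers; returns (quotient, remainder)."""
    if divisor == 0:
        raise ZeroDivisionError("divisor must be nonzero")
    mask = (1 << bits) - 1
    if not (0 <= dividend <= mask and 0 < divisor <= mask):
        raise ValueError("operands must fit in the given unsigned width")
    remainder = dividend          # remainder register starts as the dividend
    div = divisor << bits         # divisor starts in the upper half
    quotient = 0
    for _ in range(bits + 1):     # bits + 1 iterations
        remainder -= div          # step 1: Rem = Rem - Div
        if remainder >= 0:
            quotient = (quotient << 1) | 1   # step 2a: shift in a 1
        else:
            remainder += div                 # step 2b: restore the remainder,
            quotient <<= 1                   #          shift in a 0
        div >>= 1                 # step 3: shift the divisor right
    return quotient, remainder


print(restoring_divide(0b0111, 0b0010))  # (3, 1)
```

For 7 ÷ 2 this gives quotient 3 (0011) and remainder 1 (0001), and 3 × 2 + 1 = 7.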
Trace of the division algorithm for dividend 0111 and divisor 0010:

| Iteration | Step | Quotient | Divisor | Remainder |
|---|---|---|---|---|
| 0 | Initial values | 0000 | 0010 0000 | 0000 0111 |
| 1 | 1: Rem = Rem - Div | 0000 | 0010 0000 | 1110 0111 |
| | 2b: Rem < 0 -> +Div, SLL Q, Q0 = 0 | 0000 | 0010 0000 | 0000 0111 |
| | 3: Shift Div right | 0000 | 0001 0000 | 0000 0111 |
| 2 | 1: Rem = Rem - Div | 0000 | 0001 0000 | 1111 0111 |
| | 2b: Rem < 0 -> +Div, SLL Q, Q0 = 0 | 0000 | 0001 0000 | 0000 0111 |
| | 3: Shift Div right | 0000 | 0000 1000 | 0000 0111 |
| 3 | 1: Rem = Rem - Div | 0000 | 0000 1000 | 1111 1111 |
| | 2b: Rem < 0 -> +Div, SLL Q, Q0 = 0 | 0000 | 0000 1000 | 0000 0111 |
| | 3: Shift Div right | 0000 | 0000 0100 | 0000 0111 |
| 4 | 1: Rem = Rem - Div | 0000 | 0000 0100 | 0000 0011 |
| | 2a: Rem ≥ 0 -> SLL Q, Q0 = 1 | 0001 | 0000 0100 | 0000 0011 |
| | 3: Shift Div right | 0001 | 0000 0010 | 0000 0011 |
| 5 | 1: Rem = Rem - Div | 0001 | 0000 0010 | 0000 0001 |
| | 2a: Rem ≥ 0 -> SLL Q, Q0 = 1 | 0011 | 0000 0010 | 0000 0001 |
| | 3: Shift Div right | 0011 | 0000 0001 | 0000 0001 |

Signed Division: Negate the quotient if the signs of the operands differ, and make the sign of a nonzero remainder match that of the dividend.

Faster Division: The SRT division technique tries to predict several quotient bits per step, using a table lookup based on the upper bits of the dividend and remainder.

Divide in RISC-V: divide (div), divide unsigned (divu), remainder (rem), and remainder unsigned (remu).

3.5 Floating Point

Floating-point numbers are numbers with fractional parts, called real numbers in mathematics, for example $3.14159265\ldots_{\text{ten}}$ ($\pi$) and $2.71828\ldots_{\text{ten}}$ ($e$). In binary, the normalized form is:

$1.xxxxxxxx_{\text{two}} \times 2^{yyyy}$

(Figure: floating-point representation.)

- Overflow (floating-point): A positive exponent becomes too large to fit in the exponent field.
- Underflow (floating-point): A negative exponent becomes too large in magnitude to fit in the exponent field.
- Single precision: A floating-point value represented in a single 32-bit word.
- Double precision: A floating-point value represented in two 32-bit words (64 bits).

IEEE 754 encoding of floating-point numbers:
| Single precision: Exponent | Single precision: Fraction | Double precision: Exponent | Double precision: Fraction | Object represented |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 |
| 0 | Nonzero | 0 | Nonzero | ± denormalized number |
| 1-254 | Anything | 1-2046 | Anything | ± floating-point number |
| 255 | 0 | 2047 | 0 | ± infinity |
| 255 | Nonzero | 2047 | Nonzero | NaN (Not a Number) |

Floating-Point Addition

Step 1. Align the binary points by shifting the significand of the number that has the smaller exponent until its exponent matches the larger one.
Step 2. Add the significands.
Step 3. If the sum is not in normalized scientific notation, adjust it (normalize).
Step 4. Round the number to the precision we want.

(Figures: block diagram for floating-point addition; floating-point addition algorithm; floating-point multiplication algorithm.)

Floating-Point Multiplication

Step 1. Calculate the new exponent by adding the exponents of the operands (with biased exponents, subtract the bias once so it is not counted twice).
Step 2. Multiply the significands.
Step 3. If the product is not in normalized scientific notation, adjust it (normalize).
Step 4. Round the number to the precision we want.
Step 5. Set the sign from the original operands: positive if both signs are the same, negative otherwise.

Floating-Point Instructions in RISC-V

RISC-V supports the single-precision and double-precision formats with the instructions below:

| Operation | Single precision | Double precision |
|---|---|---|
| Addition | fadd.s | fadd.d |
| Subtraction | fsub.s | fsub.d |
| Multiplication | fmul.s | fmul.d |
| Division | fdiv.s | fdiv.d |
| Square root | fsqrt.s | fsqrt.d |
| Equals | feq.s | feq.d |
| Less-than | flt.s | flt.d |
| Less-than-or-equals | fle.s | fle.d |

Accurate Arithmetic

To make floating-point results more accurate, two extra bits, guard and round, are kept on the right during intermediate calculations.

- Guard -> The first of the two extra bits kept on the right.
- Round -> The second of the two extra bits; also the name of the method that makes the intermediate result fit the format, typically by finding the nearest representable number.
- Sticky bit -> A bit used in rounding in addition to guard and round; it is set whenever there are nonzero bits to the right of the round bit.

Parallelism and Computer Arithmetic: Subword Parallelism

Nowadays almost every device has a graphical display, and each colour component is commonly represented with 8 bits. Audio samples need more bits than that. Because these data types are narrower than a machine word and differ in width, one solution is to build a 128-bit adder.
By partitioning the carry chains within such an adder, the processor can then operate in parallel on short vectors of narrower operands, for example sixteen 8-bit or eight 16-bit values.

Real Stuff: Streaming SIMD Extensions and Advanced Vector Extensions in x86

The original MMX (MultiMedia eXtension) provided instructions for short vectors of integers. SSE (Streaming SIMD Extension) provided instructions for short vectors of single-precision floating-point numbers. In 2001, Intel added 144 instructions as part of SSE2, including support for double-precision floating-point registers and operations. Rather than holding only one single-precision or double-precision number in a register, Intel allows multiple floating-point operands to be packed into a single 128-bit SSE2 register. In 2011, Intel doubled the width of the registers again, now called YMM, with Advanced Vector Extensions (AVX). In 2015, it doubled the registers again to 512 bits, now called ZMM, with AVX-512.

Fallacies and Pitfalls

Fallacy: Just as a left shift instruction can replace an integer multiply by a power of 2, a right shift is the same as an integer division by a power of 2.
- This holds for unsigned integers but not for signed integers. For example, an arithmetic right shift of -5 by 2 gives -2, whereas -5 / 4 truncated toward zero gives -1.

Pitfall: Floating-point addition is not associative.
- Because floating-point numbers are approximations of real numbers and computer arithmetic has limited precision, (a + b) + c is not always equal to a + (b + c).

Fallacy: Parallel execution strategies that work for integer data types also work for floating-point data types.
- Floating-point sums calculated in a different order can give different answers, so a parallel version that reorders the additions may not reproduce the sequential result.

Fallacy: Only theoretical mathematicians care about floating-point accuracy.
- Business people, engineers, and even ordinary users care about floating-point accuracy.
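The shift fallacy and the associativity pitfall above can be checked with a short Python sketch. The helper `trunc_div` is a hypothetical function written for this example to model division that truncates toward zero (Python's own `//` rounds toward negative infinity). Python floats are double precision, so the values below are chosen only to make the lost addend visible.

```python
def trunc_div(x: int, d: int) -> int:
    """Integer division that truncates toward zero (illustrative helper)."""
    q = abs(x) // abs(d)
    return q if (x < 0) == (d < 0) else -q


# Right shift vs. division by a power of 2
print(5 >> 2, trunc_div(5, 4))     # 1 1   (non-negative value: same result)
print(-5 >> 2, trunc_div(-5, 4))   # -2 -1 (negative value: results differ)

# Floating-point addition is not associative
a, b, c = 1.5e38, 1.0, -1.5e38
left = (c + a) + b    # c + a is exactly 0.0, so the result is 1.0
right = c + (a + b)   # b is too small to change a, so the result is 0.0
print(left, right)    # 1.0 0.0
```

The same reordering effect is why a parallel reduction over floating-point data can give a slightly different sum than a sequential loop.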