Learning Bayesian Networks

Richard E. Neapolitan

Northeastern Illinois University

Chicago, Illinois

In memory of my dad, a difficult but loving father, who raised me well.

Contents

Preface

Part I Basics

1 Introduction to Bayesian Networks
  1.1 Basics of Probability Theory
    1.1.1 Probability Functions and Spaces
    1.1.2 Conditional Probability and Independence
    1.1.3 Bayes’ Theorem
    1.1.4 Random Variables and Joint Probability Distributions
  1.2 Bayesian Inference
    1.2.1 Random Variables and Probabilities in Bayesian Applications
    1.2.2 A Definition of Random Variables and Joint Probability Distributions for Bayesian Inference
    1.2.3 A Classical Example of Bayesian Inference
  1.3 Large Instances / Bayesian Networks
    1.3.1 The Difficulties Inherent in Large Instances
    1.3.2 The Markov Condition
    1.3.3 Bayesian Networks
    1.3.4 A Large Bayesian Network
  1.4 Creating Bayesian Networks Using Causal Edges
    1.4.1 Ascertaining Causal Influences Using Manipulation
    1.4.2 Causation and the Markov Condition

2 More DAG/Probability Relationships
  2.1 Entailed Conditional Independencies
    2.1.1 Examples of Entailed Conditional Independencies
    2.1.2 d-Separation
    2.1.3 Finding d-Separations
  2.2 Markov Equivalence
  2.3 Entailing Dependencies with a DAG
    2.3.1 Faithfulness
    2.3.2 Embedded Faithfulness
  2.4 Minimality
  2.5 Markov Blankets and Boundaries
  2.6 More on Causal DAGs
    2.6.1 The Causal Minimality Assumption
    2.6.2 The Causal Faithfulness Assumption
    2.6.3 The Causal Embedded Faithfulness Assumption

Part II Inference

3 Inference: Discrete Variables
  3.1 Examples of Inference
  3.2 Pearl’s Message-Passing Algorithm
    3.2.1 Inference in Trees
    3.2.2 Inference in Singly-Connected Networks
    3.2.3 Inference in Multiply-Connected Networks
    3.2.4 Complexity of the Algorithm
  3.3 The Noisy OR-Gate Model
    3.3.1 The Model
    3.3.2 Doing Inference With the Model
    3.3.3 Further Models
  3.4 Other Algorithms that Employ the DAG
  3.5 The SPI Algorithm
    3.5.1 The Optimal Factoring Problem
    3.5.2 Application to Probabilistic Inference
  3.6 Complexity of Inference
  3.7 Relationship to Human Reasoning
    3.7.1 The Causal Network Model
    3.7.2 Studies Testing the Causal Network Model

4 More Inference Algorithms
  4.1 Continuous Variable Inference
    4.1.1 The Normal Distribution
    4.1.2 An Example Concerning Continuous Variables
    4.1.3 An Algorithm for Continuous Variables
  4.2 Approximate Inference
    4.2.1 A Brief Review of Sampling
    4.2.2 Logic Sampling
    4.2.3 Likelihood Weighting
  4.3 Abductive Inference
    4.3.1 Abductive Inference in Bayesian Networks
    4.3.2 A Best-First Search Algorithm for Abductive Inference

5 Influence Diagrams
  5.1 Decision Trees
    5.1.1 Simple Examples
    5.1.2 Probabilities, Time, and Risk Attitudes
    5.1.3 Solving Decision Trees
    5.1.4 More Examples
  5.2 Influence Diagrams
    5.2.1 Representing with Influence Diagrams
    5.2.2 Solving Influence Diagrams
  5.3 Dynamic Networks
    5.3.1 Dynamic Bayesian Networks
    5.3.2 Dynamic Influence Diagrams

Part III Learning

6 Parameter Learning: Binary Variables
  6.1 Learning a Single Parameter
    6.1.1 Probability Distributions of Relative Frequencies
    6.1.2 Learning a Relative Frequency
  6.2 More on the Beta Density Function
    6.2.1 Non-integral Values of a and b
    6.2.2 Assessing the Values of a and b
    6.2.3 Why the Beta Density Function?
  6.3 Computing a Probability Interval
  6.4 Learning Parameters in a Bayesian Network
    6.4.1 Urn Examples
    6.4.2 Augmented Bayesian Networks
    6.4.3 Learning Using an Augmented Bayesian Network
    6.4.4 A Problem with Updating; Using an Equivalent Sample Size
  6.5 Learning with Missing Data Items
    6.5.1 Data Items Missing at Random
    6.5.2 Data Items Missing Not at Random
  6.6 Variances in Computed Relative Frequencies
    6.6.1 A Simple Variance Determination
    6.6.2 The Variance and Equivalent Sample Size
    6.6.3 Computing Variances in Larger Networks
    6.6.4 When Do Variances Become Large?

7 More Parameter Learning
  7.1 Multinomial Variables
    7.1.1 Learning a Single Parameter
    7.1.2 More on the Dirichlet Density Function
    7.1.3 Computing Probability Intervals and Regions
    7.1.4 Learning Parameters in a Bayesian Network
    7.1.5 Learning with Missing Data Items
    7.1.6 Variances in Computed Relative Frequencies
  7.2 Continuous Variables
    7.2.1 Normally Distributed Variable
    7.2.2 Multivariate Normally Distributed Variables
    7.2.3 Gaussian Bayesian Networks

8 Bayesian Structure Learning
  8.1 Learning Structure: Discrete Variables
    8.1.1 Schema for Learning Structure
    8.1.2 Procedure for Learning Structure
    8.1.3 Learning From a Mixture of Observational and Experimental Data
    8.1.4 Complexity of Structure Learning
  8.2 Model Averaging
  8.3 Learning Structure with Missing Data
    8.3.1 Monte Carlo Methods
    8.3.2 Large-Sample Approximations
  8.4 Probabilistic Model Selection
    8.4.1 Probabilistic Models
    8.4.2 The Model Selection Problem
    8.4.3 Using the Bayesian Scoring Criterion for Model Selection
  8.5 Hidden Variable DAG Models
    8.5.1 Models Containing More Conditional Independencies than DAG Models
    8.5.2 Models Containing the Same Conditional Independencies as DAG Models
    8.5.3 Dimension of Hidden Variable DAG Models
    8.5.4 Number of Models and Hidden Variables
    8.5.5 Efficient Model Scoring
  8.6 Learning Structure: Continuous Variables
    8.6.1 The Density Function of D
    8.6.2 The Density function of D Given a DAG pattern
  8.7 Learning Dynamic Bayesian Networks

9 Approximate Bayesian Structure Learning
  9.1 Approximate Model Selection
    9.1.1 Algorithms that Search over DAGs
    9.1.2 Algorithms that Search over DAG Patterns
    9.1.3 An Algorithm Assuming Missing Data or Hidden Variables
  9.2 Approximate Model Averaging
    9.2.1 A Model Averaging Example
    9.2.2 Approximate Model Averaging Using MCMC

10 Constraint-Based Learning
  10.1 Algorithms Assuming Faithfulness
    10.1.1 Simple Examples
    10.1.2 Algorithms for Determining DAG patterns
    10.1.3 Determining if a Set Admits a Faithful DAG Representation
    10.1.4 Application to Probability
  10.2 Assuming Only Embedded Faithfulness
    10.2.1 Inducing Chains
    10.2.2 A Basic Algorithm
    10.2.3 Application to Probability
    10.2.4 Application to Learning Causal Influences¹
  10.3 Obtaining the d-separations
    10.3.1 Discrete Bayesian Networks
    10.3.2 Gaussian Bayesian Networks
  10.4 Relationship to Human Reasoning
    10.4.1 Background Theory
    10.4.2 A Statistical Notion of Causality

11 More Structure Learning
  11.1 Comparing the Methods
    11.1.1 A Simple Example
    11.1.2 Learning College Attendance Influences
    11.1.3 Conclusions
  11.2 Data Compression Scoring Criteria
  11.3 Parallel Learning of Bayesian Networks
  11.4 Examples
    11.4.1 Structure Learning
    11.4.2 Inferring Causal Relationships

Part IV Applications

12 Applications
  12.1 Applications Based on Bayesian Networks
  12.2 Beyond Bayesian networks

Bibliography

Index

¹ The relationships in the examples in this section are largely fictitious.

Preface

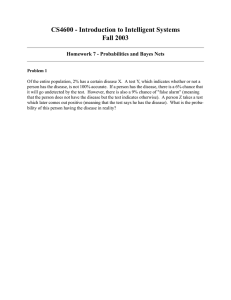

Bayesian networks are graphical structures for representing the probabilistic

relationships among a large number of variables and doing probabilistic inference

with those variables. During the 1980’s, a good deal of related research was done

on developing Bayesian networks (belief networks, causal networks, influence

diagrams), algorithms for performing inference with them, and applications that

used them. However, the work was scattered throughout research articles. My

purpose in writing the 1990 text Probabilistic Reasoning in Expert Systems was

to unify this research and establish a textbook and reference for the field which

has come to be known as ‘Bayesian networks.’ The 1990’s saw the emergence

of excellent algorithms for learning Bayesian networks from data. However,

by 2000 there still seemed to be no accessible source for ‘learning Bayesian

networks.’ Similar to my purpose a decade ago, the goal of this text is to

provide such a source.

In order to make this text a complete introduction to Bayesian networks,

I discuss methods for doing inference in Bayesian networks and influence diagrams. However, there is no effort to be exhaustive in this discussion. For

example, I give the details of only two algorithms for exact inference with discrete variables, namely Pearl’s message passing algorithm and D’Ambrosio and

Li’s symbolic probabilistic inference algorithm. It may seem odd that I present

Pearl’s algorithm, since it is one of the oldest. I have two reasons for doing

this: 1) Pearl’s algorithm corresponds to a model of human causal reasoning,

which is discussed in this text; and 2) Pearl’s algorithm extends readily to an

algorithm for doing inference with continuous variables, which is also discussed

in this text.

The content of the text is as follows. Chapters 1 and 2 cover basics. Specifically, Chapter 1 provides an introduction to Bayesian networks; and Chapter 2

discusses further relationships between DAGs and probability distributions such

as d-separation, the faithfulness condition, and the minimality condition. Chapters 3-5 concern inference. Chapter 3 covers Pearl’s message-passing algorithm,

D’Ambrosio and Li’s symbolic probabilistic inference, and the relationship of

Pearl’s algorithm to human causal reasoning. Chapter 4 shows an algorithm for

doing inference with continuous variables, an approximate inference algorithm,

and finally an algorithm for abductive inference (finding the most probable

explanation). Chapter 5 discusses influence diagrams, which are Bayesian networks augmented with decision nodes and a value node, and dynamic Bayesian


networks and influence diagrams. Chapters 6-11 address learning. Chapters

6 and 7 concern parameter learning. Since the notation for these learning algorithms is somewhat arduous, I introduce the algorithms by discussing binary

variables in Chapter 6. I then generalize to multinomial variables in Chapter 7.

Furthermore, in Chapter 7 I discuss learning parameters when the variables are

continuous. Chapters 8-11 concern structure learning. Chapter 8 shows

the Bayesian method for learning structure in the cases of both discrete and

continuous variables, and Chapter 9 covers approximate versions of that method.

Chapter 10 discusses the constraint-based method for learning structure.

Chapter 11 compares the Bayesian and constraint-based

methods, and it presents several real-world examples of learning Bayesian networks. The text ends by referencing applications of Bayesian networks in Chapter 12.

This is a text on learning Bayesian networks; it is not a text on artificial

intelligence, expert systems, or decision analysis. However, since these are fields

in which Bayesian networks find application, they emerge frequently throughout

the text. Indeed, I have used the manuscript for this text in my course on expert

systems at Northeastern Illinois University. In one semester, I have found that

I can cover the core of the following chapters: 1, 2, 3, 5, 6, 7, 8, and 9.

I would like to thank those researchers who have provided valuable corrections, comments, and dialog concerning the material in this text. They include Bruce D’Ambrosio, David Maxwell Chickering, Gregory Cooper, Tom

Dean, Carl Entemann, John Erickson, Finn Jensen, Clark Glymour, Piotr

Gmytrasiewicz, David Heckerman, Xia Jiang, James Kenevan, Henry Kyburg,

Kathryn Blackmond Laskey, Don Labudde, David Madigan, Christopher Meek,

Paul-André Monney, Scott Morris, Peter Norvig, Judea Pearl, Richard Scheines,

Marco Valtorta, Alex Wolpert, and Sandy Zabell. I thank Sue Coyle for helping

me draw the cartoon containing the robots.

Part I

Basics

Chapter 1

Introduction to Bayesian Networks

Consider the situation where one feature of an entity has a direct influence on

another feature of that entity. For example, the presence or absence of a disease

in a human being has a direct influence on whether a test for that disease turns

out positive or negative. For decades, Bayes’ theorem has been used to perform

probabilistic inference in this situation. In the current example, we would use

that theorem to compute the conditional probability of an individual having a

disease when a test for the disease came back positive. Consider next the situation where several features are related through inference chains. For example,

whether or not an individual has a history of smoking has a direct influence

both on whether or not that individual has bronchitis and on whether or not

that individual has lung cancer. In turn, the presence or absence of each of these

diseases has a direct influence on whether or not the individual experiences fatigue. Also, the presence or absence of lung cancer has a direct influence on

whether or not a chest X-ray is positive. In this situation, we would want to do

probabilistic inference involving features that are not related via a direct influence. We would want to determine, for example, the conditional probabilities

both of bronchitis and of lung cancer when it is known an individual smokes, is

fatigued, and has a positive chest X-ray. Yet bronchitis has no direct influence

(indeed no influence at all) on whether a chest X-ray is positive. Therefore,

these conditional probabilities cannot be computed using a simple application

of Bayes’ theorem. There is a straightforward algorithm for computing them,

but the probability values it requires are not ordinarily accessible; furthermore,

the algorithm has exponential space and time complexity.

Bayesian networks were developed to address these difficulties. By exploiting

conditional independencies entailed by influence chains, we are able to represent

a large instance in a Bayesian network using little space, and we are often able

to perform probabilistic inference among the features in an acceptable amount

of time. In addition, the graphical nature of Bayesian networks gives us a much

better intuitive grasp of the relationships among the features.

[Figure 1.1: A Bayesian network. The DAG has edges H → B, H → L, B → F, L → F, and L → C, and the following probabilities are stored at the nodes.]

H: P(h1) = .2
B: P(b1|h1) = .25, P(b1|h2) = .05
L: P(l1|h1) = .003, P(l1|h2) = .00005
F: P(f1|b1,l1) = .75, P(f1|b1,l2) = .10, P(f1|b2,l1) = .5, P(f1|b2,l2) = .05
C: P(c1|l1) = .6, P(c1|l2) = .02

Figure 1.1 shows a Bayesian network representing the probabilistic relationships among the features just discussed. The values of the features in that

network represent the following:

Feature   Value   When the Feature Takes this Value
H         h1      There is a history of smoking
          h2      There is no history of smoking
B         b1      Bronchitis is present
          b2      Bronchitis is absent
L         l1      Lung cancer is present
          l2      Lung cancer is absent
F         f1      Fatigue is present
          f2      Fatigue is absent
C         c1      Chest X-ray is positive
          c2      Chest X-ray is negative

This Bayesian network is discussed in Example 1.32 in Section 1.3.3 after we

provide the theory of Bayesian networks. Presently, we only use it to illustrate

the nature and use of Bayesian networks. First, in this Bayesian network (called

a causal network) the edges represent direct influences. For example, there is

an edge from H to L because a history of smoking has a direct influence on the

presence of lung cancer, and there is an edge from L to C because the presence

of lung cancer has a direct influence on the result of a chest X-ray. There is no


edge from H to C because a history of smoking has an influence on the result

of a chest X-ray only through its influence on the presence of lung cancer. One

way to construct Bayesian networks is by creating edges that represent direct

influences as done here; however, there are other ways. Second, the probabilities

in the network are the conditional probabilities of the values of each feature given

every combination of values of the feature’s parents in the network; for roots,

which have no parents, they are prior probabilities. Third, probabilistic inference among

the features can be accomplished using the Bayesian network. For example, we

can compute the conditional probabilities both of bronchitis and of lung cancer

when it is known an individual smokes, is fatigued, and has a positive chest

X-ray. This Bayesian network is discussed again in Chapter 3 when we develop

algorithms that do this inference.
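To make the third point concrete, here is a minimal Python sketch that computes these two conditional probabilities from the numbers in Figure 1.1. It is not one of the inference algorithms developed in Chapter 3. It is the straightforward method that sums over every combination of values, and it assumes that the joint distribution is the product of the conditional distributions stored in the network (the property established in Section 1.3). The names `pick`, `joint`, and `conditional` are ours, chosen only for illustration.

```python
from itertools import product

# Probability that each feature takes its first value, given its parents (Figure 1.1).
P_H1 = 0.2
P_B1 = {"h1": 0.25, "h2": 0.05}
P_L1 = {"h1": 0.003, "h2": 0.00005}
P_F1 = {("b1", "l1"): 0.75, ("b1", "l2"): 0.10,
        ("b2", "l1"): 0.5, ("b2", "l2"): 0.05}
P_C1 = {"l1": 0.6, "l2": 0.02}

NAMES = ("H", "B", "L", "F", "C")
VALUES = {"H": ("h1", "h2"), "B": ("b1", "b2"), "L": ("l1", "l2"),
          "F": ("f1", "f2"), "C": ("c1", "c2")}


def pick(p_first, value):
    """Return p_first for a feature's first value (e.g. 'h1'), else 1 - p_first."""
    return p_first if value.endswith("1") else 1.0 - p_first


def joint(h, b, l, f, c):
    """Joint probability, ASSUMED to be the product of the network's conditionals."""
    return (pick(P_H1, h) * pick(P_B1[h], b) * pick(P_L1[h], l)
            * pick(P_F1[(b, l)], f) * pick(P_C1[l], c))


def conditional(target, evidence):
    """P(target | evidence), summing the joint over all 2**5 = 32 assignments."""
    numerator = denominator = 0.0
    for assignment in product(*(VALUES[name] for name in NAMES)):
        world = dict(zip(NAMES, assignment))
        if all(world[k] == v for k, v in evidence.items()):
            p = joint(*assignment)
            denominator += p
            if all(world[k] == v for k, v in target.items()):
                numerator += p
    return numerator / denominator


# Smoker, fatigued, positive chest X-ray.
evidence = {"H": "h1", "F": "f1", "C": "c1"}
print("P(b1 | h1, f1, c1) =", conditional({"B": "b1"}, evidence))
print("P(l1 | h1, f1, c1) =", conditional({"L": "l1"}, evidence))
```

Note that the network stores only 11 numbers, whereas a table of the full joint distribution over five binary features would need 2^5 − 1 = 31. The loop above still visits all 32 assignments, which is why this brute-force approach does not scale to large instances.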

The focus of this text is on learning Bayesian networks from data. For

example, given we had values of the five features just discussed (smoking history, bronchitis, lung cancer, fatigue, and chest X-ray) for a large number of

individuals, the learning algorithms we develop might construct the Bayesian

network in Figure 1.1. However, to make this text a complete introduction to Bayesian

networks, it also includes a brief overview of methods for doing inference in

Bayesian networks and using Bayesian networks to make decisions. Chapters 1

and 2 cover properties of Bayesian networks which we need in order to discuss

both inference and learning. Chapters 3-5 concern methods for doing inference

in Bayesian networks. Methods for learning Bayesian networks from data are

discussed in Chapters 6-11. A number of successful expert systems (systems

which make the judgements of an expert) have been developed which are based

on Bayesian networks. Furthermore, Bayesian networks have been used to learn

causal influences from data. Chapter 12 references some of these real-world applications. To see the usefulness of Bayesian networks, you may wish to review

that chapter before proceeding.

This chapter introduces Bayesian networks. Section 1.1 reviews basic concepts in probability. Next, Section 1.2 discusses Bayesian inference and illustrates the classical way of using Bayes’ theorem when there are only two features. Section 1.3 shows the problem in representing large instances and introduces Bayesian networks as a solution to this problem. Finally, Section 1.4 discusses how

Bayesian networks can often be constructed using causal edges.

1.1 Basics of Probability Theory

The concept of probability has a rich and diversified history that includes many

different philosophical approaches. Notable among these approaches are the

notions of probability as a ratio, as a relative frequency, and as a degree of belief.

Next we review the probability calculus and, via examples, illustrate these three

approaches and how they are related.


1.1.1 Probability Functions and Spaces

In 1933 A.N. Kolmogorov developed the set-theoretic definition of probability,

which serves as a mathematical foundation for all applications of probability.

We start by providing that definition.

Probability theory has to do with experiments that have a set of distinct

outcomes. Examples of such experiments include drawing the top card from a

deck of 52 cards with the 52 outcomes being the 52 different faces of the cards;

flipping a two-sided coin with the two outcomes being ‘heads’ and ‘tails’; picking

a person from a population and determining whether the person is a smoker

with the two outcomes being ‘smoker’ and ‘non-smoker’; picking a person from

a population and determining whether the person has lung cancer with the

two outcomes being ‘having lung cancer’ and ‘not having lung cancer’; after

identifying 5 levels of serum calcium, picking a person from a population and

determining the individual’s serum calcium level with the 5 outcomes being

each of the 5 levels; picking a person from a population and determining the

individual’s serum calcium level with the infinite number of outcomes being

the continuum of possible calcium levels. The last two experiments illustrate

two points. First, the experiment is not well-defined until we identify a set of

outcomes. The same act (picking a person and measuring that person’s serum

calcium level) can be associated with many different experiments, depending on

what we consider a distinct outcome. Second, the set of outcomes can be infinite.

Once an experiment is well-defined, the collection of all outcomes is called the

sample space. Mathematically, a sample space is a set and the outcomes are

the elements of the set. To keep this review simple, we restrict ourselves to finite

sample spaces in what follows. (You should consult a mathematical probability

text such as [Ash, 1970] for a discussion of infinite sample spaces.) In the case

of a finite sample space, every subset of the sample space is called an event. A

subset containing exactly one element is called an elementary event. Once a

sample space is identified, a probability function is defined as follows:

Definition 1.1 Suppose we have a sample space Ω containing n distinct elements. That is,

$$\Omega = \{e_1, e_2, \ldots, e_n\}.$$

A function that assigns a real number P(E) to each event E ⊆ Ω is called

a probability function on the set of subsets of Ω if it satisfies the following

conditions:

1. $0 \le P(\{e_i\}) \le 1$ for $1 \le i \le n$.

2. $P(\{e_1\}) + P(\{e_2\}) + \ldots + P(\{e_n\}) = 1$.

3. For each event $E = \{e_{i_1}, e_{i_2}, \ldots, e_{i_k}\}$ that is not an elementary event,

$$P(E) = P(\{e_{i_1}\}) + P(\{e_{i_2}\}) + \ldots + P(\{e_{i_k}\}).$$

The pair (Ω, P) is called a probability space.


We often just say P is a probability function on Ω rather than saying on the

set of subsets of Ω.

Intuition for probability functions comes from considering games of chance

as the following example illustrates.

Example 1.1 Let the experiment be drawing the top card from a deck of 52

cards. Then Ω contains the faces of the 52 cards, and using the principle of

indifference, we assign P ({e}) = 1/52 for each e ∈ Ω. Therefore, if we let kh

and ks stand for the king of hearts and king of spades respectively, P ({kh}) =

1/52, P ({ks}) = 1/52, and P ({kh, ks}) = P ({kh}) + P ({ks}) = 1/26.
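The card example can be checked mechanically. The following Python sketch builds the 52-element sample space, assigns each elementary event probability 1/52, and computes the probability of an event as the sum required by Condition 3 of Definition 1.1. The representation of a card as a (rank, suit) pair and the names `omega`, `elementary`, and `prob` are our own illustrative choices.

```python
from fractions import Fraction
from itertools import product

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["hearts", "diamonds", "clubs", "spades"]

# Sample space: the faces of the 52 cards.
omega = list(product(RANKS, SUITS))

# Principle of indifference: each elementary event gets probability 1/n.
elementary = {e: Fraction(1, len(omega)) for e in omega}


def prob(event):
    """P(E): sum of the probabilities of the elementary events in E (Condition 3)."""
    return sum((elementary[e] for e in event), Fraction(0))


# Conditions 1 and 2 of Definition 1.1.
assert all(0 <= p <= 1 for p in elementary.values())
assert prob(omega) == 1

kh, ks = ("K", "hearts"), ("K", "spades")
print(prob({kh}), prob({ks}), prob({kh, ks}))  # 1/52 1/52 1/26
```

Exact fractions are used instead of floating-point numbers so that the ratios appear exactly as they do in the example.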

The principle of indifference (a term popularized by J.M. Keynes in 1921)

says elementary events are to be considered equiprobable if we have no reason

to expect or prefer one over the other. According to this principle, when there

are n elementary events the probability of each of them is the ratio 1/n. This

is the way we often assign probabilities in games of chance, and a probability

so assigned is called a ratio.

The following example shows a probability that cannot be computed using

the principle of indifference.

Example 1.2 Suppose we toss a thumbtack and consider as outcomes the two

ways it could land. It could land on its head, which we will call ‘heads’, or

it could land with the edge of the head and the end of the point touching the

ground, which we will call ‘tails’. Due to the lack of symmetry in a thumbtack,

we would not assign a probability of 1/2 to each of these events. So how can

we compute the probability? This experiment can be repeated many times. In

1919 Richard von Mises developed the relative frequency approach to probability

which says that, if an experiment can be repeated many times, the probability of

any one of the outcomes is the limit, as the number of trials approaches infinity,

of the ratio of the number of occurrences of that outcome to the total number of

trials. For example, if m is the number of trials,

$$P(\{\mathit{heads}\}) = \lim_{m \to \infty} \frac{\#\mathit{heads}}{m}.$$

So, if we tossed the thumbtack 10,000 times and it landed heads 3373 times, we

would estimate the probability of heads to be about .3373.
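A small simulation illustrates how the ratio #heads/m settles down as m grows. The Python sketch below is only a stand-in for a real thumbtack: it assumes a hypothetical underlying probability of heads (`TRUE_P = 0.34`, a value we made up for illustration) and uses a pseudorandom number generator in place of physical tosses.

```python
import random

TRUE_P = 0.34  # hypothetical value, assumed only for this simulation


def relative_frequency(p_heads, trials, seed=0):
    """Simulate `trials` tosses and return #heads / trials."""
    rng = random.Random(seed)  # fixed seed so that runs are repeatable
    heads = sum(rng.random() < p_heads for _ in range(trials))
    return heads / trials


for m in (100, 10_000, 1_000_000):
    print(m, relative_frequency(TRUE_P, m))
```

With a real thumbtack the limiting value is unknown, and the observed relative frequency after many tosses is the only estimate available.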

Probabilities obtained using the approach in the previous example are called

relative frequencies. According to this approach, the probability obtained is

not a property of any one of the trials, but rather it is a property of the entire

sequence of trials. How are these probabilities related to ratios? Intuitively,

we would expect if, for example, we repeatedly shuffled a deck of cards and

drew the top card, the ace of spades would come up about one out of every 52

times. In 1946 J. E. Kerrich conducted many such experiments using games of

chance in which the principle of indifference seemed to apply (e.g. drawing a

card from a deck). His results indicated that the relative frequency does appear

to approach a limit and that limit is the ratio.


The next example illustrates a probability that cannot be obtained either

with ratios or with relative frequencies.

Example 1.3 If you were going to bet on an upcoming basketball game between

the Chicago Bulls and the Detroit Pistons, you would want to ascertain how

probable it was that the Bulls would win. This probability is certainly not a

ratio, and it is not a relative frequency because the game cannot be repeated

many times under the exact same conditions (more precisely, with your knowledge

about the conditions remaining the same). Rather, the probability only represents your

belief concerning the Bulls’ chances of winning. Such a probability is called a

degree of belief or subjective probability. There are a number of ways

for ascertaining such probabilities. One of the most popular methods is the

following, which was suggested by D. V. Lindley in 1985. This method says an

individual should liken the uncertain outcome to a game of chance by considering

an urn containing white and black balls. The individual should determine for

what fraction of white balls the individual would be indifferent between receiving

a small prize if the uncertain outcome happened (or turned out to be true) and

receiving the same small prize if a white ball was drawn from the urn. That

fraction is the individual’s probability of the outcome. Such a probability can be

constructed using binary cuts. If, for example, you were indifferent when the

fraction was .75, for you P ({bullswin}) = .75. If I were indifferent when the

fraction was .6, for me P ({bullswin}) = .6. Neither of us is right or wrong.

Subjective probabilities are unlike ratios and relative frequencies in that they do

not have objective values upon which we all must agree. Indeed, that is why they

are called subjective.

Neapolitan [1996] discusses the construction of subjective probabilities further. In this text, by probability we ordinarily mean a degree of belief. When

we are able to compute ratios or relative frequencies, the probabilities obtained

agree with most individuals’ beliefs. For example, most individuals would assign

a subjective probability of 1/13 to the top card being an ace because they would

be indifferent between receiving a small prize if it were the ace and receiving

that same small prize if a white ball were drawn from an urn containing one

white ball out of 13 total balls.

The following example shows a subjective probability more relevant to applications of Bayesian networks.

Example 1.4 After examining a patient and seeing the result of the patient’s

chest X-ray, Dr. Gloviak decides the probability that the patient has lung cancer

is .9. This probability is Dr. Gloviak’s subjective probability of that outcome.

Although a physician may use estimates of relative frequencies (such as the

fraction of times individuals with lung cancer have positive chest X-rays) and

experience diagnosing many similar patients to arrive at the probability, it is

still assessed subjectively. If asked, Dr. Gloviak may state that her subjective

probability is her estimate of the relative frequency with which patients, who

have these exact same symptoms, have lung cancer. However, there is no reason

to believe her subjective judgement will converge, as she continues to diagnose


patients with these exact same symptoms, to the actual relative frequency with

which they have lung cancer.

It is straightforward to prove the following theorem concerning probability

spaces.

Theorem 1.1 Let (Ω, P ) be a probability space. Then

1. P (Ω) = 1.

2. 0 ≤ P (E) ≤ 1

for every E ⊆ Ω.

3. For E and F ⊆ Ω such that E ∩ F = ∅,

P (E ∪ F) = P (E) + P (F).

Proof. The proof is left as an exercise.

The conditions in this theorem were labeled the axioms of probability

theory by A.N. Kolmogorov in 1933. When Condition (3) is replaced by countably infinite additivity, these conditions are used to define a probability

space in mathematical probability texts.

Example 1.5 Suppose we draw the top card from a deck of cards. Denote by

Queen the set containing the 4 queens and by King the set containing the 4 kings.

Then

P (Queen ∪ King) = P (Queen) + P (King) = 1/13 + 1/13 = 2/13

because Queen ∩ King = ∅. Next denote by Spade the set containing the 13

spades. The sets Queen and Spade are not disjoint; so their probabilities are not

additive. However, it is not hard to prove that, in general,

P (E ∪ F) = P (E) + P (F) − P (E ∩ F).

So

P (Queen ∪ Spade) = P (Queen) + P (Spade) − P (Queen ∩ Spade)

= 1/13 + 1/4 − 1/52 = 4/13.
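The two computations in Example 1.5 can be checked by enumerating the deck. The following is a minimal Python sketch; the card encoding and the helper name `prob` are illustrative assumptions and do not appear in the text.

```python
from fractions import Fraction
from itertools import product

# Illustrative encoding (an assumption): a card is a (rank, suit) pair.
RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["spades", "hearts", "diamonds", "clubs"]
DECK = set(product(RANKS, SUITS))  # sample space of 52 equally likely cards


def prob(event):
    """Probability of an event (a subset of DECK), computed as a ratio."""
    return Fraction(len(event), len(DECK))


queen = {c for c in DECK if c[0] == "Q"}
king = {c for c in DECK if c[0] == "K"}
spade = {c for c in DECK if c[1] == "spades"}

# Disjoint events: the probabilities simply add.
p_queen_or_king = prob(queen) + prob(king)

# Overlapping events: subtract the probability of the intersection.
p_queen_or_spade = prob(queen) + prob(spade) - prob(queen & spade)

print(p_queen_or_king)   # 2/13
print(p_queen_or_spade)  # 4/13
```

Exact fractions are used rather than floating-point numbers so that the results can be compared directly with the values in the example.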

1.1.2 Conditional Probability and Independence

We have yet to discuss one of the most important concepts in probability theory,

namely conditional probability. We do that next.

Definition 1.2 Let E and F be events such that P (F) ≠ 0. Then the conditional probability of E given F, denoted P (E|F), is given by

P (E|F) = P (E ∩ F) / P (F).


The initial intuition for conditional probability comes from considering probabilities that are ratios. In the case of ratios, P (E|F), as defined above, is the

fraction of items in F that are also in E. We show this as follows. Let n be the

number of items in the sample space, nF be the number of items in F, and nEF

be the number of items in E ∩ F. Then

P (E ∩ F) / P (F) = (nEF /n) / (nF /n) = nEF / nF ,

which is the fraction of items in F that are also in E. As for its meaning, P (E|F)

is the probability of E occurring given that we know F has occurred.

Example 1.6 Again consider drawing the top card from a deck of cards, let

Queen be the set of the 4 queens, RoyalCard be the set of the 12 royal cards, and

Spade be the set of the 13 spades. Then

P (Queen) = 1/13

P (Queen|RoyalCard) = P (Queen ∩ RoyalCard) / P (RoyalCard) = (1/13) / (3/13) = 1/3

P (Queen|Spade) = P (Queen ∩ Spade) / P (Spade) = (1/52) / (1/4) = 1/13.
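Definition 1.2 translates directly into a computation on finite sets. The sketch below recomputes the conditional probabilities of Example 1.6; the card encoding and the function names `prob` and `cond_prob` are assumptions made for illustration.

```python
from fractions import Fraction
from itertools import product

# Illustrative encoding (an assumption): a card is a (rank, suit) pair.
RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["spades", "hearts", "diamonds", "clubs"]
DECK = set(product(RANKS, SUITS))


def prob(event):
    """Probability of an event (a subset of DECK), computed as a ratio."""
    return Fraction(len(event), len(DECK))


def cond_prob(e, f):
    """P(E|F) = P(E ∩ F) / P(F); undefined when P(F) = 0."""
    if prob(f) == 0:
        raise ValueError("P(F) must be nonzero")
    return prob(e & f) / prob(f)


queen = {c for c in DECK if c[0] == "Q"}
royal_card = {c for c in DECK if c[0] in {"J", "Q", "K"}}
spade = {c for c in DECK if c[1] == "spades"}

print(prob(queen))                   # 1/13
print(cond_prob(queen, royal_card))  # 1/3
print(cond_prob(queen, spade))       # 1/13
```

Note that `cond_prob(queen, spade)` equals `prob(queen)`: learning that the card is a spade does not change the probability that it is a queen.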

Notice in the previous example that P (Queen|Spade) = P (Queen). This

means that finding out the card is a spade does not make it more or less probable

that it is a queen. That is, the knowledge of whether it is a spade is irrelevant

to whether it is a queen. We say that the two events are independent in this

case, which is formalized in the following definition.

Definition 1.3 Two events E and F are independent if one of the following
holds:

1. P (E|F) = P (E) and P (E) ≠ 0, P (F) ≠ 0.

2. P (E) = 0 or P (F) = 0.

Notice that the definition states that the two events are independent even

though it is based on the conditional probability of E given F. The reason is

that independence is symmetric. That is, if P (E) ≠ 0 and P (F) ≠ 0, then

P (E|F) = P (E) if and only if P (F|E) = P (F). It is straightforward to prove that

E and F are independent if and only if P (E ∩ F) = P (E)P (F).

The following example illustrates an extension of the notion of independence.

Example 1.7 Let E = {kh, ks, qh}, F = {kh, kc, qh}, G = {kh, ks, kc, kd},

where kh means the king of hearts, ks means the king of spades, etc. Then

P (E) = 3/52

P (E|F) = 2/3

P (E|G) = 2/4 = 1/2

P (E|F ∩ G) = 1/2.

So E and F are not independent, but they are independent once we condition on

G.
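The values in Example 1.7 can be reproduced by counting. The following sketch assumes, as in the earlier card examples, that each of the 52 cards has probability 1/52; the function names are illustrative.

```python
from fractions import Fraction

N_CARDS = 52  # each card is assumed to have probability 1/52

# Events of Example 1.7; kh = king of hearts, ks = king of spades, etc.
E = {"kh", "ks", "qh"}
F = {"kh", "kc", "qh"}
G = {"kh", "ks", "kc", "kd"}


def prob(event):
    """Probability of a set of cards, computed as a ratio."""
    return Fraction(len(event), N_CARDS)


def cond_prob(e, f):
    """P(E|F) = P(E ∩ F) / P(F), assuming P(F) is nonzero."""
    return prob(e & f) / prob(f)


p_e = prob(E)                       # 3/52
p_e_given_f = cond_prob(E, F)       # 2/3
p_e_given_g = cond_prob(E, G)       # 1/2
p_e_given_fg = cond_prob(E, F & G)  # 1/2

# E and F are dependent, but conditioning on G removes the dependence.
print(p_e_given_f != p_e)           # True
print(p_e_given_fg == p_e_given_g)  # True
```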

In the previous example, E and F are said to be conditionally independent

given G. Conditional independence is very important in Bayesian networks and

will be discussed much more in the sections that follow. Presently, we have the

definition that follows and another example.

Definition 1.4 Two events E and F are conditionally independent given G
if P (G) ≠ 0 and one of the following holds:

1. P (E|F ∩ G) = P (E|G) and P (E|G) ≠ 0, P (F|G) ≠ 0.

2. P (E|G) = 0 or P (F|G) = 0.

Another example of conditional independence follows.

Example 1.8 Let Ω be the set of all objects in Figure 1.2. Suppose we assign

a probability of 1/13 to each object, and let Black be the set of all black objects,

White be the set of all white objects, Square be the set of all square objects, and

One be the set of all objects containing a ‘1’. We then have

P (One) = 5/13

P (One|Square) = 3/8

P (One|Black) = 3/9 = 1/3

P (One|Square ∩ Black) = 2/6 = 1/3

P (One|White) = 2/4 = 1/2

P (One|Square ∩ White) = 1/2.

So One and Square are not independent, but they are conditionally independent

given Black and given White.

Figure 1.2: Containing a ‘1’ and being a square are not independent, but they
are conditionally independent given the object is black and given it is white.

Next we discuss a very useful rule involving conditional probabilities. Suppose we have n events E1 , E2 , . . . , En such that Ei ∩ Ej = ∅ for i ≠ j and
E1 ∪ E2 ∪ . . . ∪ En = Ω. Such events are called mutually exclusive and
exhaustive. Then the law of total probability says for any other event F,

P (F) = Σ_{i=1}^{n} P (F ∩ Ei ).     (1.1)

If P (Ei ) ≠ 0, then P (F ∩ Ei ) = P (F|Ei )P (Ei ). Therefore, if P (Ei ) ≠ 0 for all i,
the law is often applied in the following form:

P (F) = Σ_{i=1}^{n} P (F|Ei )P (Ei ).     (1.2)

It is straightforward to derive both the axioms of probability theory and

the rule for conditional probability when probabilities are ratios. However,

they can also be derived in the relative frequency and subjectivistic frameworks

(See [Neapolitan, 1990].). These derivations make the use of probability theory

compelling for handling uncertainty.

1.1.3 Bayes’ Theorem

For decades conditional probabilities of events of interest have been computed

from known probabilities using Bayes’ theorem. We develop that theorem next.

Theorem 1.2 (Bayes) Given two events E and F such that P (E) ≠ 0 and
P (F) ≠ 0, we have

P (E|F) = P (F|E)P (E) / P (F).     (1.3)

Furthermore, given n mutually exclusive and exhaustive events E1 , E2 , . . . , En
such that P (Ei ) ≠ 0 for all i, we have for 1 ≤ i ≤ n,

P (Ei |F) = P (F|Ei )P (Ei ) / [P (F|E1 )P (E1 ) + P (F|E2 )P (E2 ) + · · · + P (F|En )P (En )].     (1.4)


Proof. To obtain Equality 1.3, we first use the definition of conditional probability as follows:

P (E|F) = P (E ∩ F) / P (F)     and     P (F|E) = P (F ∩ E) / P (E).

Next we multiply each of these equalities by the denominator on its right side to

show that

P (E|F)P (F) = P (F|E)P (E)

because they both equal P (E ∩ F). Finally, we divide this last equality by P (F)

to obtain our result.

To obtain Equality 1.4, we place the expression for P (F), obtained using the law

of total probability (Equality 1.2), in the denominator of Equality 1.3.

Both of the formulas in the preceding theorem are called Bayes’ theorem

because they were originally developed by Thomas Bayes (published in 1763).

The first enables us to compute P (E|F) if we know P (F|E), P (E), and P (F), while

the second enables us to compute P (Ei |F) if we know P (F|Ej ) and P (Ej ) for

1 ≤ j ≤ n. Computing a conditional probability using either of these formulas

is called Bayesian inference. An example of Bayesian inference follows:

Example 1.9 Let Ω be the set of all objects in Figure 1.2, and assign each

object a probability of 1/13. Let One be the set of all objects containing a 1, Two

be the set of all objects containing a 2, and Black be the set of all black objects.

Then according to Bayes’ Theorem,

P (One|Black) = P (Black|One)P (One) / [P (Black|One)P (One) + P (Black|Two)P (Two)]

= (3/5)(5/13) / [(3/5)(5/13) + (6/8)(8/13)] = 1/3,

which is the same value we get by computing P (One|Black) directly.
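Equality 1.4 is mechanical enough to express as a short function. The sketch below applies it to the numbers of Example 1.9; the function name `bayes` and its argument layout are our own choices for illustration.

```python
from fractions import Fraction


def bayes(likelihoods, priors, i):
    """Equality 1.4: P(Ei|F) from the values P(F|Ej) and P(Ej), j = 1..n.

    likelihoods[j] holds P(F|Ej) and priors[j] holds P(Ej); the events Ej
    are assumed to be mutually exclusive and exhaustive.
    """
    # Denominator: the law of total probability (Equality 1.2).
    p_f = sum(l * p for l, p in zip(likelihoods, priors))
    return likelihoods[i] * priors[i] / p_f


priors = [Fraction(5, 13), Fraction(8, 13)]     # P(One), P(Two)
likelihoods = [Fraction(3, 5), Fraction(6, 8)]  # P(Black|One), P(Black|Two)

p_one_given_black = bayes(likelihoods, priors, 0)
p_two_given_black = bayes(likelihoods, priors, 1)

print(p_one_given_black)  # 1/3
print(p_two_given_black)  # 2/3
```

Because One and Two are mutually exclusive and exhaustive, the two posterior probabilities sum to 1.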

The previous example is not a very exciting application of Bayes’ Theorem

as we can just as easily compute P (One|Black) directly. Section 1.2 discusses

useful applications of Bayes’ Theorem.

1.1.4 Random Variables and Joint Probability Distributions

We have one final concept to discuss in this overview, namely that of a random

variable. The definition shown here is based on the set-theoretic definition of

probability given in Section 1.1.1. In Section 1.2.2 we provide an alternative

definition which is more pertinent to the way random variables are used in

practice.

Definition 1.5 Given a probability space (Ω, P ), a random variable X is a

function on Ω.


That is, a random variable assigns a unique value to each element (outcome)

in the sample space. The set of values random variable X can assume is called

the space of X. A random variable is said to be discrete if its space is finite

or countable. In general, we develop our theory assuming the random variables

are discrete. Examples follow.

Example 1.10 Let Ω contain all outcomes of a throw of a pair of six-sided

dice, and let P assign 1/36 to each outcome. Then Ω is the following set of

ordered pairs:

Ω = {(1, 1), (1, 2), (1, 3), (1, 4), (1, 5), (1, 6), (2, 1), (2, 2), . . . (6, 5), (6, 6)}.

Let the random variable X assign the sum of each ordered pair to that pair, and
let the random variable Y assign ‘odd’ to each pair of odd numbers and ‘even’
to a pair if at least one number in that pair is an even number. The following
table shows some of the values of X and Y :

e         X(e)    Y (e)
(1, 1)    2       odd
(1, 2)    3       even
···       ···     ···
(2, 1)    3       even
···       ···     ···
(6, 6)    12      even

The space of X is {2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12}, and that of Y is {odd, even}.
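Since a random variable is simply a function on Ω, Example 1.10 can be written out almost verbatim. The following Python sketch builds the sample space, defines X and Y as functions, and tabulates the probability with which each value is taken; the helper name `distribution` is an assumption made for illustration.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

# Sample space: all 36 ordered pairs from a throw of two six-sided dice.
OMEGA = list(product(range(1, 7), repeat=2))
P = {e: Fraction(1, 36) for e in OMEGA}  # each outcome is equally likely


def X(e):
    """Sum of the ordered pair."""
    return e[0] + e[1]


def Y(e):
    """'odd' if both numbers are odd, 'even' if at least one is even."""
    return "odd" if e[0] % 2 == 1 and e[1] % 2 == 1 else "even"


def distribution(rv):
    """Map each value v in the space of rv to P(rv = v)."""
    dist = defaultdict(Fraction)  # Fraction() is 0
    for e in OMEGA:
        dist[rv(e)] += P[e]
    return dict(dist)


dist_x = distribution(X)
dist_y = distribution(Y)

print(sorted(dist_x))  # the space of X
print(dist_x[3])       # 1/18
print(dist_y["odd"])   # 1/4
```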

For a random variable X, we use X = x to denote the set of all elements

e ∈ Ω that X maps to the value of x. That is,

X = x     represents the event     {e such that X(e) = x}.

Note the difference between X and x. Small x denotes any element in the space

of X, while X is a function.

Example 1.11 Let Ω , P , and X be as in Example 1.10. Then

X = 3     represents the event     {(1, 2), (2, 1)}     and     P (X = 3) = 1/18.

It is not hard to see that a random variable induces a probability function

on its space. That is, if we define PX ({x}) ≡ P (X = x), then PX is such a

probability function.

Example 1.12 Let Ω contain all outcomes of a throw of a single die, let P

assign 1/6 to each outcome, and let Z assign ‘even’ to each even number and

‘odd’ to each odd number. Then

PZ ({even}) = P (Z = even) = P ({2, 4, 6}) = 1/2

PZ ({odd}) = P (Z = odd) = P ({1, 3, 5}) = 1/2.

We rarely refer to PX ({x}). Rather we only reference the original probability

function P , and we call P (X = x) the probability distribution of the random

variable X. For brevity, we often just say ‘distribution’ instead of ‘probability

distribution’. Furthermore, we often use x alone to represent the event X = x,

and so we write P (x) instead of P (X = x) . We refer to P (x) as ‘the probability

of x’.

Let Ω, P , and X be as in Example 1.10. Then if x = 3,

P (x) = P (X = x) = 1/18.

Given two random variables X and Y , defined on the same sample space Ω,
we use X = x, Y = y to denote the set of all elements e ∈ Ω that are mapped
both by X to x and by Y to y. That is,

X = x, Y = y     represents the event     {e such that X(e) = x} ∩ {e such that Y (e) = y}.

Example 1.13 Let Ω, P , X, and Y be as in Example 1.10. Then

X = 4, Y = odd     represents the event     {(1, 3), (3, 1)},     and

P (X = 4, Y = odd) = 1/18.

Clearly, two random variables induce a probability function on the Cartesian

product of their spaces. As is the case for a single random variable, we rarely

refer to this probability function. Rather we reference the original probability

function. That is, we refer to P (X = x, Y = y), and we call this the joint

probability distribution of X and Y . If A = {X, Y }, we also call this the

joint probability distribution of A. Furthermore, we often just say ‘joint

distribution’ or ‘probability distribution’.

For brevity, we often use x, y to represent the event X = x, Y = y, and

so we write P (x, y) instead of P (X = x, Y = y). This concept extends in a

straightforward way to three or more random variables. For example, P (X =

x, Y = y, Z = z) is the joint probability distribution function of the variables

X, Y , and Z, and we often write P (x, y, z).

Example 1.14 Let Ω, P , X, and Y be as in Example 1.10. Then if x = 4 and

y = odd,

P (x, y) = P (X = x, Y = y) = 1/18.

If, for example, we let A = {X, Y } and a = {x, y}, we use

A = a     to represent     X = x, Y = y,

and we often write P (a) instead of P (A = a). The same notation extends to

the representation of three or more random variables. For consistency, we set

P (∅ = ∅) = 1, where ∅ is the empty set of random variables. Note that if ∅

is the empty set of events, P (∅) = 0.


Example 1.15 Let Ω, P , X, and Y be as in Example 1.10. If A = {X, Y },

a = {x, y}, x = 4, and y = odd,

P (A = a) = P (X = x, Y = y) = 1/18.

This notation entails that if we have, for example, two sets of random variables A = {X, Y } and B = {Z, W }, then

A = a, B = b     represents     X = x, Y = y, Z = z, W = w.

Given a joint probability distribution, the law of total probability (Equality

1.1) implies the probability distribution of any one of the random variables

can be obtained by summing over all values of the other variables. It is left

as an exercise to show this. For example, suppose we have a joint probability

distribution P (X = x, Y = y). Then

P (X = x) = Σ_y P (X = x, Y = y),

where Σ_y means the sum as y goes through all values of Y . The probability

distribution P (X = x) is called the marginal probability distribution of X

because it is obtained using a process similar to adding across a row or column in

a table of numbers. This concept also extends in a straightforward way to three

or more random variables. For example, if we have a joint distribution P (X =

x, Y = y, Z = z) of X, Y , and Z, the marginal distribution P (X = x, Y = y) of

X and Y is obtained by summing over all values of Z. If A = {X, Y }, we also

call this the marginal probability distribution of A.

Example 1.16 Let Ω, P , X, and Y be as in Example 1.10. Then

P (X = 4) = Σ_y P (X = 4, Y = y)

= P (X = 4, Y = odd) + P (X = 4, Y = even) = 1/18 + 1/36 = 1/12.
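The marginalization in Example 1.16 amounts to summing entries of the joint distribution. The sketch below first tabulates the joint distribution of X and Y from Example 1.10 and then sums over the values of Y; the names `joint` and `marginal_x` are illustrative assumptions.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

OMEGA = list(product(range(1, 7), repeat=2))  # two six-sided dice


def X(e):
    """Sum of the ordered pair."""
    return e[0] + e[1]


def Y(e):
    """'odd' if both numbers are odd, 'even' otherwise."""
    return "odd" if e[0] % 2 == 1 and e[1] % 2 == 1 else "even"


# Joint distribution: P(X = x, Y = y) for every pair (x, y) that occurs.
joint = defaultdict(Fraction)
for e in OMEGA:
    joint[(X(e), Y(e))] += Fraction(1, 36)


def marginal_x(x):
    """P(X = x), obtained by summing the joint distribution over y."""
    return sum((p for (xv, _), p in joint.items() if xv == x), Fraction(0))


print(joint[(4, "odd")])   # 1/18
print(joint[(4, "even")])  # 1/36
print(marginal_x(4))       # 1/12
```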

The following example reviews the concepts covered so far concerning random variables:

Example 1.17 Let Ω be a set of 12 individuals, and let P assign 1/12 to each

individual. Suppose the sexes, heights, and wages of the individuals are as follows:

Case    Sex       Height (inches)    Wage ($)
1       female    64                 30,000
2       female    64                 30,000
3       female    64                 40,000
4       female    64                 40,000
5       female    68                 30,000
6       female    68                 40,000
7       male      64                 40,000
8       male      64                 50,000
9       male      68                 40,000
10      male      68                 50,000
11      male      70                 40,000
12      male      70                 50,000

Let the random variables S, H and W respectively assign the sex, height and

wage of an individual to that individual. Then the distributions of the three

variables are as follows (Recall that, for example, P (s) represents P (S = s).):

s         P (s)
female    1/2
male      1/2

h     P (h)
64    1/2
68    1/3
70    1/6

w         P (w)
30,000    1/4
40,000    1/2
50,000    1/4

The joint distribution of S and H is as follows:

s         h     P (s, h)
female    64    1/3
female    68    1/6
female    70    0
male      64    1/6
male      68    1/6
male      70    1/6

The following table also shows the joint distribution of S and H and illustrates

that the individual distributions can be obtained by summing the joint distribution over all values of the other variable:

s \ h                64     68     70     Distribution of S
female               1/3    1/6    0      1/2
male                 1/6    1/6    1/6    1/2
Distribution of H    1/2    1/3    1/6

The table that follows shows the first few values in the joint distribution of S,

H, and W . There are 18 values in all, of which many are 0.

s         h      w         P (s, h, w)
female    64     30,000    1/6
female    64     40,000    1/6
female    64     50,000    0
female    68     30,000    1/12
···       ···    ···       ···
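All of the distributions in Example 1.17 follow from counting cases. As a minimal sketch, the code below stores the 12 individuals as (sex, height, wage) triples and computes the distribution of any subset of the variables; the function name `distribution` and the positional encoding of the variables are assumptions made for illustration.

```python
from collections import Counter
from fractions import Fraction

# The 12 individuals of Example 1.17 as (sex, height, wage) triples.
CASES = [
    ("female", 64, 30000), ("female", 64, 30000),
    ("female", 64, 40000), ("female", 64, 40000),
    ("female", 68, 30000), ("female", 68, 40000),
    ("male", 64, 40000), ("male", 64, 50000),
    ("male", 68, 40000), ("male", 68, 50000),
    ("male", 70, 40000), ("male", 70, 50000),
]


def distribution(positions):
    """Joint distribution of the variables at the given positions.

    Positions are 0 for S, 1 for H, and 2 for W. Value combinations with
    probability 0 do not appear as keys in the result.
    """
    counts = Counter(tuple(case[i] for i in positions) for case in CASES)
    return {values: Fraction(n, len(CASES)) for values, n in counts.items()}


p_s = distribution([0])
p_sh = distribution([0, 1])
p_shw = distribution([0, 1, 2])

print(p_s[("female",)])              # 1/2
print(p_sh[("female", 64)])          # 1/3
print(p_shw[("female", 68, 30000)])  # 1/12

# Summing the joint distribution of S and H over h recovers P(S = female).
print(sum(p for (s, h), p in p_sh.items() if s == "female"))  # 1/2
```

Only 10 of the 18 possible combinations of values of S, H, and W occur among the cases; the remaining 8 have probability 0.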

We have the following definition:

Definition 1.6 Suppose we have a probability space (Ω, P ), and two sets A and

B containing random variables defined on Ω. Then the sets A and B are said to

be independent if, for all values of the variables in the sets a and b, the events

A = a and B = b are independent. That is, either P (a) = 0 or P (b) = 0 or

P (a|b) = P (a).

When this is the case, we write

IP (A, B),

where IP stands for independent in P .

Example 1.18 Let Ω be the set of all cards in an ordinary deck, and let P

assign 1/52 to each card. Define random variables as follows:

Variable    Value    Outcomes Mapped to this Value
R           r1       All royal cards
            r2       All nonroyal cards
T           t1       All tens and jacks
            t2       All cards that are neither tens nor jacks
S           s1       All spades
            s2       All nonspades

Then we maintain the sets {R, T } and {S} are independent. That is,

IP ({R, T }, {S}).

To show this, we need show for all values of r, t, and s that

P (r, t|s) = P (r, t).

(Note that we do not show brackets to denote sets in our probabilistic expressions because in such an expression a set represents the members of the set. See

the discussion following Example 1.14.) The following table shows this is the

case:

s     r     t     P (r, t|s)        P (r, t)
s1    r1    t1    1/13              4/52 = 1/13
s1    r1    t2    2/13              8/52 = 2/13
s1    r2    t1    1/13              4/52 = 1/13
s1    r2    t2    9/13              36/52 = 9/13
s2    r1    t1    3/39 = 1/13       4/52 = 1/13
s2    r1    t2    6/39 = 2/13       8/52 = 2/13
s2    r2    t1    3/39 = 1/13       4/52 = 1/13
s2    r2    t2    27/39 = 9/13      36/52 = 9/13
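The check in Example 1.18 can be automated by looping over all values of r, t, and s. The sketch below uses the equivalent product form P (r, t, s) = P (r, t)P (s), which avoids dividing by P (s); the card encoding and function names are illustrative assumptions.

```python
from fractions import Fraction
from itertools import product

# Illustrative encoding (an assumption): a card is a (rank, suit) pair.
RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["spades", "hearts", "diamonds", "clubs"]
DECK = list(product(RANKS, SUITS))


def R(card):
    return "r1" if card[0] in {"J", "Q", "K"} else "r2"  # royal or not


def T(card):
    return "t1" if card[0] in {"10", "J"} else "t2"  # ten or jack, or not


def S(card):
    return "s1" if card[1] == "spades" else "s2"  # spade or not


def prob(predicate):
    """Probability of the set of cards satisfying the predicate."""
    return Fraction(sum(1 for c in DECK if predicate(c)), len(DECK))


independent = True
for r, t, s in product(["r1", "r2"], ["t1", "t2"], ["s1", "s2"]):
    p_rt = prob(lambda c: R(c) == r and T(c) == t)
    p_s = prob(lambda c: S(c) == s)
    p_rts = prob(lambda c: R(c) == r and T(c) == t and S(c) == s)
    # For P(s) != 0, P(r, t|s) = P(r, t) holds exactly when
    # P(r, t, s) = P(r, t) P(s).
    if p_rts != p_rt * p_s:
        independent = False

print(independent)  # True
```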

Definition 1.7 Suppose we have a probability space (Ω, P ), and three sets A,
B, and C containing random variables defined on Ω. Then the sets A and B are
said to be conditionally independent given the set C if, for all values of
the variables in the sets a, b, and c, whenever P (c) ≠ 0, the events A = a and
B = b are conditionally independent given the event C = c. That is, either
P (a|c) = 0 or P (b|c) = 0 or

P (a|b, c) = P (a|c).

When this is the case, we write

IP (A, B|C).

Example 1.19 Let Ω be the set of all objects in Figure 1.2, and let P assign

1/13 to each object. Define random variables S (for shape), V (for value), and

C (for color) as follows:

Variable    Value    Outcomes Mapped to this Value
V           v1       All objects containing a ‘1’
            v2       All objects containing a ‘2’
S           s1       All square objects
            s2       All round objects
C           c1       All black objects
            c2       All white objects

Then we maintain that {V } and {S} are conditionally independent given {C}.

That is,

IP ({V }, {S}|{C}).

To show this, we need show for all values of v, s, and c that

P (v|s, c) = P (v|c).

The results in Example 1.8 show P (v1|s1, c1) = P (v1|c1) and P (v1|s1, c2) =

P (v1|c2). The table that follows shows the equality holds for the other values of

the variables too:

c     s     v     P (v|s, c)     P (v|c)
c1    s1    v1    2/6 = 1/3      3/9 = 1/3
c1    s1    v2    4/6 = 2/3      6/9 = 2/3
c1    s2    v1    1/3            3/9 = 1/3
c1    s2    v2    2/3            6/9 = 2/3
c2    s1    v1    1/2            2/4 = 1/2
c2    s1    v2    1/2            2/4 = 1/2
c2    s2    v1    1/2            2/4 = 1/2
c2    s2    v2    1/2            2/4 = 1/2
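The table above can be reproduced by counting objects. In the sketch below, the composition of Figure 1.2 is an assumption: the counts are inferred from the fractions in Examples 1.8 and 1.19 (for instance, 2/6 for ‘1’ among black squares and 3/9 for ‘1’ among black objects), not read from the figure itself. The function names are likewise illustrative.

```python
from fractions import Fraction
from itertools import product

# Assumed composition of Figure 1.2, inferred from the fractions in
# Examples 1.8 and 1.19: number of objects per (color, shape, value).
COUNTS = {
    ("black", "square", 1): 2, ("black", "square", 2): 4,
    ("black", "round", 1): 1, ("black", "round", 2): 2,
    ("white", "square", 1): 1, ("white", "square", 2): 1,
    ("white", "round", 1): 1, ("white", "round", 2): 1,
}
TOTAL = sum(COUNTS.values())  # 13 objects, each with probability 1/13


def prob(color=None, shape=None, value=None):
    """Probability of the objects matching every attribute that is given."""
    n = sum(
        k for (c, s, v), k in COUNTS.items()
        if color in (None, c) and shape in (None, s) and value in (None, v)
    )
    return Fraction(n, TOTAL)


def value_given(value, color, shape=None):
    """P(v|c) when shape is None, otherwise P(v|s, c)."""
    return prob(color, shape, value) / prob(color, shape)


# Conditional independence: P(v|s, c) = P(v|c) for all v, s, and c.
cond_indep = all(
    value_given(v, c, s) == value_given(v, c)
    for c, s, v in product(["black", "white"], ["square", "round"], [1, 2])
)

# Unconditional independence fails: P(v1, s1) differs from P(v1)P(s1).
marg_indep = prob(shape="square", value=1) == prob(shape="square") * prob(value=1)

print(cond_indep)  # True
print(marg_indep)  # False
```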

For the sake of brevity, we sometimes only say ‘independent’ rather than

‘conditionally independent’. Furthermore, when a set contains only one item,

we often drop the set notation and terminology. For example, in the preceding

example, we might say V and S are independent given C and write IP (V, S|C).

Finally, we have the chain rule for random variables, which says that given
n random variables X1 , X2 , . . . , Xn , defined on the same sample space Ω,

P (x1 , x2 , . . . , xn ) = P (xn |xn−1 , xn−2 , . . . , x1 ) · · · P (x2 |x1 )P (x1 )

whenever P (x1 , x2 , . . . , xn ) ≠ 0. It is straightforward to prove this rule using the
rule for conditional probability.
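As a quick numerical check of the chain rule, the following sketch reuses the 12 individuals of Example 1.17 and verifies that P (s, h, w) = P (w|h, s)P (h|s)P (s) for one choice of values. The dictionary-based encoding of events and the function names are assumptions made for illustration.

```python
from fractions import Fraction

# The 12 individuals of Example 1.17 as (sex, height, wage) triples.
CASES = [
    ("female", 64, 30000), ("female", 64, 30000),
    ("female", 64, 40000), ("female", 64, 40000),
    ("female", 68, 30000), ("female", 68, 40000),
    ("male", 64, 40000), ("male", 64, 50000),
    ("male", 68, 40000), ("male", 68, 50000),
    ("male", 70, 40000), ("male", 70, 50000),
]
IDX = {"s": 0, "h": 1, "w": 2}  # position of each variable in a triple


def matches(case, assignment):
    """True if the case agrees with every variable=value pair given."""
    return all(case[IDX[var]] == val for var, val in assignment.items())


def prob(assignment):
    """P of the event described by the assignment."""
    return Fraction(sum(matches(c, assignment) for c in CASES), len(CASES))


def cond_prob(target, given):
    """Fraction of the cases satisfying `given` that also satisfy `target`."""
    pool = [c for c in CASES if matches(c, given)]
    return Fraction(sum(matches(c, target) for c in pool), len(pool))


joint = prob({"s": "female", "h": 68, "w": 30000})
chain = (
    cond_prob({"w": 30000}, {"s": "female", "h": 68})  # P(w|h, s) = 1/2
    * cond_prob({"h": 68}, {"s": "female"})            # P(h|s)    = 1/3
    * prob({"s": "female"})                            # P(s)      = 1/2
)

print(joint)  # 1/12
print(chain)  # 1/12
```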

1.2 Bayesian Inference

We use Bayes’ Theorem when we are not able to determine the conditional

probability of interest directly, but we are able to determine the probabilities

on the right in Equality 1.3. You may wonder why we wouldn’t be able to

compute the conditional probability of interest directly from the sample space.

The reason is that in these applications the probability space is not usually

developed in the order outlined in Section 1.1. That is, we do not identify a

sample space, determine probabilities of elementary events, determine random

variables, and then compute values in joint probability distributions. Instead, we

identify random variables directly, and we determine probabilistic relationships

among the random variables. The conditional probabilities of interest are often

not the ones we are able to judge directly. We discuss next the meaning of

random variables and probabilities in Bayesian applications and how they are

identified directly. After that, we show how a joint probability distribution can

be determined without first specifying a sample space. Finally, we show a useful

application of Bayes’ Theorem.

1.2.1 Random Variables and Probabilities in Bayesian Applications

Although the definition of a random variable (Definition 1.5) given in Section

1.1.4 is mathematically elegant and in theory pertains to all applications of

probability, it is not readily apparent how it applies to applications involving


Bayesian inference. In this subsection and the next we develop an alternative

definition that does.

When doing Bayesian inference, there is some entity which has features,

the states of which we wish to determine, but which we cannot determine for

certain. So we settle for determining how likely it is that a particular feature is

in a particular state. The entity might be a single system or a set of systems.

An example of a single system is the introduction of an economically beneficial

chemical which might be carcinogenic. We would want to determine the relative

risk of the chemical versus its benefits. An example of a set of entities is a set

of patients with similar diseases and symptoms. In this case, we would want to

diagnose diseases based on symptoms.

In these applications, a random variable represents some feature of the entity

being modeled, and we are uncertain as to the values of this feature for the

particular entity. So we develop probabilistic relationships among the variables.

When there is a set of entities, we assume the entities in the set all have the same

probabilistic relationships concerning the variables used in the model. When

this is not the case, our Bayesian analysis is not applicable. In the case of the

chemical introduction, features may include the amount of human exposure and

the carcinogenic potential. If these are our features of interest, we identify the

random variables HumanExposure and CarcinogenicPotential. (For simplicity,

our illustrations include only a few variables. An actual application ordinarily

includes many more than this.) In the case of a set of patients, features of

interest might include whether or not a disease such as lung cancer is present,

whether or not manifestations of diseases such as a chest X-ray are present,

and whether or not causes of diseases such as smoking are present. Given these

features, we would identify the random variables ChestXray, LungCancer,

and SmokingHistory. After identifying the random variables, we distinguish a

set of mutually exclusive and exhaustive values for each of them. The possible

values of a random variable are the different states that the feature can take.

For example, the state of LungCancer could be present or absent, the state of

ChestXray could be positive or negative, and the state of SmokingHistory

could be yes or no. For simplicity, we have only distinguished two possible

values for each of these random variables. However, in general they could have

any number of possible values or they could even be continuous. For example,

we might distinguish 5 different levels of smoking history (one pack or more

for at least 10 years, two packs or more for at least 10 years, three packs or

more for at least 10 years, etc.). The specification of the random variables and

their values not only must be precise enough to satisfy the requirements of the

particular situation being modeled, but it also must be sufficiently precise to

pass the clarity test, which was developed by Howard in 1988. That test

is as follows: Imagine a clairvoyant who knows precisely the current state of

the world (or future state if the model concerns events in the future). Would

the clairvoyant be able to determine unequivocally the value of the random

variable? For example, in the case of the chemical introduction, if we give

HumanExposure the values low and high, the clarity test is not passed because

we do not know what constitutes high or low. However, if we define high as


when the average (over all individuals), of the individual daily average skin

contact, exceeds 6 grams of material, the clarity test is passed because the

clairvoyant can answer precisely whether the contact exceeds that. In the case

of a medical application, if we give SmokingHistory only the values yes and

no, the clarity test is not passed because we do not know whether yes means

smoking cigarettes, cigars, or something else, and we have not specified how

long smoking must have occurred for the value to be yes. On the other hand, if

we say yes means the patient has smoked one or more packs of cigarettes every

day during the past 10 years, the clarity test is passed.

After distinguishing the possible values of the random variables (i.e. their

spaces), we judge the probabilities of the random variables having their values.

However, in general we do not always determine prior probabilities; nor do we determine values in a joint probability distribution of the random variables. Rather

we ascertain probabilities, concerning relationships among random variables,

that are accessible to us. For example, we might determine the prior probability

P (LungCancer = present), and the conditional probabilities P (ChestXray =

positive|LungCancer = present), P (ChestXray = positive|LungCancer =

absent), P (LungCancer = present| SmokingHistory = yes), and finally

P (LungCancer = present|SmokingHistory = no). We would obtain these

probabilities either from a physician or from data or from both. Thinking in

terms of relative frequencies, P (LungCancer = present|SmokingHistory =

yes) can be estimated by observing individuals with a smoking history, and determining what fraction of these have lung cancer. A physician is used to judging

such a probability by observing patients with a smoking history. On the other

hand, one does not readily judge values in a joint probability distribution such as

P (LungCancer = present, ChestXray = positive, SmokingHistory = yes). If

this is not apparent, just think of the situation in which there are 100 or more

random variables (which there are in some applications) in the joint probability

distribution. We can obtain data and think in terms of probabilistic relationships among a few random variables at a time; we do not identify the joint

probabilities of several events.

As to the nature of these probabilities, consider first the introduction of the

toxic chemical. The probabilities of the values of CarcinogenicPotential will

be based on data involving this chemical and similar ones. However, this is

certainly not a repeatable experiment like a coin toss, and therefore the probabilities are not relative frequencies. They are subjective probabilities based

on a careful analysis of the situation. As to the medical application involving a set of entities, we often obtain the probabilities from estimates of relative frequencies involving entities in the set. For example, we might obtain

P (ChestXray = positive|LungCancer = present) by observing 1000 patients

with lung cancer and determining what fraction have positive chest X-rays.

However, as will be illustrated in Section 1.2.3, when we do Bayesian inference

using these probabilities, we are computing the probability of a specific individual being in some state, which means it is a subjective probability. Recall from

Section 1.1.1 that a relative frequency is not a property of any one of the trials

(patients), but rather it is a property of the entire sequence of trials. You may


feel that we are splitting hairs. Namely, you may argue the following: “This

subjective probability regarding a specific patient is obtained from a relative

frequency and therefore has the same value as it. We are simply calling it a

subjective probability rather than a relative frequency.” But even this is not

the case. Even if the probabilities used to do Bayesian inference are obtained

from frequency data, they are only estimates of the actual relative frequencies.

So they are subjective probabilities obtained from estimates of relative frequencies; they are not relative frequencies. When we manipulate them using Bayes’

theorem, the resultant probability is therefore also only a subjective probability.

Once we judge the probabilities for a given application, we can often obtain values in a joint probability distribution of the random variables. Theorem 1.5 in Section 1.3.3 provides a way to do this when there are many variables. For now, we illustrate the case of two variables. Suppose we only

identify the random variables LungCancer and ChestXray, and we judge the

prior probability P (LungCancer = present), and the conditional probabilities P (ChestXray = positive|LungCancer = present) and P (ChestXray =

positive|LungCancer = absent). Probabilities of values in a joint probability

distribution can be obtained from these probabilities using the rule for conditional probability as follows:

P (present, positive) = P (positive|present)P (present)

P (present, negative) = P (negative|present)P (present)

P (absent, positive) = P (positive|absent)P (absent)

P (absent, negative) = P (negative|absent)P (absent).

Note that we used our abbreviated notation. We see then that at the outset we

identify random variables and their probabilistic relationships, and values in a

joint probability distribution can then often be obtained from the probabilities

relating the random variables. So what is the sample space? We can think of the

sample space as simply being the Cartesian product of the sets of all possible

values of the random variables. For example, consider again the case where we

only identify the random variables LungCancer and ChestXray, and ascertain

probability values in a joint distribution as illustrated above. We can define the

following sample space:

Ω = {(present, positive), (present, negative), (absent, positive), (absent, negative)}.

We can consider each random variable a function on this space that maps

each tuple into the value of the random variable in the tuple. For example,

LungCancer would map (present, positive) and (present, negative) each into

present. We then assign each elementary event the probability of its corresponding event in the joint distribution. For example, we assign

P̂ ({(present, positive)}) = P (LungCancer = present, ChestXray = positive).

It is not hard to show that this does yield a probability function on Ω and

that the initially assessed prior probabilities and conditional probabilities are

the probabilities they notationally represent in this probability space. (This is a

special case of Theorem 1.5.)
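The two-variable construction above can be sketched in Python. The numeric values of the prior and the conditional probabilities, and all names in the code (p_hat, prob, lung_cancer, chest_xray), are assumptions chosen for illustration. Exact fractions are used so that the checks at the end hold without rounding error.

```python
from fractions import Fraction
from itertools import product

# Hypothetical assessed probabilities (illustrative values only).
p_present = Fraction(1, 1000)               # P(LungCancer = present)
p_pos_given = {"present": Fraction(3, 5),   # P(positive | present)
               "absent": Fraction(1, 50)}   # P(positive | absent)

cancer_space = ["present", "absent"]
xray_space = ["positive", "negative"]

def prior(c):
    return p_present if c == "present" else 1 - p_present

def conditional(x, c):
    p = p_pos_given[c]
    return p if x == "positive" else 1 - p

# Sample space: Cartesian product of the spaces of the two variables.
omega = list(product(cancer_space, xray_space))

# Probability of each elementary event, from the rule for conditional
# probability: P(c, x) = P(x | c) P(c).
p_hat = {(c, x): conditional(x, c) * prior(c) for (c, x) in omega}

# Each random variable is a function mapping a tuple to its own component.
def lung_cancer(t):
    return t[0]

def chest_xray(t):
    return t[1]

def prob(event):
    """Probability of the set of tuples for which `event` is true."""
    return sum(p for t, p in p_hat.items() if event(t))

total = prob(lambda t: True)
recovered_prior = prob(lambda t: lung_cancer(t) == "present")
recovered_conditional = prob(
    lambda t: lung_cancer(t) == "present" and chest_xray(t) == "positive"
) / recovered_prior
```

Under these assumed values, total equals 1, and recovered_prior and recovered_conditional equal the prior and conditional probability that were assessed at the outset. This is the two-variable instance of the claim that the assessed probabilities are the probabilities they notationally represent in the constructed probability space.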

Since random variables are actually identified first and only implicitly become functions on an implicit sample space, it seems we could develop the concept of a joint probability distribution without the explicit notion of a sample

space. Indeed, we do this next. Following this development, we give a theorem

showing that any such joint probability distribution is a joint probability distribution of the random variables with the variables considered as functions on

an implicit sample space. Definition 1.1 (of a probability function) and Definition 1.5 (of a random variable) can therefore be considered the fundamental

definitions for probability theory because they pertain both to applications

where sample spaces are directly identified and ones where random variables

are directly identified.

1.2.2 A Definition of Random Variables and Joint Probability Distributions for Bayesian Inference

For the purpose of modeling the types of problems discussed in the previous

subsection, we can define a random variable X as a symbol representing any

one of a set of values, called the space of X. For simplicity, we will assume

the space of X is countable, but the theory extends naturally to the case where

it is not. For example, we could identify the random variable LungCancer as

having the space {present, absent}. We use the notation X = x as a primitive

which is used in probability expressions. That is, X = x is not defined in terms

of anything else. For example, in an application, LungCancer = present means the

entity being modeled has lung cancer, but mathematically it is simply a primitive which is used in probability expressions. Given this definition and primitive,

we have the following direct definition of a joint probability distribution: