Linear Programming

Interior-Point Methods

D. Eiland

Linear Programming Problem

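LP is the optimization of a linear function subject to a set of constraints, and is normally expressed in the following (standard) form:

Minimize:

$$f(x) = c^T x$$

Subject to:

$$Ax = b, \qquad x \ge 0$$

where $x \in \mathbb{R}^n$, $A$ is an $m \times n$ matrix and $b \in \mathbb{R}^m$.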
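As a minimal sketch, the standard form can be held in plain Python lists so that the objective $f(x) = c^T x$ and the constraints $Ax = b$, $x \ge 0$ can be checked directly. The LP data (minimize $x_1 + 2x_2$ subject to $x_1 + x_2 = 1$) and the helper names `objective` and `is_feasible` are hypothetical, chosen only for illustration.

```python
def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))


def objective(c, x):
    """f(x) = c^T x."""
    return dot(c, x)


def is_feasible(A, b, x, tol=1e-9):
    """True if every row satisfies (Ax)_i = b_i within tol and every x_j >= 0."""
    rows_ok = all(abs(dot(row, x) - bi) <= tol for row, bi in zip(A, b))
    return rows_ok and all(xj >= -tol for xj in x)


# Hypothetical LP: minimize x1 + 2*x2 subject to x1 + x2 = 1, x1, x2 >= 0.
c = [1.0, 2.0]
A = [[1.0, 1.0]]
b = [1.0]

for x in ([1.0, 0.0], [0.5, 0.5], [1.5, -0.5]):
    print(x, is_feasible(A, b, x), objective(c, x))
```

The last point satisfies $Ax = b$ and has a lower objective than $x = (1, 0)$, but it violates $x \ge 0$; enforcing the sign constraint is what the barrier function addresses.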

Barrier Function

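To enforce the inequality $x \ge 0$ on the previous problem, a logarithmic barrier (penalty) term, weighted by a parameter $\mu > 0$, can be added to $f(x)$:

$$f_p(x) = c^T x - \mu \sum_{j=1}^{n} \ln(x_j)$$

Then, if any $x_j \to 0^+$, $f_p(x)$ trends toward $+\infty$, so a minimizer of $f_p$ stays strictly inside the region $x > 0$.

As $\mu \to 0$, $f_p(x)$ approaches $f(x)$ at every point with $x > 0$.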

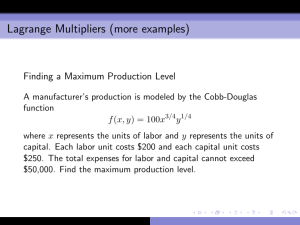
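A small sketch of $f_p$ shows both limits numerically. The data $c = (1, 2)$ is hypothetical, and returning `math.inf` for points outside $x > 0$ is an implementation convenience assumed here, not part of the slides.

```python
import math


def barrier_objective(c, x, mu):
    """f_p(x) = c^T x - mu * sum_j ln(x_j), defined for x > 0 and mu > 0."""
    if any(xj <= 0.0 for xj in x):
        return math.inf  # outside the interior: treat the barrier as +infinity
    linear = sum(cj * xj for cj, xj in zip(c, x))
    return linear - mu * sum(math.log(xj) for xj in x)


c = [1.0, 2.0]

# Approaching the boundary x_2 -> 0 makes the barrier term grow without bound.
for x2 in (1e-1, 1e-3, 1e-6):
    print("x2 =", x2, "f_p =", barrier_objective(c, [1.0, x2], mu=0.1))

# Shrinking mu at a fixed interior point recovers c^T x = 1.5.
for mu in (1.0, 1e-2, 1e-4):
    print("mu =", mu, "f_p =", barrier_objective(c, [0.5, 0.5], mu))
```

The growth near the boundary is only logarithmic, which is why the barrier steers the iterates without dominating the objective far from $x_j = 0$.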

Lagrange Multiplier

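To enforce the $Ax = b$ constraints, a Lagrange multiplier term (with multiplier vector $-y$) can be added to $f_p(x)$:

$$L(x, y) = c^T x - \mu \sum_{j=1}^{n} \ln(x_j) - y^T (Ax - b)$$

This gives a function with no explicit constraints (nonlinear, because of the barrier term) whose stationary point can be located.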
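The Lagrangian can be evaluated directly as a quick sanity check. This is a sketch assuming hypothetical data (minimize $x_1 + 2x_2$ subject to $x_1 + x_2 = 1$, with $\mu = 0.1$ and $y = 0.7$ picked arbitrarily); it shows that the multiplier term vanishes whenever $Ax = b$, so $L(x, y) = f_p(x)$ on the feasible set.

```python
import math


def lagrangian(c, A, b, x, y, mu):
    """L(x, y) = c^T x - mu * sum_j ln(x_j) - y^T (Ax - b), for x > 0."""
    linear = sum(cj * xj for cj, xj in zip(c, x))
    barrier = -mu * sum(math.log(xj) for xj in x)
    residual = [sum(aij * xj for aij, xj in zip(row, x)) - bi for row, bi in zip(A, b)]
    return linear + barrier - sum(yi * ri for yi, ri in zip(y, residual))


# Hypothetical data: minimize x1 + 2*x2 subject to x1 + x2 = 1.
c, A, b = [1.0, 2.0], [[1.0, 1.0]], [1.0]
mu, y = 0.1, [0.7]

# Feasible point (Ax = b): the multiplier term is zero, so L(x, y) = f_p(x).
print(lagrangian(c, A, b, [0.4, 0.6], y, mu))
# Infeasible point: the value is shifted by -y^T (Ax - b).
print(lagrangian(c, A, b, [0.4, 0.9], y, mu))
```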

Optimal Conditions

Previously, we found that the optimal solution of a function is located where its gradient (the vector of partial derivatives) is zero.

That implies that the optimal solution for $L(x, y)$ is found when:

$$\nabla_x L(x, y) = c - \mu X^{-1} e - A^T y = 0$$

$$\nabla_y L(x, y) = -(Ax - b) = 0$$

where $X = \operatorname{diag}(x)$ and $e = (1, \ldots, 1)^T$.
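One way to confirm the gradient formulas is to compare them with central finite differences of $L$. The sketch below assumes hypothetical data and an arbitrary interior point; `grad_x`, `grad_y` and `numeric_grad_x` are illustrative names, and $\nabla_y L$ is written in the equivalent form $b - Ax$.

```python
import math


def lagrangian(c, A, b, x, y, mu):
    """L(x, y) = c^T x - mu * sum_j ln(x_j) - y^T (Ax - b)."""
    value = sum(cj * xj for cj, xj in zip(c, x)) - mu * sum(math.log(xj) for xj in x)
    for row, bi, yi in zip(A, b, y):
        value -= yi * (sum(aij * xj for aij, xj in zip(row, x)) - bi)
    return value


def grad_x(c, A, x, y, mu):
    """Analytic gradient c - mu X^{-1} e - A^T y, with X = diag(x)."""
    m = len(A)
    return [c[j] - mu / x[j] - sum(A[i][j] * y[i] for i in range(m)) for j in range(len(x))]


def grad_y(A, b, x):
    """Analytic gradient -(Ax - b) = b - Ax."""
    return [bi - sum(aij * xj for aij, xj in zip(row, x)) for row, bi in zip(A, b)]


def numeric_grad_x(c, A, b, x, y, mu, h=1e-6):
    """Central finite differences of L with respect to each x_j."""
    g = []
    for j in range(len(x)):
        xp, xm = list(x), list(x)
        xp[j] += h
        xm[j] -= h
        g.append((lagrangian(c, A, b, xp, y, mu) - lagrangian(c, A, b, xm, y, mu)) / (2 * h))
    return g


# Hypothetical data: minimize x1 + 2*x2 subject to x1 + x2 = 1.
c, A, b = [1.0, 2.0], [[1.0, 1.0]], [1.0]
x, y, mu = [0.4, 0.7], [0.3], 0.1

print(grad_x(c, A, x, y, mu))
print(numeric_grad_x(c, A, b, x, y, mu))
print(grad_y(A, b, x))
```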

Optimal Conditions (Cont.)

By defining the vector $z = \mu X^{-1} e$ (so that $z_j = \mu / x_j > 0$), the previous set of optimal conditions can be rewritten as

$$L(x, y, z) = \begin{pmatrix} Ax - b \\ A^T y + z - c \\ Xz - \mu e \end{pmatrix} = 0$$
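The three blocks of $L(x, y, z)$ can be stacked into a single residual vector. In this sketch (hypothetical data; `kkt_residual` is an illustrative name) the LP optimum with $\mu = 0$ makes every entry zero, and choosing $z = \mu X^{-1} e$ zeroes the third block for any $x > 0$.

```python
def kkt_residual(A, b, c, x, y, z, mu):
    """Stack Ax - b, A^T y + z - c and Xz - mu e into one list."""
    m, n = len(A), len(x)
    primal = [sum(A[i][j] * x[j] for j in range(n)) - b[i] for i in range(m)]
    dual = [sum(A[i][j] * y[i] for i in range(m)) + z[j] - c[j] for j in range(n)]
    centering = [x[j] * z[j] - mu for j in range(n)]
    return primal + dual + centering


# Hypothetical data: minimize x1 + 2*x2 subject to x1 + x2 = 1, x >= 0.
A, b, c = [[1.0, 1.0]], [1.0], [1.0, 2.0]

# With mu = 0, the optimum x = (1, 0), y = 1, z = (0, 1) satisfies all three blocks.
print(kkt_residual(A, b, c, [1.0, 0.0], [1.0], [0.0, 1.0], mu=0.0))

# Defining z = mu X^{-1} e makes the last block (Xz - mu e) vanish.
x, mu = [0.4, 0.6], 0.1
z = [mu / xj for xj in x]
print(kkt_residual(A, b, c, x, [0.0], z, mu)[-2:])
```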

Newton’s Method

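Newton’s method defines an iterative mechanism for finding a function’s roots and is represented by:

$$v_{n+1} = v_n - \frac{f(v_n)}{f'(v_n)}$$

When $v_{n+1} = v_n$, $f(v_{n+1}) = 0$ (provided $f'(v_n)$ is finite and nonzero). For a vector-valued function, $f'(v_n)$ is replaced by the Jacobian matrix $J(v_n)$: each step solves $J(v_n)\,\Delta v = -f(v_n)$ and sets $v_{n+1} = v_n + \Delta v$.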
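A minimal scalar sketch of the iteration, stopping when successive iterates agree to a tolerance. The function $f(v) = v^2 - 2$ (root $\sqrt{2}$), the tolerance and the iteration cap are illustrative choices.

```python
def newton(f, fprime, v0, tol=1e-12, max_iter=50):
    """Iterate v_{n+1} = v_n - f(v_n) / f'(v_n) until successive iterates agree."""
    v = v0
    for _ in range(max_iter):
        v_next = v - f(v) / fprime(v)
        if abs(v_next - v) <= tol:  # v_{n+1} ~ v_n, so f(v_{n+1}) ~ 0
            return v_next
        v = v_next
    raise RuntimeError("Newton's method did not converge")


# Example: the positive root of f(v) = v^2 - 2 is sqrt(2).
root = newton(lambda v: v * v - 2.0, lambda v: 2.0 * v, v0=1.0)
print(root)
```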

Optimal Solution

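Applying this to $L(x, y, z)$, we can derive the following linear system for the Newton step $(\Delta x, \Delta y, \Delta z)$:

$$\begin{pmatrix} A & 0 & 0 \\ 0 & A^T & I \\ Z & 0 & X \end{pmatrix} \begin{pmatrix} \Delta x \\ \Delta y \\ \Delta z \end{pmatrix} = \begin{pmatrix} b - Ax \\ c - A^T y - z \\ \mu e - X Z e \end{pmatrix}$$

where $Z = \operatorname{diag}(z)$ and $I$ is the identity matrix. The matrix on the left is the Jacobian of $L(x, y, z)$, and the right-hand side is $-L(x, y, z)$.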
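To make the block structure concrete, the sketch below assembles the full $(2n + m) \times (2n + m)$ matrix and solves it with plain Gaussian elimination (partial pivoting). The data, the starting point and the names `solve_dense` and `newton_step` are hypothetical; practical solvers do not form this matrix explicitly.

```python
def solve_dense(M, r):
    """Solve M s = r by Gaussian elimination with partial pivoting."""
    n = len(M)
    aug = [list(row) + [ri] for row, ri in zip(M, r)]
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(aug[i][k]))  # pivot row
        aug[k], aug[p] = aug[p], aug[k]
        for i in range(k + 1, n):
            factor = aug[i][k] / aug[k][k]
            for j in range(k, n + 1):
                aug[i][j] -= factor * aug[k][j]
    s = [0.0] * n
    for i in range(n - 1, -1, -1):
        s[i] = (aug[i][n] - sum(aug[i][j] * s[j] for j in range(i + 1, n))) / aug[i][i]
    return s


def newton_step(A, b, c, x, y, z, mu):
    """Assemble [[A,0,0],[0,A^T,I],[Z,0,X]] and its right-hand side, then solve."""
    m, n = len(A), len(x)
    size = 2 * n + m                      # unknowns ordered (dx, dy, dz)
    M = [[0.0] * size for _ in range(size)]
    r = []
    for i in range(m):                    # block row 1: A dx = b - Ax
        for j in range(n):
            M[i][j] = A[i][j]
        r.append(b[i] - sum(A[i][j] * x[j] for j in range(n)))
    for j in range(n):                    # block row 2: A^T dy + dz = c - A^T y - z
        row = m + j
        for i in range(m):
            M[row][n + i] = A[i][j]
        M[row][n + m + j] = 1.0
        r.append(c[j] - sum(A[i][j] * y[i] for i in range(m)) - z[j])
    for j in range(n):                    # block row 3: Z dx + X dz = mu e - XZe
        row = m + n + j
        M[row][j] = z[j]
        M[row][n + m + j] = x[j]
        r.append(mu - x[j] * z[j])
    s = solve_dense(M, r)
    return s[:n], s[n:n + m], s[n + m:]


# Hypothetical data: minimize x1 + 2*x2 subject to x1 + x2 = 1, from an interior point.
A, b, c = [[1.0, 1.0]], [1.0], [1.0, 2.0]
x, y, z, mu = [0.5, 0.5], [0.0], [1.0, 2.0], 0.1
dx, dy, dz = newton_step(A, b, c, x, y, z, mu)
print(dx, dy, dz)
```

Because the first two block rows are linear, a full step ($a = 1$) already satisfies $Ax = b$ and $A^T y + z = c$; only $Xz = \mu e$ needs repeated iterations.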

Interior Point Algorithm

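This system can then be rewritten as three separate equations (solve the second block row for $\Delta z$, the third for $\Delta x$, and substitute both into the first):

$$A (X Z^{-1}) A^T \Delta y = b - Ax + A\left(x - \mu Z^{-1} e - X Z^{-1} (A^T y + z - c)\right)$$

$$\Delta z = -A^T \Delta y + c - A^T y - z$$

$$\Delta x = -X Z^{-1} \Delta z + \mu Z^{-1} e - x$$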
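The three equations translate almost line by line into code. This sketch uses a Cholesky factorization for the $m \times m$ matrix $A (X Z^{-1}) A^T$, which is one common choice of "matrix factorization" and an assumption here; the two-constraint data, the starting point and the function names are hypothetical.

```python
import math


def cholesky_solve(M, r):
    """Solve M s = r for symmetric positive definite M using M = L L^T."""
    k = len(M)
    L = [[0.0] * k for _ in range(k)]
    for i in range(k):
        for j in range(i + 1):
            t = M[i][j] - sum(L[i][p] * L[j][p] for p in range(j))
            L[i][j] = math.sqrt(t) if i == j else t / L[j][j]
    w = [0.0] * k                                    # forward solve L w = r
    for i in range(k):
        w[i] = (r[i] - sum(L[i][p] * w[p] for p in range(i))) / L[i][i]
    s = [0.0] * k                                    # back solve L^T s = w
    for i in range(k - 1, -1, -1):
        s[i] = (w[i] - sum(L[p][i] * s[p] for p in range(i + 1, k))) / L[i][i]
    return s


def reduced_newton_step(A, b, c, x, y, z, mu):
    """Compute (dx, dy, dz) from the three reduced equations."""
    m, n = len(A), len(x)
    d = [x[j] / z[j] for j in range(n)]                      # diagonal of X Z^{-1}
    r_d = [c[j] - sum(A[i][j] * y[i] for i in range(m)) - z[j] for j in range(n)]
    # v = x - mu Z^{-1} e - X Z^{-1} (A^T y + z - c)
    v = [x[j] - mu / z[j] + d[j] * r_d[j] for j in range(n)]
    rhs = [b[i] - sum(A[i][j] * x[j] for j in range(n)) + sum(A[i][j] * v[j] for j in range(n))
           for i in range(m)]
    M = [[sum(A[i][j] * d[j] * A[l][j] for j in range(n)) for l in range(m)] for i in range(m)]
    dy = cholesky_solve(M, rhs)                               # A (X Z^{-1}) A^T dy = rhs
    dz = [r_d[j] - sum(A[i][j] * dy[i] for i in range(m)) for j in range(n)]
    dx = [-d[j] * dz[j] + mu / z[j] - x[j] for j in range(n)]
    return dx, dy, dz


# Hypothetical data with two constraints and a strictly positive (infeasible) point.
A = [[1.0, 1.0, 1.0, 0.0], [1.0, 3.0, 0.0, 1.0]]
b = [4.0, 6.0]
c = [-1.0, -2.0, 0.0, 0.0]
x, y, z, mu = [1.0, 1.0, 1.0, 1.0], [0.0, 0.0], [1.0, 1.0, 1.0, 1.0], 0.2
dx, dy, dz = reduced_newton_step(A, b, c, x, y, z, mu)
print(dx, dy, dz)
```

Only an $m \times m$ system is factored, instead of the full $(2n + m) \times (2n + m)$ Newton matrix, which is the practical motivation for the reduction.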

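These equations are used as the basis for the interior-point algorithm:

1. Choose initial points $x_0, y_0, z_0$ (with $x_0 > 0$ and $z_0 > 0$) and select a value for $\tau$ between 0 and 1.

2. While $Ax - b \ne 0$ (in practice, until the residuals and the gap $x^T z$ fall below a small tolerance):

a) Solve the first equation above for $\Delta y$ (generally done by matrix factorization; $A X Z^{-1} A^T$ is symmetric positive definite when $A$ has full row rank).

b) Compute $\Delta x$ and $\Delta z$ from the other two equations.

c) Determine the maximum values for $x_{n+1}, y_{n+1}, z_{n+1}$ that do not violate the constraints $x \ge 0$ and $z \ge 0$, from:

$$x_{n+1} = x_n + a\,\Delta x$$

$$z_{n+1} = z_n + a\,\Delta z$$

$$y_{n+1} = y_n + a\,\Delta y$$

with $0 < a \le 1$.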
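Putting the steps together gives a compact loop. This is a sketch under several assumptions that the slides do not specify: $\tau$ is used as a fraction-to-the-boundary factor ($a = \min(1, \tau\, a_{\max})$), the barrier weight is reset each iteration to the hypothetical rule $\mu = 0.1\, x^T z / n$, and the loop stops once the residuals and the gap $x^T z$ are below `tol`. The LP data and function names are illustrative only.

```python
import math


def cholesky_solve(M, r):
    """Solve M s = r for symmetric positive definite M using M = L L^T."""
    k = len(M)
    L = [[0.0] * k for _ in range(k)]
    for i in range(k):
        for j in range(i + 1):
            t = M[i][j] - sum(L[i][p] * L[j][p] for p in range(j))
            L[i][j] = math.sqrt(t) if i == j else t / L[j][j]
    w = [0.0] * k
    for i in range(k):
        w[i] = (r[i] - sum(L[i][p] * w[p] for p in range(i))) / L[i][i]
    s = [0.0] * k
    for i in range(k - 1, -1, -1):
        s[i] = (w[i] - sum(L[p][i] * s[p] for p in range(i + 1, k))) / L[i][i]
    return s


def interior_point(A, b, c, x, y, z, tau=0.95, tol=1e-9, max_iter=200):
    """Primal-dual loop on the reduced Newton equations (assumed mu and step rules)."""
    m, n = len(A), len(x)
    for _ in range(max_iter):
        r_p = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(m)]
        r_d = [c[j] - sum(A[i][j] * y[i] for i in range(m)) - z[j] for j in range(n)]
        gap = sum(xj * zj for xj, zj in zip(x, z))
        if max(abs(r) for r in r_p + r_d) < tol and gap < tol:
            break
        mu = 0.1 * gap / n                                   # hypothetical centering rule
        d = [x[j] / z[j] for j in range(n)]
        v = [x[j] - mu / z[j] + d[j] * r_d[j] for j in range(n)]
        rhs = [r_p[i] + sum(A[i][j] * v[j] for j in range(n)) for i in range(m)]
        M = [[sum(A[i][j] * d[j] * A[l][j] for j in range(n)) for l in range(m)] for i in range(m)]
        dy = cholesky_solve(M, rhs)                                          # step (a)
        dz = [r_d[j] - sum(A[i][j] * dy[i] for i in range(m)) for j in range(n)]  # step (b)
        dx = [-d[j] * dz[j] + mu / z[j] - x[j] for j in range(n)]
        a_max = math.inf                  # step (c): keep x + a dx >= 0 and z + a dz >= 0
        for val, step in zip(x + z, dx + dz):
            if step < 0:
                a_max = min(a_max, -val / step)
        a = min(1.0, tau * a_max)         # stay strictly inside the positive orthant
        x = [x[j] + a * dx[j] for j in range(n)]
        z = [z[j] + a * dz[j] for j in range(n)]
        y = [y[i] + a * dy[i] for i in range(m)]
    return x, y, z


# Hypothetical LP: minimize -x1 - 2*x2 s.t. x1 + x2 + x3 = 4, x1 + 3*x2 + x4 = 6, x >= 0.
A = [[1.0, 1.0, 1.0, 0.0], [1.0, 3.0, 0.0, 1.0]]
b = [4.0, 6.0]
c = [-1.0, -2.0, 0.0, 0.0]
x, y, z = interior_point(A, b, c, [1.0] * 4, [0.0, 0.0], [1.0] * 4)
print([round(v, 6) for v in x], sum(cj * xj for cj, xj in zip(c, x)))
```

For this data the iterates should approach the vertex $x = (3, 1, 0, 0)$ with objective $-5$. Production solvers add safeguards (for example Mehrotra's predictor-corrector steps) that this sketch omits.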
