MSD


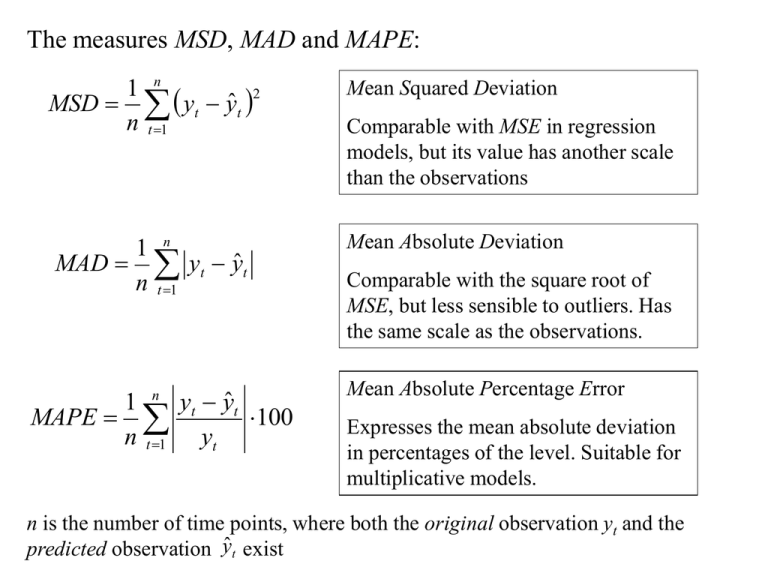

The measures MSD, MAD and MAPE:

Mean Squared Deviation

$$\text{MSD} = \frac{1}{n}\sum_{t=1}^{n}\left(y_t - \hat{y}_t\right)^2$$

Comparable with MSE in regression models, but its value has another scale than the observations.

Mean Absolute Deviation

$$\text{MAD} = \frac{1}{n}\sum_{t=1}^{n}\left|y_t - \hat{y}_t\right|$$

Comparable with the square root of MSE, but less sensitive to outliers. Has the same scale as the observations.

Mean Absolute Percentage Error

$$\text{MAPE} = \frac{1}{n}\sum_{t=1}^{n}\frac{\left|y_t - \hat{y}_t\right|}{y_t}\cdot 100$$

Expresses the mean absolute deviation in percentages of the level. Suitable for multiplicative models.

n is the number of time points, where both the original observation yt and the

predicted observation ŷt exist
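As a minimal sketch of the three measures, the function below computes them with NumPy over the time points where both the observation and the prediction exist. The function name `accuracy_measures` and the example numbers are assumptions made for illustration; missing predictions are marked with NaN.

```python
import numpy as np

def accuracy_measures(y, y_hat):
    """Return (MSD, MAD, MAPE) over time points where both values exist."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    # n counts only time points with both an observation and a prediction.
    ok = ~np.isnan(y) & ~np.isnan(y_hat)
    err = y[ok] - y_hat[ok]
    msd = np.mean(err ** 2)
    mad = np.mean(np.abs(err))
    # Absolute percentage error; assumes y_t != 0 at every used time point.
    mape = 100.0 * np.mean(np.abs(err / y[ok]))
    return msd, mad, mape

# Hypothetical data: the first prediction is missing, so n = 3.
y = [100.0, 110.0, 120.0, 130.0]
y_hat = [np.nan, 100.0, 126.0, 130.0]
msd, mad, mape = accuracy_measures(y, y_hat)
print(msd, mad, mape)
```

MSD is in squared units, MAD is in the units of the observations, and MAPE is a percentage, which is why the three values are not directly comparable with each other.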

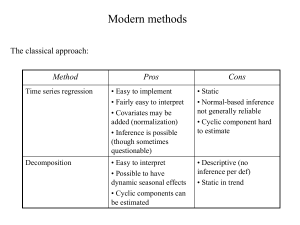

Modern methods

The classical approach:

| Method | Pros | Cons |
|---|---|---|
| Time series regression | Easy to implement; fairly easy to interpret; covariates may be added (normalization); inference is possible (though sometimes questionable) | Static; normal-based inference not generally reliable; cyclic component hard to estimate |
| Decomposition | Easy to interpret; possible to have dynamic seasonal effects; cyclic components can be estimated | Descriptive (no inference per def.); static in trend |

Explanation of the static behaviour:

The classical approach assumes all components except the irregular ones (i.e. $\varepsilon_t$ and $IR_t$) to be deterministic, i.e. fixed functions or constants.

To overcome this problem, all components should be allowed to be stochastic, i.e. to be random variates.

A time series yt should from a statistical point of view be treated as a

stochastic process.

We will interchangeably use the terms time series and process

depending on the situation.

Stationary and non-stationary time series

[Figure: two time series plotted against Index, one labelled Non-stationary and one labelled Stationary]

Characteristics for a stationary time series:

• Constant mean

• Constant variance

A time series with trend is non-stationary!


Box-Jenkins models

A stationary time series can be modelled on the basis of the serial correlations in it.

A non-stationary time series can be transformed into a stationary time series, modelled, and back-transformed to the original scale (e.g. for purposes of forecasting).

ARIMA models

ARIMA = Auto Regressive, Integrated, Moving Average

• Integrated: this part has to do with the transformation

• Auto Regressive and Moving Average: these parts can be modelled on a stationary series

Different types of transformation

1. From a series with linear trend to a series with no trend:

First-order differences: $z_t = y_t - y_{t-1}$

MTB > diff c1 c2

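[Figure: time series plot of the two variables "linear trend" and "no trend"]

Note that the differenced series no longer trends: it varies around a constant level (the slope of the original trend), which here is close to zero.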

2. From a series with quadratic trend to a series with no trend:

Second-order differences

$$w_t = z_t - z_{t-1} = (y_t - y_{t-1}) - (y_{t-1} - y_{t-2}) = y_t - 2y_{t-1} + y_{t-2}$$

MTB > diff 2 c3 c4

[Figure: time series plot of the two variables "quadratic trend" and "no trend 2"]
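The two differencing steps can also be sketched with NumPy, as an alternative to the Minitab commands. The series below are noise-free and invented purely for illustration, so that the effect of differencing is exact; `np.diff(y, n=2)` applies first-order differencing twice.

```python
import numpy as np

t = np.arange(10, dtype=float)
linear = 3.0 + 2.0 * t            # hypothetical series with linear trend (slope 2)
quadratic = 1.0 + 0.5 * t ** 2    # hypothetical series with quadratic trend

z = np.diff(linear)               # z_t = y_t - y_{t-1}
w = np.diff(quadratic, n=2)       # w_t = y_t - 2*y_{t-1} + y_{t-2}

# Back-transformation to the original scale: cumulative sums of the
# differences, started from the first original observation.
restored = linear[0] + np.concatenate(([0.0], np.cumsum(z)))

print(z)   # constant: the slope of the linear trend
print(w)   # constant: twice the quadratic coefficient
```

Each differencing step shortens the series by one observation, so `z` has 9 values and `w` has 8. The back-transformation is the step used when forecasts made on the differenced scale are returned to the original scale.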

3. From a series with non-constant variance (heteroscedastic) to a series with

constant variance (homoscedastic):

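Box-Cox transformations (per def. 1964)

$$g(y_t) = \begin{cases} \dfrac{(y_t + \lambda_2)^{\lambda_1} - 1}{\lambda_1} & \text{for } \lambda_1 \neq 0 \text{ and } y_t + \lambda_2 > 0 \\[1ex] \ln(y_t + \lambda_2) & \text{for } \lambda_1 = 0 \text{ and } y_t + \lambda_2 > 0 \end{cases}$$

Practically, $\lambda_2$ is chosen so that $y_t + \lambda_2$ is always > 0.

Simpler form: If we know that $y_t$ is always > 0 (as is the usual case for measurements)

$$g(y_t) = \begin{cases} \sqrt{y_t} & \text{if modest heteroscedasticity} \\ \sqrt[4]{y_t} & \text{if pronounced heteroscedasticity} \\ \ln y_t & \text{if heavy heteroscedasticity} \\ 1/y_t & \text{if extreme heteroscedasticity} \end{cases}$$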

The log transform ($\ln y_t$) usually also makes the data "more" normally distributed.

Example: Application of root ($\sqrt{y_t}$) and log ($\ln y_t$) transforms

[Figure: time series plot of the variables "original", "root" and "log"]
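A minimal sketch of the power-transformation family, assuming the two-parameter form with a power parameter and a shift. The names `box_cox`, `lam` and `shift` are hypothetical and chosen for this illustration only; the data are invented.

```python
import numpy as np

def box_cox(y, lam, shift=0.0):
    """Box-Cox transform of y + shift; lam = 0 gives the natural log."""
    y = np.asarray(y, dtype=float) + shift
    if np.any(y <= 0):
        raise ValueError("y + shift must be strictly positive")
    if lam == 0:
        return np.log(y)
    return (y ** lam - 1.0) / lam

y = np.array([1.0, 4.0, 9.0, 16.0])   # hypothetical positive measurements

root = np.sqrt(y)                     # simple root transform
log = np.log(y)                       # simple log transform
bc_half = box_cox(y, 0.5)             # equals 2 * (sqrt(y) - 1)
bc_zero = box_cox(y, 0.0)             # equals ln(y)
```

With power 0.5 the Box-Cox form is just a shifted and rescaled square root, which is why the simpler root and log transforms are usually sufficient for strictly positive data.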

AR-models (for stationary time series)

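Consider the model

$$y_t = \delta + \phi \cdot y_{t-1} + a_t$$

with $\{a_t\}$ i.i.d. with zero mean and constant variance $\sigma^2$,

and where $\delta$ (delta) and $\phi$ (phi) are (unknown) parameters.

Set $\delta = 0$ for the sake of simplicity $\Rightarrow E(y_t) = 0$

Let $R(k) = \operatorname{Cov}(y_t, y_{t-k}) = \operatorname{Cov}(y_t, y_{t+k}) = E(y_t \cdot y_{t-k}) = E(y_t \cdot y_{t+k})$

$R(0) = \operatorname{Var}(y_t)$ is assumed to be constant.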

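Now:

$$\begin{aligned}
R(0) &= E(y_t \cdot y_t) = E\big(y_t \cdot (\phi \cdot y_{t-1} + a_t)\big) = \phi \cdot E(y_t \cdot y_{t-1}) + E(y_t \cdot a_t) \\
&= \phi \cdot R(1) + E\big((\phi \cdot y_{t-1} + a_t) \cdot a_t\big) = \phi \cdot R(1) + \phi \cdot E(y_{t-1} \cdot a_t) + E(a_t \cdot a_t) \\
&= \phi \cdot R(1) + 0 + \sigma^2
\end{aligned}$$

(since $a_t$ is independent of $y_{t-1}$)

$$R(1) = E(y_t \cdot y_{t+1}) = E\big(y_t \cdot (\phi \cdot y_t + a_{t+1})\big) = \phi \cdot E(y_t \cdot y_t) + E(y_t \cdot a_{t+1}) = \phi \cdot R(0) + 0$$

(since $a_{t+1}$ is independent of $y_t$)

$$R(2) = E(y_t \cdot y_{t+2}) = E\big(y_t \cdot (\phi \cdot y_{t+1} + a_{t+2})\big) = \phi \cdot E(y_t \cdot y_{t+1}) + E(y_t \cdot a_{t+2}) = \phi \cdot R(1) + 0$$

(since $a_{t+2}$ is independent of $y_t$)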

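Yule-Walker equations:

$$\begin{aligned}
R(0) &= \phi \cdot R(1) + \sigma^2 \\
R(1) &= \phi \cdot R(0) \\
R(2) &= \phi \cdot R(1) \\
&\;\;\vdots \\
R(k) &= \phi \cdot R(k-1) = \dots = \phi^k \cdot R(0)
\end{aligned}$$

$$R(0) = \phi^2 \cdot R(0) + \sigma^2 \;\Rightarrow\; R(0) = \frac{\sigma^2}{1 - \phi^2}$$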

Note that for $R(0)$ to become positive and finite (which we require from a variance) the following must hold:

$$\phi^2 < 1 \;\Leftrightarrow\; -1 < \phi < 1$$

This is in effect the condition for an AR(1)-process to be weakly stationary.
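The variance result can be checked by simulation. The sketch below is only an illustration under stated assumptions: Gaussian innovations, a discarded burn-in period, and hypothetical parameter values; the function name `simulate_ar1` is not from the source.

```python
import numpy as np

def simulate_ar1(phi, sigma, n, seed=0, burn_in=500):
    """Simulate y_t = phi * y_{t-1} + a_t with a_t ~ N(0, sigma^2)."""
    rng = np.random.default_rng(seed)
    a = rng.normal(0.0, sigma, size=n + burn_in)
    y = np.zeros(n + burn_in)
    for t in range(1, n + burn_in):
        y[t] = phi * y[t - 1] + a[t]
    # Drop the burn-in so the arbitrary start value y_0 = 0 has no influence.
    return y[burn_in:]

phi, sigma = 0.8, 1.0                 # hypothetical values with |phi| < 1
y = simulate_ar1(phi, sigma, n=200_000)

theoretical_var = sigma ** 2 / (1.0 - phi ** 2)   # R(0)
sample_var = y.var()
sample_r1 = np.mean(y[1:] * y[:-1])               # estimates R(1) = phi * R(0)

print(theoretical_var, sample_var, sample_r1)
```

With $|\phi| \geq 1$ the formula for $R(0)$ is no longer positive and finite, and a simulated path would wander off instead of settling around a constant level.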

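Note now that

$$\rho_k = \operatorname{Corr}(y_t, y_{t-k}) = \frac{\operatorname{Cov}(y_t, y_{t-k})}{\sqrt{\operatorname{Var}(y_t) \cdot \operatorname{Var}(y_{t-k})}} = \frac{R(k)}{\sqrt{R(0) \cdot R(0)}} = \frac{R(k)}{R(0)} = \frac{\phi^k \cdot R(0)}{R(0)} = \phi^k$$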

$\rho_k$ is called the Autocorrelation function (ACF) of $y_t$.

"Auto" because it gives correlations within the same time series.

For pairs of different time series one can define the Cross correlation function, which gives correlations at different lags between series.

By studying the ACF it might be possible to identify the approximate magnitude of $\phi$.

Examples:

[Figures: ACF for AR(1) plotted against lag k = 1, …, 15, one panel each for phi = 0.1, 0.3, 0.5, 0.8, 0.99, -0.1, -0.5 and -0.8]
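Because $\rho_k = \phi^k$ for a stationary AR(1), the bar heights in plots like these can be tabulated directly. The following sketch does so for the parameter values named in the panel titles; the function name `ar1_acf` is an assumption for illustration.

```python
import numpy as np

def ar1_acf(phi, max_lag=15):
    """Theoretical ACF rho_k = phi**k, k = 1..max_lag, of a stationary AR(1)."""
    if abs(phi) >= 1:
        raise ValueError("AR(1) is stationary only for |phi| < 1")
    k = np.arange(1, max_lag + 1)
    return phi ** k

for phi in (0.1, 0.3, 0.5, 0.8, 0.99, -0.1, -0.5, -0.8):
    print(phi, np.round(ar1_acf(phi, max_lag=5), 3))
```

Positive values give bars that decay geometrically towards zero (very slowly for 0.99), while negative values give bars that alternate in sign with geometrically decaying magnitude.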

The look of an ACF can be similar for different kinds of time series, e.g. the ACF for an AR(1) with $\phi = 0.3$ could be approximately the same as the ACF for an auto-regressive time series of higher order than 1 (we will discuss higher-order AR-models later).

To do a less ambiguous identification we need another statistic:

The Partial Autocorrelation function (PACF):

$$\upsilon_k = \operatorname{Corr}(y_t, y_{t-k} \mid y_{t-k+1}, y_{t-k+2}, \dots, y_{t-1})$$

i.e. the conditional correlation between $y_t$ and $y_{t-k}$ given all observations in-between.

Note that $-1 \leq \upsilon_k \leq 1$

A concept sometimes hard to interpret, but it can be shown that for AR(1)-models with positive $\phi$ the PACF looks like

[Figure: PACF against lag k = 1, …, 15: a single positive bar at k = 1, no bars at higher lags]

and for AR(1)-models with negative $\phi$ the PACF looks like

[Figure: PACF against lag k = 1, …, 15: a single negative bar at k = 1, no bars at higher lags]
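One way to see this pattern numerically is to compute the PACF from the ACF with the Durbin-Levinson recursion. This is a sketch of one standard technique, not necessarily the derivation intended in these slides; the function name `pacf_from_acf` is hypothetical.

```python
import numpy as np

def pacf_from_acf(rho):
    """Durbin-Levinson recursion: PACF at lags 1..K from rho_1..rho_K."""
    rho = np.asarray(rho, dtype=float)
    K = len(rho)
    pacf = np.zeros(K)
    prev = np.zeros(0)   # coefficients phi_{k-1,1}, ..., phi_{k-1,k-1}
    for k in range(1, K + 1):
        if k == 1:
            phi_kk = rho[0]
            cur = np.array([phi_kk])
        else:
            # rho[k-2::-1] holds rho_{k-1}, ..., rho_1; rho[:k-1] holds rho_1, ..., rho_{k-1}
            num = rho[k - 1] - np.dot(prev, rho[k - 2::-1])
            den = 1.0 - np.dot(prev, rho[:k - 1])
            phi_kk = num / den
            cur = np.append(prev - phi_kk * prev[::-1], phi_kk)
        pacf[k - 1] = phi_kk
        prev = cur
    return pacf

lags = np.arange(1, 6)
pacf_pos = pacf_from_acf(0.8 ** lags)      # AR(1) with phi = 0.8
pacf_neg = pacf_from_acf((-0.5) ** lags)   # AR(1) with phi = -0.5
print(np.round(pacf_pos, 6), np.round(pacf_neg, 6))
```

For both inputs only the first value is non-zero, and it equals the AR(1) parameter itself, so the PACF cuts off after lag 1.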

Assume now that we have a sample y1, y2,…, yn from a time series

assumed to follow an AR(1)-model.

Example: Monthly exchange rates DKK/USD 1991-1998

[Figure: time series plot of the monthly DKK/USD exchange rate, vertical axis from 0 to 10]

The ACF and the PACF can be estimated from data by their sample

counterparts:

Sample Autocorrelation function (SAC):

$$r_k = \frac{\sum_{t=1}^{n-k} (y_t - \bar{y})(y_{t+k} - \bar{y})}{\sum_{t=1}^{n} (y_t - \bar{y})^2}$$

(if n is large; otherwise a scaling might be needed)
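The SAC formula translates directly into a few lines of NumPy. This is a minimal sketch without any small-sample scaling; the function name `sample_acf` and the toy data are assumptions for illustration.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelations r_1..r_max_lag (no small-sample scaling)."""
    y = np.asarray(y, dtype=float)
    n = len(y)
    d = y - y.mean()                  # deviations from the sample mean
    denom = np.sum(d ** 2)
    return np.array([np.sum(d[: n - k] * d[k:]) / denom
                     for k in range(1, max_lag + 1)])

r = sample_acf([1, 2, 3, 4, 5], max_lag=2)
print(r)   # r_1 = 0.4, r_2 = -0.1
```

For the toy series the deviations are -2, -1, 0, 1, 2, so the denominator is 10, the lag-1 numerator is 4 and the lag-2 numerator is -1.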

Sample Partial Autocorrelation function (SPAC)

Complicated structure, so not shown here

The variances of these two estimators can also be estimated, which gives an opportunity to test

$H_0\colon \rho_k = 0$ vs. $H_a\colon \rho_k \neq 0$

or

$H_0\colon \upsilon_k = 0$ vs. $H_a\colon \upsilon_k \neq 0$

for a particular value of k.

Estimated sample functions are usually plotted together with critical

limits based on estimated variances.
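The source does not say which variance estimate lies behind the critical limits. One common choice for the SAC is Bartlett's large-lag approximation, $\text{se}(r_k) \approx \sqrt{(1 + 2\sum_{j<k} r_j^2)/n}$, sketched below as an assumption. The function name, the 1.96 multiplier (an approximate 5% two-sided level) and the autocorrelation values are all hypothetical.

```python
import numpy as np

def acf_critical_limits(r, n, z=1.96):
    """Approximate critical limits for r_1..r_K via Bartlett's formula."""
    r = np.asarray(r, dtype=float)
    # Sum of squared autocorrelations at lags below k (zero for k = 1).
    lower = np.concatenate(([0.0], np.cumsum(r[:-1] ** 2)))
    se = np.sqrt((1.0 + 2.0 * lower) / n)
    return z * se

r = np.array([0.9, 0.8, 0.7])          # hypothetical sample autocorrelations
limits = acf_critical_limits(r, n=96)  # hypothetical series length
significant = np.abs(r) > limits       # bars outside the limits
print(np.round(limits, 4), significant)
```

At lag 1 the limit reduces to z divided by the square root of n, and the limits widen with the lag whenever the earlier autocorrelations are large.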

Example (cont.) DKK/USD exchange:

[Figures: SAC and SPAC plots with critical limits drawn in red]

Ignoring all bars within the red limits, we would identify the series as being an AR(1) with positive $\phi$.

The value of $\phi$ is approximately 0.9 (ordinate of the first bar in the SAC plot and in the SPAC plot).

Higher-order AR-models

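AR(2):

$$y_t = \delta + \phi_1 \cdot y_{t-1} + \phi_2 \cdot y_{t-2} + a_t$$

or

$$y_t = \delta + \phi_2 \cdot y_{t-2} + a_t$$

i.e. $y_{t-2}$ must be present

AR(3):

$$y_t = \delta + \phi_1 \cdot y_{t-1} + \phi_2 \cdot y_{t-2} + \phi_3 \cdot y_{t-3} + a_t$$

or other combinations with $\phi_3 \cdot y_{t-3}$

AR(p):

$$y_t = \delta + \phi_1 \cdot y_{t-1} + \dots + \phi_p \cdot y_{t-p} + a_t$$

i.e. different combinations with $\phi_p \cdot y_{t-p}$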

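Stationarity conditions:

For p > 2, difficult to express in closed form.

For p = 2:

$$y_t = \delta + \phi_1 \cdot y_{t-1} + \phi_2 \cdot y_{t-2} + a_t$$

The values of $\phi_1$ and $\phi_2$ must lie within the blue triangle in the figure:

[Figure: stationarity triangle in the ($\phi_1$, $\phi_2$)-plane]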
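For an AR(2) the stationarity triangle is commonly written as $\phi_1 + \phi_2 < 1$, $\phi_2 - \phi_1 < 1$ and $|\phi_2| < 1$. For general p, a numerical check is to require that all roots of $z^p - \phi_1 z^{p-1} - \dots - \phi_p = 0$ lie strictly inside the unit circle. The sketch below implements both checks; the function names and test points are assumptions for illustration.

```python
import numpy as np

def is_stationary(phis):
    """Root check for an AR(p) with coefficients phi_1..phi_p."""
    # Characteristic polynomial z^p - phi_1 z^(p-1) - ... - phi_p.
    coeffs = np.concatenate(([1.0], -np.asarray(phis, dtype=float)))
    return bool(np.all(np.abs(np.roots(coeffs)) < 1.0))

def in_ar2_triangle(phi1, phi2):
    """Closed-form stationarity region for an AR(2)."""
    return (phi1 + phi2 < 1) and (phi2 - phi1 < 1) and (abs(phi2) < 1)

# Hypothetical parameter pairs, chosen away from the triangle's boundary.
points = [(0.5, 0.3), (0.5, 0.6), (-0.5, 0.3), (-0.5, 0.6), (0.0, -1.1), (1.5, -0.7)]
results = [(is_stationary([p1, p2]), in_ar2_triangle(p1, p2)) for p1, p2 in points]
print(results)
```

The two checks agree on every test point, and the root check also works for p > 2, where no simple closed-form region is available.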

Typical patterns of ACF and PACF functions for higher-order stationary AR-models (AR(p)):

ACF: Similar pattern as for AR(1), i.e. (exponentially) decreasing bars, (most often) positive for $\phi_1$ positive and alternating for $\phi_1$ negative.

PACF: The first p values of $\upsilon_k$ are non-zero with decreasing magnitude. The rest are all zero (cut-off point at p). (Most often) all positive if $\phi_1$ is positive and alternating if $\phi_1$ is negative.

Examples:

[Figures: ACF and PACF against lag k = 1, …, 15 for an AR(2) with $\phi_1$ positive and for an AR(5) with $\phi_1$ negative]
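The ACF pattern for a higher-order model can be reproduced numerically. For a stationary AR(2), the Yule-Walker equations give $\rho_1 = \phi_1 / (1 - \phi_2)$ and $\rho_k = \phi_1 \rho_{k-1} + \phi_2 \rho_{k-2}$ for $k \geq 2$. The sketch below uses this recursion; the function name and parameter values are hypothetical.

```python
import numpy as np

def ar2_acf(phi1, phi2, max_lag=15):
    """Theoretical ACF rho_1..rho_max_lag of a stationary AR(2)."""
    rho = np.empty(max_lag + 1)
    rho[0] = 1.0
    rho[1] = phi1 / (1.0 - phi2)
    for k in range(2, max_lag + 1):
        rho[k] = phi1 * rho[k - 1] + phi2 * rho[k - 2]
    return rho[1:]

acf_pos = ar2_acf(0.5, 0.3, max_lag=6)    # phi_1 positive
acf_neg = ar2_acf(-0.5, 0.3, max_lag=6)   # phi_1 negative
print(np.round(acf_pos, 3))
print(np.round(acf_neg, 3))
```

With these values the first series decays with all bars positive, while the second has the same magnitudes with alternating signs. Setting the second coefficient to zero recovers the AR(1) result $\rho_k = \phi^k$.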