Introduction to TDDC78 Lab Series Lu Li Linköping University



Parts of the slides were developed by Usman Dastgeer

Goals

- Shared- and distributed-memory systems
- Programming parallelism (typical problems)


- Approach and solve
  - Partitioning
    - Domain decomposition
    - Functional decomposition
  - Communication
  - Agglomeration
  - Mapping

TDDC78 Labs: Memory-based Taxonomy

| Memory | Distributed | Shared | Distributed |
|---|---|---|---|
| Labs | 1 | 2 & 3 | 5 |
| Use | MPI | POSIX threads & OpenMP | MPI |

Lab 4 (tools): use the tools at every stage; they may save you time in Lab 5.

Information sources

- Compendium
  - Your primary source of information: http://www.ida.liu.se/~TDDC78/labs/
  - Comprehensive:
    - Environment description
    - Lab specification
    - Step-by-step instructions
- Others
  - Triolith: http://www.nsc.liu.se/systems/triolith/
  - MPI: http://www.mpi-forum.org/docs/
  - …

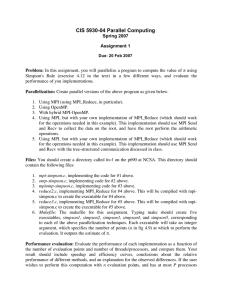


Learn about MPI

- Lab 1
  - Define MPI types
  - Send / Receive
  - Broadcast
  - Scatter / Gather
- Lab 5
  - Use virtual topologies
  - MPI_Issend / MPI_Probe / MPI_Reduce
  - Sending larger pieces of data
- Synchronize / MPI_Barrier

Lab-1 TDDC78: Image Filters with MPI

- Blur & Threshold
  - See compendium for details
- Your goal is to understand:
  - Define types
  - Send / Receive
  - Broadcast
  - Scatter / Gather
- For syntax and examples, refer to the MPI lecture slides
- Decompose domains
- Apply filter in parallel
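Decomposing the image domain usually means giving each process a contiguous block of rows. A minimal pure-C sketch, assuming the blur filter reads up to `radius` rows above and below each pixel, so a process needs its own rows plus a halo clamped to the image border; `row_range`, `own_rows` and `halo_rows` are hypothetical helpers, not part of the lab code.

```c
/* Hypothetical helper type: a half-open range of image rows [first, last). */
typedef struct {
    int first;
    int last;
} row_range;

/* Rows owned by `rank` when `height` rows are split as evenly as possible
 * over `nproc` processes: the first height % nproc ranks get one extra row. */
row_range own_rows(int height, int nproc, int rank)
{
    int base = height / nproc;
    int extra = height % nproc;
    row_range r;
    r.first = rank * base + (rank < extra ? rank : extra);
    r.last = r.first + base + (rank < extra ? 1 : 0);
    return r;
}

/* Owned rows widened by `radius` on each side and clamped to the image:
 * the rows a process must hold before it can blur its own block. */
row_range halo_rows(int height, int nproc, int rank, int radius)
{
    row_range r = own_rows(height, nproc, rank);
    r.first = (r.first - radius < 0) ? 0 : r.first - radius;
    r.last = (r.last + radius > height) ? height : r.last + radius;
    return r;
}
```

Only the owned rows are written back after filtering; the halo rows are read-only input, which is why the threshold filter (which looks at single pixels) needs no halo at all.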

MPI Types Example

```c
typedef struct {
    int id;
    double data[10];
} buf_t;                    // Composite type

buf_t item;                 // Element of the type
MPI_Datatype buf_t_mpi;     // MPI type to commit

int block_lengths[] = { 1, 10 };                       // Lengths of type elements
MPI_Datatype block_types[] = { MPI_INT, MPI_DOUBLE };  // Set types
MPI_Aint start, displ[2];

// MPI_Address and MPI_Type_struct were removed in MPI-3;
// MPI_Get_address and MPI_Type_create_struct replace them.
MPI_Get_address( &item, &start );
MPI_Get_address( &item.id, &displ[0] );
MPI_Get_address( &item.data[0], &displ[1] );
displ[0] -= start;          // Displacement relative to address of start
displ[1] -= start;          // Displacement relative to address of start

MPI_Type_create_struct( 2, block_lengths, displ, block_types, &buf_t_mpi );
MPI_Type_commit( &buf_t_mpi );
```
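The displacements above are just the byte offsets of the members inside `buf_t`, including any padding the compiler inserts between `id` and `data`. A pure-C sketch, assuming the same `buf_t` layout, that computes them by address subtraction (as the `MPI_Get_address` calls do) so they can be compared with `offsetof`; `struct_displacements` is a hypothetical helper.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct {
    int id;
    double data[10];
} buf_t;

/* Byte offsets of the two members: address of each member minus the
 * address of the struct, mirroring the MPI_Get_address arithmetic. */
void struct_displacements(const buf_t *item, ptrdiff_t displ[2])
{
    uintptr_t start = (uintptr_t)item;
    displ[0] = (ptrdiff_t)((uintptr_t)&item->id - start);
    displ[1] = (ptrdiff_t)((uintptr_t)&item->data[0] - start);
}
```

Since `offsetof(buf_t, id)` and `offsetof(buf_t, data)` yield the same values at compile time, they could also fill `displ[]` directly before calling `MPI_Type_create_struct`.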

Send-Receive

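```c
...
int s_data, r_data;
...
MPI_Request request;
MPI_Status status;
int partner = (my_id == 0) ? 1 : 0;  // Process 0 exchanges with 1, and 1 with 0

// Counts are in elements (one int), not bytes. MPI_Isend returns at once,
// so both processes can reach MPI_Recv without deadlocking.
MPI_Isend( &s_data, 1, MPI_INT, partner, 0, MPI_COMM_WORLD, &request );
MPI_Recv( &r_data, 1, MPI_INT, partner, 0, MPI_COMM_WORLD, &status );
MPI_Wait( &request, &status );       // s_data may be reused only after this
...
```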

Figure: program execution over time

- P0: SendTo(P1), then RecvFrom(P1)
- P1: SendTo(P0), then RecvFrom(P0)

Send-Receive Modes (1)

| SEND | Standard | Synchronous | Buffered | Ready |
|---|---|---|---|---|
| Blocking | MPI_Send | MPI_Ssend | MPI_Bsend | MPI_Rsend |
| Nonblocking | MPI_Isend | MPI_Issend | MPI_Ibsend | MPI_Irsend |

| RECEIVE | |
|---|---|
| Blocking | MPI_Recv |
| Nonblocking | MPI_Irecv |

Lab-4

Lab 5: Particles

- Moving particles
- Validate the pressure law: pV = nRT

- Dynamic interaction patterns
  - The number of particles that fly across borders is not static
  - You need advanced domain decomposition
  - Motivate your choice!
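Because the number of particles crossing a border changes every time step, each process has to gather its leaving particles into a buffer of varying length before sending them. A minimal sketch, assuming a hypothetical `particle_t` with 2D position and velocity and a vertical strip decomposition `[x_min, x_max)`: staying particles are compacted in place and leavers are copied to `out`. The returned count is the message length; the receiver can size its buffer with `MPI_Probe` followed by `MPI_Get_count`.

```c
#include <stddef.h>

/* Hypothetical particle record: position and velocity in 2D. */
typedef struct {
    double x, y;
    double vx, vy;
} particle_t;

/* Keeps particles with x_min <= x < x_max at the front of `local`
 * (preserving their order) and appends all others to `out`.
 * Updates *n_local to the number kept and returns the number moved. */
size_t extract_leavers(particle_t *local, size_t *n_local,
                       double x_min, double x_max, particle_t *out)
{
    size_t kept = 0, moved = 0;
    for (size_t i = 0; i < *n_local; ++i) {
        if (local[i].x >= x_min && local[i].x < x_max) {
            local[kept++] = local[i];   /* stays in this strip */
        } else {
            out[moved++] = local[i];    /* crossed a border */
        }
    }
    *n_local = kept;
    return moved;
}
```

A strip decomposition only needs left/right neighbours; a 2D block decomposition would split `out` further by direction, which is the kind of trade-off the lab asks you to motivate.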

Process Topologies (0)

- By default, processes are arranged into 1-dimensional arrays, and process ranks are computed accordingly.
- What if processes need to communicate in 2 or more dimensions?
- Use virtual topologies, which give a 2D (instead of 1D) arrangement of processes with convenient ranking schemes.

Process Topologies (1)

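```c
int dims[2];          // 2D matrix / grid
dims[0] = 2;          // 2 rows
dims[1] = 3;          // 3 columns
// Positive entries are left unchanged, so as written this grid needs
// nproc == 6; set both entries to 0 to let MPI choose the shape.
MPI_Dims_create( nproc, 2, dims );

int periods[2];
periods[0] = 1;       // Dimension 0 periodic: the row index wraps around
periods[1] = 0;       // Dimension 1 non-periodic: the column index does not
int reorder = 1;      // Re-order allowed

MPI_Comm grid_comm;
MPI_Cart_create( MPI_COMM_WORLD, 2, dims, periods,
                 reorder, &grid_comm );
```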
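Ranks inside a Cartesian communicator follow row-major order over the grid (the MPI standard specifies this numbering), so rank and coordinates are related by simple arithmetic; `reorder = 1` only means a process's rank in `grid_comm` may differ from its rank in `MPI_COMM_WORLD`. A pure-C sketch of that mapping for a 2D grid, handy for checking what `MPI_Cart_rank` and `MPI_Cart_coords` should return; `grid_rank` and `grid_coords` are hypothetical helpers.

```c
/* Row-major rank of `coords` in a grid with dims[0] rows, dims[1] columns. */
int grid_rank(const int dims[2], const int coords[2])
{
    return coords[0] * dims[1] + coords[1];
}

/* Inverse mapping: coordinates of `rank` in the same grid. */
void grid_coords(const int dims[2], int rank, int coords[2])
{
    coords[0] = rank / dims[1];
    coords[1] = rank % dims[1];
}
```

For the 2-by-3 grid above, coordinates (1, 2) map to rank 5 and rank 4 maps back to (1, 1).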

Process Topologies (2)

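```c
int my_coords[2];     // Cartesian process coordinates
int my_rank;          // Process rank in grid_comm
int right_nbr[2];     // Coordinates of the neighbour
int right_nbr_rank;   // Rank of the neighbour

MPI_Cart_get( grid_comm, 2, dims, periods, my_coords );
MPI_Cart_rank( grid_comm, my_coords, &my_rank );

// Next process along dimension 0. That dimension is periodic, so
// MPI_Cart_rank wraps the out-of-range coordinate around; in a
// non-periodic dimension an out-of-range coordinate would be erroneous.
right_nbr[0] = my_coords[0] + 1;
right_nbr[1] = my_coords[1];
MPI_Cart_rank( grid_comm, right_nbr, &right_nbr_rank );
```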
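In practice, `MPI_Cart_shift(grid_comm, direction, disp, &source, &dest)` returns both neighbours along one dimension, wrapping in periodic dimensions and returning `MPI_PROC_NULL` past a non-periodic border. The pure-C sketch below mimics only the `dest` rule for a 2D row-major grid; `shifted_rank` and `NO_NEIGHBOR` (a stand-in for `MPI_PROC_NULL`) are hypothetical.

```c
#define NO_NEIGHBOR (-1)  /* stand-in for MPI_PROC_NULL in this sketch */

/* Rank of the process `disp` steps away from `coords` along dimension `dim`
 * of a 2D row-major grid, wrapping around if periods[dim] is nonzero. */
int shifted_rank(const int dims[2], const int periods[2],
                 const int coords[2], int dim, int disp)
{
    int c[2] = { coords[0], coords[1] };
    c[dim] += disp;
    if (c[dim] < 0 || c[dim] >= dims[dim]) {
        if (!periods[dim])
            return NO_NEIGHBOR;
        c[dim] = ((c[dim] % dims[dim]) + dims[dim]) % dims[dim];
    }
    return c[0] * dims[1] + c[1];
}
```

Sending to or receiving from `MPI_PROC_NULL` is a no-op in MPI, which is why border processes can run the same exchange code as interior ones.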

Collective Communication (CC)

```c
...
// One processor (the root) sends to every other process in turn
for (int j = 1; j < nproc; j++) {
    MPI_Send( &message, sizeof(message_t), ... );
}
...
// All the others
MPI_Recv( &message, sizeof(message_t), ... );
```
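The loop above costs the root `nproc - 1` sequential sends. A collective such as `MPI_Bcast(&message, sizeof(message_t), MPI_BYTE, 0, MPI_COMM_WORLD)` lets the library choose a better algorithm. The pure-C sketch below simulates one common choice, a binomial tree rooted at rank 0, in which every process that already holds the data forwards it in each round, reaching all processes in ceil(log2(nproc)) rounds. The algorithm an actual MPI library uses is implementation-dependent, and `binomial_bcast_rounds` is a hypothetical helper.

```c
#include <stdbool.h>

/* Simulates a binomial-tree broadcast from rank 0 over `nproc` ranks.
 * In each round with distance `step` (1, 2, 4, ...), every rank r < step,
 * which already holds the data, sends to rank r + step if it exists.
 * Marks receivers in `has_data` (length nproc); returns the round count. */
int binomial_bcast_rounds(int nproc, bool has_data[])
{
    for (int r = 0; r < nproc; ++r)
        has_data[r] = (r == 0);
    int rounds = 0;
    for (int step = 1; step < nproc; step *= 2) {
        for (int r = 0; r < step && r + step < nproc; ++r)
            has_data[r + step] = true;   /* send r -> r + step */
        ++rounds;
    }
    return rounds;
}
```

With 8 processes the tree needs 3 rounds, while the loop keeps the root busy for 7 sends.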

CC: Scatter / Gather

Distributing (unevenly sized) chunks of data

```c
sendbuf = (int *) malloc( nproc * stride * sizeof(int) );
displs  = (int *) malloc( nproc * sizeof(int) );
scounts = (int *) malloc( nproc * sizeof(int) );
for (i = 0; i < nproc; ++i) {
    displs[i]  = ...
    scounts[i] = ...
}
// The receive count (here 100) must equal scounts[i] on process i
MPI_Scatterv( sendbuf, scounts, displs, MPI_INT,
              rbuf, 100, MPI_INT, root, comm );
```
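One possible way to fill `scounts` and `displs` for `MPI_Scatterv`, assuming `total` elements are split as evenly as possible (the first `total % nproc` ranks get one extra element) and stored back to back in `sendbuf`; `fill_counts_displs` is a hypothetical helper. MPI requires each rank's receive count to equal its own `scounts` entry.

```c
/* Splits `total` elements over `nproc` ranks as evenly as possible:
 * the first total % nproc ranks get one extra element. Chunks are stored
 * contiguously, so each displacement is the sum of the preceding counts. */
void fill_counts_displs(int total, int nproc, int scounts[], int displs[])
{
    int base = total / nproc;
    int extra = total % nproc;
    int offset = 0;
    for (int i = 0; i < nproc; ++i) {
        scounts[i] = base + (i < extra ? 1 : 0);
        displs[i] = offset;
        offset += scounts[i];
    }
}
```

For 10 elements over 4 ranks this gives counts 3, 3, 2, 2 at displacements 0, 3, 6, 8; the same arrays serve as `rcounts`/`displs` for the matching `MPI_Gatherv`.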

Summary

- Learning goals
  - Point-to-point communication
  - Probing / non-blocking send (choose)
  - Barriers & Wait = synchronization
  - Derived data types
  - Collective communications
  - Virtual topologies
- Send/receive modes
  - Use with care to keep your code portable, e.g. MPI_Bsend
  - "It works there but not here!"

MPI Labs at home?

- No problem: www.open-mpi.org
- Simple to install
- Simple to use