

CIS4930

Introduction to Data Mining

Classification

Peixiang Zhao

Tallahassee, Florida, 2016

Definition

• Given a collection of records (the training set)
– Each record contains a set of attributes; one of the attributes is the class
• Find a model for the class attribute as a function of the values of the other attributes
• Goal: previously unseen records should be assigned a class as accurately as possible
– A test set is used to determine the accuracy of the model
– Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it

Example: Distinguish Dogs from Cats

Deep Blue beat Kasparov at chess in 1997.

Watson beat the brightest trivia minds at Jeopardy in 2011.

Can you tell Fido from Mittens in 2013?

https://www.kaggle.com/c/dogs-vs-cats

[Figure: example images labeled Cat, Dog, Cat, Dog, followed by an unlabeled image]

What about this?

Example: AlphaGo


Illustration

Training Set:
Tid  Attrib1  Attrib2  Attrib3  Class
1    Yes      Large    125K     No
2    No       Medium   100K     No
3    No       Small    70K      No
4    Yes      Medium   120K     No
5    No       Large    95K      Yes
6    No       Medium   60K      No
7    Yes      Large    220K     No
8    No       Small    85K      Yes
9    No       Medium   75K      No
10   No       Small    90K      Yes

Learning algorithm → Induction (Learn Model) → Model

Test Set:
Tid  Attrib1  Attrib2  Attrib3  Class
11   No       Small    55K      ?
12   Yes      Medium   80K      ?
13   Yes      Large    110K     ?
14   No       Small    95K      ?
15   No       Large    67K      ?

Model → Deduction (Apply Model) → Class

Classification—A Two-Step Process

• Model construction: describing a set of predetermined classes
– Each tuple/sample is assumed to belong to a predefined class, as determined by the class label attribute
– The set of tuples used for model construction is the training set
– The model is represented as rules, decision trees, or mathematical formulae
• Model usage: classifying future or unknown objects
– Estimate the accuracy of the model
• The known label of each test sample is compared with the class predicted by the model
• Accuracy rate is the percentage of test set samples that are correctly classified by the model
• The test set must be independent of the training set (otherwise the accuracy estimate is over-optimistic because of overfitting)
– If the accuracy is acceptable, use the model to classify data whose class labels are not known

Step 1: Model Construction

Training Data:
NAME  RANK            YEARS  TENURED
Mike  Assistant Prof  3      no
Mary  Assistant Prof  7      yes
Bill  Professor       2      yes
Jim   Associate Prof  7      yes
Dave  Assistant Prof  6      no
Anne  Associate Prof  3      no

Training Data → Classification Algorithms → Classifier (Model):

IF rank = ‘professor’
OR years > 6
THEN tenured = ‘yes’

Step 2: Model Usage

Testing Data:
NAME     RANK            YEARS  TENURED
Tom      Assistant Prof  2      no
Merlisa  Associate Prof  7      no
George   Professor       5      yes
Joseph   Assistant Prof  7      yes

Testing Data → Classifier
Unseen Data: (Jeff, Professor, 4) → Classifier → Tenured?
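To make the two steps concrete, the rule from Step 1 can be applied first to the testing data and then to the unseen record. The following is a minimal Python sketch. The names `tenured`, `accuracy`, and `TEST_DATA` are hypothetical. The accuracy rate is the fraction of test samples that are classified correctly, as defined in the two-step process.

```python
# Testing data from Step 2: (name, rank, years, actual TENURED label)
TEST_DATA = [
    ("Tom", "Assistant Prof", 2, "no"),
    ("Merlisa", "Associate Prof", 7, "no"),
    ("George", "Professor", 5, "yes"),
    ("Joseph", "Assistant Prof", 7, "yes"),
]


def tenured(rank: str, years: int) -> str:
    """Classifier from Step 1: IF rank = 'professor' OR years > 6 THEN tenured = 'yes'."""
    return "yes" if rank.lower() == "professor" or years > 6 else "no"


def accuracy(records) -> float:
    """Fraction of test records whose known label matches the model's prediction."""
    correct = sum(tenured(rank, years) == label for _, rank, years, label in records)
    return correct / len(records)


print(accuracy(TEST_DATA))       # 0.75
print(tenured("Professor", 4))   # yes, the prediction for (Jeff, Professor, 4)
```

The rule gets only Merlisa wrong: she is an Associate Prof with 7 years who is not tenured. The estimated accuracy is therefore 3/4. If that is acceptable, the model labels Jeff as tenured.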

Supervised vs. Unsupervised Learning

• Supervised learning (classification)
– Supervision: the training data (observations, measurements, etc.) are accompanied by labels indicating the class of each observation
– New data is classified based on the training set
• Unsupervised learning (clustering)
– The class labels of the training data are unknown
– Given a set of measurements, observations, etc., the aim is to establish the existence of classes or clusters in the data

Prediction

• Classification
– predicts categorical class labels (discrete or nominal)
– constructs a model based on the training set and the values (class labels) of a classifying attribute, and uses the model to classify new data
• Numeric prediction
– models continuous-valued functions, i.e., predicts unknown or missing numeric values
• Applications
– Credit/loan approval
– Medical diagnosis: whether a tumor is cancerous or benign
– Fraud detection: whether a transaction is fraudulent
– Web page categorization: which category a page belongs to

Classification Techniques

• Decision tree based methods

• Naïve Bayes model

• Support Vector Machines (SVM)

• Ensemble methods

• Model evaluation

To be, or not to be, that is the question.

--- William Shakespeare


Decision Tree Induction

Splitting Attributes

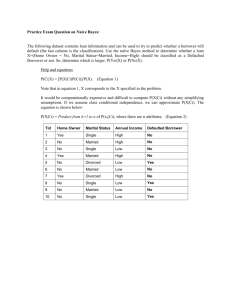

Tid Refund Marital

Status

Taxable

Income Cheat

1

Yes

Single

125K

No

2

No

Married

100K

No

3

No

Single

70K

No

4

Yes

Married

120K

No

5

No

Divorced 95K

Yes

6

No

Married

No

7

Yes

Divorced 220K

No

8

No

Single

85K

Yes

9

No

Married

75K

No

10

No

Single

90K

Yes

60K

Refund

Yes

No

NO

MarSt

Single, Divorced

TaxInc

< 80K

NO

Married

NO

> 80K

YES

Model: Decision Tree

10

Training Data

There could be more than one tree that

fits the same data!

12

Decision Tree Classification Task

The training set (Tid 1 to 10) and test set (Tid 11 to 15) are the same as in the Illustration above. Here, a tree induction algorithm learns the model from the training set (induction). The resulting decision tree is then applied to the test set to assign each record a class (deduction).

Apply Model to Test Data

Test Data:
Refund  Marital Status  Taxable Income  Cheat
No      Married         80K             ?

Start from the root of the tree:
Refund?
  Yes → NO
  No → MarSt?
    Married → NO
    Single, Divorced → TaxInc?
      < 80K → NO
      > 80K → YES

– Refund = No: follow the “No” branch to the MarSt node
– Marital Status = Married: follow the “Married” branch, which ends at a leaf labeled NO

Assign Cheat to “No”
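The traversal above can be written as nested tests, one per internal node. This is a hand-coded sketch of this particular tree, not a general tree interpreter, and the function name is hypothetical. Incomes are in thousands. The slide's tree does not say where an income of exactly 80K goes, so the sketch assumes it follows the right branch (>= 80K, as in the later Hunt's algorithm example).

```python
def classify_cheat(refund: str, marital_status: str, taxable_income_k: float) -> str:
    """Walk the decision tree from the root to a leaf and return the leaf's label."""
    if refund == "Yes":                  # Refund = Yes -> leaf NO
        return "No"
    if marital_status == "Married":      # Refund = No, MarSt = Married -> leaf NO
        return "No"
    # Refund = No, MarSt in {Single, Divorced} -> test TaxInc (80K assumed to go right)
    return "No" if taxable_income_k < 80 else "Yes"


TRAINING_SET = [  # (Refund, Marital Status, Taxable Income in K, Cheat)
    ("Yes", "Single", 125, "No"), ("No", "Married", 100, "No"),
    ("No", "Single", 70, "No"), ("Yes", "Married", 120, "No"),
    ("No", "Divorced", 95, "Yes"), ("No", "Married", 60, "No"),
    ("Yes", "Divorced", 220, "No"), ("No", "Single", 85, "Yes"),
    ("No", "Married", 75, "No"), ("No", "Single", 90, "Yes"),
]

print(classify_cheat("No", "Married", 80))                                 # No
print(all(classify_cheat(r, m, i) == c for r, m, i, c in TRAINING_SET))   # True
```

The tree also reproduces every label in the training set, which is why it "fits" the data.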

Decision Tree Classification Task

This slide uses the same training set and test set as above. The model learned by the tree induction algorithm is a decision tree, which is applied to the test set to assign each record a class (deduction).

A General Algorithm

• Basic algorithm (a greedy algorithm)
– The tree is constructed in a top-down, recursive, divide-and-conquer manner
– At the start, all the training examples are at the root
– Attributes are categorical (if continuous-valued, they are discretized in advance)
– Examples are partitioned recursively based on selected attributes
– Test attributes are selected on the basis of a heuristic or statistical measure
• Conditions for stopping partitioning
– All samples for a given node belong to the same class
– There are no remaining attributes for further partitioning; majority voting is used to label the leaf
– There are no samples left

Decision Tree Induction

• Let D_t be the set of training records that reach a node t
• General procedure:
– If D_t contains records that all belong to the same class y_t, then t is a leaf node labeled as y_t
– If D_t is an empty set, then t is a leaf node labeled by the default class y_d
– If D_t contains records that belong to more than one class, use an attribute test to split the data into smaller subsets, and recursively apply the procedure to each subset
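The three cases of the general procedure map directly onto a recursive function. The following is one possible Python rendering, not the course's reference code, and all function names are hypothetical. It rests on three assumptions:

- All attributes are categorical. Taxable Income is pre-discretized into <80K and >=80K, as the general algorithm requires.
- Splits are multi-way.
- The attribute test criterion is the weighted Gini index, which is defined later in these slides.

```python
from collections import Counter


def gini(labels):
    """Gini index of a list of class labels: 1 - sum_j p(j|t)^2."""
    n = len(labels)
    return 1.0 - sum((k / n) ** 2 for k in Counter(labels).values())


def majority(labels):
    return Counter(labels).most_common(1)[0][0]


def split_gini(records, labels, attr):
    """Weighted Gini of the children produced by a multi-way split on attr."""
    groups = {}
    for rec, y in zip(records, labels):
        groups.setdefault(rec[attr], []).append(y)
    return sum(len(g) / len(labels) * gini(g) for g in groups.values())


def hunt(records, labels, attributes, default):
    """Return a class label (leaf) or a tuple (attribute, branches, majority class)."""
    if not records:                        # D_t is empty: leaf with the default class y_d
        return default
    if len(set(labels)) == 1:              # all records in D_t belong to one class y_t
        return labels[0]
    if not attributes:                     # no attribute left to test: majority vote
        return majority(labels)
    best = min(attributes, key=lambda a: split_gini(records, labels, a))
    node_majority = majority(labels)
    rest = [a for a in attributes if a != best]
    branches = {}
    for value in {rec[best] for rec in records}:
        idx = [i for i, rec in enumerate(records) if rec[best] == value]
        branches[value] = hunt([records[i] for i in idx], [labels[i] for i in idx],
                               rest, node_majority)
    return (best, branches, node_majority)


def classify(tree, record):
    while isinstance(tree, tuple):
        attr, branches, node_majority = tree
        # a value not seen during training falls back to the node's majority class
        tree = branches.get(record[attr], node_majority)
    return tree


ROWS = [  # (Refund, Marital Status, Taxable Income in K, Cheat)
    ("Yes", "Single", 125, "No"), ("No", "Married", 100, "No"),
    ("No", "Single", 70, "No"), ("Yes", "Married", 120, "No"),
    ("No", "Divorced", 95, "Yes"), ("No", "Married", 60, "No"),
    ("Yes", "Divorced", 220, "No"), ("No", "Single", 85, "Yes"),
    ("No", "Married", 75, "No"), ("No", "Single", 90, "Yes"),
]
RECORDS = [{"Refund": r, "MarSt": m, "TaxInc": ">=80K" if inc >= 80 else "<80K"}
           for r, m, inc, _ in ROWS]
LABELS = [cheat for *_, cheat in ROWS]
TREE = hunt(RECORDS, LABELS, ["Refund", "MarSt", "TaxInc"], majority(LABELS))
print(TREE[0])   # MarSt
```

On this data the sketch picks MarSt rather than Refund at the root. MarSt has a weighted Gini of 0.300, versus about 0.343 for both Refund and the discretized income. The resulting tree still classifies all ten training records correctly: more than one tree can fit the same data.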

Example

Training Data: the 10 records (Tid, Refund, Marital Status, Taxable Income, Cheat) shown in the Decision Tree Induction example above.

(a)
Refund?
  Yes → Don’t Cheat
  No → ???

(b)
Refund?
  Yes → Don’t Cheat
  No → Marital Status?
    Single, Divorced → ???
    Married → Don’t Cheat

(c)
Refund?
  Yes → Don’t Cheat
  No → Marital Status?
    Single, Divorced → Taxable Income?
      < 80K → Don’t Cheat
      >= 80K → Cheat
    Married → Don’t Cheat

Decision Tree Induction

• Greedy strategy
– Split the training data based on an attribute test that optimizes a certain criterion
• Issues
– How to split the training records?
• How to specify the attribute test condition?
– Depends on attribute types: nominal, ordinal, continuous
– Depends on the number of ways to split
• How to determine the best split?
– Nodes with homogeneous class distributions are preferred
– Need a measure of node impurity
– When to stop splitting?

Examples of attribute test conditions:
– CarType? → Family | Sports | Luxury
– Size? → {Small, Medium} | {Large}
– Taxable Income: (i) binary split: Taxable Income > 80K? → Yes | No; (ii) multi-way split: Taxable Income? → < 10K | [10K,25K) | [25K,50K) | [50K,80K) | > 80K

How to Determine the Best Split

Before splitting: 10 records of class 0 (C0), 10 records of class 1 (C1)

Candidate test conditions:
– Own Car? Yes: C0: 6, C1: 4 | No: C0: 4, C1: 6
– Car Type? Family: C0: 1, C1: 3 | Sports: C0: 8, C1: 0 | Luxury: C0: 1, C1: 7
– Student ID? c1 ... c10: C0: 1, C1: 0 each | c11 ... c20: C0: 0, C1: 1 each

Which test condition is the best?

– C0: 5, C1: 5: non-homogeneous, high degree of impurity
– C0: 9, C1: 1: homogeneous, low degree of impurity

How to Determine the Best Split

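Before splitting: class counts C0 = N00, C1 = N01; impurity M0

– Test A? splits the node into Node N1 (Yes: C0 = N10, C1 = N11, impurity M1) and Node N2 (No: C0 = N20, C1 = N21, impurity M2). The weighted impurity of the children is M12.
– Test B? splits the node into Node N3 (Yes: C0 = N30, C1 = N31, impurity M3) and Node N4 (No: C0 = N40, C1 = N41, impurity M4). The weighted impurity of the children is M34.

Gain = M0 – M12 vs. M0 – M34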

Measure of Impurity: GINI

• Gini index for a given node t:

$$GINI(t) = 1 - \sum_j [p(j \mid t)]^2$$

(NOTE: p(j | t) is the relative frequency of class j at node t)
– Maximum (1 – 1/n_c, where n_c is the number of classes) when records are equally distributed among all classes, implying least interesting information
– Minimum (0) when all records belong to one class, implying most interesting information

C1 = 0, C2 = 6: Gini = 0.000
C1 = 1, C2 = 5: Gini = 0.278
C1 = 2, C2 = 4: Gini = 0.444
C1 = 3, C2 = 3: Gini = 0.500

Example

$$GINI(t) = 1 - \sum_j [p(j \mid t)]^2$$

– C1 = 0, C2 = 6: P(C1) = 0/6 = 0, P(C2) = 6/6 = 1; Gini = 1 – P(C1)² – P(C2)² = 1 – 0 – 1 = 0
– C1 = 1, C2 = 5: P(C1) = 1/6, P(C2) = 5/6; Gini = 1 – (1/6)² – (5/6)² = 0.278
– C1 = 2, C2 = 4: P(C1) = 2/6, P(C2) = 4/6; Gini = 1 – (2/6)² – (4/6)² = 0.444
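The Gini computation above takes only a few lines. The following minimal Python sketch works from the class counts at a node; the function name `gini` is our own choice.

```python
def gini(counts):
    """Gini index of a node from its class counts, e.g. [C1, C2]."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)


for counts in ([0, 6], [1, 5], [2, 4], [3, 3]):
    print(counts, round(gini(counts), 3))   # 0.0, 0.278, 0.444, 0.5
```

With n_c equally frequent classes the result is 1 – 1/n_c. For example, three classes with two records each give 2/3.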

Splitting Based on GINI

• Used in CART, SLIQ, SPRINT
– Classification And Regression Tree (CART) by Leo Breiman
– When a node p is split into k partitions (children), the quality of the split is computed as

$$GINI_{split} = \sum_{i=1}^{k} \frac{n_i}{n} GINI(i)$$

where n_i = number of records at child i and n = number of records at node p

Example: Binary Attributes

• Splits into two partitions
• Effect of weighting partitions:
– Larger and purer partitions are sought

Parent: C1 = 6, C2 = 6, Gini = 0.500

Test B? splits the parent into Node N1 (Yes) and Node N2 (No):
      N1  N2
C1    5   1
C2    2   4
Gini = 0.371

Gini(N1) = 1 – (5/7)² – (2/7)² = 0.408
Gini(N2) = 1 – (1/5)² – (4/5)² = 0.320
Gini(Children) = 7/12 × 0.408 + 5/12 × 0.320 = 0.371

Example: Categorical Attributes

• For each distinct value, gather counts for each class in the dataset
• Use the count matrix to make decisions

Multi-way split:
CarType  Family  Sports  Luxury
C1       1       2       1
C2       4       1       1
Gini     0.393

Two-way split (find the best partition of values):
CarType  {Sports, Luxury}  {Family}
C1       3                 1
C2       2                 4
Gini     0.400

CarType  {Family, Luxury}  {Sports}
C1       2                 2
C2       5                 1
Gini     0.419
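The weighted Gini of a split, GINI_split, combines the node-level Gini values using the child sizes as weights. The following sketch uses hypothetical function names and gives counts per child as (C1, C2). It reproduces the binary-attribute and CarType numbers from the two previous examples.

```python
def gini(counts):
    """Gini index of one node from its class counts."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)


def gini_split(children):
    """GINI_split = sum_i (n_i / n) * GINI(i); children holds the class counts of each child."""
    n = sum(sum(child) for child in children)
    return sum(sum(child) / n * gini(child) for child in children)


print(round(gini_split([[5, 2], [1, 4]]), 3))           # binary B: N1 | N2 -> 0.371
print(round(gini_split([[1, 4], [2, 1], [1, 1]]), 3))   # Family | Sports | Luxury -> 0.393
print(round(gini_split([[3, 2], [1, 4]]), 3))           # {Sports, Luxury} | {Family} -> 0.4
print(round(gini_split([[2, 5], [2, 1]]), 3))           # {Family, Luxury} | {Sports} -> 0.419
```

Among the CarType options, the multi-way split has the lowest weighted Gini, so it would be preferred.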

Example: Continuous Attributes

• Choices for the splitting value
– Each splitting value v has a count matrix associated with it
• Class counts in each of the partitions, A < v and A ≥ v
• Algorithm
– Sort the records on the values of the attribute
– Linearly scan these values, each time updating the count matrix and computing the Gini index
– Choose the split position that has the lowest Gini index

Cheat           No   No   No   Yes  Yes  Yes  No   No   No   No
Taxable Income  60   70   75   85   90   95   100  120  125  220   (sorted values)

Split position  55     65     72     80     87     92     97     110    122    172    230
Yes (<=, >)     0, 3   0, 3   0, 3   0, 3   1, 2   2, 1   3, 0   3, 0   3, 0   3, 0   3, 0
No  (<=, >)     0, 7   1, 6   2, 5   3, 4   3, 4   3, 4   3, 4   4, 3   5, 2   6, 1   7, 0
Gini            0.420  0.400  0.375  0.343  0.417  0.400  0.300  0.343  0.375  0.400  0.420

The lowest Gini index (0.300) is at split position 97.
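The sort-then-scan algorithm can be sketched in Python as follows; it is an illustration rather than a reference implementation, and the function names are hypothetical.

- Candidate split points are the midpoints between consecutive distinct values. The slide rounds these down, e.g. 97 for the midpoint of 95 and 100.
- The two endpoint positions are skipped, because they only reproduce the parent's Gini.
- After one O(n log n) sort, each candidate position costs O(1) count updates.

```python
def gini_counts(counts):
    n = sum(counts)
    return 0.0 if n == 0 else 1.0 - sum((c / n) ** 2 for c in counts)


def best_numeric_split(values, labels):
    """Return (threshold v, weighted Gini) of the best split A <= v vs. A > v."""
    pairs = sorted(zip(values, labels))          # sort once on the attribute values
    classes = sorted(set(labels))
    total = {c: labels.count(c) for c in classes}
    left = {c: 0 for c in classes}               # class counts of the "<= v" partition
    n = len(pairs)
    best_v, best_g = None, float("inf")
    for i in range(n - 1):
        left[pairs[i][1]] += 1                   # record i moves into the left partition
        if pairs[i][0] == pairs[i + 1][0]:
            continue                             # cannot split between equal values
        v = (pairs[i][0] + pairs[i + 1][0]) / 2
        n_left = i + 1
        left_counts = [left[c] for c in classes]
        right_counts = [total[c] - left[c] for c in classes]
        g = (n_left / n) * gini_counts(left_counts) + ((n - n_left) / n) * gini_counts(right_counts)
        if g < best_g:
            best_v, best_g = v, g
    return best_v, best_g


income = [125, 100, 70, 120, 95, 60, 220, 85, 75, 90]   # Taxable Income (K), Tid 1 to 10
cheat = ["No", "No", "No", "No", "Yes", "No", "No", "Yes", "No", "Yes"]
print(best_numeric_split(income, cheat))   # best split at 97.5 with Gini 0.3
```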

Alternative Splitting Criteria: Information Gain

• Entropy at a given node t:

$$Entropy(t) = -\sum_j p(j \mid t) \log_2 p(j \mid t)$$

(NOTE: p(j | t) is the relative frequency of class j at node t)
– Measures the homogeneity of a node
• Maximum (log₂ n_c) when records are equally distributed among all classes, implying least information
• Minimum (0) when all records belong to one class, implying most information
– Entropy-based computation is similar to the Gini index computation

Example

$$Entropy(t) = -\sum_j p(j \mid t) \log_2 p(j \mid t)$$

– C1 = 0, C2 = 6: P(C1) = 0/6 = 0, P(C2) = 6/6 = 1; Entropy = – 0 log₂ 0 – 1 log₂ 1 = – 0 – 0 = 0 (taking 0 log 0 = 0)
– C1 = 1, C2 = 5: P(C1) = 1/6, P(C2) = 5/6; Entropy = – (1/6) log₂ (1/6) – (5/6) log₂ (5/6) = 0.65
– C1 = 2, C2 = 4: P(C1) = 2/6, P(C2) = 4/6; Entropy = – (2/6) log₂ (2/6) – (4/6) log₂ (4/6) = 0.92

Information Gain

• Definition

$$GAIN_{split} = Entropy(p) - \left( \sum_{i=1}^{k} \frac{n_i}{n} Entropy(i) \right)$$

Parent node p is split into k partitions; n_i is the number of records in partition i
– Measures the reduction in entropy achieved because of the split
– Choose the split that achieves the most reduction (maximizes information gain)
– Used in ID3 and C4.5 by Ross Quinlan
– Disadvantage: tends to prefer splits that result in a large number of partitions, each being small but pure
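The following small Python sketch computes entropy and information gain, using base-2 logarithms and taking 0 log 0 as 0. It checks the three entropy examples. It then scores the Own Car, Car Type, and Student ID splits from the "How to Determine the Best Split" slide. The function names are hypothetical.

```python
import math


def entropy(counts):
    """Entropy (base 2) of a node from its class counts; 0 log 0 is taken as 0."""
    n = sum(counts)
    return sum(-(c / n) * math.log2(c / n) for c in counts if c > 0)


def info_gain(parent, children):
    """GAIN_split = Entropy(parent) - sum_i (n_i / n) * Entropy(child i)."""
    n = sum(parent)
    return entropy(parent) - sum(sum(ch) / n * entropy(ch) for ch in children)


for counts in ([0, 6], [1, 5], [2, 4]):
    print(counts, round(entropy(counts), 2))   # 0.0, 0.65, 0.92

parent = [10, 10]                               # 10 records of class C0, 10 of class C1
own_car = [[6, 4], [4, 6]]
car_type = [[1, 3], [8, 0], [1, 7]]
student_id = [[1, 0]] * 10 + [[0, 1]] * 10      # one record per student ID
for name, split in (("Own Car", own_car), ("Car Type", car_type), ("Student ID", student_id)):
    print(name, round(info_gain(parent, split), 3))
```

Student ID reaches the maximum possible gain of 1 bit, because every one of its 20 children is pure. This is exactly the disadvantage listed above: the split has the highest gain yet is useless for classifying new records.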

Gain Ratio

• Gain ratio:

$$GainRATIO_{split} = \frac{GAIN_{split}}{SplitINFO}, \qquad SplitINFO = -\sum_{i=1}^{k} \frac{n_i}{n} \log_2 \frac{n_i}{n}$$

Parent node p is split into k partitions; n_i is the number of records in partition i
– Adjusts information gain by the entropy of the partitioning (SplitINFO); higher-entropy partitioning (a large number of small partitions) is penalized
– Used in C4.5
– Designed to overcome the disadvantage of information gain
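The following sketch computes the gain ratio for the same three candidate splits. SplitINFO is simply the entropy of the partition sizes n_i, so the entropy helper is reused for it. The sketch assumes every split has at least two non-empty partitions; otherwise SplitINFO is 0. The function names are hypothetical.

```python
import math


def entropy(counts):
    n = sum(counts)
    return sum(-(c / n) * math.log2(c / n) for c in counts if c > 0)


def gain_ratio(parent, children):
    """GAIN_split / SplitINFO, where SplitINFO is the entropy of the child sizes."""
    n = sum(parent)
    sizes = [sum(ch) for ch in children]
    gain = entropy(parent) - sum(s / n * entropy(ch) for s, ch in zip(sizes, children))
    split_info = entropy(sizes)          # -sum_i (n_i / n) log2(n_i / n)
    return gain / split_info


parent = [10, 10]
splits = {
    "Own Car": [[6, 4], [4, 6]],
    "Car Type": [[1, 3], [8, 0], [1, 7]],
    "Student ID": [[1, 0]] * 10 + [[0, 1]] * 10,
}
for name, children in splits.items():
    print(name, round(gain_ratio(parent, children), 3))
```

Student ID's SplitINFO is log₂ 20 ≈ 4.32, which pulls its ratio down to about 0.231. That is below Car Type's 0.408, so the gain ratio no longer favors the split into many small partitions.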

Splitting Based on Classification Error

• Classification error at a node t:

$$Error(t) = 1 - \max_i P(i \mid t)$$

(NOTE: P(i | t) is the relative frequency of class i at node t)
• Measures the misclassification error made by a node
• Maximum (1 – 1/n_c) when records are equally distributed among all classes, implying least interesting information
• Minimum (0) when all records belong to one class, implying most interesting information

Example

$$Error(t) = 1 - \max_i P(i \mid t)$$

– C1 = 0, C2 = 6: P(C1) = 0/6 = 0, P(C2) = 6/6 = 1; Error = 1 – max(0, 1) = 1 – 1 = 0
– C1 = 1, C2 = 5: P(C1) = 1/6, P(C2) = 5/6; Error = 1 – max(1/6, 5/6) = 1 – 5/6 = 1/6
– C1 = 2, C2 = 4: P(C1) = 2/6, P(C2) = 4/6; Error = 1 – max(2/6, 4/6) = 1 – 4/6 = 1/3

Comparison of Splitting Criteria

• For a 2-class problem

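For a 2-class problem, all three impurity measures depend only on the fraction p of records in one class. The following sketch (hypothetical function name) evaluates them side by side at the class distributions used in the examples.

```python
import math


def impurities(p):
    """Gini, entropy and misclassification error of a 2-class node,
    where p is the fraction of records in the first class."""
    probs = [p, 1 - p]
    gini = 1 - sum(q * q for q in probs)
    ent = sum(-q * math.log2(q) for q in probs if q > 0)
    error = 1 - max(probs)
    return gini, ent, error


for p in (0.0, 1 / 6, 2 / 6, 0.5):
    g, e, err = impurities(p)
    print(f"p={p:.3f}  gini={g:.3f}  entropy={e:.3f}  error={err:.3f}")
```

All three measures are 0 for a pure node and reach their maximum at p = 0.5. At that point Gini and error are both 0.5, and entropy is 1 bit.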

When to Stop?

• Stop expanding a node when

– all the records belong to the same class

– all the records have similar attribute values

• Early termination

– Related to overfitting


Underfitting and Overfitting

Underfitting: when the model is too simple, both training and test errors are large

Overfitting due to Noise

The decision boundary is distorted by a noise point

Overfitting due to Insufficient Examples

The lack of data points in the lower half of the diagram makes it difficult to predict the class labels of that region correctly
• An insufficient number of training records in the region causes the decision tree to predict the test examples using other training records that are irrelevant to the classification task

Overfitting

• Overfitting results in decision trees that are more complex than necessary
– Training error no longer provides a good estimate of how well the tree will perform on previously unseen records
• Occam’s Razor
– Given two models with similar errors, one should prefer the simpler model over the more complex model
– For complex models, there is a greater chance that they were fitted accidentally to errors in the data

How to Address Overfitting

• Pre-pruning (early stopping rule)
– Stop the algorithm before it becomes a fully grown tree
– Typical stopping conditions for a node:
• Stop if all instances belong to the same class
• Stop if all the attribute values are the same
– More restrictive conditions:
• Stop if the number of instances is less than some user-specified threshold
• Stop if the class distribution of instances is independent of the available features (e.g., using a χ² test)
• Stop if expanding the current node does not improve impurity measures (e.g., Gini or information gain)

How to Address Overfitting

• Post-pruning
– Grow the decision tree to its entirety
– Trim the nodes of the decision tree in a bottom-up fashion
– If the generalization error improves after trimming, replace the sub-tree by a leaf node
– The class label of the leaf node is determined from the majority class of instances in the sub-tree

Decision Tree Based Classification

• Advantages:
– Inexpensive to construct
– Extremely fast at classifying unknown records
– Easy to interpret for small-sized trees
– Accuracy is comparable to other classification techniques for many simple data sets
• Example: C4.5
– Simple depth-first construction; uses information gain (normalized as the gain ratio)
– Sorts continuous attributes at each node
– Needs the entire data set to fit in memory, so it is unsuitable for large datasets
– You can download the software: http://www.rulequest.com/Personal/c4.5r8.tar.gz

Issues

• Data fragmentation
– The number of instances gets smaller as you traverse down the tree
– The number of instances at the leaf nodes could be too small to make any statistically significant decision
• Search strategy
– Finding an optimal decision tree is NP-hard
– The algorithm presented so far uses a greedy, top-down, recursive partitioning strategy to induce a reasonable solution
– Other strategies?
• Bottom-up
• Bi-directional

Issues

• Expressiveness
– Decision trees provide an expressive representation for learning discrete-valued functions
– But they do not generalize well to certain types of Boolean functions
• Example: parity function:
– Class = 1 if there is an even number of Boolean attributes that are True
– Class = 0 if there is an odd number of Boolean attributes that are True
• For accurate modeling, the tree must be complete
– Not expressive enough for modeling continuous variables
• Particularly when the test condition involves only a single attribute at a time

Decision Boundary

[Figure: a two-dimensional data set with x and y in [0, 1], partitioned by a decision tree whose root tests x < 0.43? and whose two branches test y < 0.47? and y < 0.33?; each of the four leaves contains records of a single class]

• The border line between two neighboring regions of different classes is known as the decision boundary
• The decision boundary is parallel to the axes because each test condition involves a single attribute at a time

Oblique Decision Trees

[Figure: the oblique test x + y < 1 separates Class = + from the other class]

• A test condition may involve multiple attributes
• More expressive representation
• Finding the optimal test condition is computationally expensive

Issues

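• Tree replication
– The same subtree appears in multiple branches

[Figure: a decision tree over attributes P, Q, R, S with 0/1 leaves, in which the subtree rooted at Q appears in more than one branch]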

Nearest Neighbor Classification

• Basic idea:
– If it walks like a duck and quacks like a duck, then it’s probably a duck
• Lazy learners
– They do not build models explicitly

[Figure: for a test record, compute its distance to the training records and choose k of the “nearest” records]

Nearest Neighbor Classification

[Figure: an unknown record among the stored training records]

Requires three things:
– The set of stored records
– A distance metric to compute the distance between records
– The value of k, the number of nearest neighbors to retrieve

To classify an unknown record:
– Compute its distance to the training records
– Identify the k nearest neighbors
– Use the class labels of the nearest neighbors to determine the class label of the unknown record (e.g., by taking a majority vote)

Definition of Nearest Neighbors

[Figure: the (a) 1-nearest, (b) 2-nearest, and (c) 3-nearest neighbors of a record X]

The k-nearest neighbors of a record x are the data points that have the k smallest distances to x

Nearest Neighbor Classification

• Compute the distance between two points:
– Euclidean distance

$$d(p, q) = \sqrt{\sum_i (p_i - q_i)^2}$$

• Determine the class from the nearest-neighbor list
– Take the majority vote of class labels among the k nearest neighbors
– Weigh the vote according to distance
• weight factor, w = 1/d²

Nearest Neighbor Classification

• Choosing the value of k:
– If k is too small, the classifier is sensitive to noise points
– If k is too large, the neighborhood may include points from other classes
• Scaling issues
– Attributes may have to be scaled to prevent distance measures from being dominated by one of the attributes
– Example:
• height of a person may vary from 1.5 m to 1.8 m
• weight of a person may vary from 90 lb to 300 lb
• income of a person may vary from $10K to $1M
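The nearest-neighbor steps above can be sketched in Python, together with distance weighting and attribute scaling. This is a minimal illustration that rests on a few assumptions:

- Min-max scaling to [0, 1] is used here as one common choice; the slide does not prescribe a method.
- `math.dist` (Python 3.8+) computes the Euclidean distance.
- The training records and their labels A and B are made up for the example.

```python
import math
from collections import defaultdict


def min_max_scaler(rows):
    """Return a function that rescales each attribute to [0, 1] using the ranges seen in rows."""
    lows = [min(col) for col in zip(*rows)]
    highs = [max(col) for col in zip(*rows)]

    def scale(row):
        return [(v - lo) / (hi - lo) if hi > lo else 0.0
                for v, lo, hi in zip(row, lows, highs)]

    return scale


def knn_predict(train_x, train_y, x, k=3):
    """Distance-weighted k-NN: each of the k nearest neighbors votes with weight 1/d^2."""
    neighbors = sorted(zip((math.dist(p, x) for p in train_x), train_y))[:k]
    votes = defaultdict(float)
    for d, label in neighbors:
        if d == 0:                       # exact match: its label decides
            return label
        votes[label] += 1.0 / d ** 2
    return max(votes, key=votes.get)


# Hypothetical records: (height in m, income in $) with made-up class labels
train_x = [[1.5, 10_000], [1.6, 20_000], [1.8, 900_000], [1.7, 1_000_000]]
train_y = ["A", "A", "B", "B"]
scale = min_max_scaler(train_x)
scaled_train = [scale(r) for r in train_x]
print(knn_predict(scaled_train, train_y, scale([1.55, 15_000]), k=3))   # A
```

The scaler is fitted on the training records only, and the same transformation is applied to each query. Without it, income differences measured in dollars would swamp height differences measured in meters.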

Bayes Classification: Probability

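• A probabilistic framework for solving classification problems

For random variables X and Y:
– Marginal probability: P(X = x)
– Joint probability: P(X = x, Y = y)
– Conditional probability: P(Y = y | X = x) = P(X = x, Y = y) / P(X = x)

Bayes Classification: Probability

– Sum rule: $P(X) = \sum_Y P(X, Y)$
– Product rule: $P(X, Y) = P(Y \mid X)\, P(X)$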

Bayes Theorem

$$P(C \mid A) = \frac{P(A \mid C)\, P(C)}{P(A)}, \qquad \text{posterior} \propto \text{likelihood} \times \text{prior}$$

• Given:
– A doctor knows that meningitis causes a stiff neck 50% of the time
– The prior probability of any patient having meningitis is 1/50,000
– The prior probability of any patient having a stiff neck is 1/20
• If a patient has a stiff neck, what’s the probability that he/she has meningitis?

$$P(M \mid S) = \frac{P(S \mid M)\, P(M)}{P(S)} = \frac{0.5 \times 1/50000}{1/20} = 0.0002$$

Naïve Bayes Classification

• Consider each attribute and the class label as random variables
– Given a record with attributes (A1, A2, …, An)
– The goal is to predict class C
• Specifically, we want to find the value of C that maximizes P(C | A1, A2, …, An)
• Can we estimate P(C | A1, A2, …, An) directly from data?
• Approach
– Compute the posterior probability P(C | A1, A2, …, An) for all values of C using Bayes’ theorem

Naïve Bayes Classification

$$P(C \mid A_1 A_2 \ldots A_n) = \frac{P(A_1 A_2 \ldots A_n \mid C)\, P(C)}{P(A_1 A_2 \ldots A_n)}$$

• Choose the value of C that maximizes P(C | A1, A2, …, An)
– Equivalent to choosing the value of C that maximizes P(A1, A2, …, An | C) P(C)
• How to estimate P(A1, A2, …, An | C)?
– Assume independence among the attributes Ai when the class is given:

$$P(A_1, A_2, \ldots, A_n \mid C_j) = P(A_1 \mid C_j)\, P(A_2 \mid C_j) \cdots P(A_n \mid C_j)$$

– We can estimate P(Ai | Cj) for all Ai and Cj
• A new point is classified to Cj if $P(C_j) \prod_i P(A_i \mid C_j)$ is maximal

How to Estimate Probabilities from Data?

Refund and Marital Status are categorical, Taxable Income is continuous, and Evade is the class.

Tid  Refund  Marital Status  Taxable Income  Evade
1    Yes     Single          125K            No
2    No      Married         100K            No
3    No      Single          70K             No
4    Yes     Married         120K            No
5    No      Divorced        95K             Yes
6    No      Married         60K             No
7    Yes     Divorced        220K            No
8    No      Single          85K             Yes
9    No      Married         75K             No
10   No      Single          90K             Yes

• Class: P(C) = N_c / N
– e.g., P(No) = 7/10, P(Yes) = 3/10
• For discrete attributes: P(A_i | C_k) = |A_ik| / N_{C_k}
– where |A_ik| is the number of instances having attribute value A_i and belonging to class C_k, and N_{C_k} is the number of instances of class C_k
– Examples:
P(Status = Married | No) = 4/7
P(Refund = Yes | Yes) = 0

How to Estimate Probabilities from Data?

• For continuous attributes
– Discretize the range into bins
– Two-way split: (A < v) or (A > v)
• Choose only one of the two splits as the new attribute
– Probability density estimation:
• Assume the attribute follows a normal distribution
• Use the data to estimate the parameters of the distribution (e.g., mean and standard deviation)
• Once the probability distribution is known, use it to estimate the conditional probability P(Ai | c)

How to Estimate Probabilities from Data?

This slide uses the same 10 training records (Tid, Refund, Marital Status, Taxable Income, Evade) as the previous table. Refund and Marital Status are categorical, Taxable Income is continuous, and Evade is the class.

Normal distribution, one for each (A_i, c_j) pair:

$$P(A_i \mid c_j) = \frac{1}{\sqrt{2\pi\sigma_{ij}^2}} \, e^{-\frac{(A_i - \mu_{ij})^2}{2\sigma_{ij}^2}}$$

For (Income, Class = No):
– If Class = No: sample mean = 110, sample variance = 2975 (sample standard deviation = √2975 ≈ 54.54)

$$P(\text{Income} = 120 \mid \text{No}) = \frac{1}{\sqrt{2\pi}\,(54.54)} \, e^{-\frac{(120 - 110)^2}{2(2975)}} = 0.0072$$

Example

Given a test record:

X = (Refund = No, Married, Income = 120K)

Naive Bayes classifier:
P(Refund = Yes | No) = 3/7
P(Refund = No | No) = 4/7
P(Refund = Yes | Yes) = 0
P(Refund = No | Yes) = 1
P(Marital Status = Single | No) = 2/7
P(Marital Status = Divorced | No) = 1/7
P(Marital Status = Married | No) = 4/7
P(Marital Status = Single | Yes) = 2/3
P(Marital Status = Divorced | Yes) = 1/3
P(Marital Status = Married | Yes) = 0

For taxable income:
If class = No: sample mean = 110, sample variance = 2975
If class = Yes: sample mean = 90, sample variance = 25

P(X | Class = No) = P(Refund = No | Class = No) × P(Married | Class = No) × P(Income = 120K | Class = No)
= 4/7 × 4/7 × 0.0072 = 0.0024

P(X | Class = Yes) = P(Refund = No | Class = Yes) × P(Married | Class = Yes) × P(Income = 120K | Class = Yes)
= 1 × 0 × 1.2 × 10⁻⁹ = 0

Since P(X | No) P(No) > P(X | Yes) P(Yes), we have P(No | X) > P(Yes | X)
=> Class = No
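The whole worked example can be reproduced with a short Python sketch. It counts values of the categorical attributes and fits one normal distribution per class to Taxable Income. The structure (`train`, `posterior_scores`, the tuple layout) is our own. `statistics.variance` uses the n – 1 denominator, which matches the sample variances on the slide.

```python
import math
import statistics
from collections import Counter, defaultdict

# Training records from the table: (Refund, Marital Status, Taxable Income in K, Evade)
DATA = [
    ("Yes", "Single", 125, "No"), ("No", "Married", 100, "No"),
    ("No", "Single", 70, "No"), ("Yes", "Married", 120, "No"),
    ("No", "Divorced", 95, "Yes"), ("No", "Married", 60, "No"),
    ("Yes", "Divorced", 220, "No"), ("No", "Single", 85, "Yes"),
    ("No", "Married", 75, "No"), ("No", "Single", 90, "Yes"),
]


def normal_pdf(x, mean, var):
    """Normal density used to estimate P(A_i | c_j) for a continuous attribute."""
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def train(data):
    class_counts = Counter(row[3] for row in data)
    value_counts = defaultdict(Counter)     # (attribute index, class) -> counts of each value
    incomes = defaultdict(list)
    for refund, status, income, cls in data:
        value_counts[(0, cls)][refund] += 1
        value_counts[(1, cls)][status] += 1
        incomes[cls].append(income)
    # per-class sample mean and sample variance (n - 1 denominator)
    income_params = {c: (statistics.mean(v), statistics.variance(v)) for c, v in incomes.items()}
    return class_counts, value_counts, income_params


def posterior_scores(model, refund, status, income):
    """P(C) * P(Refund | C) * P(Status | C) * P(Income | C) for every class C."""
    class_counts, value_counts, income_params = model
    n = sum(class_counts.values())
    scores = {}
    for cls, n_c in class_counts.items():
        mean, var = income_params[cls]
        scores[cls] = (n_c / n
                       * (value_counts[(0, cls)][refund] / n_c)
                       * (value_counts[(1, cls)][status] / n_c)
                       * normal_pdf(income, mean, var))
    return scores


model = train(DATA)
scores = posterior_scores(model, "No", "Married", 120)
print(max(scores, key=scores.get))   # No
```

The "Yes" score is exactly 0 because no married record evades. The smoothing techniques in the next slide address this kind of zero.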

Smoothing

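• If one of the conditional probabilities is zero, then the entire product becomes zero
• Probability estimation:

$$\text{Original: } P(A_i \mid C) = \frac{N_{ic}}{N_c} \qquad \text{Laplace: } P(A_i \mid C) = \frac{N_{ic} + 1}{N_c + c} \qquad \text{m-estimate: } P(A_i \mid C) = \frac{N_{ic} + m p}{N_c + m}$$

N_ic: number of class-C records having attribute value A_i; N_c: number of class-C records
c: number of distinct values of attribute A_i
p: prior probability
m: parameter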
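The three estimators differ only in how they adjust the counts. The following sketch applies them to P(Marital Status = Married | Evade = Yes), which is 0 in the example. It rests on a few assumptions:

- c is the number of distinct values of the attribute (three marital statuses), so the smoothed estimates over Single, Divorced, and Married sum to 1.
- m = 3 and p = 1/3 are illustrative choices.
- Exact fractions are used to keep the results readable.

```python
from fractions import Fraction


def original_estimate(n_ic, n_c):
    """N_ic / N_c"""
    return Fraction(n_ic, n_c)


def laplace_estimate(n_ic, n_c, c):
    """(N_ic + 1) / (N_c + c), with c = number of distinct attribute values."""
    return Fraction(n_ic + 1, n_c + c)


def m_estimate(n_ic, n_c, m, p):
    """(N_ic + m p) / (N_c + m), with prior p and weight m."""
    return (n_ic + m * Fraction(p)) / (n_c + m)


# 0 of the 3 "Yes" records are Married
print(original_estimate(0, 3))                  # 0
print(laplace_estimate(0, 3, 3))                # 1/6
print(m_estimate(0, 3, 3, Fraction(1, 3)))      # 1/6
```

With m = c and p = 1/c the m-estimate coincides with the Laplace estimate. A larger m pulls the estimate more strongly toward the prior p.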

Another Example

Name           Give Birth  Can Fly  Live in Water  Have Legs  Class
human          yes         no       no             yes        mammals
python         no          no       no             no         non-mammals
salmon         no          no       yes            no         non-mammals
whale          yes         no       yes            no         mammals
frog           no          no       sometimes      yes        non-mammals
komodo         no          no       no             yes        non-mammals
bat            yes         yes      no             yes        mammals
pigeon         no          yes      no             yes        non-mammals
cat            yes         no       no             yes        mammals
leopard shark  yes         no       yes            no         non-mammals
turtle         no          no       sometimes      yes        non-mammals
penguin        no          no       sometimes      yes        non-mammals
porcupine      yes         no       no             yes        mammals
eel            no          no       yes            no         non-mammals
salamander     no          no       sometimes      yes        non-mammals
gila monster   no          no       no             yes        non-mammals
platypus       no          no       no             yes        mammals
owl            no          yes      no             yes        non-mammals
dolphin        yes         no       yes            no         mammals
eagle          no          yes      no             yes        non-mammals

Test record:
Give Birth  Can Fly  Live in Water  Have Legs  Class
yes         no       yes            no         ?

A: attributes, M: mammals, N: non-mammals

P(A | M) = 6/7 × 6/7 × 2/7 × 2/7 = 0.06
P(A | N) = 1/13 × 10/13 × 3/13 × 4/13 = 0.0042
P(A | M) P(M) = 0.06 × 7/20 = 0.021
P(A | N) P(N) = 0.0042 × 13/20 = 0.0027

P(A | M) P(M) > P(A | N) P(N)
=> Mammals
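The mammal example follows the same recipe, with categorical attributes only. The following sketch uses exact fractions; the tuple layout and function name are hypothetical, and the rows transcribe the table above.

```python
from fractions import Fraction
from math import prod

# (Give Birth, Can Fly, Live in Water, Have Legs, Class) for the 20 animals
ANIMALS = [
    ("yes", "no", "no", "yes", "mammals"),              # human
    ("no", "no", "no", "no", "non-mammals"),            # python
    ("no", "no", "yes", "no", "non-mammals"),           # salmon
    ("yes", "no", "yes", "no", "mammals"),              # whale
    ("no", "no", "sometimes", "yes", "non-mammals"),    # frog
    ("no", "no", "no", "yes", "non-mammals"),           # komodo
    ("yes", "yes", "no", "yes", "mammals"),             # bat
    ("no", "yes", "no", "yes", "non-mammals"),          # pigeon
    ("yes", "no", "no", "yes", "mammals"),              # cat
    ("yes", "no", "yes", "no", "non-mammals"),          # leopard shark
    ("no", "no", "sometimes", "yes", "non-mammals"),    # turtle
    ("no", "no", "sometimes", "yes", "non-mammals"),    # penguin
    ("yes", "no", "no", "yes", "mammals"),              # porcupine
    ("no", "no", "yes", "no", "non-mammals"),           # eel
    ("no", "no", "sometimes", "yes", "non-mammals"),    # salamander
    ("no", "no", "no", "yes", "non-mammals"),           # gila monster
    ("no", "no", "no", "yes", "mammals"),               # platypus
    ("no", "yes", "no", "yes", "non-mammals"),          # owl
    ("yes", "no", "yes", "no", "mammals"),              # dolphin
    ("no", "yes", "no", "yes", "non-mammals"),          # eagle
]


def nb_scores(rows, query):
    """P(A | C) * P(C) for each class C, assuming conditional independence of attributes."""
    scores = {}
    for cls in sorted({r[-1] for r in rows}):
        members = [r for r in rows if r[-1] == cls]
        likelihood = prod(Fraction(sum(r[i] == v for r in members), len(members))
                          for i, v in enumerate(query))
        scores[cls] = likelihood * Fraction(len(members), len(rows))
    return scores


scores = nb_scores(ANIMALS, ("yes", "no", "yes", "no"))
print(max(scores, key=scores.get))   # mammals
```

The exact scores are about 0.0210 for mammals and 0.0027 for non-mammals, matching the rounded figures above.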

Naïve Bayes: Summary

• Robust to isolated noise points
• Handles missing values by ignoring the instance during probability estimate calculations
• Robust to irrelevant attributes
• The independence assumption may not hold for some attributes
– Use other techniques such as Bayesian Belief Networks (BBN)

Model Evaluation

• Metrics for performance evaluation
– How to evaluate the performance of a model?
• Methods for performance evaluation
– How to obtain reliable estimates?
• Methods for model comparison
– How to compare the relative performance among competing models?

Metrics for Performance Evaluation

• Focus on the predictive capability of a model
– Rather than on how long it takes to classify records or build models, scalability, etc.
• Confusion matrix:

                          PREDICTED CLASS
                          Class=Yes               Class=No
ACTUAL CLASS  Class=Yes   a: TP (true positive)   b: FN (false negative)
              Class=No    c: FP (false positive)  d: TN (true negative)

$$\text{Accuracy} = \frac{a + d}{a + b + c + d} = \frac{TP + TN}{TP + TN + FP + FN}$$

Limitation of Accuracy

• Consider a 2-class problem
– Number of Class 0 examples = 9990
– Number of Class 1 examples = 10
• If the model predicts everything to be class 0, accuracy is 9990/10000 = 99.9%
– Accuracy is misleading because the model does not detect any class 1 example

Cost matrix:
                          PREDICTED CLASS
C(i|j)                    Class=Yes    Class=No
ACTUAL CLASS  Class=Yes   C(Yes|Yes)   C(No|Yes)
              Class=No    C(Yes|No)    C(No|No)

C(i|j): cost of misclassifying a class j example as class i

Computing Cost of Classification

Cost matrix:
                  PREDICTED CLASS
C(i|j)            +      -
ACTUAL CLASS  +   -1     100
              -   1      0

Model M1:
                  PREDICTED CLASS
                  +      -
ACTUAL CLASS  +   150    40
              -   60     250
Accuracy = 80%, Cost = 3910

Model M2:
                  PREDICTED CLASS
                  +      -
ACTUAL CLASS  +   250    45
              -   5      200
Accuracy = 90%, Cost = 4255
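Both numbers for each model follow directly from the two matrices. The following minimal sketch assumes the confusion and cost matrices are stored as dictionaries keyed by (actual, predicted) class; the function names are hypothetical.

```python
# Confusion matrices as {(actual, predicted): count}
M1 = {("+", "+"): 150, ("+", "-"): 40, ("-", "+"): 60, ("-", "-"): 250}
M2 = {("+", "+"): 250, ("+", "-"): 45, ("-", "+"): 5, ("-", "-"): 200}
# Cost matrix C(i|j), keyed by (actual class j, predicted class i)
COST = {("+", "+"): -1, ("+", "-"): 100, ("-", "+"): 1, ("-", "-"): 0}


def accuracy(cm):
    correct = sum(n for (actual, predicted), n in cm.items() if actual == predicted)
    return correct / sum(cm.values())


def total_cost(cm, cost):
    """Sum of count * cost over all cells of the confusion matrix."""
    return sum(n * cost[cell] for cell, n in cm.items())


for name, cm in (("M1", M1), ("M2", M2)):
    print(name, accuracy(cm), total_cost(cm, COST))
# M1 0.8 3910
# M2 0.9 4255
```

M2 is more accurate, but a false negative costs 100 here, so its 45 false negatives make it the more expensive model.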

Cost vs. Accuracy

Count:
                          PREDICTED CLASS
                          Class=Yes   Class=No
ACTUAL CLASS  Class=Yes   a           b
              Class=No    c           d

Cost:
                          PREDICTED CLASS
                          Class=Yes   Class=No
ACTUAL CLASS  Class=Yes   p           q
              Class=No    q           p

N = a + b + c + d, Accuracy = (a + d)/N

Cost is a linear function of accuracy if
1. C(Yes|No) = C(No|Yes) = q
2. C(Yes|Yes) = C(No|No) = p

Cost = p (a + d) + q (b + c)
= p (a + d) + q (N – a – d)
= q N – (q – p)(a + d)
= N [q – (q – p) × Accuracy]

Cost-Sensitive Measures

$$\text{Precision } (p) = \frac{a}{a + c} \qquad \text{Recall } (r) = \frac{a}{a + b} \qquad \text{F-measure } (F) = \frac{2rp}{r + p} = \frac{2a}{2a + b + c}$$

Precision is biased towards C(Yes|Yes) & C(Yes|No)
Recall is biased towards C(Yes|Yes) & C(No|Yes)
F-measure is biased towards all except C(No|No)
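The three measures can be computed from the cell names a (TP), b (FN), and c (FP) of the confusion matrix. The following sketch uses a hypothetical function name and takes model M1 from the cost example as input.

```python
def precision_recall_f(a, b, c):
    """a = TP, b = FN, c = FP, using the confusion-matrix cell names."""
    precision = a / (a + c)
    recall = a / (a + b)
    f_measure = 2 * a / (2 * a + b + c)   # equals 2rp / (r + p)
    return precision, recall, f_measure


# Model M1 from the cost example: TP = 150, FN = 40, FP = 60
p, r, f = precision_recall_f(150, 40, 60)
print(round(p, 3), round(r, 3), round(f, 3))   # 0.714 0.789 0.75
```

None of the three uses d (TN), which is why the F-measure is insensitive to C(No|No).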

Methods for Performance Evaluation

• Holdout
– Reserve 2/3 for training and 1/3 for testing
• Random subsampling
– Repeat holdout k times; accuracy = average of the accuracies
• Cross validation
– Partition the data into k disjoint subsets
– k-fold: train on k-1 partitions, test on the remaining one
– Leave-one-out: k = n
• Stratified sampling
• Bootstrap
– Sampling with replacement
– Works well with small datasets
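The following sketch shows one way k-fold cross-validation might assign records to folds. The helper is hypothetical and not stratified: it shuffles indices without looking at class labels. Each fold is used once as the test set while the other k – 1 folds form the training set. Setting k = n gives leave-one-out.

```python
import random


def k_fold_indices(n, k, seed=0):
    """Yield (train, test) index lists for k-fold cross validation.

    The n record indices are shuffled once and cut into k disjoint folds of
    nearly equal size; each fold serves exactly once as the test set.
    """
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


splits = list(k_fold_indices(10, 5))
print([len(test) for _, test in splits])   # [2, 2, 2, 2, 2]
loo = list(k_fold_indices(10, 10))         # leave-one-out: k = n
```

The cross-validated accuracy is then the average of the k per-fold test accuracies.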

Methods For Model Comparison

• ROC (Receiver Operating Characteristic)
– The ROC curve plots the TP rate (on the y-axis) against the FP rate (on the x-axis)
– The performance of each classifier is represented as a point on the ROC curve
• Changing the threshold of the algorithm, the sample distribution, or the cost matrix changes the location of the point
(TP rate, FP rate):
• (0,0): declare everything to be the negative class
• (1,1): declare everything to be the positive class
• (1,0): ideal
• Diagonal line:
– Random guessing
– Below the diagonal line: the prediction is the opposite of the true class

Using ROC for Model Comparison

• No model consistently outperforms the other
– M1 is better for small FPR
– M2 is better for large FPR
• Area Under the ROC Curve (AUC)
– Ideal: area = 1
– Random guess: area = 0.5
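An ROC curve can be traced from a classifier's scores by lowering the decision threshold one distinct score at a time. Each time, the (FP rate, TP rate) pair is recorded, and the AUC then follows from the trapezoidal rule. The following sketch assumes labels 1 (positive) and 0 (negative) and at least one record of each class. The scores are made up, and the function names are hypothetical.

```python
def roc_points(scores, labels):
    """(FP rate, TP rate) points obtained by sweeping the threshold down through the scores."""
    pairs = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    pos = sum(labels)
    neg = len(labels) - pos
    tp = fp = 0
    points = [(0.0, 0.0)]                  # threshold above every score
    for i, (score, label) in enumerate(pairs):
        if label == 1:
            tp += 1
        else:
            fp += 1
        # emit a point only after every record sharing this score is counted (ties)
        if i == len(pairs) - 1 or pairs[i + 1][0] != score:
            points.append((fp / neg, tp / pos))
    return points


def auc(points):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


pts = roc_points([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 0])
print(pts)        # [(0.0, 0.0), (0.0, 0.5), (0.5, 0.5), (0.5, 1.0), (1.0, 1.0)]
print(auc(pts))   # 0.75
```

A classifier that ranks every positive above every negative reaches AUC = 1, the ideal case above.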

Test of Significance

• Given two models:
– Model M1: accuracy = 85%, tested on 30 instances
– Model M2: accuracy = 75%, tested on 5000 instances
• Can we say M1 is better than M2?
– How much confidence can we place on the accuracy of M1 and M2?
– Can the difference in performance be explained as a result of random fluctuations in the test set?
• Given x (# of correct predictions), acc = x/N, and N (# of test instances), can we predict p (the true accuracy of the model)?

Confidence Interval for Accuracy

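• For large test sets (N > 30),
– acc has a normal distribution with mean p and variance p(1 – p)/N

$$P\left(Z_{\alpha/2} \le \frac{acc - p}{\sqrt{p(1-p)/N}} \le Z_{1-\alpha/2}\right) = 1 - \alpha$$

[Figure: standard normal density with area 1 – α between Z_{α/2} and Z_{1–α/2}]

• Confidence interval for p:

$$p = \frac{2N \cdot acc + Z_{\alpha/2}^2 \pm Z_{\alpha/2}\sqrt{Z_{\alpha/2}^2 + 4N \cdot acc - 4N \cdot acc^2}}{2\left(N + Z_{\alpha/2}^2\right)}$$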

Confidence Interval for Accuracy

• Consider a model that produces an accuracy of 80% when evaluated on 100 test instances:

– N = 100, acc = 0.8

– Let $1 - \alpha = 0.95$ (95% confidence)

– From the probability table, $Z_{\alpha/2} = 1.96$

$1-\alpha$    $Z_{\alpha/2}$
0.99          2.58
0.98          2.33
0.95          1.96
0.90          1.65

N           50      100     500     1000    5000
p(lower)    0.670   0.711   0.763   0.774   0.789
p(upper)    0.888   0.866   0.833   0.824   0.811
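The interval formula can be checked numerically. Below is a minimal sketch that assumes the normal approximation above holds. The helper name is illustrative. For acc = 0.8 and Z = 1.96, it agrees with the p(lower)/p(upper) values above to within rounding in the third decimal place.

```python
import math


def accuracy_interval(acc, n, z):
    """Lower and upper roots of the quadratic in p from the confidence-interval formula."""
    center = 2 * n * acc + z ** 2
    spread = z * math.sqrt(z ** 2 + 4 * n * acc - 4 * n * acc ** 2)
    denom = 2 * (n + z ** 2)
    return (center - spread) / denom, (center + spread) / denom


# The interval narrows as the number of test instances grows.
for n in (50, 100, 500, 1000, 5000):
    lo, hi = accuracy_interval(0.8, n, 1.96)
    print(n, round(lo, 3), round(hi, 3))
```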

Support Vector Machine (SVM)

• Problem: find a linear hyperplane (decision boundary) that will separate the data

SVM

• One possible solution: B1

SVM

• Another possible solution: B2

SVM

• Other possible solutions

SVM

• Which one is better? B1 or B2?

• How do you define better?


SVM

• Find the hyperplane that maximizes the margin

– B1 is better than B2

[Figure: hyperplanes B1 and B2 with their margin boundaries b11, b12 and b21, b22; B1 has the larger margin]

SVM

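Decision boundary B1: $\mathbf{w} \cdot \mathbf{x} + b = 0$

Margin hyperplanes b11 and b12: $\mathbf{w} \cdot \mathbf{x} + b = \pm 1$

$f(\mathbf{x}) = \begin{cases} 1 & \text{if } \mathbf{w} \cdot \mathbf{x} + b \ge 1 \\ -1 & \text{if } \mathbf{w} \cdot \mathbf{x} + b \le -1 \end{cases}$

$\text{Margin} = \frac{2}{\|\mathbf{w}\|}$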
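Below is a small sketch of the decision rule and the margin for a hyperplane given by a weight vector w and bias b. The values in the usage lines are hypothetical. For a new point, the usual rule is the sign of w · x + b. The f(x) cases above describe where the training points must lie.

```python
import math


def decision_value(w, b, x):
    """Compute w . x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(w, b, x):
    """Assign +1 or -1 according to the side of w . x + b = 0 on which x falls."""
    return 1 if decision_value(w, b, x) >= 0 else -1


def margin(w):
    """Distance between w . x + b = 1 and w . x + b = -1, i.e. 2 / ||w||."""
    return 2.0 / math.sqrt(sum(wi * wi for wi in w))


# Hypothetical hyperplane: w = (3, 4), b = -2, so ||w|| = 5 and the margin is 0.4.
w, b = (3.0, 4.0), -2.0
print(margin(w))                   # 0.4
print(classify(w, b, (1.0, 1.0)))  # 1  (w . x + b = 5)
print(classify(w, b, (0.0, 0.0)))  # -1 (w . x + b = -2)
```

A smaller ||w|| means a wider margin, which is why maximizing the margin turns into minimizing ||w||.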

SVM

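• We want to maximize: $\text{Margin} = \frac{2}{\|\mathbf{w}\|}$

– Which is equivalent to minimizing: $L(\mathbf{w}) = \frac{\|\mathbf{w}\|^2}{2}$

– But subject to the following constraints:

$f(\mathbf{x}_i) = \begin{cases} 1 & \text{if } \mathbf{w} \cdot \mathbf{x}_i + b \ge 1 \\ -1 & \text{if } \mathbf{w} \cdot \mathbf{x}_i + b \le -1 \end{cases}$

i.e., $y_i(\mathbf{w} \cdot \mathbf{x}_i + b) \ge 1$ for every training record $(\mathbf{x}_i, y_i)$

– This is a constrained optimization problem

• It can be solved with numerical approaches (e.g., quadratic programming)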
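The slide solves this problem with quadratic programming. The sketch below swaps in a simpler alternative for illustration: full-batch subgradient descent on the soft-margin objective (λ/2)·||w||² + average hinge loss max(0, 1 − y_i(w · x_i + b)). When λ is small, this approaches the maximum-margin solution on separable data. The toy data, λ, learning rate and epoch count are all assumptions.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))


def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=1000):
    """Full-batch subgradient descent on (lam / 2) * ||w||^2 + mean hinge loss.

    A soft-margin stand-in for the quadratic-programming solution; labels must be +1 / -1.
    """
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wi for wi in w]  # gradient of the regularization term
        grad_b = 0.0
        for xi, yi in zip(X, y):
            if yi * (dot(w, xi) + b) < 1:  # constraint y_i (w . x_i + b) >= 1 is violated
                for k in range(d):
                    grad_w[k] -= yi * xi[k] / n
                grad_b -= yi / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w, b, x):
    return 1 if dot(w, x) + b >= 0 else -1


# Hypothetical, linearly separable toy data.
X = [(2.0, 1.0), (3.0, 2.0), (2.5, 3.0), (-1.0, -2.0), (-2.0, -1.0), (-3.0, -2.5)]
Y = [1, 1, 1, -1, -1, -1]
w, b = train_linear_svm(X, Y)
print([predict(w, b, x) for x in X] == Y)
print("margin:", 2 / dot(w, w) ** 0.5)
```

A quadratic-programming solver (as in LibSVM) returns the exact optimum. This loop only gets close to it, which is enough to show how the constraint y_i(w · x_i + b) ≥ 1 drives the updates.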

Nonlinear SVM

• What if the decision boundary is not linear?

– Transform the data into a higher-dimensional space in which the data points become linearly separable

LibSVM: https://www.csie.ntu.edu.tw/~cjlin/libsvm/
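Here is a minimal illustration of the transformation idea, using a hypothetical feature map φ(x1, x2) = (x1, x2, x1² + x2²). In 2-D, no straight line separates points inside the unit circle from points around it. In the 3-D space, the plane z3 = 1 does. LibSVM is not used here; the map and weights are chosen by hand.

```python
def phi(x):
    """Hypothetical map from 2-D to 3-D: (x1, x2) -> (x1, x2, x1^2 + x2^2)."""
    x1, x2 = x
    return (x1, x2, x1 * x1 + x2 * x2)


def linear_rule(z, w=(0.0, 0.0, 1.0), b=-1.0):
    """A linear classifier in the transformed space: +1 if w . z + b > 0, else -1."""
    return 1 if sum(wi * zi for wi, zi in zip(w, z)) + b > 0 else -1


# Class -1 inside the unit circle, class +1 outside. The origin lies inside the convex
# hull of the four outer points, so no line separates the classes in the original 2-D space.
points = [(0.0, 0.0), (0.5, -0.5), (2.0, 0.0), (-2.0, 0.0), (0.0, 2.0), (0.0, -2.0)]
labels = [-1, -1, 1, 1, 1, 1]
print([linear_rule(phi(p)) for p in points] == labels)  # True
```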


Ensemble Methods

• So far, we have used ONE classifier for classification

• Can we use a collection (ensemble) of weak classifiers to produce a very accurate classifier? Or, can we make dumb learners smart?

– Generate 100 different decision trees from the same or different training sets and have them vote on the best classification for a new example

• Key motivation

– Reduce the error rate. The hope is that the ensemble is much less likely than a single classifier to misclassify an example

Ensemble Methods

• Construct a set of classifiers from the training data

• Predict the class label of previously unseen records by aggregating predictions made by multiple classifiers

Value of Ensembles

• “No Free Lunch” Theorem

– No single algorithm wins all the time!

• When combining multiple independent and diverse classifiers, each of which is more accurate than random guessing, random errors cancel each other out and correct decisions are reinforced

• Representative methods

– Bagging: resampling training data

– Boosting: reweighting training data

– Random forest: random subset of features



Ensemble Methods

• Suppose there are 25 base classifiers

– Each classifier has error rate ε = 0.35

– Assume the classifiers are independent

– Probability that the ensemble classifier (majority vote) makes a wrong prediction:

$\sum_{i=13}^{25} \binom{25}{i} \varepsilon^{i} (1 - \varepsilon)^{25 - i} \approx 0.06$
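The 0.06 figure can be reproduced by summing the binomial tail. The short sketch below assumes independent classifiers with a common error rate and an odd number of voters. Note that `math.comb` needs Python 3.8 or later.

```python
from math import comb


def ensemble_error(n_classifiers, eps):
    """Probability that a majority of n independent base classifiers (n odd) are wrong."""
    k_min = n_classifiers // 2 + 1  # 13 when n_classifiers = 25
    return sum(
        comb(n_classifiers, i) * eps ** i * (1 - eps) ** (n_classifiers - i)
        for i in range(k_min, n_classifiers + 1)
    )


print(round(ensemble_error(25, 0.35), 2))  # 0.06, versus 0.35 for a single classifier
print(ensemble_error(25, 0.60) > 0.60)     # True: voting amplifies errors when eps > 0.5
```

The second line shows why each base classifier should be more accurate than random guessing.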

• How to generate an ensemble of classifiers?

– Bagging

– Boosting

Bagging

• Create ensemble by “bootstrap aggregation”

– Repeatedly resample the training data at random, with replacement

Original Data       1   2   3   4   5   6   7   8   9   10
Bagging (Round 1)   7   8   10  8   2   5   10  10  5   9
Bagging (Round 2)   1   4   9   1   2   3   2   7   3   2
Bagging (Round 3)   1   8   5   10  5   5   9   6   3   7

– Each sample has probability $(1 - 1/n)^n$ of not being selected in a bootstrap sample of size n

• For large n this is about $1/e \approx 36.8\%$: these samples are not selected for training and thereby end up in the test set

• The remaining 63.2% end up in the training set

– Build classifier on each bootstrap sample

– Combine output by voting (e.g., majority voting)
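Below is a compact bagging sketch that follows the steps above: draw bootstrap samples, train one base classifier per sample, and combine them by majority voting. The base learner is a hypothetical one-dimensional threshold rule (a decision stump) standing in for a decision tree. The data, seed and number of rounds are assumptions.

```python
import random
from collections import Counter


def bootstrap_indices(n, rng):
    """Draw n record indices uniformly at random with replacement."""
    return [rng.randrange(n) for _ in range(n)]


def train_stump(xs, ys):
    """Hypothetical base learner: a 1-D threshold rule chosen to minimize training errors."""
    best = None
    for t in sorted(set(xs)):
        for direction in (1, -1):
            errors = sum((1 if direction * (x - t) > 0 else 0) != y for x, y in zip(xs, ys))
            if best is None or errors < best[0]:
                best = (errors, t, direction)
    _, t, direction = best
    return lambda x: 1 if direction * (x - t) > 0 else 0


def bagging(xs, ys, rounds, seed=0):
    """Build one base classifier on each bootstrap sample."""
    rng = random.Random(seed)
    models = []
    for _ in range(rounds):
        idx = bootstrap_indices(len(xs), rng)
        models.append(train_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return models


def majority_vote(models, x):
    """Combine the base classifiers' outputs by majority voting."""
    return Counter(m(x) for m in models).most_common(1)[0][0]


# Hypothetical 1-D data: records 1..5 are class 0, records 6..10 are class 1.
xs, ys = list(range(1, 11)), [0] * 5 + [1] * 5
models = bagging(xs, ys, rounds=11)
print(majority_vote(models, 1), majority_vote(models, 10))

# Fraction of records left out of one bootstrap sample, for n = 1000.
n = 1000
print(round((1 - 1 / n) ** n, 3))  # 0.368, close to 1/e
```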


Boosting

• Key insights

– Instead of sampling (as in bagging), reweight the examples!

– Examples are given weights. At each iteration, a new classifier is learned and the examples are reweighted to focus the system on examples that the most recently learned classifier got wrong

– Final classification is based on a weighted vote of the weak classifiers

• Construction

– Start with uniform weighting

– During each step of learning

• Increase the weights of the examples that are misclassified by the weak learner

• Decrease the weights of the examples that are correctly classified by the weak learner

Example

Original Data        1   2   3   4   5   6   7   8   9   10
Boosting (Round 1)   7   3   2   8   7   9   4   10  6   3
Boosting (Round 2)   5   4   9   4   2   5   1   7   4   2
Boosting (Round 3)   4   4   8   10  4   5   4   6   3   4

Example 4 is hard to classify: its weight is increased, so it is more likely to be chosen again in subsequent rounds
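Weighted resampling is what makes a heavily weighted example reappear. Below is a minimal sketch using Python's `random.choices`. The weights are hypothetical: example 4 carries six times the weight of each other example.

```python
import random
from collections import Counter


def weighted_resample(weights, k, seed=0):
    """Draw k example ids (numbered from 1) with probability proportional to their weights."""
    rng = random.Random(seed)
    ids = list(range(1, len(weights) + 1))
    return rng.choices(ids, weights=weights, k=k)


# Hypothetical weights: example 4 has been misclassified and up-weighted.
weights = [1.0] * 10
weights[3] = 6.0  # example 4 (ids start at 1, list positions at 0)
counts = Counter(weighted_resample(weights, k=1000))
print(counts.most_common(1))  # example 4 should dominate the sample
```

With these weights, example 4 is drawn with probability 6/15 = 0.4 on each draw. Each other example is drawn with probability 1/15.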

AdaBoost

• Base classifiers: C1, C2, …, CT

• Error rate:

$\varepsilon_i = \frac{1}{N} \sum_{j=1}^{N} w_j \, \delta\big(C_i(x_j) \ne y_j\big)$

• Importance of a classifier:

$\alpha_i = \frac{1}{2} \ln\left(\frac{1 - \varepsilon_i}{\varepsilon_i}\right)$

AdaBoost

• Weight update:

$w_i^{(j+1)} = \frac{w_i^{(j)}}{Z_j} \times \begin{cases} \exp(-\alpha_j) & \text{if } C_j(x_i) = y_i \\ \exp(\alpha_j) & \text{if } C_j(x_i) \ne y_i \end{cases}$

where $Z_j$ is the normalization factor

– If any intermediate round produces an error rate higher than 50%, the weights are reverted back to 1/n and the resampling procedure is repeated

• Classification:

$C^*(x) = \arg\max_{y} \sum_{j=1}^{T} \alpha_j \, \delta\big(C_j(x) = y\big)$

Example

Initial weights for each data point: 0.1

Original Data (points used for training): + + + - - - - - + +

Boosting Round 1: B1 predicts + + + - - - - - - -

– The last two + points (misclassified) get weight 0.4623; each of the other eight points gets 0.0094

– α = 1.9459

Example

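Boosting Round 2: B2 predicts - - - - - - - - + +

– The first three + points (misclassified) get weight 0.3037; the five - points get 0.0009; the last two + points get 0.0422

– α = 2.9323

Boosting Round 3: B3 predicts + for all ten points

– The first three + points get weight 0.0276; the five - points (misclassified) get 0.1819; the last two + points get 0.0038

– α = 3.8744

Overall (weighted vote of B1, B2 and B3): + + + - - - - - + +, which matches every training label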
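The AdaBoost formulas above can be traced on the worked example. This sketch applies them exactly as written, including the 1/N factor in the error rate. Many other presentations instead use Σ w_j δ(·) with weights summing to 1. The base-classifier predictions are read off the example figures. Two simplifications apply: the reset rule for error rates above 50% is omitted, and α is undefined when ε = 0.

```python
import math


def adaboost_weights(y, round_predictions):
    """Apply the slide's AdaBoost formulas to fixed base-classifier predictions.

    y: true labels (+1 / -1); round_predictions[j][i] = C_j(x_i).
    Returns the importances alpha_j and the weight vector after each round.
    """
    n = len(y)
    w = [1.0 / n] * n  # uniform initial weights (0.1 each for 10 points)
    alphas, history = [], []
    for preds in round_predictions:
        wrong = [p != t for p, t in zip(preds, y)]
        # Error rate as written above: (1/N) * sum_j w_j * delta(C(x_j) != y_j).
        eps = sum(wi for wi, m in zip(w, wrong) if m) / n
        alpha = 0.5 * math.log((1 - eps) / eps)  # importance of this classifier
        # Multiply by exp(alpha) if misclassified, exp(-alpha) otherwise; normalize by Z_j.
        raw = [wi * math.exp(alpha if m else -alpha) for wi, m in zip(w, wrong)]
        z = sum(raw)
        w = [r / z for r in raw]
        alphas.append(alpha)
        history.append(w)
    return alphas, history


def ensemble_predict(alphas, round_predictions, labels=(1, -1)):
    """C*(x_i) = argmax_y sum_j alpha_j * delta(C_j(x_i) == y)."""
    result = []
    for i in range(len(round_predictions[0])):
        votes = {lab: 0.0 for lab in labels}
        for alpha, preds in zip(alphas, round_predictions):
            votes[preds[i]] += alpha
        result.append(max(labels, key=lambda lab: votes[lab]))
    return result


# The worked example: labels + + + - - - - - + + and the predictions of B1, B2, B3.
y = [1] * 3 + [-1] * 5 + [1] * 2
B1 = [1] * 3 + [-1] * 7
B2 = [-1] * 8 + [1] * 2
B3 = [1] * 10
alphas, history = adaboost_weights(y, [B1, B2, B3])
print(alphas)  # should be close to the slides' 1.9459, 2.9323, 3.8744
print(ensemble_predict(alphas, [B1, B2, B3]) == y)  # True: all ten points classified correctly
```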