Final Exam Practice Problems.docx

advertisement

Final – Practice Exam

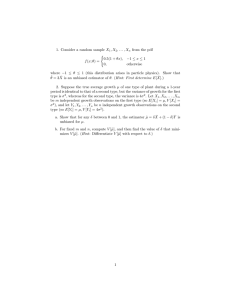

Question #1: If 𝑋1 and 𝑋2 denotes a random sample of size 𝑛 = 2 from the population with

1

density function 𝑓𝑋 (𝑥) = 𝑥 2 1{𝑥 > 1}, then a) find the joint density of 𝑈 = 𝑋1 𝑋2 and 𝑉 = 𝑋2,

and b) find the marginal probability density function of 𝑈.

a) By the independence of the two random variables 𝑋1 and 𝑋2, we have that their joint

density is given by 𝑓𝑋1 𝑋2 (𝑥1 , 𝑥2 ) = 𝑓𝑋1 (𝑥1 )𝑓𝑋2 (𝑥2 ) = 𝑥1−2 𝑥2−2 = (𝑥1 𝑥2 )−2 whenever

𝑢

𝑢

𝑥1 > 0 and 𝑥2 > 0. Then 𝑢 = 𝑥1 𝑥2 and 𝑣 = 𝑥2 implies that 𝑥2 = 𝑣 and 𝑥1 = 𝑥 = 𝑣 , so

2

𝜕𝑥1

we can compute the Jacobian as 𝐽 =

𝜕𝑢

𝑑𝑒𝑡 [𝜕𝑥

2

𝜕𝑢

𝜕𝑥1

𝜕𝑣

]

𝜕𝑥2

𝜕𝑣

1

𝑢

1

− 𝑣2

] = 𝑣. This implies

1

= 𝑑𝑒𝑡 [ 𝑣

0

𝑢

−2 1

𝑢

that the joint density of 𝑈 and 𝑉 is 𝑓𝑈𝑉 (𝑢, 𝑣) = 𝑓𝑋1 𝑋2 (𝑣 , 𝑣) |𝐽| = (𝑣 𝑣)

𝑣

1

= 𝑢2 𝑣

𝑢

whenever 𝑣 > 1 and 𝑣 > 1 → 𝑢 > 𝑣. This also shows 𝑈 and 𝑉 are independent.

𝑢

𝑢 1

b) We have 𝑓𝑈 (𝑢) = ∫1 𝑓𝑈𝑉 (𝑢, 𝑣) 𝑑𝑣 = ∫1

𝑢2 𝑣

1

ln(𝑢)

𝑑𝑣 = 𝑢2 [ln(𝑣)]1𝑢 =

𝑢2

if 𝑢 > 1.

Question #2: Find the Maximum Likelihood Estimators (MLE) of 𝜃 and 𝛼 based on a random

𝛼

sample 𝑋1 , … , 𝑋𝑛 from 𝑓𝑋 (𝑥; 𝜃, 𝛼) = 𝜃𝛼 𝑥 𝛼−1 1{0 ≤ 𝑥 ≤ 𝜃} where 𝜃 > 0 and 𝛼 > 0.

We first note that the likelihood function is given by 𝐿(𝜃, 𝛼) = ∏𝑛𝑖=1 𝑓𝑋 (𝑥𝑖 ; 𝜃, 𝛼) =

∏𝑛𝑖=1 𝛼𝜃 −𝛼 𝑥𝑖𝛼−1 1{0 ≤ 𝑥𝑖 ≤ 𝜃} = 𝛼 𝑛 𝜃 −𝑛𝛼 (∏𝑛𝑖=1 𝑥𝑖 )𝛼−1 1{0 ≤ 𝑥1:𝑛 }1{𝑥𝑛:𝑛 ≤ 𝜃}. Then by

inspection, we see that 𝜃̂𝑀𝐿𝐸 = 𝑋𝑛:𝑛 . To find the MLE of 𝛼, we compute ln[𝐿(𝜃, 𝛼)] =

𝑛 ln(𝛼) − 𝑛𝛼 ln(𝜃) + (𝛼 − 1) ∑𝑛𝑖=1 ln(𝑥𝑖 ) so that the derivative with respect to 𝛼 is

𝜕

𝜕𝛼

𝑛

𝑛

ln[𝐿(𝜃, 𝛼)] = 𝛼 − 𝑛 ln(𝜃) + ∑𝑛𝑖=1 ln(𝑥𝑖 ) = 0 → 𝛼 = 𝑛 ln(𝜃)−∑𝑛

.

𝑖=1 ln(𝑥𝑖 )

𝑛

𝑛 ln(𝑋 ).

)−∑

𝑛:𝑛

𝑖

𝑖=1

second derivative is zero, we have found that 𝛼̂𝑀𝐿𝐸 = 𝑛 ln(𝑋

Since

the

Question #3: If 𝑋1 , … , 𝑋𝑛 is a random sample from 𝑁(𝜇, 𝜎 2 ), then a) find a UMVUE for 𝜇 when

𝜎 2 is known, and b) find a UMVUE for 𝜎 2 when 𝜇 is known.

a) We have previously shown that the Normal distribution is a member of the Regular

Exponential Class (REC), where 𝑆1 = ∑𝑛𝑖=1 𝑋𝑖 and 𝑆2 = ∑𝑛𝑖=1 𝑋𝑖2 are jointly complete

sufficient statistics for the unknown parameters 𝜇 and 𝜎 2 . Since we have that 𝐸(𝑆1 ) =

1

1

𝐸(∑𝑛𝑖=1 𝑋𝑖 ) = ∑𝑛𝑖=1 𝐸(𝑋𝑖 ) = ∑𝑛𝑖=1 𝜇 = 𝑛𝜇, the statistic 𝑇 = 𝑛 𝑆1 = 𝑛 ∑𝑛𝑖=1 𝑋𝑖 = 𝑋̅ will be

unbiased for the unknown parameter 𝜇. The Lehmann-Scheffe Theorem then

guarantees that 𝑇 = 𝑋̅ is a UMVUE for 𝜇 when 𝜎 2 is known.

b) Since 𝐸(𝑆2 ) = 𝐸(∑𝑛𝑖=1 𝑋𝑖2 ) = ∑𝑛𝑖=1 𝐸(𝑋𝑖2 ) = ∑𝑛𝑖=1 𝑉𝑎𝑟(𝑋𝑖 ) + 𝐸(𝑋𝑖 )2 = ∑𝑛𝑖=1 𝜎 2 + 𝜇 2 =

1

𝑛(𝜎 2 + 𝜇 2 ), the statistic 𝑇 = 𝑛 𝑆2 − 𝜇 2 will be unbiased for the unknown parameter

𝜎 2 when 𝜇 is known. The Lehmann-Scheffe Theorem states that 𝑇 is a UMVUE for 𝜎 2 .

𝛼

Question #4: Consider a random sample 𝑋1 , … , 𝑋𝑛 from 𝑓𝑋 (𝑥; 𝜃, 𝛼) = 𝜃𝛼 𝑥 𝛼−1 1{0 ≤ 𝑥 ≤ 𝜃},

where 𝜃 > 0 is unknown but 𝛼 > 0 is known. Find the constants 1 < 𝑎 < 𝑏 depending on the

values of 𝑛 and 𝛼 such that (𝑎𝑋𝑛:𝑛 , 𝑏𝑋𝑛:𝑛 ) is a 90% confidence interval for 𝜃.

𝑥

We first compute the CDF of the population 𝐹𝑋 (𝑥; 𝜃, 𝛼) = ∫0 𝑓𝑋 (𝑡; 𝜃, 𝛼) 𝑑𝑡 =

𝛼

𝑥

𝛼

𝑥

1

𝑥 𝛼

∫ 𝑥 𝛼−1 𝑑𝑡 = 𝜃𝛼 [𝛼 𝑥 𝛼 ] = (𝜃) whenever 0 ≤ 𝑥 ≤ 𝜃. Then we use this to compute

𝜃𝛼 0

0

𝑥 𝑛𝛼

the CDF of the largest order statistic 𝐹𝑋𝑛:𝑛 (𝑥) = 𝑃(𝑋𝑛:𝑛 ≤ 𝑥) = 𝑃(𝑋𝑖 ≤ 𝑥)𝑛 = (𝜃) .

In order for (𝑎𝑋𝑛:𝑛 , 𝑏𝑋𝑛:𝑛 ) to be a 90% confidence interval for 𝜃, it must be the case

that 𝑃(𝑎𝑋𝑛:𝑛 ≥ 𝜃) = 0.05 and 𝑃(𝑏𝑋𝑛:𝑛 ≤ 𝜃) = 0.05. We solve each of these two

𝜃

equations for the unknown constants, so 𝑃(𝑎𝑋𝑛:𝑛 ≥ 𝜃) = 0.05 → 𝑃 (𝑋𝑛:𝑛 ≥ 𝑎) =

𝜃

𝜃

𝜃/𝑎 𝑛𝛼

0.05 → 1 − 𝑃 (𝑋𝑛:𝑛 ≤ 𝑎) = 0.95 → 1 − 𝐹𝑋𝑛:𝑛 (𝑎) = 0.95 → 1 − (

1

𝑎𝑛𝛼

1

1⁄𝑛𝛼

= 0.95 → 𝑎 = (0.95)

20 1⁄𝑛𝛼

= (19)

𝜃

)

= 0.95 → 1 −

. Similarly, we find that 𝑏 = (20)1⁄𝑛𝛼 .

Question #5: If 𝑋1 and 𝑋2 are independent and identically distributed from 𝐸𝑋𝑃(𝜃) such

1

𝑥

𝑋

that their density is 𝑓𝑋 (𝑥) = 𝜃 𝑒 −𝜃 1{𝑥 > 0}, then find the joint density of 𝑈 = 𝑋1 and 𝑉 = 𝑋2.

1

We

have

𝑢

𝑢𝑣

1

1

𝑢 −𝑢(1−𝑣)

0

]| = [𝜃 𝑒 −𝜃 ] [𝜃 𝑒 − 𝜃 ] [𝑢] = 𝜃2 𝑒 𝜃

𝑢

1

𝑓𝑈𝑉 (𝑢, 𝑣) = 𝑓𝑋1 𝑋2 (𝑢, 𝑢𝑣) |𝑑𝑒𝑡 [

𝑣

whenever we have that 𝑢 > 0 and 𝑢𝑣 > 0 → 𝑣 > 0.

Question #6: Let 𝑋1 , … , 𝑋𝑛 be a random sample from 𝑊𝐸𝐼(𝜃, 𝛽) with known 𝛽 such that

𝛽

their common density is 𝑓𝑋 (𝑥; 𝜃, 𝛽) = 𝜃𝛽

𝑥 𝛽

𝑥 𝛽−1 𝑒 −(𝜃)

𝑥 𝛽

𝛽−1 −( 𝑖 )

𝛽

We have 𝐿(𝜃, 𝛽) = ∏𝑛𝑖=1 𝜃𝛽 𝑥𝑖

𝑒

1{𝑥 > 0}. Find the MLE of 𝜃.

𝑛

= (𝛽𝜃 −𝛽 ) (∏𝑛𝑖=1 𝑥𝑖 )𝛽−1 𝑒

𝜃

1

1

𝛽

− 𝛽 ∑𝑛

𝑖=1 𝑥𝑖

𝜃

so that

𝛽

ln[𝐿(𝜃, 𝛽)] = 𝑛 ln(𝛽) − 𝑛𝛽 ln(𝜃) + (𝛽 − 1) ∑𝑛𝑖=1 ln(𝑥𝑖 ) − 𝜃𝛽 ∑𝑛𝑖=1 𝑥𝑖 . Then we can

calculate that the

𝑛=

1

∑𝑛 𝑥 𝛽

𝜃𝛽 𝑖=1 𝑖

𝜕

ln[𝐿(𝜃, 𝛽)] = −

𝜕𝜃

𝛽

𝛽

→𝜃 =

∑𝑛

𝑖=1 𝑥𝑖

𝑛

𝑛𝛽

𝜃

𝛽

𝛽

+ 𝜃𝛽+1 ∑𝑛𝑖=1 𝑥𝑖 = 0 →

𝑛𝛽

𝜃

𝛽

𝛽

= 𝜃𝛽+1 ∑𝑛𝑖=1 𝑥𝑖 →

1

1

𝛽 𝛽

. Therefore, we have found 𝜃̂𝑀𝐿𝐸 = (𝑛 ∑𝑛𝑖=1 𝑋𝑖 ) .

Question #7: Use the Cramer-Rao Lower Bound to show that 𝑋̅ is a UMVUE for 𝜃 based on a

1

𝑥

random sample of size 𝑛 from an 𝐸𝑋𝑃(𝜃) distribution where 𝑓𝑋 (𝑥; 𝜃) = 𝜃 𝑒 −𝜃 1{𝑥 > 0}.

We first find the Cramer-Rao Lower Bound; since 𝜏(𝜃) = 𝜃, we have [𝜏 ′ (𝜃)]2 = 1.

𝑋

Then we have ln 𝑓(𝑋; 𝜃) = − ln(𝜃) − 𝜃 so that

𝜕2

1

𝜕2

2𝑋

𝜕

𝜕𝜃

1

𝑋

ln 𝑓(𝑋; 𝜃) = − 𝜃 + 𝜃2 and

1

2

2𝜃

1

1

ln 𝑓(𝑋; 𝜃) = 𝜃2 − 𝜃3 . Finally, −𝐸 [𝜕𝜃2 ln 𝑓(𝑋; 𝜃)] = − 𝜃2 + 𝜃3 𝐸(𝑋) = 𝜃3 − 𝜃2 = 𝜃2

𝜕𝜃2

implies that 𝐶𝑅𝐿𝐵 =

1

𝜃2

𝑛

. To verify that 𝑋̅ is a UMVUE for 𝜃, we first show that 𝐸(𝑋̅) =

1

1

𝐸 (𝑛 ∑𝑛𝑖=1 𝑋𝑖 ) = 𝑛 ∑𝑛𝑖=1 𝐸(𝑋𝑖 ) = 𝑛 𝑛𝜃 = 𝜃. Then we check that the variance achieves

1

the Cramer-Rao Lower Bound from above, so 𝑉𝑎𝑟(𝑋̅) = 𝑉𝑎𝑟 (𝑛 ∑𝑛𝑖=1 𝑋𝑖 ) =

1

𝑛

∑𝑛𝑖=1 𝑉𝑎𝑟(𝑋𝑖 ) =

2

1

𝑛𝜃 2 =

𝑛2

𝜃2

𝑛

= 𝐶𝑅𝐿𝐵. Thus, 𝑋̅ is a UMVUE for 𝜃.

Question #8: Use the Lehmann-Scheffe Theorem to show that 𝑋̅ is a UMVUE for 𝜃 based on

𝑥

1

a random sample of size 𝑛 from an 𝐸𝑋𝑃(𝜃) distribution where 𝑓𝑋 (𝑥; 𝜃) = 𝜃 𝑒 −𝜃 1{𝑥 > 0}.

We know that 𝐸𝑋𝑃(𝜃) is a member of the Regular Exponential Class (REC) with

𝑡1 (𝑥) = 𝑥, so the statistic 𝑆 = ∑𝑛𝑖=1 𝑡1 (𝑋𝑖 ) = ∑𝑛𝑖=1 𝑋𝑖 is complete sufficient for the

parameter 𝜃. Then 𝐸(𝑆) = 𝐸(∑𝑛𝑖=1 𝑋𝑖 ) = ∑𝑛𝑖=1 𝐸(𝑋𝑖 ) = 𝑛𝜃 implies that the estimator

1

1

𝑇 = 𝑛 𝑆 = 𝑛 ∑𝑛𝑖=1 𝑋𝑖 = 𝑋̅ is unbiased for 𝜃, so the Lehmann-Scheffe Theorem

guarantees that it will be the Uniform Minimum Variance Unbiased Estimator of 𝜃.

Question #9: Find a 100(1 − 𝛼)% confidence interval for 𝜃 based on a random sample of

𝑥

1

size 𝑛 from an 𝐸𝑋𝑃(𝜃) distribution where 𝑓𝑋 (𝑥) = 𝜃 𝑒 −𝜃 1{𝑥 > 0}. Use the facts that

𝐸𝑋𝑃(𝜃) ≡ 𝐺𝐴𝑀𝑀𝐴(𝜃, 1), that 𝜃 is a scale parameter, and that 𝐺𝐴𝑀𝑀𝐴(2, 𝑛) ≡ 𝜒 2 (2𝑛).

Since 𝜃 is a scale parameter, we know that 𝑄 =

̂ 𝑀𝐿𝐸

𝜃

𝜃

𝑋̅

=𝜃=

∑𝑛

𝑖=1 𝑋𝑖

𝑛𝜃

will be a pivotal

quantity. Note that we used the fact that 𝜃̂𝑀𝐿𝐸 = 𝑋̅, which was derived in a previous

exercise. We begin by noting that since each 𝑋𝑖 ~𝐸𝑋𝑃(𝜃) ≡ 𝐺𝐴𝑀𝑀𝐴(𝜃, 1), we can

conclude that 𝐴 = ∑𝑛𝑖=1 𝑋𝑖 ~𝐺𝐴𝑀𝑀𝐴(𝜃, 𝑛). In order to obtain a chi-square distributed

2

pivotal quantity, we use the modified pivot 𝑅 = 2𝑛𝑄 = 𝜃 ∑𝑛𝑖=1 𝑋𝑖 . To verify its

2

distribution, we use the CDF technique so 𝐹𝑅 (𝑟) = 𝑃(𝑅 ≤ 𝑟) = 𝑃 (𝜃 ∑𝑛𝑖=1 𝑋𝑖 ≤ 𝑟) =

𝑃 (∑𝑛𝑖=1 𝑋𝑖 ≤

1

𝜃𝑟

𝜃𝑟 𝑛−1

( )

𝜃𝑛 Γ(𝑛) 2

2

(

𝑒−

so 𝑃 [𝜒𝛼2 (2𝑛) <

2

𝜃𝑟

implies

) = 𝐹𝐴 ( 2 )

𝜃𝑟

)

2

𝜃

2𝑛𝑋̅

𝜃

𝜃

2

1

𝑑

that

𝜃𝑟

𝑟

2

= 2𝑛Γ(𝑛) 𝑟 𝑛−1 𝑒 −2 . This proves that 𝑅 = 𝜃 ∑𝑛𝑖=1 𝑋𝑖 =

2

< 𝜒1−

𝛼 (2𝑛)] = 1 − 𝛼 → 𝑃 [ 2

𝜒

2

𝜃𝑟 𝜃

𝑓𝑅 (𝑟) = 𝑑𝑟 𝐹𝐴 ( 2 ) = 𝑓𝐴 ( 2 ) 2 =

2𝑛𝑋̅

𝛼 (2𝑛)

1−

2

2𝑛𝑋̅

2𝑛𝑋̅

𝜃

~𝜒 2 (2𝑛)

< 𝜃 < 𝜒2 (2𝑛)] = 1 − 𝛼 is the

𝛼

2

desired 100(1 − 𝛼)% confidence interval for the unknown parameter 𝜃.

Question #10: Let 𝑋1 , … , 𝑋𝑛 be independent and identically distributed from the population

1

with CDF given by 𝐹𝑋 (𝑥) = 1+𝑒 −𝑥 . Find the limiting distribution of 𝑌𝑛 = 𝑋1:𝑛 + ln(𝑛).

We have that 𝐹𝑌𝑛 (𝑦) = 𝑃(𝑌𝑛 ≤ 𝑦) = 𝑃(𝑋1:𝑛 + ln(𝑛) ≤ 𝑦) = 𝑃(𝑋1:𝑛 ≤ 𝑦 − ln(𝑛)) =

𝑛

1 − 𝑃(𝑋1:𝑛 > 𝑦 − ln(𝑛)) = 1 − 𝑃(𝑋𝑖 > 𝑦 − ln(𝑛))𝑛 = 1 − (1 − 𝐹𝑋 (𝑦 − ln(𝑛))) =

𝑛

1

𝑛

𝑒 ln(𝑛)−𝑦

𝑛

𝑛𝑒 −𝑦

1

𝑛

1 − (1 − 1+𝑒 −𝑦+ln(𝑛) ) = 1 − (1+𝑒 ln(𝑛)−𝑦 ) = 1 − (1+𝑛𝑒 −𝑦 ) = 1 − (1 + 𝑛𝑒 −𝑦 ) = 1 −

1

𝑛

(1 + 𝑛𝑒 −𝑦 ) = 1 − (1 +

𝑒𝑦 𝑛

) . Then lim 𝐹𝑌𝑛 (𝑦) = 1 − lim (1 +

𝑛

𝑛→∞

𝑛→∞

𝑐 𝑛𝑏

limiting distribution since lim (1 + 𝑛)

𝑛→∞

𝑒𝑦 𝑛

𝑦

) = 1 − 𝑒 𝑒 is the

𝑛

= 𝑒 𝑐𝑏 for all real numbers 𝑐 and 𝑏.

Question #11: Let 𝑋1 , … , 𝑋𝑛 be independent and identically distributed from the population

1

with a PDF given by 𝑓𝑋 (𝑥) = 2 1{−1 < 𝑥 < 1}. Approximate 𝑃(∑100

𝑖=1 𝑋𝑖 ≤ 0) using Φ(𝑧).

1

From the given density, we know that 𝑋~𝑈𝑁𝐼𝐹(−1,1) so 𝐸(𝑋) = 0 and 𝑉𝑎𝑟(𝑋) = 3.

100

100

Then if we define 𝑌 = ∑100

𝑖=1 𝑋𝑖 , we have that 𝐸(𝑌) = 𝐸(∑𝑖=1 𝑋𝑖 ) = ∑𝑖=1 𝐸(𝑋𝑖 ) = 0 and

100

𝑉𝑎𝑟(𝑌) = 𝑉𝑎𝑟(∑100

𝑖=1 𝑋𝑖 ) = ∑𝑖=1 𝑉𝑎𝑟(𝑋𝑖 ) = 100/3. These facts allow us to compute

𝑃(∑100

𝑖=1 𝑋𝑖 ≤ 0) = 𝑃(𝑌 ≤ 0) = 𝑃 (

𝑌−0

√100/3

≤

0−0

) ≈ 𝑃(𝑍 ≤ 0) = Φ(0) = 1/2.

√100/3

1

Question #12: Suppose that 𝑋 is a random variable with density 𝑓𝑋 (𝑥) = 2 𝑒 −|𝑥| for 𝑥 ∈ ℝ.

Compute the Moment Generating Function (MGF) of 𝑋 and use it to find 𝐸(𝑋 2 ).

∞

∞

1

By definition, we have 𝑀𝑋 (𝑡) = 𝐸(𝑒 𝑡𝑋 ) = ∫−∞ 𝑒 𝑡𝑥 𝑓𝑋 (𝑥) 𝑑𝑥 = ∫−∞ 𝑒 𝑡𝑥 𝑒 −|𝑥| 𝑑𝑥 =

2

∞

∫ 𝑒 𝑡𝑥 𝑒 −𝑥

2 0

1

1

0

1

∞

1

0

𝑑𝑥 + 2 ∫−∞ 𝑒 𝑡𝑥 𝑒 𝑥 𝑑𝑥 = 2 ∫0 𝑒 𝑥(𝑡−1) 𝑑𝑥 + 2 ∫−∞ 𝑒 𝑥(𝑡+1) 𝑑𝑥. After integrating

1

and collecting like terms, we obtain 𝑀𝑋 (𝑡) = 2 [(𝑡 + 1)−1 − (𝑡 − 1)−1 ]. We then

compute

𝑑

1

𝑀 (𝑡) = 2 [−(𝑡 + 1)−2 + (𝑡 − 1)−2 ] and

𝑑𝑡 𝑋

𝑑2

1

2

2

1

𝑑2

𝑑𝑡 2

1

2

2

𝑀𝑋 (𝑡) = 2 [(𝑡+1)3 − (𝑡−1)3 ] so

that 𝐸(𝑋 2 ) = 𝑑𝑡 2 𝑀𝑋 (0) = 2 [(0+1)3 − (0−1)3 ] = 2 (2 + 2) = 2.

1

Question #13: Let 𝑋 be a random variable with density 𝑓𝑋 (𝑥) = 4 1{−2 < 𝑥 < 2}. Compute

the probability density function of the transformed random variable 𝑌 = 𝑋 3 .

1

1

We have 𝐹𝑌 (𝑦) = 𝑃(𝑌 ≤ 𝑦) = 𝑃(𝑋 3 ≤ 𝑦) = 𝑃 (𝑋 ≤ 𝑦 3 ) = 𝐹𝑋 (𝑦 3 ) so that 𝑓𝑌 (𝑦) =

𝑑

1

1

1

2

11

2

𝐹 (𝑦 3 ) = 𝑓𝑋 (𝑦 3 ) 3 𝑦 −3 = 4 3 𝑦 −3 =

𝑑𝑦 𝑋

1

2

12𝑦 3

1

if −2 < 𝑦 3 < 2 → −8 < 𝑦 < 8.

Question #14: Let 𝑋1 , … , 𝑋𝑛 be a random sample from the density 𝑓𝑋 (𝑥) = 𝜃𝑥 𝜃−1 whenever

0 < 𝑥 < 1 and zero otherwise. Find a complete sufficient statistic for the parameter 𝜃.

We begin by verifying that the density is a member of the Regular Exponential Class

(REC) by showing that 𝑓𝑋 (𝑥) = exp{ln(𝜃𝑥 𝜃−1 )} = exp{ln(𝜃) + (𝜃 − 1) ln(𝑥)} =

𝜃 exp{(𝜃 − 1) ln(𝑥)}, where 𝑞1 (𝜃) = 𝜃 − 1 and 𝑡1 (𝑥) = ln(𝑥). Then we know that the

statistic 𝑆 = ∑𝑛𝑖=1 𝑡1 (𝑋𝑖 ) = ∑𝑛𝑖=1 ln(𝑋𝑖 ) is complete sufficient for the parameter 𝜃.

Question #15: Let 𝑋1 , … , 𝑋𝑛 be a random sample from the density 𝑓𝑋 (𝑥) = 𝜃𝑥 𝜃−1 whenever

0 < 𝑥 < 1 and zero otherwise. Find the Maximum Likelihood Estimator (MLE) for 𝜃 > 0.

We have 𝐿(𝜃) = ∏𝑛𝑖=1 𝑓𝑋 (𝑥𝑖 ) = ∏𝑛𝑖=1 𝜃𝑥𝑖𝜃−1 = 𝜃 𝑛 (∏𝑛𝑖=1 𝑥𝑖 )𝜃−1 so that ln[𝐿(𝜃)] =

𝑛 ln(𝜃) + (𝜃 − 1) ∑𝑛𝑖=1 ln(𝑥𝑖 ) and

𝜕

𝑛

ln[𝐿(𝜃)] = 𝜃 + ∑𝑛𝑖=1 ln(𝑥𝑖 ) = 0 → 𝜃 = ∑𝑛

𝜕𝜃

−𝑛

.

𝑖=1 ln(𝑥𝑖 )

Thus, we have found that the Maximum Likelihood Estimator is 𝜃̂𝑀𝐿𝐸 = ∑𝑛

−𝑛

.

𝑖=1 ln(𝑋𝑖 )

Question #16: Let 𝑋1 , … , 𝑋𝑛 be a random sample from the density 𝑓𝑋 (𝑥) = 𝜃𝑥 𝜃−1 whenever

0 < 𝑥 < 1 and zero otherwise. Find the Method of Moments Estimator (MME) for 𝜃 > 0.

1

1

1

𝜃

1

𝜃

We have 𝐸(𝑋) = ∫0 𝑥𝑓𝑋 (𝑥) 𝑑𝑥 = ∫0 𝑥𝜃𝑥 𝜃−1 𝑑𝑥 = 𝜃 ∫0 𝑥 𝜃 𝑑𝑥 = 𝜃+1 [𝑥 𝜃+1 ]0 = 𝜃+1, so

𝜃

𝑋̅

that 𝜇1′ = 𝑀1′ → 𝜃+1 = 𝑋̅ → 𝜃(1 − 𝑋̅) = 𝑋̅ so the desired estimator is 𝜃̂𝑀𝑀𝐸 = 1−𝑋̅.