FINAL REPORT: Analysis of Survey of Brookline Educators Teaching and Student Learning

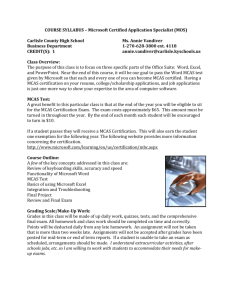
