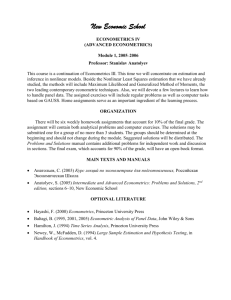

Instrumental variables and GMM: Estimation and Testing

Boston College
