Investigations into the Robustness of Computer-Synthesized Congestion Control

by

Pratiksha Ranjit Thaker

S.B., Massachusetts Institute of Technology (2014)

Submitted to the Department of Electrical Engineering and Computer Science

in partial fulfillment of the requirements for the degree of

Master of Engineering in Electrical Engineering and Computer Science

at the

MASSACHUSETTS INSTITUTE OF TECHNOLOGY

June 2015

© Massachusetts Institute of Technology 2015. All rights reserved.

Author

Department of Electrical Engineering and Computer Science

May 22, 2015

Certified by

Hari Balakrishnan

Fujitsu Professor

Thesis Supervisor

Accepted by

Prof. Albert R. Meyer

Chairman, Masters of Engineering Thesis Committee


Investigations into the Robustness of Computer-Synthesized Congestion Control

by

Pratiksha Ranjit Thaker

Submitted to the Department of Electrical Engineering and Computer Science on May 22, 2015, in partial fulfillment of the requirements for the degree of Master of Engineering in Electrical Engineering and Computer Science

Abstract

Recent work has shown that computer-synthesized TCP congestion-control protocols can outperform the state of the art. However, these protocols are generally too complex to reason about, so human engineers might not trust them enough to deploy them in real networks.

This thesis presents two contributions toward the practical deployment of computer-synthesized congestion-control algorithms. First, we describe a simple, human-designed protocol that performs comparably to computer-optimized protocols using only 10 lines of code. This suggests that it may be feasible to optimize for interpretability in addition to performance. Second, we introduce techniques for reasoning about the behavior of black-box protocols via extensive simulation. These techniques reveal regions of potentially undesirable behavior in both computer-optimized protocols and a NewReno-like TCP implementation, highlighting areas on which to focus further engineering effort.

Thesis Supervisor: Hari Balakrishnan

Title: Fujitsu Professor


Acknowledgements

I am lucky to have had Hari Balakrishnan as both a class professor and a research advisor. His enthusiasm and energy are likely to convince anyone that networking research is a worthwhile pursuit; they certainly convinced me.

Keith Winstein was fortunately working on Remy at around the same time that I was looking for projects like Remy. I have learned a great deal from his unique perspective and approach to research.

Anirudh Sivaraman has provided invaluable guidance, moral and technical support, and many colorful stories.

The ideas in this thesis have benefited from discussions with many people, including Hamsa Balakrishnan, Leslie Kaelbling, Russ Tedrake, Gustavo Goretkin, and Jacob Steinhardt.

The experiments in this thesis were made possible by the PDOS group’s generosity in sharing the use of their multicore machines.

Thanks to Sheila Marian for her tireless logistical support.

I owe a great deal to Stefanie Tellex, who taught me all the things I didn’t learn in class, and trusted that I could do things I didn’t know I was capable of doing.

I am so glad to have spent this year in NMS and on G9, where I have found better labmates and floor-mates than I could have hoped for.

My friends at and outside MIT have made countless late nights (and early mornings) all worthwhile.

And finally, I am thankful to my family, near and far, for their love and support: especially my parents, who have given me more than words can express; and my brother, whom I am so very grateful to have in my life.

This work was supported by a Google research award.


Contents

1 Introduction

13

1.1 Contributions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

2 Related work

14

17

2.1 TCP congestion control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

17

2.2 Remy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

18

2.3 Safety analysis of congestion-control protocols . . . . . . . . . . . . . . . .

19

3 The Kite protocol

21

3.1 Design . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

22

3.1.1 Congestion signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

22

3.1.2 Windowing and pacing . . . . . . . . . . . . . . . . . . . . . . . . . .

23

3.1.3 Algorithm . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

23

3.2 Evaluation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

24

3.3 Discussion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

27

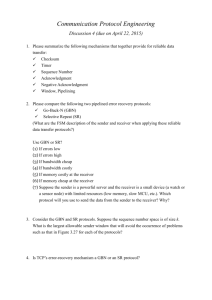

4 Toward automated verification of congestion control

31

4.1 Approach . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

32

4.1.1 Cycle detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

33

4.1.2 Network state . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

33

4.2 Single sender . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

36

7

4.2.1 Sensitivity to initial conditions . . . . . . . . . . . . . . . . . . . . .

36

4.2.2 Convergence time . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

39

4.3 Multiple senders . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

41

4.3.1 A sender joins the network . . . . . . . . . . . . . . . . . . . . . . . .

41

4.3.2 A sender leaves the network . . . . . . . . . . . . . . . . . . . . . . .

43

4.4 Discussion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

46

5 Future work and conclusions

49

8

List of Figures

3-1 Performance of Kite over a range of link speeds.

3-2 Performance of Kite over a range of RTTs.

3-3 Performance of Kite over a range of multiplexing.

4-1 Throughput maps generated by sweeping a range of initial conditions for a single sender running one of two Remy-generated protocols. An omniscient sender would achieve 100% throughput (white).

4-2 Cycle convergence times for a one-sender system in a single-bottleneck network with a 150 ms round-trip time.

4-3 Cycle convergence times for a two-sender system in a single-bottleneck network with a 32 Mbits/s link and 150 ms round-trip time. The x-axis indicates the time at which a second sender joins the network, ranging from time 0 (when the first sender starts) until the first sender reaches a cycle. The y-axis indicates the time from when the second sender starts until the entire network state enters a cycle. A system of two RemyCCs converges to a cycle in under 35 seconds regardless of when the second sender turns on. A system of AIMD senders can take up to 2000 seconds to converge for the same network parameters.

4-4 Time required for a single sender to return to a cycle after a second sender has entered and left the network, in a network of RemyCCs trained for link speeds between 1 and 1000 Mbits/s. The x-axis specifies the period of time for which the second sender is on before turning off again. The y-axis shows the number of milliseconds required for the first sender to return to a cycle. The convergence time for the first sender increases systematically the longer the second sender is on, except when the second sender’s flow duration is short (under one second).

4-5 Variation in cycle trajectories in one variable of the state of a two-sender system of RemyCCs. The trajectories vary significantly and must be analyzed separately.

List of Tables

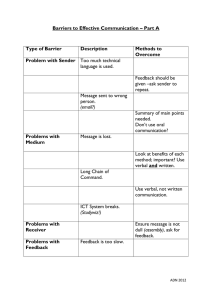

3.1 Training and evaluation scenarios for breadth in link speed. . . . . . . . .

24

3.2 Training and evaluation scenarios for breadth in multiplexing. . . . . . .

25

3.3 Training and evaluation scenarios for breadth in propagation delay. . . .

25

11

12

Chapter 1

Introduction

A computer that wants to send data over a network – an endpoint – must answer a difficult question: knowing almost nothing about the state of the network, when is the best time to send this data, and how quickly? Endpoints in the Internet use congestion-control protocols to make these decisions. The inputs to these protocols are various signals of network state; the outputs are modifications to the sender’s current rules for sending packets.

Traditional approaches to designing congestion-control protocols are reactive. Once a protocol is deployed in a network, unexpected deficiencies in performance might surface, and a researcher might then propose a new protocol to resolve them. Yet the myriad congestion-control schemes that have arisen from this cycle are not well understood. Which scheme should an endpoint use if it is running a videoconferencing application whose packets cannot tolerate long delays? Which should it use for a large file transfer across continents, where throughput is paramount? Various protocols claim to cater to these objectives, but support for these claims is often based on intuition and limited experimentation.

Remy [28] is a tool that synthesizes congestion-control protocols according to a user-specified objective function, under certain assumptions about the network conditions in which the protocol is intended to operate. The experiments in [23] demonstrate that the protocols Remy produces perform well. On certain objective functions, they outperform existing protocols such as TCP Cubic [11], and even schemes that require modifications to routers in the network, such as CoDel [20]. However, the protocols that Remy generates are extremely complex. They have hundreds of rules, which prevent human network engineers from developing an intuition for their behavior.

The complexity of Remy-generated protocols (“RemyCCs”) is a barrier to their deployment. A protocol such as TCP NewReno [12] might have unexpected emergent behavior. However, the algorithms that produce this behavior at the endpoints are simple enough that a researcher or engineer might be able to reason about it and develop a solution. A comparable situation arising from the use of a RemyCC might be impossible to fix without, or even with, expert knowledge of the optimization procedure.

This suggests two avenues for gaining enough trust in the behavior of a RemyCC to deploy it in practice. The first is to develop RemyCCs that are simple enough at the endpoints that humans can reason about and fix unexpectedly poor behavior. The second is to develop tools for verifying the safety of black-box protocols, so that we might convince ourselves that emergent problems will not arise at all.

1.1 Contributions

This thesis presents two main contributions:

1. The design and evaluation of a simple congestion-control protocol, Kite (Chapter 3). Kite is a proof-of-concept demonstrating that good performance need not come at the cost of increased protocol complexity. Our evaluation shows that Kite can achieve performance comparable to Remy-designed protocols over broad operating ranges using only 10 lines of code, compared to the hundreds of rules that Remy optimizes.

2. Preliminary steps toward a verification tool to automatically assess the safety and performance of black-box congestion-control protocols (Chapter 4). We develop a framework for reasoning about the long-term performance and safety of congestion-control protocols through extensive, fine-grained simulation. By identifying cycles in the state of the entire network over the course of execution, we can study the robustness of protocols to variation in initial conditions. We can also study their robustness to flow arrivals and departures at arbitrary times. Our analysis reveals potentially undesirable behavior in both RemyCCs and a NewReno-like TCP implementation that merits further investigation.


Chapter 2

Related work

2.1 TCP congestion control

Congestion control for TCP is an area with a rich literature. It starts with Ramakrishnan and Jain’s DECbit scheme [22] and Jacobson’s TCP Tahoe and Reno [14] in the 1980s, and continues to the present.

“End-to-end” congestion control uses only information available at the endpoints, such as suspected packet losses or packet round-trip times, to make decisions about sending packets. Most TCP congestion-control protocols regulate packet-sending behavior by moderating a congestion window. The window determines the maximum number of packets the connection may have outstanding at a given moment.

The primary differentiators among end-to-end protocols are the statistic used to measure congestion and the rules for modifying the window on a congestion event. Many TCP variants use packet loss as a congestion signal, such as NewReno [12], BIC [29], Cubic [11], and Datacenter TCP (DCTCP) [1]. However, recent work [10] has suggested that deep, “bloated” buffers in the Internet render packet loss an ineffective signal. If senders wait for a loss event before reducing their congestion window, packets will accumulate long delays. Other protocols use increasing packet delay as a signal of congestion, such as Vegas [4], FAST TCP [27], and Compound TCP [25]. These delay-based protocols are the subject of some debate. They depend on accurate round-trip-time measurements, which may make their performance sensitive to changes in the network. Furthermore, because they back off as latency rises, they may fare poorly when competing with existing loss-based TCP mechanisms [18].
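The distinction between loss-based and delay-based window rules can be made concrete with a minimal sketch of two textbook-style updates. The first is a Reno-style additive-increase/multiplicative-decrease (AIMD) window; the second is a Vegas-style delay-based adjustment. This is a simplified illustration, not the code of any cited protocol. Slow start, fast recovery, and timeouts are omitted. The function names and the thresholds `ALPHA` and `BETA` (in packets) are assumptions chosen for illustration.

```python
"""Simplified loss-based and delay-based window rules (illustrative only)."""


def aimd_on_ack(cwnd: float) -> float:
    """Additive increase: about one extra packet per round trip."""
    return cwnd + 1.0 / cwnd


def aimd_on_loss(cwnd: float) -> float:
    """Multiplicative decrease: halve the window on a loss event."""
    return max(1.0, cwnd / 2.0)


# Assumed Vegas-style thresholds, in packets queued at the bottleneck.
ALPHA = 1.0
BETA = 3.0


def vegas_like_update(cwnd: float, base_rtt: float, rtt: float) -> float:
    """Delay-based rule, applied once per RTT.

    The gap between expected and actual throughput, scaled by base_rtt,
    estimates how many of this flow's packets are sitting in queues.
    """
    expected = cwnd / base_rtt  # rate if there were no queueing
    actual = cwnd / rtt         # rate actually achieved
    queued = (expected - actual) * base_rtt
    if queued < ALPHA:
        return cwnd + 1.0
    if queued > BETA:
        return max(1.0, cwnd - 1.0)
    return cwnd


# A loss-based sender shrinks only after a drop; a delay-based sender
# backs off as soon as the RTT rises well above its minimum.
print(aimd_on_loss(10.0))                     # 5.0
print(vegas_like_update(10.0, 100.0, 200.0))  # 9.0
```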

“In-network” congestion-control algorithms rely on router participation to give endpoints more information about the network state. With this information, endpoints can make better decisions to avoid congestion. These schemes use techniques such as notifying endpoints of congestion through acknowledgements [8, 15] and managing queueing delay by dropping packets before the buffer fills [9, 20, 17, 6, 21]. Others explicitly set the rate at which endpoints should send data [24].

2.2 Remy

Remy [28] is a tool for synthesizing congestion-control protocols. It takes as input a model of the network and an objective function to optimize. It then uses extensive simulation over the specified network parameters to synthesize a protocol that optimizes the given objective function. A network model might include:

- a range of link speeds for the bottleneck link,
- an estimate of the degree of multiplexing in the network,
- the expected round-trip propagation delay, and
- the buffer sizes at the routers,

with some tolerance for uncertainty in all of these parameters. While an objective function could take many forms, existing evaluations of Remy consider objective functions of the form

log(throughput) − δ log(delay),

where δ is a factor that trades off the relative importance of throughput and delay. That is, a δ value of zero indicates that the objective is to maximize throughput, while a δ value of one places equal importance on throughput and delay. Remy maps the space of congestion signals (measured statistics such as packet delays and acknowledgement interarrival times) to update rules for the congestion window and sending rate.
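As a small worked example of this objective, the sketch below evaluates log(throughput) − δ log(delay) for a single sender. The function name `remy_objective` is hypothetical, and units are left to the caller. Because of the logarithms, changing the units of throughput or delay only shifts every score by the same constant. Comparisons between protocols are therefore unaffected.

```python
import math


def remy_objective(throughput: float, delay: float, delta: float) -> float:
    """Per-sender objective log(throughput) - delta * log(delay)."""
    return math.log(throughput) - delta * math.log(delay)


a = remy_objective(10.0, 100.0, 1.0)  # 10 Mbit/s at 100 ms
b = remy_objective(20.0, 200.0, 1.0)  # twice the throughput, twice the delay
print(math.isclose(a, b))             # True
```

With δ = 1, a protocol that doubles its throughput at the cost of doubling its delay scores exactly the same. This is one concrete reading of “equal importance.” With δ = 0, the delay term drops out entirely.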

Sivaraman et al. [23] explore the capabilities and limitations of Remy-designed protocols optimized over large parameter ranges for an objective function that places equal importance on delay and throughput. This work indicates that Remy can synthesize protocols that perform well even when given only limited prior knowledge about the network. The same holds when the network is heterogeneous (that is, when senders have different, and even competing, objectives).

2.3 Safety analysis of congestion-control protocols

A primary concern when deploying a new network protocol is whether it has the potential to cause unexpected and catastrophic behavior. This concern often stems from the spectre of congestion collapse [19]. In congestion collapse, network throughput dramatically decreases as a result of poor behavior at the endpoints, such as excessive packet retransmissions.

Traditional approaches to analyzing the safety of a congestion-control protocol involve constructing a continuous-time model of the protocol that is amenable to techniques from control theory. For instance, Kelly [16] uses such a model to analyze the rate fairness of TCP. Alizadeh et al. [2] use a limit-cycle analysis to study the throughput, delay, and convergence properties of DCTCP [1]. Similar analyses have also been published for in-network congestion-control schemes, such as XCP [15, 3], and for active-queue-management schemes [13]. These techniques generally focus on the behavior of an aggregate of flows on a particular network topology. For these protocols, a stability analysis is also useful for identifying safe values of free parameters (such as a multiplicative-decrease factor) when tuning the protocol for deployment.


At a more fine-grained level, Veres et al. [26] have used simulations to demonstrate that TCP exhibits chaotic behavior when subjected to small perturbations of the congestion window.


Chapter 3

The Kite protocol

Remy [28] produces congestion-control protocols that perform well on certain objective functions but are extremely complex, with hundreds of rules determining the behavior of each endpoint. The complexity of a Remy-optimized protocol (“RemyCC”) presents a barrier to deployment. Some may find a RemyCC’s performance sufficient to convince them to run it on their computers. Others are likely to object that they cannot trust the behavior of a black-box protocol.
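To make the notion of a “rule” concrete, the sketch below shows a hypothetical, heavily simplified RemyCC-style rule table. Following the description of Remy in [28], each rule covers a box in the space of congestion signals and specifies an action. The action consists of a multiple m applied to the congestion window, an increment b added to it, and a lower bound r on the time between successive sends. The class names, the two rules, and their numeric values are invented for illustration. An actual RemyCC has hundreds of rules chosen by the optimizer, and their combined effect is much harder to reason about.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical signal vector: (ack_ewma_ms, send_ewma_ms, rtt_ratio).
Signals = Tuple[float, float, float]


@dataclass(frozen=True)
class Action:
    window_multiple: float   # m: new cwnd = m * cwnd + b
    window_increment: float  # b
    intersend_ms: float      # r: minimum time between successive sends


@dataclass(frozen=True)
class Rule:
    lower: Signals  # inclusive lower corner of this rule's box
    upper: Signals  # exclusive upper corner of this rule's box
    action: Action

    def covers(self, s: Signals) -> bool:
        return all(lo <= x < hi for lo, x, hi in zip(self.lower, s, self.upper))


INF = float("inf")

# Invented two-rule table: grow while the RTT is near its minimum,
# shrink and slow down once a queue appears.
RULES: List[Rule] = [
    Rule((0.0, 0.0, 1.0), (INF, INF, 1.05), Action(1.0, 1.0, 0.5)),
    Rule((0.0, 0.0, 1.05), (INF, INF, INF), Action(0.9, 0.0, 2.0)),
]


def apply_rules(rules: List[Rule], signals: Signals, cwnd: float) -> Tuple[float, float]:
    """Return (new_cwnd, intersend_ms) from the first rule whose box covers signals."""
    for rule in rules:
        if rule.covers(signals):
            a = rule.action
            return max(1.0, a.window_multiple * cwnd + a.window_increment), a.intersend_ms
    raise ValueError("no rule covers these signals")


print(apply_rules(RULES, (2.0, 2.0, 1.01), 10.0))  # (11.0, 0.5)
```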

It may well be that a protocol that performs well in a diverse Internet with many competing objectives must be very complex. If so, we might have to sacrifice our understanding of the protocols we run in favor of good performance on the objectives of our choosing. However, if we can optimize simpler protocols with comparable performance, we need not resign ourselves to using black-box algorithms.

In this chapter, we discuss a human-designed protocol, Kite, which attempts to perform as well as RemyCCs optimized for the objective function

log(throughput) − log(delay)

(that is, δ = 1 in the objective of Section 2.2) on broad ranges of network parameters.


3.1 Design

Kite replaces the window and pacing update rules of a RemyCC but maintains the same state variables. Algorithm 1 gives pseudocode for the update rules, which are executed on every ACK arrival at the sender. Kite’s design is informed by the rule tables of the RemyCCs in [23]. Kite ultimately uses fewer congestion signals and structures its update rules differently. Even so, investigating the behavior of these RemyCCs provided inspiration for the design described here.

3.1.1 Congestion signals

Kite uses two of the congestion signals used by Remy [28]:

1. An exponentially-weighted moving average over the interarrival times between acknowledgements (with a weight of 1/8 placed on new samples).

2. The ratio between the most recent RTT and the minimum RTT seen during the current connection.
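A minimal sketch of how a sender might maintain these two signals on each ACK follows. The class and attribute names are hypothetical, and times are assumed to be in milliseconds. Seeding the average with the first interarrival sample is also an assumption of this sketch, not something specified here.

```python
from typing import Optional


class CongestionSignals:
    """Tracks the two Kite inputs: ack_ewma (ms) and rtt_ratio."""

    def __init__(self, weight: float = 1.0 / 8.0) -> None:
        self.weight = weight  # weight placed on each new sample
        self.ack_ewma: Optional[float] = None
        self.last_ack_ms: Optional[float] = None
        self.min_rtt_ms = float("inf")
        self.rtt_ratio = 1.0

    def on_ack(self, now_ms: float, rtt_ms: float) -> None:
        # EWMA over the gap between this ACK and the previous one.
        if self.last_ack_ms is not None:
            gap = now_ms - self.last_ack_ms
            if self.ack_ewma is None:
                self.ack_ewma = gap  # assumption: seed with the first sample
            else:
                self.ack_ewma = (1 - self.weight) * self.ack_ewma + self.weight * gap
        self.last_ack_ms = now_ms
        # Ratio of the latest RTT to the smallest RTT seen on this connection.
        self.min_rtt_ms = min(self.min_rtt_ms, rtt_ms)
        self.rtt_ratio = rtt_ms / self.min_rtt_ms


sig = CongestionSignals()
for now, rtt in [(0.0, 100.0), (10.0, 100.0), (18.0, 120.0)]:
    sig.on_ack(now, rtt)
print(sig.ack_ewma, sig.rtt_ratio)  # 9.75 1.2
```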

In addition to these two signals, Remy uses an exponentially-weighted moving average over the time between TCP sender timestamps reflected in packet acknowledgements. Kite appears to perform reasonably well without this signal. Furthermore, [23] introduces a fourth congestion signal in order to perform well over a large range of link speeds. This signal is the same exponentially-weighted moving average of ACK interarrival times, averaged over more samples. Kite does not use this signal, yet outperforms the corresponding RemyCC over almost all of that range (Section 3.2). This suggests that the “slow” EWMA of ACK interarrival times might be necessary only as an artifact of an inefficient optimization procedure.


3.1.2 Windowing and pacing

Remy uses both windowing and pacing in its algorithms, optimizing window increase and decrease rules as well as sending rates. In contrast, Kite incrementally opens its window and never closes it again, effectively using the window to regulate sending only in the first few RTTs. Nevertheless, this conservative increase appears to be particularly important for Kite to perform well at both very low and very high link speeds. Without it, Kite would be either too aggressive at low link speeds or not aggressive enough at high link speeds.

We are cautious about drawing general conclusions about the benefits of windowing and pacing from these observations. More complex windowing rules might provide benefits in the form of higher performance at high degrees of multiplexing, or greater robustness to noise in the network. In practice, accurate, fine-grained pacing may not be feasible at very high link speeds. We have also not explored the sensitivity of Kite’s performance to jitter in the network. Conservatively, these observations indicate that, for the objective and parameter ranges in which Kite operates, it may be sufficient to optimize pacing rules alone, or pacing rules together with coarser-grained windowing rules.
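Windowing and pacing act as two separate gates on each transmission. The hypothetical helper below is a sketch, not Kite’s actual sender code. It allows a packet out only if two conditions hold: the number of packets in flight is below the congestion window, and at least the intersend time has elapsed since the previous send. The example shows why a window that only grows eventually stops binding, leaving pacing alone to regulate the sender.

```python
from typing import Optional


def may_send(now_ms: float, last_send_ms: Optional[float],
             packets_in_flight: int, cwnd: float, intersend_ms: float) -> bool:
    """A packet may go out only if both the window and the pacer allow it."""
    window_open = packets_in_flight < cwnd
    pacing_ok = last_send_ms is None or now_ms - last_send_ms >= intersend_ms
    return window_open and pacing_ok


# Early in a connection, a small window is the binding constraint ...
print(may_send(10.0, 9.0, packets_in_flight=15, cwnd=15, intersend_ms=0.5))   # False
# ... but once the window has grown well past the number of packets in flight,
# only the pacing interval decides when the next packet leaves.
print(may_send(10.0, 9.0, packets_in_flight=15, cwnd=400, intersend_ms=0.5))  # True
print(may_send(10.0, 9.8, packets_in_flight=15, cwnd=400, intersend_ms=0.5))  # False
```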

3.1.3 Algorithm

Kite follows two intuitive rules:

• use the RTT ratio estimate to reduce standing queues, and

• use the ACK interarrival times to estimate the available capacity in the network and send accordingly.

Lines 8 and 10 increase the sending rate when queueing is estimated to be sufficiently low. Lines 7 through 11 ensure that the sending rate does not increase too quickly when available capacity is small (i.e., when ACKs are arriving slowly).


Algorithm 1 Kite
1: initialize cwnd = 15
2: initialize intersend_time = 0.5 ms
3:
4: on each ACK do
5:     if rtt_ratio ≤ 1.01 then
6:         cwnd += 1
7:         if ack_ewma > 1 ms then                        ▷ Slower link speed
8:             intersend_time = ack_ewma / (ack_ewma / 10 + 1)
9:         else                                           ▷ Faster link speed
10:            intersend_time = ack_ewma / (ack_ewma + 1)
11:        end if
12:    else
13:        intersend_time = ack_ewma × 1.1
14:    end if

The constants in this algorithm, including the initialization defaults, are tuned roughly for performance; we do not claim that they are correct or optimal for practical scenarios. In particular, they are tuned for performance on the network parameter ranges described in Section 3.2.
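The following Python transcription of Algorithm 1 is a sketch for experimentation, not the implementation used in the evaluation. It assumes that `ack_ewma` and `intersend_time` are in milliseconds, since the pseudocode’s comparison against 1 ms and its division by 10 only make sense for a fixed unit. It also assumes that `rtt_ratio` and `ack_ewma` are maintained elsewhere, as described in Section 3.1.1. The names `KiteState` and `kite_on_ack` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KiteState:
    cwnd: float = 15.0           # Algorithm 1, line 1 (packets)
    intersend_time: float = 0.5  # Algorithm 1, line 2 (ms)


def kite_on_ack(state: KiteState, rtt_ratio: float, ack_ewma: float) -> None:
    """Apply Algorithm 1's per-ACK update in place; ack_ewma is in ms."""
    if rtt_ratio <= 1.01:   # little or no standing queue
        state.cwnd += 1     # the window only ever opens
        if ack_ewma > 1.0:  # ACKs arrive slowly: slower link
            state.intersend_time = ack_ewma / (ack_ewma / 10 + 1)
        else:               # ACKs arrive quickly: faster link
            state.intersend_time = ack_ewma / (ack_ewma + 1)
    else:                   # queue building: space sends 1.1x the ACK interarrival time
        state.intersend_time = ack_ewma * 1.1


s = KiteState()
kite_on_ack(s, rtt_ratio=1.0, ack_ewma=2.0)
print(s.cwnd, round(s.intersend_time, 4))  # 16.0 1.6667
```

Rewriting lines 8 and 10 in terms of rates makes their effect explicit. When rtt_ratio ≤ 1.01, Kite sends at the ACK arrival rate multiplied by 1 + ack_ewma/10 on slower links, or by 1 + ack_ewma on faster links, so it probes for additional capacity. Otherwise, it spaces packets 1.1 times the ACK interarrival time apart. It then sends somewhat slower than the bottleneck delivers, which should allow a standing queue to drain.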

3.2 Evaluation

| Link speeds | RTT | On avg. | Off avg. | # senders |
|---|---|---|---|---|
| 1–1000 Mbps | 150 ms | 1 sec | 1 sec | 2 |
| 22–44 Mbps | 150 ms | 1 sec | 1 sec | 2 |

(a) Training ranges for RemyCCs designed for breadth in link speed.

| Link speeds | RTT | # senders |
|---|---|---|
| 1–1000 Mbps | 150 ms | 2 |

(b) Testing scenario to explore breadth in link speed.

Table 3.1: Training and evaluation scenarios for breadth in link speed.

We evaluate Kite on a number of network parameter ranges explored in [23]. We compare it against the same RemyCCs evaluated in that paper, as well as against two schemes in wide use today:

1. TCP Cubic [11], the default congestion-control protocol in Linux, and

2. Cubic running over CoDel with stochastic fair queueing (sfqCoDel) [20]. sfqCoDel is an active-queue-management and scheduling algorithm that runs on bottleneck routers and assists endpoints in achieving a fairer and more efficient use of network resources.

| Link speeds | On avg. | Off avg. | min-RTT | # senders |
|---|---|---|---|---|
| 15 Mbps | 1 sec | 1 sec | 150 ms | 2 |
| 15 Mbps | 1 sec | 1 sec | 150 ms | 100 |

(a) Training ranges for RemyCCs designed for breadth in multiplexing.

| Link speeds | On avg. | Off avg. | min-RTT | # senders | Buffer |
|---|---|---|---|---|---|
| 15 Mbps | 1 sec | 1 sec | 150 ms | 1–100 | 5 BDP |
| 15 Mbps | 1 sec | 1 sec | 150 ms | 1–100 | No drops |

(b) Testing scenarios to explore breadth in multiplexing.

Table 3.2: Training and evaluation scenarios for breadth in multiplexing.

| Link speeds | On avg. | Off avg. | min-RTT | # senders |
|---|---|---|---|---|
| 33 Mbps | 1 sec | 1 sec | 150 ms | 2 |
| 33 Mbps | 1 sec | 1 sec | 50–250 ms | 2 |

(a) Training ranges for RemyCCs designed for breadth in propagation delay.

| Link speeds | On avg. | Off avg. | min-RTT | # senders |
|---|---|---|---|---|
| 33 Mbps | 1 sec | 1 sec | 1, 2, 3, …, 300 ms | 2 |

(b) Testing scenario to explore breadth in propagation delay.

Table 3.3: Training and evaluation scenarios for breadth in propagation delay.

We evaluate each protocol on a dumbbell network topology with one bottleneck link. In each evaluation, senders turn on for a period of time drawn from an exponential distribution with mean 1 second. They then turn off for a period of time drawn from the same distribution before turning on again. Queues in the network are first-in, first-out (FIFO), except in evaluations of Cubic-over-sfqCoDel, which run CoDel with stochastic fair queueing. Unless specified otherwise, buffers are sized at 5 times the bandwidth-delay product of the network (the network link speed times the minimum round-trip time).
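The workload and buffer settings described above can be sketched in a few lines. The helpers below are hypothetical and are not part of ns-2. `buffer_packets` computes a buffer of five bandwidth-delay products, assuming 1500-byte packets. `on_off_schedule` draws alternating ON and OFF periods from exponential distributions, assuming each sender starts in the ON state. Python’s `random.Random.expovariate` takes the rate parameter, so a mean of 1 second corresponds to a rate of 1.

```python
import random
from typing import List, Tuple


def buffer_packets(link_mbps: float, min_rtt_ms: float,
                   multiple: float = 5.0, packet_bytes: int = 1500) -> float:
    """Buffer size, in packets, of `multiple` bandwidth-delay products."""
    # (Mbit/s * 1e6 bits) * (ms / 1000 s) / (8 bits per byte)
    bdp_bytes = link_mbps * 1e6 * min_rtt_ms / 8000
    return multiple * bdp_bytes / packet_bytes


def on_off_schedule(mean_on_s: float, mean_off_s: float, horizon_s: float,
                    rng: random.Random) -> List[Tuple[float, float]]:
    """(start, end) ON periods for one sender, with exponential ON and OFF durations.

    Assumes the sender starts in the ON state at time 0.
    """
    t, periods = 0.0, []
    while t < horizon_s:
        on = rng.expovariate(1.0 / mean_on_s)  # expovariate takes the rate 1/mean
        periods.append((t, min(t + on, horizon_s)))
        t += on + rng.expovariate(1.0 / mean_off_s)
    return periods


print(buffer_packets(15, 150))  # 937.5
print(on_off_schedule(1.0, 1.0, 10.0, random.Random(42))[:2])
```

For the 15 Mbits/s, 150 ms scenario of Table 3.2, one bandwidth-delay product is 281,250 bytes. The default buffer therefore holds about 937 packets of 1500 bytes.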

The evaluations are performed in the ns-2 simulator. For brevity, we evaluate only against the two RemyCCs trained for the narrowest and broadest ranges of a particular network parameter.

In all cases, the objective function evaluated is

log(throughput) − log(delay).

The network is homogeneous; that is, all endpoints in each experiment run the same algorithm. Each evaluation result also includes a hypothetical omniscient protocol. This protocol has perfect knowledge of the network and can therefore give each sender its fair-share allocation of the link bandwidth while incurring no queueing delay.
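Under the description above, the omniscient protocol’s per-sender score is easy to compute. The sketch assumes that delay is measured as round-trip time, so an omniscient sender sees exactly the minimum RTT. It also assumes that the fair share is the link speed divided by the number of currently active senders; with the on/off workload, that number changes over time. The function name `omniscient_objective` is hypothetical.

```python
import math


def omniscient_objective(link_mbps: float, active_senders: int,
                         min_rtt_ms: float, delta: float = 1.0) -> float:
    """Per-sender objective for an ideal sender: fair share, zero queueing delay."""
    fair_share = link_mbps / active_senders  # equal split of the bottleneck
    delay = min_rtt_ms                       # assumption: delay = RTT with empty queues
    return math.log(fair_share) - delta * math.log(delay)


# One extra active sender halves the fair share, lowering the score by log(2).
one = omniscient_objective(15.0, 1, 150.0)
two = omniscient_objective(15.0, 2, 150.0)
print(math.isclose(one - two, math.log(2)))  # True
```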

Figure 3-1 shows results for protocols tested over a broad range of link speeds (Table 3.1b). Kite outperforms a RemyCC trained for a thousand-fold range of link speeds over almost the entire testing range. Kite does not match a RemyCC trained for a two-fold range of link speeds within that RemyCC’s training range. Outside that range, however, Kite far outperforms it (as does the thousand-fold RemyCC).

Figure 3-2 shows results for protocols tested over a range of round-trip times (Table 3.3b). Kite does not achieve quite the same level of performance as a RemyCC trained for a large range of RTTs, but it comes close. Its performance also remains consistent across all but the very lowest RTT values.

Figure 3-3 shows results for protocols tested over variation in the number of senders contending for the network (Table 3.2b). It covers two buffer configurations: a buffer of 5 times the bandwidth-delay product (Figure 3-3a), and a buffer large enough that no packets are dropped (Figure 3-3b). For a particular number of senders, Kite does not achieve utility as high as the best RemyCC, but it has better breadth. It performs better at small numbers of senders than a RemyCC trained for a large number of senders. It also performs better at large numbers of senders than a RemyCC trained for a small number of senders.

Figure 3-1: Performance of Kite over a range of link speeds. [Plot: normalized objective function vs. link speed (Mbits/s, 1 to 1000, log scale); protocols: Cubic, Cubic-over-sfqCoDel, Kite, Omniscient, RemyCC (1000x), RemyCC (2x).]

3.3 Discussion

We do not make claims about the general utility or robustness of this protocol. However, it provides a counterpoint to the suggestion in [28] that we may have to accept increased complexity at endpoints if we wish to design protocols with predictable aggregate behavior for specific objective functions. Kite requires about 10 lines of code to perform well on an objective function for which Remy generates a protocol with hundreds of rules. This suggests that there is promise for new protocol-synthesis tools that generate interpretable protocols without sacrificing performance. Such tools would smooth the path to acceptance of computer-generated protocols by the networking community. They might also provide a framework in which to rigorously evaluate tradeoffs among interpretability, performance, and robustness.

Figure 3-2: Performance of Kite over a range of RTTs. [Plot: normalized objective function vs. RTT (ms, up to 300); protocols: Cubic, Cubic-over-sfqCoDel, Kite, Omniscient, RemyCC (150 ms exactly), RemyCC (50–250 ms).]

These results also suggest that there may be hope for a “one-size-fits-all” protocol that performs well on wide ranges of several network parameters at once, at least for certain objectives. (A more intelligent protocol might also try to infer when an objective is infeasible to achieve, and act according to a “next-best” alternative. One example is a latency-sensitive protocol competing with a buffer-filling TCP variant.)

It is also worth noting that each RemyCC in the evaluation was trained for breadth in only one network parameter, and given perfect knowledge of all other parameters. It may be possible to optimize a RemyCC for breadth in all three parameters (link speed, round-trip time, and number of senders). However, given the large space of training scenarios, it would take weeks for the current optimizer to create such a protocol, even on a many-core server.

Figure 3-3: Performance of Kite over a range of multiplexing. (a) Buffer size 5x BDP. (b) No packet drops. [Plots: normalized objective function vs. number of senders (up to 100); protocols: Cubic, Cubic-over-sfqCoDel, Kite, Omniscient, RemyCC (1–100 senders), RemyCC (1–2 senders).]

The discussion in this chapter highlights one additional benefit of an automated protocol-synthesis tool. Such a tool is free of many of the biases and preconceptions that we have about network congestion control. It can therefore give us insight into different approaches that might work better than what we could otherwise design. Even if interpretable protocol synthesis is a distant prospect, we might be able to use the output of tools like Remy to inform and accelerate our engineering efforts.


Chapter 4

Toward automated verification of congestion control

When a new congestion-control protocol is proposed, a primary concern for researchers and engineers is whether the protocol has failure modes that could lead to catastrophic collapse in the Internet (cf. [19]). However, these failure modes are difficult to predict. They may be discovered only after deployment, when poor behavior begins to manifest as deterioration in application performance and user experience. One preventive measure is to perform general analyses of protocol behavior. Such analyses can find failure modes, or convince the community that the protocol is safe to use and will generally result in desirable behavior.

A traditional analysis has three steps [16, 3, 2]:

1. manually constructing a continuous-time fluid model of a protocol,
2. qualitatively demonstrating its correspondence with network simulations, and
3. using techniques from control theory to establish various desirable properties of the protocol.

These analyses are useful for making statements about aggregate, average flow behavior. However, they require expert knowledge to construct and are rarely helpful for comparing new protocols side by side. Moreover, for complex protocols such as those generated by Remy [28], a fluid-model analysis is likely inaccessible even to an expert.

This chapter describes preliminary work toward an automated verification system for congestion-control protocols.

4.1 Approach

To evaluate the safety of a protocol, we must have tools for reasoning about its long-term behavior. Continuous-time, continuous-state systems might be amenable to control-theoretic analysis, but at the finest granularity, the evolution and state of a network contain both discrete and continuous elements. While fluid-model analysis might predict favorable, stable equilibria (e.g., [16]), finer-grained simulation can reveal chaotic behavior (for example, [26] describes divergence in TCP congestion window evolution with small perturbations to initial conditions).

Here we explore the extreme of fine-grained safety analysis: using network simulations to directly verify properties of congestion-control protocols. We identify the

network state variables required to fully determine the future evolution of the network

state, and search for cyclic behavior in this state as we simulate the network forward.

If the evolution of the network state converges to a cycle, we can evaluate the senders’

objective functions over the course of that cycle in order to make statements about

the long-term value of the objective function, in addition to making statements similar to those made in traditional fluid-model analyses (e.g., [2]) about buffer sizes and

fairness. If we observe that the network enters multiple different cycles as a result of

perturbations, we can reason about the worst- and best-case performance of the protocol (under the simulated conditions) by evaluating these statistics over all observed

cycles.


4.1.1 Cycle detection

To find cycles in the network state, we simulate the network in an event-driven simulator and use Brent’s cycle-detection algorithm [5], polling the network state only on

network element events (e.g., a sender sending a packet, or a queue draining a packet).

We truncate continuous state variables to a precision of eight decimal places.

Brent’s algorithm (Algorithm 2) is a modification of Floyd’s cycle-detection algorithm [7]. Floyd’s algorithm uses two pointers to find a cycle in a linked list: a “slow” pointer advances through the list one node at a time, and a “fast” pointer advances two nodes at a time. If the pointers are ever equal, there is a cycle in the list. In the context of a network simulation, we can run two simulations in parallel, advancing one simulation one network event at a time and the other two network events at a time; if the network states in these two simulations are ever the same, then we have found a cycle in the evolution of the network.
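The two-pointer idea can be sketched in Python by abstracting one network event as a deterministic function `step` that maps one network state to the next. This is a hypothetical illustration rather than the thesis's simulator: `floyd_detect` and `toy_step` are our names, and the toy map x → (x·x + 1) mod 255 merely stands in for a network whose state is eventually periodic.

```python
from typing import Callable, Optional, TypeVar

S = TypeVar("S")


def floyd_detect(step: Callable[[S], S], start: S, max_steps: int) -> Optional[S]:
    """Return a state that lies on a cycle, or None if none is found.

    `step` advances a deterministic simulation by exactly one network event.
    """
    slow = step(start)        # slow copy: one event per iteration
    fast = step(step(start))  # fast copy: two events per iteration
    for _ in range(max_steps):
        if slow == fast:      # identical states: the trajectory has looped
            return slow
        slow = step(slow)
        fast = step(step(fast))
    return None               # budget exhausted, analogous to a time limit


def toy_step(x: int) -> int:
    """Toy stand-in for one network event (hypothetical)."""
    return (x * x + 1) % 255


print(floyd_detect(toy_step, 3, 1000))  # 167, a state on the cycle
```

Floyd's scheme only returns some state on the cycle; finding the cycle's length and the point where it begins takes additional passes.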

Brent’s algorithm is more efficient than Floyd’s algorithm. It advances only the fast network, periodically moving the slow network up to the fast network’s current state after a number of network events that doubles each time (1, 2, 4, ...), and it simultaneously calculates the number of network events in the cycle: when the two states first match, the number of events since the last move of the slow network is exactly the cycle length. Therefore, once a cycle has been detected, we can easily find the time required for the network state to converge to this cycle by once again running two simulations, one of which is exactly one cycle-length ahead of the other. When the states of the two simulations are equal, the lagging simulation is at the beginning of the first occurrence of the cycle. We can then run this lagging simulation forward one cycle-length to calculate statistics, such as the average utility, over the course of the cycle.
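A compact Python sketch of Brent’s algorithm, written against the same kind of one-event `step` function, returns both the number of events before the cycle begins and the cycle length, and then averages a per-state statistic over one cycle. The function names, the `max_steps` budget (standing in for the simulated-time limit) and the toy map are our assumptions. Note also that `cycle_average` weights every network event equally; a time-weighted average would instead weight each state by the simulated time until the next event.

```python
from typing import Callable, Optional, Tuple, TypeVar

S = TypeVar("S")


def brent_cycle(step: Callable[[S], S], start: S,
                max_steps: int) -> Optional[Tuple[int, int]]:
    """Return (mu, lam) for the trajectory start, step(start), ...

    mu  = number of events before the first occurrence of the cycle
    lam = cycle length in events
    Returns None if no cycle is detected within max_steps events.
    """
    # Phase 1: only the fast copy moves; the slow copy jumps to the fast
    # copy whenever the current budget (1, 2, 4, ... events) is used up.
    power = lam = 1
    slow, fast = start, step(start)
    steps = 1
    while slow != fast:
        if steps >= max_steps:
            return None
        if power == lam:
            slow = fast
            power *= 2
            lam = 0
        fast = step(fast)
        lam += 1
        steps += 1
    # Phase 2: start a second copy exactly one cycle ahead, then advance both
    # in lockstep; they first agree at the beginning of the cycle.
    slow = fast = start
    for _ in range(lam):
        fast = step(fast)
    mu = 0
    while slow != fast:
        slow, fast = step(slow), step(fast)
        mu += 1
    return mu, lam


def cycle_average(step: Callable[[S], S], start: S, mu: int, lam: int,
                  stat: Callable[[S], float]) -> float:
    """Average `stat` over one full cycle, one sample per network event."""
    state = start
    for _ in range(mu):   # run forward to the start of the cycle
        state = step(state)
    total = 0.0
    for _ in range(lam):  # then accumulate over exactly one cycle
        total += stat(state)
        state = step(state)
    return total / lam


def toy_step(x: int) -> int:
    """Toy stand-in for one network event (hypothetical)."""
    return (x * x + 1) % 255


mu, lam = brent_cycle(toy_step, 3, 1000)
print(mu, lam)                                     # 2 6
print(cycle_average(toy_step, 3, mu, lam, float))  # 66.0
```

Here `mu` plays the role of the convergence time (in events) and `lam` the cycle length; a real harness would read simulated time off the network rather than counting events.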

4.1.2 Network state

We use the same tools to evaluate RemyCC protocols as well as a simple TCP protocol using additive increase/multiplicative decrease (AIMD) congestion window rules. This requires minimal annotation in the form of the specific state variables to maintain for each sender.

Algorithm 2 Brent’s cycle-detection algorithm

Input: Starting network configuration
▷ Phase 1: detect the existence of a cycle and measure its length
initialize fast_network to starting configuration
step_limit = 1
cycle_found = false
while simulated_time < time_limit do
    initialize slow_network to current configuration of fast_network
    steps_taken = 0
    while steps_taken < step_limit do
        run fast_network until network event
        steps_taken++
        if state of slow_network == state of fast_network then
            cycle_found = true
            break
        end if
    end while
    if cycle_found then
        break
    end if
    step_limit *= 2
end while
if not cycle_found then
    return no cycle found within time_limit
end if
▷ steps_taken now equals the cycle length, in network events
▷ Phase 2: find the beginning of the cycle
re-initialize fast_network to starting configuration
re-initialize slow_network to starting configuration
for steps_taken iterations do
    run fast_network until network event
end for
while state of slow_network != state of fast_network do
    run fast_network until network event
    run slow_network until network event
end while
▷ The “slow network” is now at the start of the cycle.
Output: Beginning of cycle

For a single RemyCC sender, we maintain the following state variables:

• The RemyCC memory:

– EWMA (with a weight of 1/8) of interarrival times between acknowledgements

– EWMA (with a weight of 1/256) of interarrival times between acknowledgements

– EWMA of time between sender timestamps in the acknowledgements

– Ratio between the most recent RTT and the minimum RTT

• Current window size

• Time since last ACK received

For an AIMD sender, we maintain the following state variables:

• Current window size

• Number of packets remaining until window is filled

• Time since the last time a packet loss was detected

For the remainder of the network, we maintain the following state variables:

• State of each sender

• Current queue size

• Source ID of packet at the front of the queue

• Time until next event for each network element: senders, link, and receiver
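Comparing network states exactly requires a snapshot in which every continuous variable has been truncated. A minimal Python sketch, assuming immutable records whose class and field names are ours (chosen to mirror the lists above), truncates to eight decimal places by scaling to integers so that equality and hashing are exact; the `-1` code for an empty queue is likewise our convention. Values lying extremely close to an eight-decimal boundary can still truncate differently because of floating-point rounding, which is inherent to truncation.

```python
import math
from dataclasses import dataclass
from typing import Hashable, Tuple

SCALE = 10 ** 8  # keep eight decimal places


def trunc8(x: float) -> int:
    """Truncate x toward zero at eight decimal places, as an exact integer."""
    return math.trunc(x * SCALE)


@dataclass(frozen=True)
class RemySenderState:
    ack_ewma_short: int   # EWMA (weight 1/8) of ACK interarrival times
    ack_ewma_long: int    # EWMA (weight 1/256) of ACK interarrival times
    send_ewma: int        # EWMA of time between sender timestamps in ACKs
    rtt_ratio: int        # most recent RTT / minimum RTT
    window: int           # current window size
    since_last_ack: int   # time since the last ACK was received

    @classmethod
    def snapshot(cls, *values: float) -> "RemySenderState":
        return cls(*(trunc8(v) for v in values))


@dataclass(frozen=True)
class AimdSenderState:
    window: int               # current window size (truncated)
    packets_until_full: int   # packets remaining until the window is filled
    since_last_loss: int      # time since a loss was last detected (truncated)

    @classmethod
    def snapshot(cls, window: float, packets_until_full: int,
                 since_last_loss: float) -> "AimdSenderState":
        return cls(trunc8(window), packets_until_full, trunc8(since_last_loss))


@dataclass(frozen=True)
class NetworkState:
    senders: Tuple[Hashable, ...]        # one snapshot per sender
    queue_size: int                      # packets in the bottleneck queue
    head_source_id: int                  # source of head-of-queue packet (-1: empty)
    time_to_next_event: Tuple[int, ...]  # truncated: senders, link, receiver


a = AimdSenderState.snapshot(10.123456789, 3, 0.5)
b = AimdSenderState.snapshot(10.1234567891, 3, 0.5)
print(a == b)  # True: the values agree to eight decimal places
```

Because frozen dataclasses are hashable, snapshots can also be stored in sets or dictionaries, for example to record visited states.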


4.2 Single sender

4.2.1 Sensitivity to initial conditions

Using the cycle-based technique described in the previous section, we can examine

various protocols’ behavior in steady state with a single sender in the network. In

particular, we study a RemyCC trained for a two-fold range of link speeds and another

trained for a thousand-fold range of link speeds, as described in Section 3.2. In this

experiment, we extensively simulate each RemyCC in a network with one sender, one

32 Mbits/s bottleneck link, and a round-trip time of 150 ms. We perturb the initial

conditions of the sender in two variables: the exponentially-weighted moving average

of ACK interarrival times that Remy maintains, and the size of the buffer at the time

the sender turns on. Figure 4-1 plots the value of the objective function of

log(throughput) − log(delay),

which gives equal weight to throughput and delay, for the cycles that this single sender

enters as the initial conditions are varied.
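To make the equal weighting concrete, the objective can be evaluated once per detected cycle and then summarized over all cycles reached under perturbation. The Python sketch below is hypothetical: it assumes throughput is the number of bits delivered divided by the cycle duration and delay is the mean delay of packets delivered during the cycle, which may differ from the exact aggregation used to produce the figures.

```python
import math
from typing import Iterable, Sequence, Tuple


def cycle_objective(cycle_duration_s: float, bits_delivered: float,
                    packet_delays_s: Sequence[float]) -> float:
    """log(throughput) - log(delay) for one sender over one cycle.

    Assumed aggregation: throughput = bits delivered / cycle duration, and
    delay = mean delay of the packets delivered during the cycle.
    """
    if cycle_duration_s <= 0 or bits_delivered <= 0 or not packet_delays_s:
        raise ValueError("need a cycle with at least one delivered packet")
    throughput = bits_delivered / cycle_duration_s
    delay = sum(packet_delays_s) / len(packet_delays_s)
    return math.log(throughput) - math.log(delay)


def worst_and_best(objectives: Iterable[float]) -> Tuple[float, float]:
    """Worst- and best-case objective over all cycles reached by perturbation."""
    values = list(objectives)
    return min(values), max(values)


# Equal weight: doubling throughput is worth exactly as much as halving delay.
more_throughput = cycle_objective(1.0, 64e6, [0.15])
less_delay = cycle_objective(1.0, 32e6, [0.075])
print(math.isclose(more_throughput, less_delay))  # True
```

The choice of logarithm base only rescales the objective and does not change this equal weighting.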

These plots highlight the fragility that results from the piecewise nature of the protocol; slight variations in initial conditions can significantly affect the long-term utility that the sender achieves. Figure 4-1a shows that a RemyCC trained for link speeds between 22 and 44 Mbits/s achieves nearly 100% throughput for all initial conditions tested. However, closer inspection (Figure 4-1b) reveals that these throughput values are very sensitive to small variations in initial conditions; an increase of a single packet in the initial buffer size can cause a throughput loss of almost 5%. A more robust RemyCC might be designed by performing such evaluations during the optimization procedure and attempting to ensure that performance is continuous over these regions of the initial-conditions space.


(a) Normalized throughput averaged over cycles reached by a RemyCC

trained for a two-fold range of link speeds [23]. Lighter values are closer to

full throughput.

(b) Normalized throughput averaged over cycles reached by a RemyCC

trained for a two-fold range of link speeds [23], with scale adjusted to highlight irregularities. The values are all close to 100% throughput, but small

perturbations to the initial conditions result in differing outcomes.


(c) Normalized throughput averaged over cycles reached by a RemyCC

trained for a thousand-fold range of link speeds [23]. Lighter values are

closer to full throughput. Initial buffer sizes below about 900 packets result in 60% or greater throughput; however, initial buffer sizes above 900

packets cause throughput to drop to below 50% and as low as 10%.

Figure 4-1: Throughput maps generated by sweeping a range of initial conditions for

a single sender running one of two Remy-generated protocols. An omniscient sender

would achieve 100% throughput (white).


A RemyCC trained for link speeds between 1 and 1000 Mbits/s exhibits more complex behavior (Figure 4-1c); the sender converges to a range of possible throughput values. (The delay incurred was roughly the same for all initial conditions tested.) This protocol achieves good throughput (above 60%) if the initial buffer size is below about 900 packets; above this value, throughput drops to as low as 10% of the link bandwidth. This indicates that this RemyCC does have failure modes triggered by an increase in buffer size, which should be investigated further before it is deployed in a real network.

4.2.2 Convergence time

We can additionally study the time required for the single-sender system to converge

to a cycle as a metric of the feasibility of this analysis. We sample 100 link speeds

distributed logarithmically over the range 1 Mbit/s to 1000 Mbits/s and measure the

time required for the state of a single-sender system to converge to a cycle. Figure 4-2

shows the convergence behavior for two RemyCCs optimized for breadth in link speed.

For a RemyCC trained for a two-fold range of link speeds (22-44 Mbits/s, Figure 4-2a),

the convergence time increases within the training range, where the protocol performs

better, indicating that cyclic behavior does not always correlate with good performance.

In fact, for link speeds between 22 and 23 Mbits/s, the system does not converge to a

cycle even after 5000 simulated seconds.
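One way to place 100 link speeds logarithmically between 1 and 1000 Mbits/s is a geometric progression whose neighbors differ by a constant factor of 1000^(1/99), roughly 1.072. The text does not state whether both endpoints were included; the Python sketch below assumes they were. Under that assumption, only 10 of the 100 samples fall inside the 22-44 Mbits/s training range of the two-fold RemyCC.

```python
import math
from typing import List


def log_spaced(lo: float, hi: float, n: int) -> List[float]:
    """n values from lo to hi inclusive, evenly spaced on a logarithmic axis."""
    if n < 2 or not 0 < lo < hi:
        raise ValueError("need n >= 2 and 0 < lo < hi")
    ratio = (hi / lo) ** (1.0 / (n - 1))  # constant factor between neighbors
    return [lo * ratio ** i for i in range(n)]


speeds_mbps = log_spaced(1.0, 1000.0, 100)
in_training = [s for s in speeds_mbps if 22.0 <= s <= 44.0]
print(len(speeds_mbps), round(speeds_mbps[1] / speeds_mbps[0], 3))  # 100 1.072
```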

A thousand-fold RemyCC (trained for link speeds between 1 and 1000 Mbits/s, Figure 4-2b) converges faster; a single sender running this RemyCC converges to a cycle within 16 seconds for all sampled link speeds. While this does not necessarily have direct implications for performance, it does mean that this cycle-based analysis tool is not suited to the RemyCC in Figure 4-2a, which has regions, even within the parameter range it was trained for, where it does not converge to a cycle in a tractably short duration.


[Plot: time required to enter cycle (ms) vs. link speed (Mbits/s), logarithmic x-axis.]

(a) Convergence times for a single sender running a RemyCC trained for

a two-fold range of link speeds (22-44 Mbits/s). The red line indicates a

link rate for which the system did not enter a cycle after 5000 simulated

seconds. Notably, the convergence times are lower outside the training

range, where the protocol performance is otherwise poorer.

[Plot: time required to enter cycle (ms) vs. link speed (Mbits/s), logarithmic x-axis.]

(b) Convergence times for a single sender running a RemyCC trained

for a thousand-fold range of link speeds (1-1000 Mbits/s).

Figure 4-2: Cycle convergence times for a one-sender system in a single-bottleneck

network with a 150 ms round-trip time.


4.3 Multiple senders

The presence of cyclic behavior allows us to reason about the safety of a protocol as

senders join and leave the network. We study the case in which a second sender could

join and leave the network at an arbitrary time after the first sender has turned on.

For these experiments, we assume zero initial conditions in all state variables, studying

noise only in the form of senders joining and leaving.

4.3.1 A sender joins the network

Consider a network in which a single sender $s_1$ takes time $t_1$ to converge to a cycle and time $c_1$ to complete the cycle after it has converged. To reason about the safety of a new sender $s_2$ joining the network after $s_1$, we must consider the possibility that $s_2$ joins at an arbitrary time up to $t_1 + c_1$. That is, we must simulate the second sender joining at every time offset $t_{\mathrm{on}} \in [0, t_1 + c_1]$. If the two-sender system then converges to a cycle, we can apply the same tools as in the one-sender case to evaluate the safety of that cycle.
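The join-offset sweep can be organized as follows. This is a hypothetical Python sketch: `converges(t_on_ms)` stands in for running the two-sender simulation with the second sender joining at `t_on_ms` and reporting whether it reached a cycle within the time limit. Offsets are integral milliseconds in $[0, t_1 + c_1]$, and failing offsets are merged into contiguous regions, one way to report the regions of non-convergence described below.

```python
from typing import Callable, List, Optional, Tuple


def failing_regions(t1_ms: int, c1_ms: int,
                    converges: Callable[[int], bool]) -> List[Tuple[int, int]]:
    """Sweep join offsets t_on = 0, 1, ..., t1 + c1 (integral ms).

    `converges(t_on)` is a hypothetical hook that runs the two-sender
    simulation and reports whether it entered a cycle within the time limit.
    Returns inclusive (first, last) offset intervals that never converged.
    """
    regions: List[Tuple[int, int]] = []
    run_start: Optional[int] = None  # first offset of the current failing run
    last = t1_ms + c1_ms
    for t_on in range(last + 1):
        if not converges(t_on):
            if run_start is None:
                run_start = t_on
        elif run_start is not None:
            regions.append((run_start, t_on - 1))
            run_start = None
    if run_start is not None:  # a failing run extends to the end of the sweep
        regions.append((run_start, last))
    return regions


# Toy hook: pretend offsets 2-3, 7 and 9-10 ms never reach a cycle.
bad = {2, 3, 7, 9, 10}
print(failing_regions(8, 2, lambda t: t not in bad))  # [(2, 3), (7, 7), (9, 10)]
```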

Figure 4-3 shows cycle convergence times for the case of a sender joining the network for two different protocols: a RemyCC trained for a thousand-fold range of link

speeds (1-1000 Mbits/s) and an AIMD sender. The test network consisted of a single

32 Mbits/s link with a 150 ms round-trip time. The network with AIMD senders was

limited to a maximum buffer size of 10 packets in order to have tractable convergence

times. The simulation was terminated if it did not reach a cycle within 5000 simulated

seconds.

The AIMD protocol has regions of $t_{\mathrm{on}}$ offsets in which the two-sender system did not enter a cycle within the allotted time. When the two-sender system did converge, the RemyCC system converged much faster than the AIMD system, on the order of tens of simulated seconds rather than hundreds. (The convergence time of a two-sender AIMD system improves, predictably, in a scenario such as the one explored in [2] with a 10 Gbits/s link and an RTT of several hundred microseconds.) The RemyCC, with short cycles, is better suited to the analysis technique we describe; this suggests that it would be beneficial to incorporate not only a requirement for cyclic behavior but also a restriction on cycle length in future protocol-synthesis tools.

[Plot: time required to enter cycle (ms) vs. offset of second sender start (ms).]

(a) Convergence times for a RemyCC trained for a thousand-fold range of link speeds (1-1000 Mbits/s). No limit was set on the buffer size.

[Plot: time required to enter cycle (ms) vs. offset of second sender start (ms).]

(b) Convergence times for a simple AIMD sender. The maximum buffer size was 10 packets to limit convergence time. A red line indicates that the two-sender system did not converge within 5000 simulated seconds.

Figure 4-3: Cycle convergence times for a two-sender system in a single-bottleneck network with a 32 Mbits/s link and 150 ms round-trip time. The x-axis indicates the time at which a second sender joins the network (starting at time 0 when the first sender starts, until the first sender reaches a cycle). The y-axis indicates the amount of time from when the second sender starts until the entire network state enters a cycle. While a system of two RemyCCs converges to a cycle in under 35 seconds regardless of when the second sender turns on, a system of AIMD senders can take up to 2000 seconds to converge for the same network parameters.

4.3.2 A sender leaves the network

When a sender leaves a network, we would like to ensure that the subsequent network behavior is safe. Consider a two-sender network in which sender $s_1$ turns on at time 0 and sender $s_2$ turns on at some time offset $t_{\mathrm{on}} \geq 0$. Define $t_2$ to be the time (after $t_{\mathrm{on}}$) required for this two-sender system to enter a cycle and $c_2$ to be the time required to complete the cycle after it has converged. The cycles of each sender need not be interchangeable; that is, the state of $s_1$ may cycle through different values than the state of $s_2$ (which joined the network some time after $s_1$). Therefore, we must consider the possibility of either sender turning off at an arbitrary time $t_{\mathrm{off}} \in [t_{\mathrm{on}}, t_{\mathrm{on}} + t_2 + c_2]$. If we can demonstrate that the network enters a cycle for any combination of $t_{\mathrm{on}}$ and $t_{\mathrm{off}}$, we can then reason about the safety of a second sender entering and leaving the network at arbitrary times by examining the average value of a user-specified objective function evaluated over each cycle. As in Section 4.3.1, if the network fails to enter a cycle after an appropriately long simulated duration for some values of $t_{\mathrm{on}}$ and $t_{\mathrm{off}}$, this might indicate an unsafe mode of operation (or, conservatively, a mode of operation that our current tool cannot reason about automatically and that merits further investigation).

We have carried out this validation process for a two-sender system of RemyCCs trained for a thousand-fold range of link speeds (1-1000 Mbits/s), in a network with a single 32 Mbits/s bottleneck and 150 ms round-trip time. We simulate values of $t_{\mathrm{on}}$ and $t_{\mathrm{off}}$ at integral numbers of milliseconds over the full ranges $t_{\mathrm{on}} \in [0, t_1 + c_1]$ and $t_{\mathrm{off}} \in [t_{\mathrm{on}}, t_{\mathrm{on}} + t_2 + c_2]$, terminating any simulations running longer than 5000 simulated seconds. If the two-sender system does not reach any cycle, we terminate the evaluation and do not simulate any $t_{\mathrm{off}}$ values. (An alternative method might be to fix the flow length of $s_2$ to make the analysis more tractable and simulate all possible values of $t_{\mathrm{on}}$ for that flow, checking only whether the single-sender system enters a cycle after $s_2$ completes its flow. This method would not depend on whether the two-sender system enters a cycle.)
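The size of this sweep follows directly from the ranges above: each join offset $t_{\mathrm{on}}$ whose two-sender system reaches a cycle contributes $t_2 + c_2 + 1$ departure times (integral milliseconds, inclusive), each for either departing sender. The Python sketch below is hypothetical: `two_sender_cycle(t_on_ms)` stands in for a simulation returning $(t_2, c_2)$ in milliseconds, or `None` when no cycle is reached, and enumerating both senders at every $t_{\mathrm{off}}$ is our reading of "either sender turning off".

```python
from typing import Callable, Iterator, Optional, Tuple

Cycle = Tuple[int, int]  # (t2_ms, c2_ms) of the two-sender system


def leave_sweep(t1_ms: int, c1_ms: int,
                two_sender_cycle: Callable[[int], Optional[Cycle]]
                ) -> Iterator[Tuple[int, int, str]]:
    """Yield (t_on, t_off, leaving_sender) for every leave simulation."""
    for t_on in range(t1_ms + c1_ms + 1):     # t_on in [0, t1 + c1]
        cycle = two_sender_cycle(t_on)        # hypothetical simulation hook
        if cycle is None:
            continue                          # no cycle: skip all t_off values
        t2_ms, c2_ms = cycle
        for t_off in range(t_on, t_on + t2_ms + c2_ms + 1):  # [t_on, t_on+t2+c2]
            for leaving in ("s1", "s2"):      # either sender may turn off
                yield t_on, t_off, leaving


# Toy hook: offset 1 ms never cycles; otherwise t2 = 2 ms and c2 = 1 ms.
runs = list(leave_sweep(1, 1, lambda t: None if t == 1 else (2, 1)))
print(len(runs), runs[0])  # 16 (0, 0, 's1')
```

Because the generator is lazy, the individual simulations could be distributed across cores as they are produced rather than materialized all at once.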

The full validation, of about 80 million simulations, took roughly 12 hours on an 80-core server. We plot one such evaluation in Figure 4-4, for a network in which sender $s_2$ turns on 100 milliseconds into the execution of sender $s_1$. We sample integral durations (in milliseconds) for which $s_2$ remains on before it turns off and leaves the network, and plot the time required for $s_1$ to re-enter a cycle after $s_2$ turns off. For this setup, the time required for $s_1$ to reach a cycle increases almost linearly with the sending duration of $s_2$. Note the erratic convergence behavior when $s_2$ is on for only a short period of time: this might indicate that it takes $s_1$ longer to return to a cycle if it encounters a momentary disruption, or simply that these particular durations of $s_2$ sending result in network configurations from which $s_1$ takes longer to return to a cycle.
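As a rough check on the cost of this validation, the reported figures can be turned into a per-simulation budget. This back-of-the-envelope sketch assumes the work was spread evenly over all 80 cores for the full 12 hours, which is our simplification; the inputs are the approximate values quoted above.

```python
simulations = 80_000_000
cores = 80
wall_clock_s = 12 * 3600  # roughly 12 hours

core_seconds = cores * wall_clock_s                    # 3,456,000
sims_per_core_second = simulations / core_seconds      # about 23.1
ms_per_simulation = 1000 * core_seconds / simulations  # about 43.2 ms
print(core_seconds, round(sims_per_core_second, 1), round(ms_per_simulation, 1))
# 3456000 23.1 43.2
```

So each simulation averaged on the order of 40 ms of compute, a useful baseline when estimating how the sweep would scale with longer cycles or more senders.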

This analysis can be optimized somewhat if we can show that the two-sender system enters one unique cycle regardless of the value of $t_{\mathrm{on}}$, as we then need only consider the various trajectories taken from $t_{\mathrm{on}}$ until the network enters a cycle at $t_{\mathrm{on}} + t_2$, and there is only one cyclic trajectory to analyze from $t_{\mathrm{on}} + t_2$ until $t_{\mathrm{on}} + t_2 + c_2$. RemyCCs appear not to have this property; for the RemyCC trained for a two-fold range of link speeds, varying the $t_{\mathrm{on}}$ value almost always seems to result in a distinct cycle for the subsequent two-sender system. Moreover, these cycles are qualitatively quite different, as evidenced by Figure 4-5. However, our simple AIMD model does have this property: for any value of $t_{\mathrm{on}}$, the two-sender system will always enter the same cycle.
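Checking whether different join offsets $t_{\mathrm{on}}$ lead to the same cycle requires comparing cycles independently of the phase at which each was entered. One way to do this, sketched below in Python under the assumption that each cycle is recorded as the list of orderable network states it visits (for example, tuples of truncated integers), is to rotate every cycle to start at its smallest state and compare the results. This is our illustration, not the procedure used in the thesis; it relies on the fact that within one minimal period of a deterministic system no state repeats, so the rotation is unique.

```python
from typing import Dict, Sequence, Set, Tuple


def canonical_cycle(states: Sequence) -> Tuple:
    """Rotate a recorded cycle so that it starts at its smallest state.

    Within one minimal period of a deterministic system every state is
    distinct, so this rotation is unique; states must support `<`.
    """
    if not states:
        return ()
    i = min(range(len(states)), key=lambda k: states[k])
    return tuple(states[i:]) + tuple(states[:i])


def distinct_cycles(cycle_by_offset: Dict[int, Sequence]) -> Set[Tuple]:
    """Distinct cycles reached across all join offsets t_on."""
    return {canonical_cycle(c) for c in cycle_by_offset.values()}


# Toy data: offsets 0, 5 and 9 enter the same cycle at different phases.
cycles = {0: [3, 1, 2], 5: [1, 2, 3], 9: [2, 3, 1], 12: [1, 3, 2]}
print(sorted(distinct_cycles(cycles)))  # [(1, 2, 3), (1, 3, 2)]
```

If the resulting set has exactly one element, as reported for the AIMD model, only one cyclic trajectory needs to be analyzed.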


[Plot: convergence time for first sender (ms) vs. duration for which second sender remains on (ms).]

Figure 4-4: Time required for a single sender to return to a cycle after a second sender

has entered and left the network, in a network of RemyCCs trained for link speeds

between 1 and 1000 Mbits/s. The x-axis specifies the period of time for which the

second sender turns on before turning off again. The y-axis shows the number of

milliseconds required for the first sender to return to a cycle. The convergence time

for the first sender increases systematically the longer the second sender is on, except

when the second sender’s flow duration is short (under one second).


[Plot: EWMA of ACK interarrival rate vs. sequence number of network event, one trajectory per second-sender offset (legend values 102.615, 21.378, and 996.223).]

Figure 4-5: Variation in cycle trajectories in one variable of the state of a two-sender

system of RemyCCs. The trajectories vary significantly and must be analyzed separately.

Since the AIMD protocol has much longer convergence and cycle times than RemyCCs

for comparable network parameters (link speed and round trip time), compressing the

cycles of the two-sender system into a single unique cycle might save a considerable

amount of time when evaluating the safety of one sender leaving the network.

4.4 Discussion

The results presented in this chapter are preliminary, but they demonstrate that – given

sufficient computational power – it is possible to automatically make statements about

the behavior of a black-box protocol. If we can demonstrate that a protocol enters cycles, a fine-grained analysis of its long-term behavior becomes tractable. It is therefore

beneficial to try to ensure that protocols do have such cyclic behavior in order to be


able to reason about their behavior, and a Remy-like protocol optimizer might be able

to enforce this as a requirement during the optimization.

While we take advantage of the presence of cycles in order to reason about the

long-term behavior of protocols, cyclic behavior is by no means required for a protocol

to be safe for deployment: indeed, the best-performing algorithms may have no cyclic

behavior at all, and there may be other ways of automatically evaluating the safety and

robustness of such algorithms.

A considerable amount of further work is required before tools such as the ones

presented in this chapter can be used to determine whether a protocol is truly “safe”

for use in the Internet. One important limitation of a simulation-based approach is that

the results do not immediately generalize to any network configurations outside of the

simulated one. It will be important to determine how to make such generalizations, and

– perhaps even more challenging – how to do so automatically, treating the protocol as

a black box.

However, even our preliminary results reveal some interesting properties of both

RemyCCs and simple AIMD senders. Particularly important is that RemyCCs do exhibit failure modes that can be made clear through extensive simulation. This does not

suggest that computer-synthesized protocols will all exhibit such behavior; in fact, it

suggests that, if developed further, analysis tools such as these can be useful for incorporation into the optimization procedure itself, to ensure that any protocol generated

by an automatic synthesizer is safe.

These kinds of safety guarantees, and formal verification tools, have been explored

in detail by the programming languages community, and we believe that such formal

guarantees will also be useful in engineering new network protocols, whether by hand

or by machine.



Chapter 5

Future work and conclusions

A number of avenues for future work remain.

The results in Chapter 3 suggest that there is potential to develop a protocol-synthesis tool that produces less complex algorithms while retaining good performance. One way of building such a synthesizer would be to add a post-processing step to the Remy optimizer that regularizes the output of Remy into a simpler protocol. However, the current Remy optimizer takes days, or even weeks, to optimize a good protocol. Finding a synthesis procedure with all three of these desirable properties (simple outputs, tractable runtime, and good performance) is a challenging, but perhaps not impossible, task.

The two-sender evaluation method presented in Section 4.3 requires a number of simulations that grows exponentially in the number of senders and will certainly not scale to large numbers of senders. We may be able to optimize this by caching results for trajectories of the state variables that recur in different simulations, as suggested in Section 4.3.2, but this optimization is not possible for all protocols of interest; in fact, we observe that RemyCCs enter distinct cycles for almost all simulated conditions in our experiments with two senders. To automatically verify the safety of a protocol when many flows are arriving and departing stochastically, it will be necessary to find a verification strategy that is computationally tractable, which may differ from the method presented in this thesis.

While we rely on the existence of cycles in the network state in order to perform the analyses in Chapter 4, it is clear that many congestion-control protocols will not exhibit such behavior; in fact, Section 4.2.2 identifies evaluation regions in which a RemyCC does not enter a cycle at all, even under perfect, noise-free simulated conditions. To develop a truly protocol-agnostic verification tool, we will need methods for reasoning about the long-term behavior of protocols that do not admit cycles in the network state.

Stochastic flow arrivals and departures are only one form of noise in a network. Section 4.2 shows that small perturbations in other variables of the network state can lead to significant differences in performance (an observation that holds not only for RemyCCs but also, for example, for TCP Tahoe [26]). Determining whether a cycle in the network state is “stable” (in the sense that the network will robustly return to the cycle, even under perturbations such as packet jitter) would help generalize our results to performance on real networks. Developing a formalism for making these kinds of generalizations of simulated results is left to future work.


Bibliography

[1] M. Alizadeh, A. Greenberg, D. A. Maltz, J. Padhye, P. Patel, B. Prabhakar, S. Sengupta, and M. Sridharan. Data center TCP (DCTCP). ACM SIGCOMM Computer Communication Review, 41(4):63–74, 2011.

[2] M. Alizadeh, A. Javanmard, and B. Prabhakar. Analysis of DCTCP: stability, convergence, and fairness. In Proceedings of the ACM SIGMETRICS Joint International Conference on Measurement and Modeling of Computer Systems, pages 73–84. ACM, 2011.

[3] H. Balakrishnan, N. Dukkipati, N. McKeown, and C. J. Tomlin. Stability analysis of explicit congestion control protocols. IEEE Communications Letters, 11(10):823–825, 2007.

[4] L. S. Brakmo and L. L. Peterson. TCP Vegas: end to end congestion avoidance on a global Internet. IEEE Journal on Selected Areas in Communications, 13(8):1465–1480, 1995.

[5] R. P. Brent. An improved Monte Carlo factorization algorithm. BIT Numerical Mathematics, 20(2):176–184, 1980.

[6] W. Feng, K. Shin, D. Kandlur, and D. Saha. The BLUE active queue management algorithms. IEEE/ACM Transactions on Networking, August 2002.

[7] R. W. Floyd. Nondeterministic algorithms. Journal of the ACM (JACM), 14(4):636–644, 1967.

[8] S. Floyd. TCP and explicit congestion notification. ACM SIGCOMM Computer Communication Review, 24(5):8–23, 1994.

[9] S. Floyd and V. Jacobson. Random early detection gateways for congestion avoidance. IEEE/ACM Transactions on Networking, 1(4):397–413, 1993.

[10] J. Gettys and K. Nichols. Bufferbloat: dark buffers in the Internet. Queue, 9(11):40, 2011.

[11] S. Ha, I. Rhee, and L. Xu. CUBIC: a new TCP-friendly high-speed TCP variant. ACM SIGOPS Operating Systems Review, 42(5):64–74, 2008.


[12] J. C. Hoe. Improving the start-up behavior of a congestion control scheme for TCP. In ACM SIGCOMM Computer Communication Review, volume 26, pages 270–280. ACM, 1996.

[13] C. V. Hollot, V. Misra, D. Towsley, and W. Gong. Analysis and design of controllers for AQM routers supporting TCP flows. IEEE Transactions on Automatic Control, 47(6):945–959, 2002.

[14] V. Jacobson. Congestion avoidance and control. In ACM SIGCOMM Computer Communication Review, volume 18, pages 314–329. ACM, 1988.

[15] D. Katabi, M. Handley, and C. Rohrs. Congestion control for high bandwidth-delay product networks. In ACM SIGCOMM Computer Communication Review, volume 32, pages 89–102. ACM, 2002.

[16] F. Kelly. Fairness and stability of end-to-end congestion control. European Journal of Control, 9(2):159–176, 2003.

[17] S. Kunniyur and R. Srikant. Analysis and design of an adaptive virtual queue (AVQ) algorithm for active queue management. In SIGCOMM, 2001.

[18] J. Mo, R. J. La, V. Anantharam, and J. Walrand. Analysis and comparison of TCP Reno and Vegas. In IEEE INFOCOM, volume 3, pages 1556–1563. IEEE, 1999.

[19] J. Nagle. Congestion control in IP/TCP internetworks. 1984.

[20] K. Nichols and V. Jacobson. Controlling queue delay. Communications of the ACM, 55(7):42–50, 2012.

[21] R. Pan, B. Prabhakar, and K. Psounis. CHOKe: a stateless active queue management scheme for approximating fair bandwidth allocation. In INFOCOM, 2000.

[22] K. K. Ramakrishnan and R. Jain. A binary feedback scheme for congestion avoidance in computer networks. ACM Transactions on Computer Systems, 8(2):158–181, May 1990.

[23] A. Sivaraman, K. Winstein, P. Thaker, and H. Balakrishnan. An experimental study of the learnability of congestion control. In ACM SIGCOMM, Chicago, August 2014.

[24] C. H. Tai, J. Zhu, and N. Dukkipati. Making large scale deployment of RCP practical for real networks. In INFOCOM, 2008.

[25] K. Tan, J. Song, Q. Zhang, and M. Sridharan. A compound TCP approach for high-speed and long distance networks. In INFOCOM, 2006.


[26] A. Veres and M. Boda. The chaotic nature of TCP congestion control. In Proceedings of IEEE INFOCOM 2000, Nineteenth Annual Joint Conference of the IEEE Computer and Communications Societies, volume 3, pages 1715–1723. IEEE, 2000.

[27] D. X. Wei, C. Jin, S. H. Low, and S. Hegde. FAST TCP: motivation, architecture, algorithms, performance. IEEE/ACM Transactions on Networking, 14(6):1246–1259, 2006.

[28] K. Winstein and H. Balakrishnan. TCP ex Machina: computer-generated congestion control. In ACM SIGCOMM, Hong Kong, August 2013.

[29] L. Xu, K. Harfoush, and I. Rhee. Binary increase congestion control (BIC) for fast long-distance networks. In INFOCOM, 2004.
