chapter 8 annealing


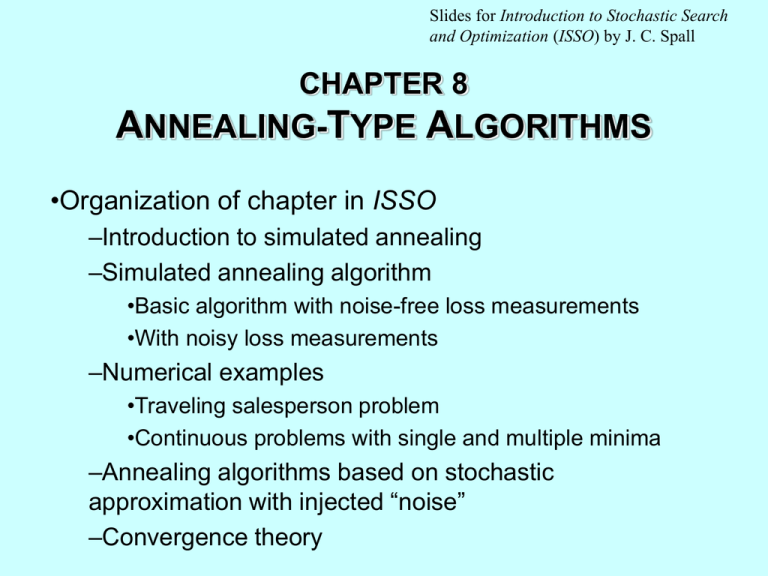

Slides for Introduction to Stochastic Search and Optimization (ISSO) by J. C. Spall

CHAPTER 8
ANNEALING-TYPE ALGORITHMS

• Organization of chapter in ISSO
– Introduction to simulated annealing
– Simulated annealing algorithm
    • Basic algorithm with noise-free loss measurements
    • With noisy loss measurements
– Numerical examples
    • Traveling salesperson problem
    • Continuous problems with single and multiple minima
– Annealing algorithms based on stochastic approximation with injected "noise"
– Convergence theory

Background on Simulated Annealing
• Continues in spirit of Chaps. 2, 6, and 7 in working with only loss measurements (no direct gradients)
• Simulated annealing (SAN) based on analogies to cooling (annealing) of physical substances
– Optimal $\theta$ analogous to minimum energy state
• Primarily designed to be a global optimization method
• Based on probabilistic criterion for accepting an increased loss value during search process
– Metropolis criterion
– Allows for temporary increase in loss as means of reaching global minimum
• Some convergence theory possible (e.g., Hajek, 1988, for discrete $\theta$ [see p. 213 of ISSO]; Sect. 8.6 in ISSO for continuous $\theta$)

Metropolis Criterion
• In iterative process, suppose we have current value $\theta_{\text{curr}}$ and candidate new value $\theta_{\text{new}}$. Should we accept $\theta_{\text{new}}$ if $\theta_{\text{new}}$ is worse than $\theta_{\text{curr}}$ (i.e., has higher loss value)?
• Metropolis criterion (from famous 1953 paper of Metropolis et al.)
    $\exp\left[-\dfrac{L(\theta_{\text{new}}) - L(\theta_{\text{curr}})}{c_b T}\right]$
  gives probability of accepting new value ($c_b$ is a constant and $T$ is "temperature"; set $c_b = 1$ without loss of generality)
• Repeated application of Metropolis criterion (iteration to iteration) provides for convergence of SAN to global minimum
– Markov chain theory applies for discrete $\theta$; stochastic approximation for continuous $\theta$

SAN Algorithm with Noise-Free Loss Measurements
Step 0 (initialization) Set initial temperature $T$ and current parameter $\theta_{\text{curr}}$; determine $L(\theta_{\text{curr}})$.
Step 1 (candidate value) Randomly determine new value $\theta_{\text{new}}$ and determine $L(\theta_{\text{new}})$.
Step 2 (compare L values) If $L(\theta_{\text{new}}) < L(\theta_{\text{curr}})$, accept $\theta_{\text{new}}$. Alternatively, if $L(\theta_{\text{new}}) \ge L(\theta_{\text{curr}})$, accept $\theta_{\text{new}}$ with probability given by Metropolis criterion (implemented via Monte Carlo sampling scheme); otherwise keep $\theta_{\text{curr}}$.
Step 3 (iterate at fixed temperature) Repeat Steps 1 and 2 until $T$ is changed.
Step 4 (decrease temperature) Lower $T$ according to the annealing schedule and return to Step 1. Continue until effective convergence.

SAN Algorithm with Noisy Loss Measurements
• As with random search (Chap. 2 of ISSO), standard SAN not designed for noisy measurements $y = L + \varepsilon$
• However, SAN sometimes used with noisy measurements
• Standard approach is to form average of loss measurements at each $\theta$ in search process
• Alternative is to use threshold idea of Sect. 2.3 of ISSO
– Only accept new $\theta$ value if noisy loss value is sufficiently bigger or smaller than current noisy loss
• Can use one-sided Chebyshev inequality to characterize likelihood of error at each iteration under general noise distribution
• Very limited convergence theory for SAN with noisy measurements

Traveling Salesperson Problem (TSP)
• TSP is famous discrete optimization problem
• Many successful uses of SAN with TSP
• Basic problem is to find best way for salesperson to hit every city in territory once and only once
– Setting arises in many problems of optimization on networks (communications, transportation, etc.)
• If tour involves $n$ cities, there are $(n-1)!/2$ possible solutions
– Extremely rapid growth in solution space as $n$ increases
– Problem is "NP-hard"
• Perturbations in SAN steps based on three operations on network: inversion, translation, and switching
– Depicted below

TSP: Standard Search Operations Applied to 8-City Tour
Inversion reverses order 2-3-4-5; translation removes section 2-3-4-5 and places it between 6 and 7; switching interchanges positions of cities 2 and 5.
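Steps 0 to 4 and the Metropolis criterion can be sketched in Python as below. This is a minimal sketch, assuming $c_b = 1$, a stepwise schedule $T \leftarrow \lambda T$ with a fixed number of iterations per temperature, and a Gaussian candidate generator; the names `simulated_annealing`, `metropolis_accept`, `gaussian_step`, all default settings, and the best-so-far bookkeeping are hypothetical illustrations, not taken from ISSO. The test function is the quartic loss of Example 8.2, whose value at $[1, 1]^T$ is 4.00.

```python
import math
import random
from typing import Callable, Tuple, TypeVar

S = TypeVar("S")  # parameter type: a vector, a tour, etc.


def metropolis_accept(delta: float, temp: float, rng: random.Random) -> bool:
    """Step 2: accept an improvement outright; otherwise accept with
    probability exp(-delta / T) (Metropolis criterion with c_b = 1)."""
    if delta < 0:
        return True
    # Monte Carlo implementation: compare a U(0, 1) draw with the acceptance probability
    return rng.random() < math.exp(-delta / temp)


def simulated_annealing(loss: Callable[[S], float],
                        propose: Callable[[S, random.Random], S],
                        theta0: S,
                        t_init: float,
                        lam: float = 0.9,
                        iters_per_temp: int = 50,
                        n_temps: int = 100,
                        seed: int = 0) -> Tuple[S, float]:
    """Basic SAN with noise-free loss values; returns the best theta seen and its loss."""
    rng = random.Random(seed)
    temp = t_init                          # Step 0: initial temperature
    curr, l_curr = theta0, loss(theta0)    # Step 0: current value and its loss
    best, l_best = curr, l_curr            # bookkeeping only (assumption, not a SAN step)
    for _ in range(n_temps):
        for _ in range(iters_per_temp):    # Step 3: iterate at fixed temperature
            cand = propose(curr, rng)      # Step 1: random candidate theta_new
            l_cand = loss(cand)
            if metropolis_accept(l_cand - l_curr, temp, rng):  # Step 2
                curr, l_curr = cand, l_cand
                if l_curr < l_best:
                    best, l_best = curr, l_curr
        temp *= lam                        # Step 4: lower T (0 < lam < 1)
    return best, l_best


def quartic(theta):
    """Quartic loss of Example 8.2: L = t1^4 + t1^2 + t1*t2 + t2^2."""
    t1, t2 = theta
    return t1**4 + t1**2 + t1 * t2 + t2**2


def gaussian_step(theta, rng, sigma=0.1):
    """Hypothetical candidate generator: add N(0, sigma^2) noise to each component."""
    return tuple(t + rng.gauss(0.0, sigma) for t in theta)


# Start from [1, 1]^T, where the loss is 4.00
best, l_best = simulated_annealing(quartic, gaussian_step, (1.0, 1.0), t_init=0.1)
```

Swapping in a different `propose` function (for instance, a TSP tour operator) reuses the same loop; initial $T$, `iters_per_temp`, `lam`, and the candidate generator are exactly the tuning choices listed for SAN in Sect. 8.3 of ISSO.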
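The three TSP perturbation operators can be sketched in Python as follows. A tour is assumed to be a list of city labels in visiting order, with positions indexed from 0; the function names, the `perturb` helper, and its equal default operator probabilities are hypothetical (Example 8.1 notes that these probabilities must be tuned). `count_distinct_tours` brute-forces the $(n-1)!/2$ count for small $n$.

```python
import itertools
import math
import random


def inversion(tour, i, j):
    """Reverse the order of the cities in positions i..j (inclusive)."""
    return tour[:i] + tour[i:j + 1][::-1] + tour[j + 1:]


def translation(tour, i, j, k):
    """Remove the section in positions i..j and reinsert it right after
    position k of the remaining tour."""
    section = tour[i:j + 1]
    rest = tour[:i] + tour[j + 1:]
    return rest[:k + 1] + section + rest[k + 1:]


def switching(tour, i, j):
    """Interchange the cities in positions i and j."""
    new = list(tour)
    new[i], new[j] = new[j], new[i]
    return new


def perturb(tour, rng, probs=(1/3, 1/3, 1/3)):
    """Hypothetical SAN candidate generator: apply one operator chosen at
    random with probabilities probs = (inversion, translation, switching)."""
    n = len(tour)
    i, j = sorted(rng.sample(range(n), 2))
    op = rng.choices(("inversion", "translation", "switching"), weights=probs)[0]
    if op == "inversion":
        return inversion(tour, i, j)
    if op == "switching":
        return switching(tour, i, j)
    rest_len = n - (j - i + 1)
    k = rng.randrange(rest_len) if rest_len > 0 else 0
    return translation(tour, i, j, k)


def tour_cost(tour, coords):
    """Length of the closed tour (returning to the start), with cost/link = distance."""
    return sum(math.dist(coords[tour[m]], coords[tour[(m + 1) % len(tour)]])
               for m in range(len(tour)))


def count_distinct_tours(n):
    """Enumerate closed tours on n >= 3 cities, fixing city 0 as the start and
    identifying each tour with its reversal; equals (n - 1)!/2."""
    keys = set()
    for p in itertools.permutations(range(1, n)):
        keys.add(min(p, p[::-1]))
    return len(keys)


tour = [1, 2, 3, 4, 5, 6, 7, 8]
print(inversion(tour, 1, 4))       # [1, 5, 4, 3, 2, 6, 7, 8]
print(translation(tour, 1, 4, 1))  # [1, 6, 2, 3, 4, 5, 7, 8]
print(switching(tour, 1, 4))       # [1, 5, 3, 4, 2, 6, 7, 8]
```

On four cities at the corners of a unit square, the crossed tour `[0, 2, 1, 3]` costs $2 + 2\sqrt{2}$, and a single inversion of positions 1 to 2 removes the crossing to give the optimal cost of 4, which hints at why adequate use of the inversion operator matters in Example 8.1.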
TSP (cont'd)
Solution to Trivial 4-City Problem where Cost/Link = Distance (Related to Exercise 8.5 in ISSO)

Some Numerical Results for SAN
• Section 8.3 of ISSO reports on three examples for SAN
– Small-scale TSP
– Problem with no local minima other than global minimum
– Problem with multiple local minima
• All examples based on stepwise temperature decay in basic SAN steps above and noise-free loss measurements
• All SAN runs require algorithm tuning to pick:
– Initial $T$
– Number of iterations at fixed $T$
– Choice of $\lambda$, $0 < \lambda < 1$, representing amount of reduction in each temperature decay
– Method for generating candidate $\theta_{\text{new}}$
• Brief descriptions follow on slides below

Small-Scale TSP (Example 8.1 in ISSO)
• 10-city tour (very small by industrial standards)
– Know by enumeration that minimum cost of tour = 440
• Randomly chose inversion, translation, or switching at each iteration
– Tuning required to choose "good" probabilities of selecting these operators
• 8 of 10 SAN runs find minimum cost tour
– Sample mean cost of initial tour is 700; sample mean of final tour is 444
• Essential to success is adequate use of inversion operator; 0 of 10 SAN runs find optimal tour if probability of inversion is 0.50
• SAN successfully used in much larger TSPs
– E.g., seminal 1983 (!) Kirkpatrick et al. paper in Science considers TSP with 400 cities

Comparison of SAN and Two Random Search Algorithms (Example 8.2 in ISSO)
• Considered very simple $p = 2$ "quartic loss" seen earlier:
    $L(\theta) = t_1^4 + t_1^2 + t_1 t_2 + t_2^2$, with $\theta = [t_1, t_2]^T$
• Function has single global minimum; no local minima
• Table below gives sample mean terminal loss value, where initial loss = 4.00; $L(\theta^*) = 0$

No. of meas. | Random search B | Random search C | SAN, $T_{\text{init}} = 0.01$ | SAN, $T_{\text{init}} = 0.10$
100 | 0.00053 | 0.328 | 1.86 | 0.091
10,000 | $2.7 \times 10^{-6}$ | $2.5 \times 10^{-7}$ | 0.00038 | 0.0024

• SAN performs well, but random search even better in this problem

Evaluation of SAN in Problem with Multiple Local Minima (Example 8.3 in ISSO)
• Many numerical studies in literature showing favorable results for SAN
• Loss function in study of Brooks and Morgan (1995):
    $L(\theta) = t_1^2 + 2t_2^2 - 0.3\cos(3\pi t_1) - 0.4\cos(4\pi t_2)$
  with $\theta = [t_1, t_2]^T$ and $\Theta = [-1, 1]^2$
• Function has many local minima with a unique global minimum
• Study compares quasi-Newton method and SAN
– "Apples vs. oranges" (gradient-based vs. non-gradient-based)
• 20% of quasi-Newton runs and 100% of SAN runs ended near $\theta^*$ (random initial conditions)

Global Optimization via Annealing of Stochastic Approximation
• SAN not only way annealing used for global optimization
• With appropriate annealing, stochastic approximation (SA) can be used in global optimization
• Standard approach is to inject Gaussian noise into r.h.s. of SA recursion:
    $\hat\theta_{k+1} = \hat\theta_k - a_k G_k(\hat\theta_k) + b_k w_k \qquad (*)$
  where $G_k$ is direct gradient measurement (Chap. 5) or gradient approximation (FDSA or SPSA), $b_k \to 0$ (the "annealing"), and $w_k \sim N(0, I_{p \times p})$
• Injected noise $w_k$ generated by Monte Carlo
• Eqn. (*) has theoretical basis for formal convergence (Sect. 8.4 of ISSO)

Global Optimization via Annealing of Stochastic Approximation (cont'd)
• Careful selection of $a_k$ and $b_k$ required to achieve global convergence
• Stochastic rate of convergence is slow:
    $\|\hat\theta_k - \theta^*\| = O\left(1/\sqrt{\log k}\right)$ when $a_k = a/(k+1)^{\alpha}$, $\alpha < 1$
    $\|\hat\theta_k - \theta^*\| = O\left(1/\sqrt{\log\log k}\right)$ when $a_k = a/(k+1)$
• Above slow rates are price to be paid for global convergence
• SPSA without injected randomness (i.e., $b_k = 0$) is global optimizer under certain conditions
– Much faster $O\left(1/k^{\beta/2}\right)$ convergence rate ($0 < \beta \le 2/3$)

Ratio of Asymptotic Estimation Errors with and without Injected Randomness ($b_k > 0$ and $b_k = 0$, resp.)
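[Figure: ratio $\|\hat\theta_k - \theta^*\|_{b_k > 0} \,/\, \|\hat\theta_k - \theta^*\|_{b_k = 0}$, shown as constant $\times \left(1/\sqrt{\log\log k}\right) / \left(1/k^{1/3}\right)$, plotted against iterations $k$ from $10^3$ to $10^6$; vertical axis from 10 to 70]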
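Recursion (*) can be sketched in Python with an SPSA gradient approximation for $G_k$, applied to the Brooks and Morgan loss of Example 8.3. This is a minimal sketch under loudly stated assumptions: the gain forms $a_k = a/(k+1)^{0.602}$ and $c_k = c/(k+1)^{0.101}$, the decay $b_k = b/\sqrt{(k+1)\log(k+2)}$, every numeric constant, and clipping each iterate to $\Theta = [-1, 1]^2$ are illustrative choices, not the tuned settings or the convergence conditions of Sect. 8.4 of ISSO. The function names are hypothetical.

```python
import math
import random


def brooks_morgan_loss(theta):
    """Example 8.3 loss: many local minima, unique global minimum L = -0.7 at theta = [0, 0]."""
    t1, t2 = theta
    return (t1**2 + 2 * t2**2
            - 0.3 * math.cos(3 * math.pi * t1)
            - 0.4 * math.cos(4 * math.pi * t2))


def spsa_gradient(loss, theta, c_k, rng):
    """SPSA gradient approximation from two loss measurements, using
    symmetric Bernoulli +/-1 perturbations."""
    delta = [rng.choice((-1.0, 1.0)) for _ in theta]
    plus = [t + c_k * d for t, d in zip(theta, delta)]
    minus = [t - c_k * d for t, d in zip(theta, delta)]
    diff = loss(plus) - loss(minus)
    return [diff / (2.0 * c_k * d) for d in delta]


def annealed_spsa(loss, theta0, n_iter=20000, a=0.05, c=0.05, b=0.5,
                  alpha=0.602, gamma=0.101, lower=-1.0, upper=1.0, seed=0):
    """theta_{k+1} = theta_k - a_k G_k(theta_k) + b_k w_k with w_k ~ N(0, I).
    Setting b = 0 gives plain SPSA with no injected randomness."""
    rng = random.Random(seed)
    theta = list(theta0)
    for k in range(n_iter):
        a_k = a / (k + 1) ** alpha
        c_k = c / (k + 1) ** gamma
        b_k = b / math.sqrt((k + 1) * math.log(k + 2))  # assumed schedule; b_k -> 0
        g_k = spsa_gradient(loss, theta, c_k, rng)
        theta = [t - a_k * g + b_k * rng.gauss(0.0, 1.0)   # Monte Carlo noise w_k
                 for t, g in zip(theta, g_k)]
        theta = [min(max(t, lower), upper) for t in theta]  # stay in Theta = [-1, 1]^2
    return theta


theta_hat = annealed_spsa(brooks_morgan_loss, [0.9, -0.9])
print(theta_hat, brooks_morgan_loss(theta_hat))
```

Running the same call with `b=0.0` gives the $b_k = 0$ SPSA variant, so the two versions can be compared over several seeds and starting points.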
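The steep rise in the ratio of estimation errors follows from dividing the two asymptotic rates, $1/\sqrt{\log\log k}$ for annealed SA with $a_k = a/(k+1)$ and $1/k^{\beta/2}$ with $\beta = 2/3$ for SPSA with $b_k = 0$. This sketch assumes the unspecified constant equals 1 and uses natural logarithms, so only the growth pattern across $k = 10^3, \ldots, 10^6$ is meant to be compared with the plotted curve; `rate_ratio` is a hypothetical helper name.

```python
import math


def rate_ratio(k):
    """(1/sqrt(log log k)) / (1/k**(1/3)), taking the constant as 1 (assumption)."""
    return k ** (1.0 / 3.0) / math.sqrt(math.log(math.log(k)))


for k in (10**3, 10**4, 10**5, 10**6):
    print(f"k = {k:>9,d}: ratio = {rate_ratio(k):6.2f}")
```

The $k^{1/3}$ factor dominates: $\sqrt{\log\log k}$ barely changes over this range (from roughly 1.4 to 1.6), so the ratio grows almost like the cube root of $k$.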