Sec 4.7

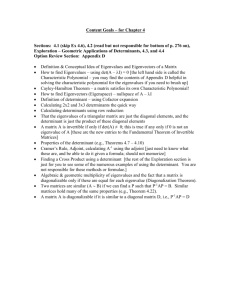

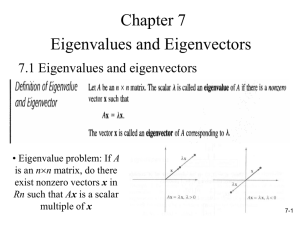

Chapter 4 Section 4.7 Similarity Transformations and Diagonalization

Similar Matrices

If $A$ and $B$ are two $n \times n$ matrices and there is a third $n \times n$ matrix $S$ that is non-singular (i.e. invertible) such that $B = S^{-1}AS$, then $A$ and $B$ are called similar matrices, or $A$ is said to be similar to $B$.

$A$ is similar to $B$ if there is a non-singular matrix $S$ such that:

$$B = S^{-1}AS$$

The reason we use the word similar is that the two matrices share many of the same features, as the next results will show.

Theorem. If $A$ and $B$ are similar matrices, then the characteristic polynomial of $A$, call it $p(t)$, is equal to the characteristic polynomial of $B$, call it $q(t)$, and the eigenvalues of $A$ are the same as the eigenvalues of $B$ with the same algebraic multiplicities.

Let $S$ be the non-singular matrix such that $B = S^{-1}AS$; this means that $\det(S) \neq 0$. Then

$$
\begin{aligned}
q(t) &= \det(B - tI) = \det(S^{-1}AS - tI) = \det(S^{-1}AS - tS^{-1}S) \\
     &= \det\bigl(S^{-1}(A - tI)S\bigr) = \det(S^{-1})\,\det(A - tI)\,\det(S) \\
     &= \frac{1}{\det(S)}\,\det(A - tI)\,\det(S) = \det(A - tI) = p(t).
\end{aligned}
$$

If the matrices have the same characteristic polynomial, the polynomials factor the same way and have the same roots, which are the eigenvalues. The number of times each root occurs in the factored form is also equal, giving equal algebraic multiplicities.

Careful: similar matrices may not have the same eigenvectors, but their eigenvectors are related.

Theorem. If $A$ and $B$ are similar with non-singular matrix $S$ such that $A = S^{-1}BS$, and $\lambda$ is an eigenvalue of $A$ with corresponding eigenvector $\mathbf{v} \neq \boldsymbol{\theta}$, then $S\mathbf{v}$ is an eigenvector of $B$ corresponding to the eigenvalue $\lambda$.

Since $S$ is non-singular and $\mathbf{v} \neq \boldsymbol{\theta}$, we have $S\mathbf{v} \neq \boldsymbol{\theta}$. Then

$$B(S\mathbf{v}) = I(BS)\mathbf{v} = SS^{-1}(BS)\mathbf{v} = S(S^{-1}BS)\mathbf{v} = S(A\mathbf{v}) = S(\lambda\mathbf{v}) = \lambda S\mathbf{v}.$$

Therefore $\lambda$ is an eigenvalue of $B$ with corresponding eigenvector $S\mathbf{v}$.

Corollary. If $A$ and $B$ are similar with non-singular matrix $S$ such that $A = S^{-1}BS$, and $\lambda_1, \cdots, \lambda_k$ are eigenvalues of $A$ with corresponding eigenvectors $\mathbf{v}_1, \cdots, \mathbf{v}_k$, then $\lambda_1, \cdots, \lambda_k$ are eigenvalues of $B$ with corresponding eigenvectors $S\mathbf{v}_1, \cdots, S\mathbf{v}_k$.
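The two theorems above can be spot-checked numerically. The following is a minimal sketch in Python using exact fractions. The matrices `B` and `S` are hypothetical values chosen for illustration (they do not come from the text), and the helper names `matmul`, `inv2`, and `char_poly2` are likewise assumptions of this sketch.

```python
from fractions import Fraction

def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def inv2(M):
    """Inverse of a 2x2 matrix via the adjugate formula; fails if det M = 0."""
    (a, b), (c, d) = M
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("matrix is singular")
    return [[d / det, -b / det], [-c / det, a / det]]

def char_poly2(M):
    """Coefficients (1, -trace, det) of det(M - tI) = t^2 - trace(M) t + det(M)."""
    (a, b), (c, d) = M
    return (1, -(a + d), a * d - b * c)

# Hypothetical matrices chosen for illustration (not from the text).
B = [[2, 1], [0, 3]]
S = [[1, 2], [1, 3]]                 # det S = 1, so S is non-singular
A = matmul(matmul(inv2(S), B), S)    # A = S^{-1} B S, so A is similar to B

# Similar matrices share a characteristic polynomial.
same_poly = char_poly2(A) == char_poly2(B)

# v is an eigenvector of A for lambda = 3; the theorem says S v works for B.
lam = 3
v = [[1], [0]]
Sv = matmul(S, v)
A_v_ok = matmul(A, v) == [[lam * x for x in row] for row in v]
B_Sv_ok = matmul(B, Sv) == [[lam * x for x in row] for row in Sv]

print(same_poly, A_v_ok, B_Sv_ok)  # True True True
```

Exact `Fraction` arithmetic is used so that the equality checks are not affected by floating-point rounding.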
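Example. For the matrix $A$ below, find the eigenvalues (there are 3) and corresponding eigenvectors $\mathbf{v}_1, \mathbf{v}_2, \mathbf{v}_3$. Let $S = [\mathbf{v}_1, \mathbf{v}_2, \mathbf{v}_3]$, find $S^{-1}$ and use it to compute $S^{-1}AS$.

$$A = \begin{bmatrix} 4 & 3 & 2 \\ -6 & -5 & 0 \\ 0 & 0 & 2 \end{bmatrix}$$

Use a 3rd row expansion to find the characteristic polynomial.

$$
\begin{aligned}
p(t) &= \begin{vmatrix} 4-t & 3 & 2 \\ -6 & -5-t & 0 \\ 0 & 0 & 2-t \end{vmatrix}
      = (2-t)\begin{vmatrix} 4-t & 3 \\ -6 & -5-t \end{vmatrix} \\
     &= (2-t)\bigl[(4-t)(-5-t) + 18\bigr] = (2-t)(t^2 + t - 2) = (2-t)(t+2)(t-1)
\end{aligned}
$$

The eigenvalues are: $-2, 1, 2$.

$$A + 2I = \begin{bmatrix} 6 & 3 & 2 \\ -6 & -3 & 0 \\ 0 & 0 & 4 \end{bmatrix}, \text{ row reduces to } \begin{bmatrix} 1 & \tfrac12 & 0 \\ 0 & 0 & 1 \\ 0 & 0 & 0 \end{bmatrix}, \text{ vector solution: } x_2\begin{bmatrix} -\tfrac12 \\ 1 \\ 0 \end{bmatrix}, \text{ let } \mathbf{v}_1 = \begin{bmatrix} -1 \\ 2 \\ 0 \end{bmatrix}$$

$$A - I = \begin{bmatrix} 3 & 3 & 2 \\ -6 & -6 & 0 \\ 0 & 0 & 1 \end{bmatrix}, \text{ row reduces to } \begin{bmatrix} 1 & 1 & 0 \\ 0 & 0 & 1 \\ 0 & 0 & 0 \end{bmatrix}, \text{ vector solution: } x_2\begin{bmatrix} -1 \\ 1 \\ 0 \end{bmatrix}, \text{ let } \mathbf{v}_2 = \begin{bmatrix} -1 \\ 1 \\ 0 \end{bmatrix}$$

$$A - 2I = \begin{bmatrix} 2 & 3 & 2 \\ -6 & -7 & 0 \\ 0 & 0 & 0 \end{bmatrix}, \text{ row reduces to } \begin{bmatrix} 1 & 0 & -\tfrac72 \\ 0 & 1 & 3 \\ 0 & 0 & 0 \end{bmatrix}, \text{ vector solution: } x_3\begin{bmatrix} \tfrac72 \\ -3 \\ 1 \end{bmatrix}, \text{ let } \mathbf{v}_3 = \begin{bmatrix} 7 \\ -6 \\ 2 \end{bmatrix}$$

Forming the matrix with the eigenvectors as columns gives

$$S = [\mathbf{v}_1, \mathbf{v}_2, \mathbf{v}_3] = \begin{bmatrix} -1 & -1 & 7 \\ 2 & 1 & -6 \\ 0 & 0 & 2 \end{bmatrix}.$$

Augmenting this matrix with $I$ and row reducing, we get

$$S^{-1} = \begin{bmatrix} 1 & 1 & -\tfrac12 \\ -2 & -1 & 4 \\ 0 & 0 & \tfrac12 \end{bmatrix}.$$

$$S^{-1}AS = \begin{bmatrix} 1 & 1 & -\tfrac12 \\ -2 & -1 & 4 \\ 0 & 0 & \tfrac12 \end{bmatrix}\begin{bmatrix} 4 & 3 & 2 \\ -6 & -5 & 0 \\ 0 & 0 & 2 \end{bmatrix}\begin{bmatrix} -1 & -1 & 7 \\ 2 & 1 & -6 \\ 0 & 0 & 2 \end{bmatrix} = \begin{bmatrix} -2 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 2 \end{bmatrix}$$

Observe that the result of the matrix computation $S^{-1}AS$ is a diagonal matrix with the eigenvalues of the matrix $A$ going down the diagonal! This is not a coincidence.

Theorem. Let $A$ be an $n \times n$ matrix with eigenvalues $\lambda_1, \cdots, \lambda_k$. If the basis vectors for the eigenspaces $E_{\lambda_1}, \cdots, E_{\lambda_k}$ together form a complete basis for $\mathbb{R}^n$ (i.e. there are $n$ vectors $\mathbf{v}_1, \cdots, \mathbf{v}_n$ in the combined bases for all the eigenspaces), then if we set $S = [\mathbf{v}_1, \cdots, \mathbf{v}_n]$, the result of $S^{-1}AS$ will be a diagonal matrix with the eigenvalues of $A$ going down the diagonal.

The reason for this comes from making 2 observations:

1. If $\mathbf{v}_1, \cdots, \mathbf{v}_n$ are eigenvectors of $A$, then $A\mathbf{v}_1 = \lambda_1\mathbf{v}_1, \cdots, A\mathbf{v}_n = \lambda_n\mathbf{v}_n$, where $\lambda_i$ denotes the eigenvalue belonging to $\mathbf{v}_i$ (so values may repeat).
2. Since $S^{-1}S = I = [\mathbf{e}_1, \cdots, \mathbf{e}_n]$, we have $S^{-1}[\mathbf{v}_1, \cdots, \mathbf{v}_n] = [S^{-1}\mathbf{v}_1, \cdots, S^{-1}\mathbf{v}_n] = [\mathbf{e}_1, \cdots, \mathbf{e}_n]$, or in other words $S^{-1}\mathbf{v}_1 = \mathbf{e}_1, \cdots, S^{-1}\mathbf{v}_n = \mathbf{e}_n$.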
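$$
\begin{aligned}
S^{-1}AS &= S^{-1}A[\mathbf{v}_1, \cdots, \mathbf{v}_n] = S^{-1}[A\mathbf{v}_1, \cdots, A\mathbf{v}_n] = S^{-1}[\lambda_1\mathbf{v}_1, \cdots, \lambda_n\mathbf{v}_n] \\
         &= [S^{-1}\lambda_1\mathbf{v}_1, \cdots, S^{-1}\lambda_n\mathbf{v}_n] = [\lambda_1 S^{-1}\mathbf{v}_1, \cdots, \lambda_n S^{-1}\mathbf{v}_n] = [\lambda_1\mathbf{e}_1, \cdots, \lambda_n\mathbf{e}_n]
\end{aligned}
$$

Now writing out the matrix $[\lambda_1\mathbf{e}_1, \cdots, \lambda_n\mathbf{e}_n]$, we see that

$$[\lambda_1\mathbf{e}_1, \cdots, \lambda_n\mathbf{e}_n] = \begin{bmatrix} \lambda_1 & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & \lambda_n \end{bmatrix}.$$

Diagonalizable Matrices

An $n \times n$ matrix $A$ is diagonalizable (or diagonalizes) if there is a non-singular matrix $S$ such that $S^{-1}AS = D$, where the matrix $D$ is a diagonal matrix.

Theorem. If the $n \times n$ matrix $A$ is not defective, then the matrix $A$ is diagonalizable.

Since the algebraic multiplicity of every eigenvalue is equal to its geometric multiplicity, the total number of basis vectors in all the eigenspaces will be $n$.

Example. Determine if the matrix $A$ below is diagonalizable. If the matrix is diagonalizable, find a matrix $S$ such that $S^{-1}AS = D$ where $D$ is a diagonal matrix.

$$A = \begin{bmatrix} 8 & 9 \\ -6 & -7 \end{bmatrix}$$

Begin by computing the characteristic polynomial $p(t)$ and the eigenvalues.

$$p(t) = \begin{vmatrix} 8-t & 9 \\ -6 & -7-t \end{vmatrix} = (8-t)(-7-t) + 54 = t^2 - t - 2 = (t+1)(t-2)$$

We see from the above polynomial that the eigenvalues are $-1$ and $2$. The eigenvalues are distinct, so the matrix is not defective. Since $A$ is not defective, it is diagonalizable. To find $S$ we need to compute a basis for each eigenspace.

$$A + I = \begin{bmatrix} 9 & 9 \\ -6 & -6 \end{bmatrix}, \text{ row reduces to } \begin{bmatrix} 1 & 1 \\ 0 & 0 \end{bmatrix}, \text{ vector solution: } x_2\begin{bmatrix} -1 \\ 1 \end{bmatrix}, \text{ let } \mathbf{v}_1 = \begin{bmatrix} -1 \\ 1 \end{bmatrix}$$

$$A - 2I = \begin{bmatrix} 6 & 9 \\ -6 & -9 \end{bmatrix}, \text{ row reduces to } \begin{bmatrix} 1 & \tfrac32 \\ 0 & 0 \end{bmatrix}, \text{ vector solution: } x_2\begin{bmatrix} -\tfrac32 \\ 1 \end{bmatrix}, \text{ let } \mathbf{v}_2 = \begin{bmatrix} -3 \\ 2 \end{bmatrix}$$

So $S = \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}$. Using the $2 \times 2$ matrix inverse formula, with $\det(S) = (-1)(2) - (-3)(1) = 1$,

$$S^{-1} = \frac{1}{1}\begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix} = \begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix}.$$

We can check this with the following computation:

$$S^{-1}AS = \begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix}\begin{bmatrix} 8 & 9 \\ -6 & -7 \end{bmatrix}\begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix} = \begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix}\begin{bmatrix} 1 & -6 \\ -1 & 4 \end{bmatrix} = \begin{bmatrix} -1 & 0 \\ 0 & 2 \end{bmatrix}$$

Matrix Functions and Diagonal Matrices

Diagonal matrices behave just like numbers for matrix functions that are polynomials. Begin by considering the product of two diagonal matrices, which is another diagonal matrix.

$$\begin{bmatrix} d_{11} & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & d_{nn} \end{bmatrix}\begin{bmatrix} e_{11} & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & e_{nn} \end{bmatrix} = \begin{bmatrix} d_{11}e_{11} & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & d_{nn}e_{nn} \end{bmatrix}$$

You can just multiply the corresponding diagonal entries.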
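Both the $2 \times 2$ diagonalization check and the diagonal product rule above can be reproduced with a few lines of Python. This is a minimal sketch using the matrices $A$ and $S$ from the example. The helpers `matmul`, `inv2`, and `diag`, and the second diagonal matrix `E`, are assumptions introduced only for illustration.

```python
from fractions import Fraction

def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def inv2(M):
    """Inverse of a 2x2 matrix via the adjugate formula; fails if det M = 0."""
    (a, b), (c, d) = M
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("matrix is singular")
    return [[d / det, -b / det], [-c / det, a / det]]

def diag(entries):
    """Build a diagonal matrix from a list of diagonal entries."""
    n = len(entries)
    return [[entries[i] if i == j else 0 for j in range(n)] for i in range(n)]

A = [[8, 9], [-6, -7]]
S = [[-1, -3], [1, 2]]          # columns are the eigenvectors v1 and v2

# S^{-1} A S should be diagonal with the eigenvalues -1 and 2 on the diagonal.
D = matmul(matmul(inv2(S), A), S)
is_diagonalized = D == diag([-1, 2])

# Product of two diagonal matrices: multiply corresponding diagonal entries.
E = diag([5, 7])                # hypothetical second diagonal matrix
product_rule_ok = matmul(D, E) == diag([-1 * 5, 2 * 7])

print(is_diagonalized, product_rule_ok)  # True True
```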
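Taking the $m$th power of a diagonal matrix is a matter of taking the $m$th power of each diagonal entry.

$$\begin{bmatrix} d_{11} & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & d_{nn} \end{bmatrix}^m = \begin{bmatrix} d_{11}^m & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & d_{nn}^m \end{bmatrix}$$

Multiplying a diagonal matrix by a scalar multiplies each diagonal entry. Adding two diagonal matrices adds the corresponding diagonal entries. Now if the function $f(t)$ is a polynomial function with $f(t) = c_m t^m + \cdots + c_1 t + c_0$, we get the following for a diagonal matrix $D$:

$$f(D) = c_m\begin{bmatrix} d_{11} & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & d_{nn} \end{bmatrix}^m + \cdots + c_0\begin{bmatrix} 1 & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & 1 \end{bmatrix} = \begin{bmatrix} f(d_{11}) & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & f(d_{nn}) \end{bmatrix}$$

The difficulty is: what if the matrix is not a diagonal matrix? Finding the value of a matrix function could involve a great number of calculations, considering how matrix multiplication is done. If the matrix you want to evaluate in the function is diagonalizable, then there is a huge shortcut.

Example. If matrix $A$ is similar to $B$ with non-singular matrix $S$ such that $B = S^{-1}AS$, show that $B^2 = S^{-1}A^2S$ and $B^3 = S^{-1}A^3S$.

$$B^2 = (S^{-1}AS)^2 = (S^{-1}AS)(S^{-1}AS) = S^{-1}A(SS^{-1})AS = S^{-1}AIAS = S^{-1}A^2S$$

$$B^3 = (S^{-1}AS)(S^{-1}AS)(S^{-1}AS) = S^{-1}A(SS^{-1})A(SS^{-1})AS = S^{-1}AIAIAS = S^{-1}A^3S$$

Computing Powers of Similar Matrices

If $A$ and $B$ are similar matrices with $B = S^{-1}AS$ for a non-singular matrix $S$, then for any positive integer $m$,

$$B^m = S^{-1}A^mS \qquad \text{and} \qquad A^m = SB^mS^{-1}.$$

$$B^m = \underbrace{(S^{-1}AS)\cdots(S^{-1}AS)}_{m \text{ times}} = S^{-1}\underbrace{A(SS^{-1})\cdots(SS^{-1})A}_{m \text{ times}}S = S^{-1}\underbrace{AI\cdots IA}_{m \text{ times}}S = S^{-1}A^mS$$

Example. Compute $A^5$ where $A$ is the matrix from the previous example. We can take advantage of the fact that $S^{-1}AS = D$ is a diagonal matrix to compute this, where $S$ and $D$ are given below.

$$A = \begin{bmatrix} 8 & 9 \\ -6 & -7 \end{bmatrix}, \quad S = \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}, \quad D = \begin{bmatrix} -1 & 0 \\ 0 & 2 \end{bmatrix}$$

$$
\begin{aligned}
A^5 = SD^5S^{-1} &= \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}\begin{bmatrix} -1 & 0 \\ 0 & 2 \end{bmatrix}^5\begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix} = \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}\begin{bmatrix} -1 & 0 \\ 0 & 32 \end{bmatrix}\begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix} \\
&= \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}\begin{bmatrix} -2 & -3 \\ -32 & -32 \end{bmatrix} = \begin{bmatrix} 98 & 99 \\ -66 & -67 \end{bmatrix}
\end{aligned}
$$

Instead of the four matrix multiplications needed to compute $A^5 = A \cdot A \cdot A \cdot A \cdot A$ directly, we only needed two, which is half as many!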
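The $A^5$ computation above can be cross-checked against plain repeated multiplication. The following is a minimal sketch in Python. The helper names `matmul` and `matpow` are assumptions of this sketch, and $S^{-1}$ is entered directly from the worked example rather than recomputed.

```python
def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def matpow(M, m):
    """Compute M^m for a positive integer m by repeated multiplication."""
    result = M
    for _ in range(m - 1):
        result = matmul(result, M)
    return result

A = [[8, 9], [-6, -7]]
S = [[-1, -3], [1, 2]]
S_inv = [[2, 3], [-1, -1]]       # taken from the worked example
m = 5

# Powers of a diagonal matrix: raise each diagonal entry to the m-th power.
D_m = [[(-1) ** m, 0], [0, 2 ** m]]

via_diagonal = matmul(matmul(S, D_m), S_inv)   # A^m = S D^m S^{-1}
via_repeated = matpow(A, m)                    # A * A * A * A * A

print(via_diagonal)                  # [[98, 99], [-66, -67]]
print(via_diagonal == via_repeated)  # True
```

The diagonal route always costs two matrix products regardless of `m`, while `matpow` as written costs `m - 1` products.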
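Computing Matrix Functions of Similar Matrices

If $A$ and $B$ are similar matrices with $B = S^{-1}AS$ for a non-singular matrix $S$, and if $f(t) = c_m t^m + \cdots + c_1 t + c_0$, then the matrices $f(A)$ and $f(B)$ can be computed as follows:

$$f(B) = S^{-1}f(A)S \qquad f(A) = Sf(B)S^{-1}$$

If we can express a function as a polynomial, or as a power series that is defined for all values, then we can evaluate it at a matrix using this method. For example, the function $\sin(x)$ is expressed as an infinite polynomial in the following way:

$$\sin(x) = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!} + \frac{x^9}{9!} - \frac{x^{11}}{11!} \pm \cdots$$

Example. Compute $\sin(A)$ for the previous matrix $A$ given below. We can take advantage of the fact that $S^{-1}AS = D$ is a diagonal matrix to compute this, where $S$ and $D$ are given below.

$$A = \begin{bmatrix} 8 & 9 \\ -6 & -7 \end{bmatrix}, \quad S = \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}, \quad D = \begin{bmatrix} -1 & 0 \\ 0 & 2 \end{bmatrix}$$

$$
\begin{aligned}
\sin(A) = \sin\begin{bmatrix} 8 & 9 \\ -6 & -7 \end{bmatrix} &= S\begin{bmatrix} \sin(-1) & 0 \\ 0 & \sin(2) \end{bmatrix}S^{-1} = \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}\begin{bmatrix} \sin(-1) & 0 \\ 0 & \sin(2) \end{bmatrix}\begin{bmatrix} 2 & 3 \\ -1 & -1 \end{bmatrix} \\
&= \begin{bmatrix} -1 & -3 \\ 1 & 2 \end{bmatrix}\begin{bmatrix} 2\sin(-1) & 3\sin(-1) \\ -\sin(2) & -\sin(2) \end{bmatrix} \\
&= \begin{bmatrix} -2\sin(-1) + 3\sin(2) & -3\sin(-1) + 3\sin(2) \\ 2\sin(-1) - 2\sin(2) & 3\sin(-1) - 2\sin(2) \end{bmatrix}
\end{aligned}
$$

Orthogonal Matrices

Suppose the columns of an $n \times n$ matrix $Q = [Q_1, \cdots, Q_n]$ form an orthonormal basis for $\mathbb{R}^n$. That is, the set of vectors $Q_1, \cdots, Q_n$ is a basis such that $Q_i^TQ_j = 0$ for $i \neq j$ and $Q_i^TQ_i = 1$. Then the matrix $Q$ is called an orthogonal matrix and has the property that $Q^{-1} = Q^T$.

Example. Apply Gram-Schmidt to the basis for $\mathbb{R}^3$ given below to find an orthogonal matrix $Q$.

$$\begin{bmatrix} 1 \\ 0 \\ -1 \end{bmatrix}, \quad \begin{bmatrix} 0 \\ 1 \\ 1 \end{bmatrix}, \quad \begin{bmatrix} 2 \\ 1 \\ 2 \end{bmatrix}$$

$$\mathbf{w}_1 = \begin{bmatrix} 1 \\ 0 \\ -1 \end{bmatrix}$$

$$\begin{bmatrix} 0 \\ 1 \\ 1 \end{bmatrix} - \operatorname{proj}_{\mathbf{w}_1}\begin{bmatrix} 0 \\ 1 \\ 1 \end{bmatrix} = \begin{bmatrix} 0 \\ 1 \\ 1 \end{bmatrix} - \frac{-1}{2}\begin{bmatrix} 1 \\ 0 \\ -1 \end{bmatrix} = \begin{bmatrix} \tfrac12 \\ 1 \\ \tfrac12 \end{bmatrix}, \quad \text{and rescaling by 2 to clear fractions, take } \mathbf{w}_2 = \begin{bmatrix} 1 \\ 2 \\ 1 \end{bmatrix}$$

$$\mathbf{w}_3 = \begin{bmatrix} 2 \\ 1 \\ 2 \end{bmatrix} - \operatorname{proj}_{\mathbf{w}_1}\begin{bmatrix} 2 \\ 1 \\ 2 \end{bmatrix} - \operatorname{proj}_{\mathbf{w}_2}\begin{bmatrix} 2 \\ 1 \\ 2 \end{bmatrix} = \begin{bmatrix} 2 \\ 1 \\ 2 \end{bmatrix} - \frac{0}{2}\begin{bmatrix} 1 \\ 0 \\ -1 \end{bmatrix} - \frac{6}{6}\begin{bmatrix} 1 \\ 2 \\ 1 \end{bmatrix} = \begin{bmatrix} 1 \\ -1 \\ 1 \end{bmatrix}$$

This gives us an orthogonal basis for $\mathbb{R}^3$. Normalize each vector by dividing by its length to get a vector of length 1.

$$\frac{\mathbf{w}_1}{\|\mathbf{w}_1\|} = \begin{bmatrix} \tfrac{1}{\sqrt2} \\ 0 \\ \tfrac{-1}{\sqrt2} \end{bmatrix}, \quad \frac{\mathbf{w}_2}{\|\mathbf{w}_2\|} = \begin{bmatrix} \tfrac{1}{\sqrt6} \\ \tfrac{2}{\sqrt6} \\ \tfrac{1}{\sqrt6} \end{bmatrix}, \quad \frac{\mathbf{w}_3}{\|\mathbf{w}_3\|} = \begin{bmatrix} \tfrac{1}{\sqrt3} \\ \tfrac{-1}{\sqrt3} \\ \tfrac{1}{\sqrt3} \end{bmatrix}$$

This is now an orthonormal basis for $\mathbb{R}^3$.

$$Q = \begin{bmatrix} \tfrac{1}{\sqrt2} & \tfrac{1}{\sqrt6} & \tfrac{1}{\sqrt3} \\ 0 & \tfrac{2}{\sqrt6} & \tfrac{-1}{\sqrt3} \\ \tfrac{-1}{\sqrt2} & \tfrac{1}{\sqrt6} & \tfrac{1}{\sqrt3} \end{bmatrix}, \quad Q^T = \begin{bmatrix} \tfrac{1}{\sqrt2} & 0 & \tfrac{-1}{\sqrt2} \\ \tfrac{1}{\sqrt6} & \tfrac{2}{\sqrt6} & \tfrac{1}{\sqrt6} \\ \tfrac{1}{\sqrt3} & \tfrac{-1}{\sqrt3} & \tfrac{1}{\sqrt3} \end{bmatrix}$$

$$QQ^T = \begin{bmatrix} \tfrac{1}{\sqrt2} & \tfrac{1}{\sqrt6} & \tfrac{1}{\sqrt3} \\ 0 & \tfrac{2}{\sqrt6} & \tfrac{-1}{\sqrt3} \\ \tfrac{-1}{\sqrt2} & \tfrac{1}{\sqrt6} & \tfrac{1}{\sqrt3} \end{bmatrix}\begin{bmatrix} \tfrac{1}{\sqrt2} & 0 & \tfrac{-1}{\sqrt2} \\ \tfrac{1}{\sqrt6} & \tfrac{2}{\sqrt6} & \tfrac{1}{\sqrt6} \\ \tfrac{1}{\sqrt3} & \tfrac{-1}{\sqrt3} & \tfrac{1}{\sqrt3} \end{bmatrix} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}$$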
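The Gram-Schmidt example above can be reproduced in code. The following is a minimal sketch in Python, assuming the basis vectors $(1, 0, -1)$, $(0, 1, 1)$, $(2, 1, 2)$ as read from the example. The function names `dot` and `gram_schmidt` are assumptions of this sketch. Unlike the worked example, the code does not rescale $\mathbf{w}_2$ to clear fractions, so it returns $(\tfrac12, 1, \tfrac12)$ instead of $(1, 2, 1)$. Both point in the same direction and normalize to the same column of $Q$.

```python
from fractions import Fraction
import math

def dot(u, v):
    """Dot product of two vectors given as sequences of numbers."""
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(basis):
    """Classical Gram-Schmidt: return mutually orthogonal vectors (not normalized)."""
    ortho = []
    for u in basis:
        w = [Fraction(x) for x in u]
        for q in ortho:
            coeff = dot(u, q) / dot(q, q)     # projection coefficient of u onto q
            w = [wi - coeff * qi for wi, qi in zip(w, q)]
        ortho.append(w)
    return ortho

# Basis as read from the example (an assumption of this sketch).
basis = [(1, 0, -1), (0, 1, 1), (2, 1, 2)]
ortho = gram_schmidt(basis)

# Normalize each orthogonal vector to length 1; these are the columns of Q.
columns = [[float(x) / math.sqrt(float(dot(w, w))) for x in w] for w in ortho]
Q = [list(row) for row in zip(*columns)]

# Entry (i, j) of Q^T Q is the dot product of columns i and j.
QtQ = [[dot(ci, cj) for cj in columns] for ci in columns]
is_orthogonal = all(
    abs(QtQ[i][j] - (1.0 if i == j else 0.0)) < 1e-12
    for i in range(3) for j in range(3)
)

print(is_orthogonal)  # True
```

Exact fractions are used for the orthogonalization step and floating point only for the square roots, so the identity check uses a small tolerance.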